Red Hat OpenShift Data Science is an open source machine learning (ML) platform for the hybrid cloud. As you can probably guess from the title of this post, that’s not what I will be discussing here. OpenShift Container Platform supports a rich ecosystem of AI/ML solutions from very simple to complex, including both free open source as well as vendor-supported options.

This is the first of a series of posts highlighting the independent software vendor (ISV) ecosystem of AI solutions. The first ISV we are going to look at is ClearML.

Note: These posts assume some technical knowledge of Kubernetes, OpenShift, and containers. I will talk a lot about Security Context Constraints (SCCs) so this section of the OpenShift documentation may be a helpful primer.

ClearML is an open source suite of tools that cover the entire machine learning workflow. From experiment tracking to model training to hyperparameter optimization and more, ClearML offers many components to help with all aspects of both data science as well as MLOps.

Open Source and OpenShift

Often, people will bring a piece of software they found on the internet to OpenShift, attempt to run it, fail quickly, and then abandon their quest. Usually, the failure is related to very simple security configurations.

OpenShift has a fairly restrictive set of default security permissions designed to reduce the blast radius of badly acting software and to protect enterprises. But that list of permissions is not set in stone. Cluster administrators and even sometimes namespace owners have the ability to make narrowly scoped changes to these permissions. And of course, you are always talking to your administrators and security personnel about your software deployments, right? The Phoenix Project told you to start doing that way back in 2013.

Helm and Operators

The Kubernetes Operator paradigm is an extremely powerful method of writing control software with the intelligence to install, update/upgrade, back up, and even self-heal the software it deploys. But writing operators that support the full level 5 maturity spectrum is not a trivial task.

Helm charts provide a relatively simple way to templatize the installation and upgrade of deployed software in a Kubernetes environment. OpenShift has supported Helm for several years now, and a very large portion of software projects out there include Helm charts as a way to be deployed into Kubernetes. ClearML is no exception here, and they ship a robust set of Helm charts to enable deploying their solution on Kubernetes.

ClearML and OpenShift

The ClearML Helm chart repository contains three different charts, but the one I will focus on is the core “server” chart that deploys the web-app component of ClearML along with supporting data storage and other components. The rest of this post will detail the steps for deploying ClearML on OpenShift and will point out any gotchas.

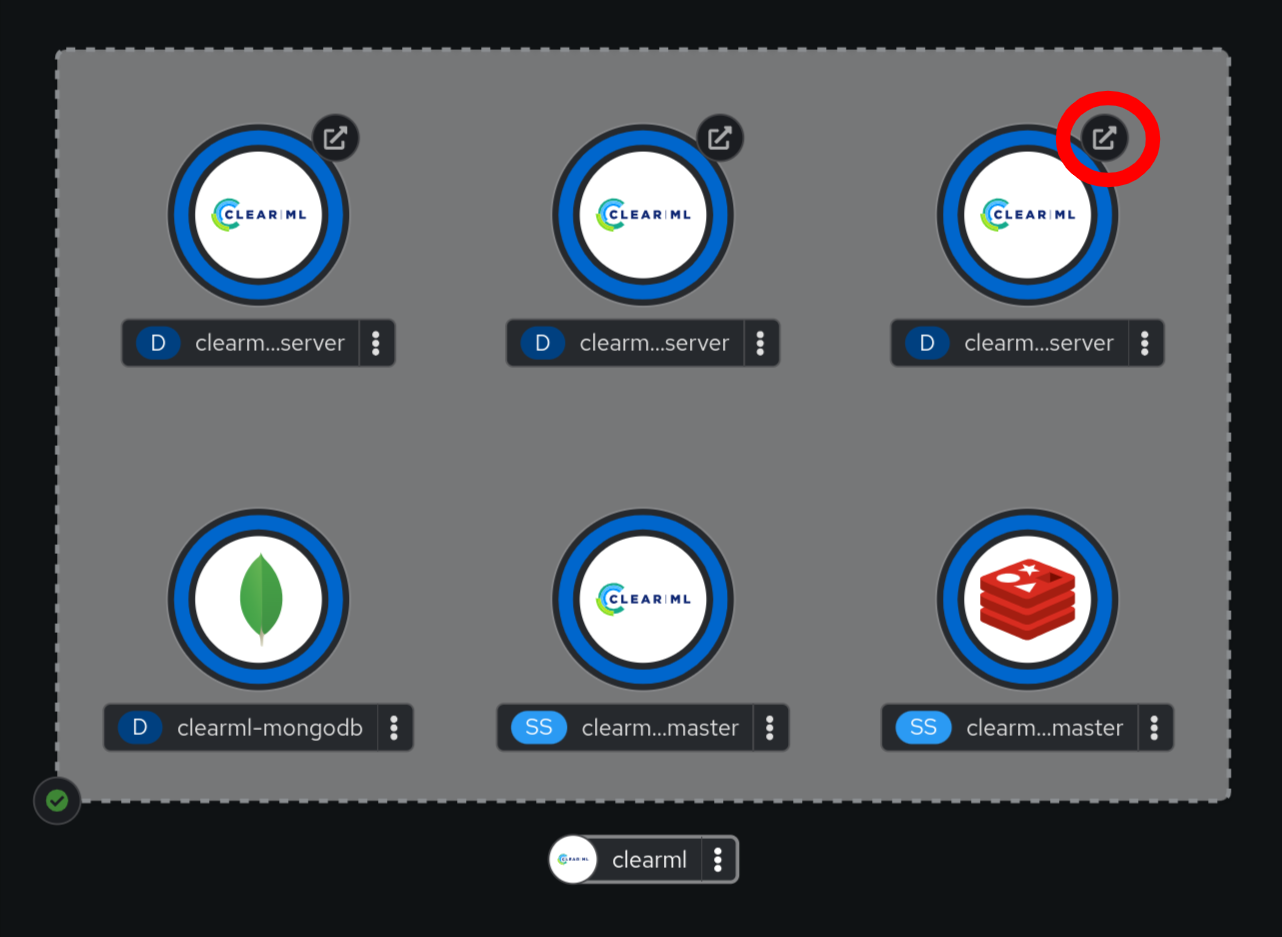

What’s the goal? To get to the fully deployed chart such that the topology indicates all systems are go:

We will make the following assumptions:

- You are the administrator of your OpenShift cluster or have sufficient permissions to change Security Context Constraint settings for ServiceAccounts in the cluster.

- You have the OpenShift CLI, oc, installed, and you are logged into the cluster as said user with permissions.

- Your cluster has the OpenShift router running and configured in such a way that Routes are accessible to you.

- Your cluster has some kind of persistent storage configured that will respond to PersistentVolumeClaims for RWO volumes with ~155GB or more available.

One easy way to handle all of the prerequisites above is to use one of Red Hat’s managed OpenShift offerings in the cloud.

On to the installation:

As with nearly all public Helm repositories, first tell your client about the repo:

$ helm repo add allegroai https://allegroai.github.io/clearml-helm-charts

$ helm repo update

Now, the next step in the ClearML instructions is to install the chart into a namespace. But as I’ve already tried that for you and know what will go wrong, here are some special prerequisites for OpenShift.

Create your OpenShift Project

The instructions aren’t explicitly clear about this, but perhaps they are just assuming that such general Kubernetes knowledge goes without saying. For the purposes of exhaustive completeness, don’t forget to create your project and make sure your client is configured to use it:

$ oc new-project clearml

Create the following local values.yaml file to use with the helm installation:

    elasticsearch:
      rbac:
        create: true
        serviceAccountName: "clearml-elastic"
    apiserver:
      podSecurityContext:
        runAsUser: 0
      service:
        type: ClusterIP
    fileserver:
      service:
        type: ClusterIP
    webserver:
      service:
        type: ClusterIP

Configure the following SCCs for the ServiceAccounts:

$ oc adm policy add-scc-to-user anyuid -z clearml-mongodb

$ oc adm policy add-scc-to-user anyuid -z clearml-redis

$ oc adm policy add-scc-to-user anyuid -z default

$ oc adm policy add-scc-to-user privileged -z clearml-elastic

Let’s take a moment to analyze the above.

One feature of Helm charts is that they can refer to or include other charts. ClearML’s solution uses MongoDB, Redis, and ElasticSearch, and they have embedded the respective charts for each.

The MongoDB and Redis charts both create ServiceAccounts (SAs) when deployed. The containers for each of those solutions run as specific users/UIDs. By default, OpenShift does not allow you to run a container with a specified UID; instead, it assigns the container an arbitrary UID from a range allocated to the project. Since the deployments for MongoDB and Redis explicitly request UIDs, they fail to deploy with an error.
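The range that OpenShift draws those UIDs from is recorded on the project itself, in the openshift.io/sa.scc.uid-range annotation, in the form start/size. The following is a minimal sketch of how to read that value. The helper name uid_range_bounds is hypothetical, and the sample range is only illustrative, so your cluster will report different numbers.

```shell
#!/bin/sh
# Hypothetical helper: turn a "<start>/<size>" UID range into "<first> <last>".
uid_range_bounds() {
  range=$1
  start=${range%%/*}   # text before the slash
  size=${range##*/}    # text after the slash
  echo "$start $((start + size - 1))"
}

# Illustrative value only. On a live cluster, read the real one with:
#   oc get namespace clearml \
#     -o jsonpath='{.metadata.annotations.openshift\.io/sa\.scc\.uid-range}'
uid_range_bounds "1000660000/10000"
```

Any UID a chart hard-codes that falls outside the printed bounds is rejected unless the ServiceAccount is allowed to use a more permissive SCC.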

The anyuid SecurityContextConstraint (SCC) allows, as you might expect from the name, a ServiceAccount to run a container with any specified user ID, including root (UID=0). As such, the first two commands allow the specific SAs created to use the anyuid SCC.

ElasticSearch requires a little more, as one of the things the upstream chart wants to do is change some sysctls on the node where it runs. Unfortunately, sysctl manipulation is a privileged operation and, as such, requires that the privileged SCC be used. Additionally, the ElasticSearch chart does not create an SA by default, so you will notice that the values.yaml from the previous step specifies creating one.

Lastly, the ClearML components (webserver, apiserver, fileserver) do not specify an SA in their respective deployments. When an SA is not specified in the Pod template, OpenShift will use the SA called default. The ClearML webserver container expects to be running as UID 0, but the deployment does not specify this. It was necessary to both tell OpenShift to run the web server as UID 0 (via the podSecurityContext/runAsUser setting in the Helm chart) and then allow this behavior by granting the anyuid SCC to the default SA.

You may have read all of the above and thought, “But we haven’t installed ClearML yet – why are we changing the SCCs? Do these SAs even exist?” The reality is that these permissions settings are a form of mandatory access control. The commands ultimately create entries in Kubernetes that allow the specified behavior. If nothing and no one ever attempts to do the thing that the rule allows, then it doesn’t matter. However, when these various deployments are created and the SAs are used, those rules will exist and allow the desired behavior.
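Because the grants do not depend on the ServiceAccounts existing yet, they are easy to script ahead of the install. Here is one possible sketch that keeps the SCC/SA pairs from the commands above in a single list. The run and grant_sccs helpers and the DRY_RUN variable are my own illustrative names, not part of oc or the ClearML chart. By default the script only prints what it would do.

```shell
#!/bin/sh
# Hypothetical wrapper: print each command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Grant each SCC to its ServiceAccount in the given project.
grant_sccs() {
  ns=$1
  # "<scc> <service account>" pairs, taken from the commands above.
  while read -r scc sa; do
    run oc adm policy add-scc-to-user "$scc" -z "$sa" -n "$ns" </dev/null
  done <<EOF
anyuid clearml-mongodb
anyuid clearml-redis
anyuid default
privileged clearml-elastic
EOF
}

grant_sccs clearml
```

Running it with DRY_RUN=0 executes the same four oc commands against the cluster you are logged into.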

With the SCC settings in place, the Helm chart can now be installed:

$ helm install -f values.yaml clearml allegroai/clearml

The values file did not request Ingresses to be created for the ClearML components, and the normal behavior for the chart is not to create any. The values do specify that the services should be created as ClusterIP and not NodePort. Since your OpenShift environment has a router present, you can simply create Route objects for the services that were created by the Helm chart:

$ oc expose svc clearml-apiserver

$ oc expose svc clearml-webserver

$ oc expose svc clearml-fileserver

After waiting a few moments, you can visit the OpenShift UI and go to the Developer perspective to look at the topology of the deployed ClearML environment:

The OpenShift topology view makes it easy to access applications that are exposed outside the cluster: Just click the little icon (circled in red above) for the clearml-webserver deployment, which will open its URL in a new browser tab.
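If you prefer to stay in the terminal, the same URLs can be read from the Route objects. This is a rough sketch, assuming the Routes carry the same names as the services exposed earlier (the default for oc expose) and are plain HTTP, since no TLS options were passed. The route_url and print_clearml_urls helpers are hypothetical names.

```shell
#!/bin/sh
# Hypothetical helper: build the URL for a plain (non-TLS) Route host.
route_url() {
  echo "http://$1"
}

# Look up the host of each Route created by `oc expose svc` and print its URL.
print_clearml_urls() {
  for svc in clearml-webserver clearml-apiserver clearml-fileserver; do
    host=$(oc get route "$svc" -o jsonpath='{.spec.host}') || return 1
    route_url "$host"
  done
}

# Only query the cluster where the oc client is actually installed.
if command -v oc >/dev/null 2>&1; then
  print_clearml_urls
fi
```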

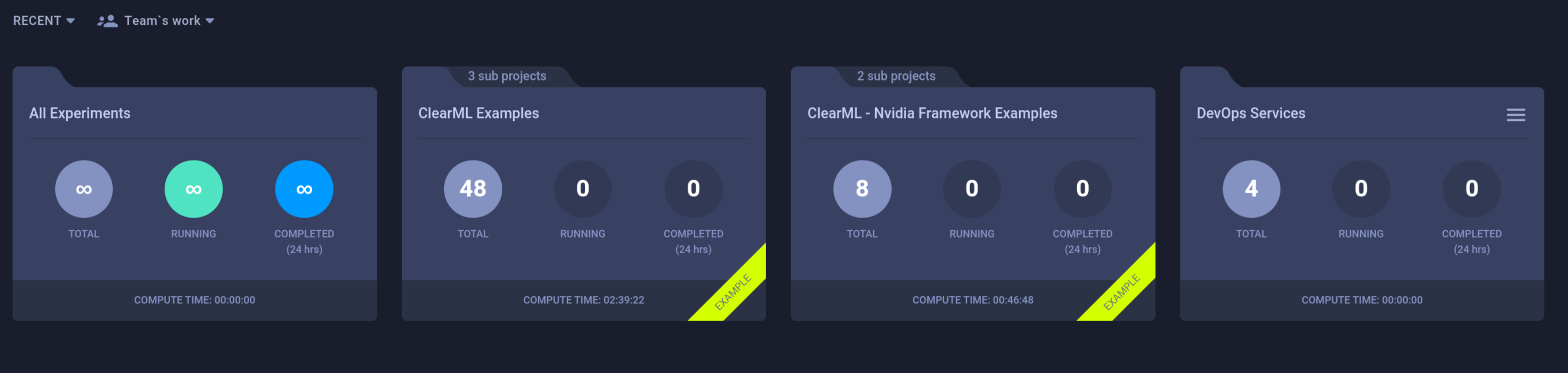

Eventually, ClearML will sync in all of the example content and workspaces:

And that’s all there is to it. You are now ready to use ClearML open-source with OpenShift! In a future post, I’ll look at a more production-grade deployment of ClearML Enterprise.
