The factory-precaching-cli tool is a containerized Go binary publicly available in the Telco RAN tools container image. This blog shows how the factory-precaching-cli tool can drastically reduce the OpenShift installation time when using the Red Hat GitOps Zero Touch Provisioning (ZTP) workflow. The approach matters most on low-bandwidth networks, whether you are pulling from a public registry or a disconnected one.

The factory-precaching-cli tool is a Technology Preview feature only.

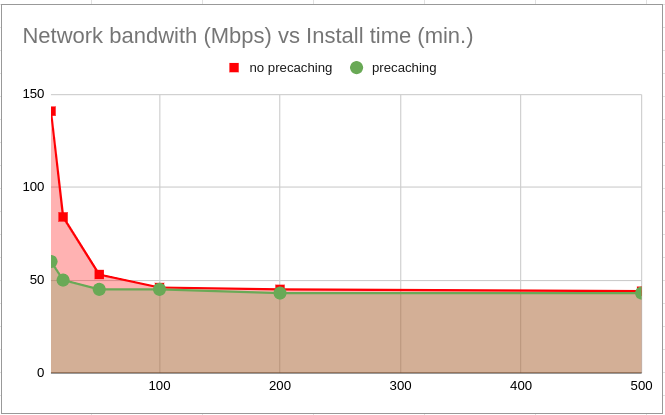

The X-axis in the graph represents network speed in Mbps. The installation time increases considerably in low bandwidth networks (under 100Mbps). Notice that using the factory-precaching-cli tool improves the installation time in those environments. Not every artifact can be precached today, so the network speed still affects the installation time when precaching, but far less than it does without the precache.

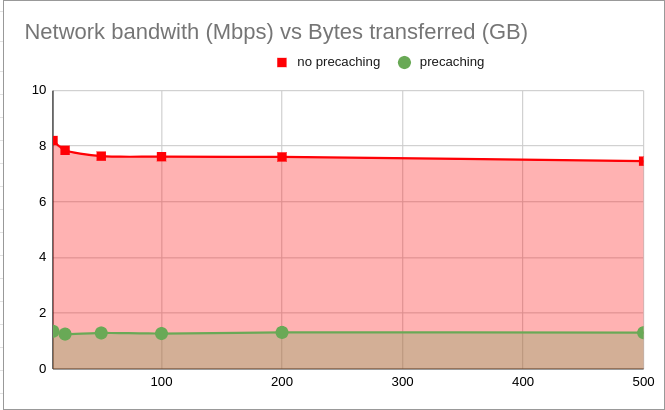

In terms of bytes exchanged with the registry, using the factory-precaching-cli tool saves up to 85% of the total bytes transferred.

Motivation

In environments with limited bandwidth that use the Red Hat GitOps ZTP solution, it might be desirable to avoid downloading the OCP installation artifacts over the network when deploying many SNO clusters. When many clusters are provisioned and the content is pulled from the same private registry, it is also advisable to minimize traffic and keep the registry from becoming a bottleneck.

Bandwidth to remote sites might also be limited, resulting in long deployment times with the existing Red Hat GitOps ZTP solution. Therefore, improvement of the installation time is a valid goal as well.

To address the bandwidth limitations, a factory pre-staging solution helps to eliminate downloading many of the artifacts at the remote site. Artifacts refers both to container images and optional images like the rootfs.

Description

The factory-precaching-cli tool facilitates the pre-staging of servers before they are shipped to the site for later ZTP provisioning. The tool does the following:

- Creates a partition from the installation disk labeled as data.

- Formats the partition as XFS.

- Creates a GPT data partition at the end of the disk. Size is configurable by the tool.

- Copies the container images required by ZTP to install OCP.

- Optionally, day-2 operators are copied to the partition.

- Optionally, third-party container images can be copied to the partition.

- Optionally, downloads the RHCOS rootfs image required by the minimal ISO to boot.

This blog will cover the four stages of the process and discuss the results:

- Booting the RHCOS live.

- Partitioning the installation disk.

- Downloading the artifacts to the partition.

- ZTP configuration for precaching.

Boot the RHCOS live image

Technically, you can boot from any live ISO that provides container tools like Podman. However, the supported and tested OS is Red Hat CoreOS. You can obtain the latest live ISO from here.

Optional: Create a custom RHCOS live ISO

Observe that the SSH server is not enabled in the RHCOS live OS by default. Therefore, booting from the mentioned live ISO is valid but tedious since you need to connect to the server console to execute the commands. Also, it is not suitable for automating the precache process. In such cases, leverage butane and the coreos-installer utilities. They allow you to create a customized RHCOS live ISO with sshd enabled, including some predefined credentials to access it right after booting. This link contains an example, including an sshd configuration.
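The customization described above could be scripted roughly as follows. This is a minimal sketch, not the linked example: the Butane variant and version, the `core` user, the function names, and all file names are assumptions made for illustration.

```shell
# Sketch: build an RHCOS live ISO that accepts SSH logins with a public key.
# Assumptions: butane and coreos-installer are installed on the build machine,
# and every file name passed to these functions is hypothetical.

# Write a Butane config that adds an SSH key for the "core" user and enables sshd.
write_sshd_butane() {
  local out_file="$1" pubkey="$2"
  cat > "$out_file" <<EOF
variant: fcos
version: 1.3.0
passwd:
  users:
    - name: core
      ssh_authorized_keys:
        - "${pubkey}"
systemd:
  units:
    - name: sshd.service
      enabled: true
EOF
}

# Convert the Butane config to Ignition and embed it into a copy of the live ISO.
build_custom_iso() {
  local butane_file="$1" live_iso="$2" custom_iso="$3"
  local ignition_file="${butane_file%.bu}.ign"
  butane --pretty --strict "$butane_file" --output "$ignition_file"
  coreos-installer iso ignition embed -i "$ignition_file" -o "$custom_iso" "$live_iso"
}
```

For example, `write_sshd_butane sshd.bu "$(cat ~/.ssh/id_ed25519.pub)"` followed by `build_custom_iso sshd.bu rhcos-live.x86_64.iso rhcos-sshd.iso` would produce a new ISO and leave the original untouched.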

Partition the installation disk

The disk must not already be partitioned when you start the partitioning process. If it is partitioned, boot from an RHCOS live ISO, delete the partitions, and wipe the entire device. It is also best to erase filesystem, RAID, or partition-table signatures from the device:

[root@liveiso]$ lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sr0 11:0 1 1G 0 rom /run/media/iso

nvme0n1 259:1 0 1.5T 0 disk

├─nvme0n1p1 259:2 0 1M 0 part

├─nvme0n1p2 259:3 0 127M 0 part

├─nvme0n1p3 259:4 0 384M 0 part

├─nvme0n1p4 259:5 0 1.2T 0 part

└─nvme0n1p5 259:6 0 250G 0 part

[root@liveiso]$ wipefs -a /dev/nvme0n1

/dev/nvme0n1: calling ioctl to re-read partition table: Success

[root@liveiso]$ lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sda 8:0 0 893.3G 0 disk

sr0 11:0 1 1G 0 rom /run/media/iso

nvme0n1 259:1 0 1.5T 0 disk
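If you automate this step, the check and the wipe can be wrapped in a small helper. The sketch below is one possible approach; the function names are hypothetical, and `wipefs -a` is destructive, so double-check the device path before running it.

```shell
# Count partitions in `lsblk -rno NAME,TYPE <device>` output read from stdin.
count_partitions() {
  awk '$2 == "part" { n++ } END { print n + 0 }'
}

# Wipe signatures only when the device still has partitions.
# Destructive: wipefs -a erases all signatures on the device.
wipe_if_partitioned() {
  local device="$1" parts
  parts=$(lsblk -rno NAME,TYPE "$device" | count_partitions)
  if [ "$parts" -gt 0 ]; then
    echo "$device has $parts partition(s); wiping signatures"
    wipefs -a "$device"
  else
    echo "$device has no partitions; nothing to wipe"
  fi
}
```

Calling `wipe_if_partitioned /dev/nvme0n1` on the server shown above would report five partitions and then wipe the device.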

A second prerequisite is pulling the quay.io/openshift-kni/telco-ran-tools:latest container image that will run the factory-precaching-cli tool. The image is publicly available in quay.io. If you are in a disconnected environment or use a corporate private registry, copy the image from there into your registry first.

[root@liveiso]$ podman pull quay.io/openshift-kni/telco-ran-tools:latest

Trying to pull quay.io/openshift-kni/telco-ran-tools:latest...

Getting image source signatures

… REDACTED …

Copying blob 146306f19891 done

Finally, be sure that the disk is big enough to precache all the container images required: OCP release images and, optionally, day-2 operators. Based on our experience, 250GB is more than enough for OpenShift installation.

Begin with partitioning. Use the partition argument from the factory-precaching-cli. Create a single partition and a GPT partition table. This partition will be automatically labeled as data by default and created at the end of the device. Notice that the tool also formats the partition as XFS.

[root@liveiso]$ podman run --rm -v /dev:/dev --privileged quay.io/openshift-kni/telco-ran-tools:latest \

-- factory-precaching-cli partition -d /dev/nvme0n1 -s 250

Partition /dev/nvme0n1p1 is being formatted

Verify that a new partition exists:

[root@liveiso]$ lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sr0 11:0 1 1G 0 rom /run/media/iso

nvme0n1 259:1 0 1.5T 0 disk

└─nvme0n1p1 259:2 0 250G 0 part

The coreos-installer requires the partition to be created at the end of the device and labeled as data. Both requirements are necessary to preserve the partition when writing the RHCOS image to disk.

Once you create the partition, mount the device into /mnt and move to the next stage, which is downloading the OpenShift release bits:

[root@liveiso]$ mount /dev/nvme0n1p1 /mnt/

Download the artifacts

This stage manages downloading the OCP release images. More advanced scenarios can be set up, too, such as downloading operators' images or images from disconnected registries.

Pre-requisites

- The partitioning stage must have already been executed successfully.

- Currently, you must connect the target or jump-host server to the Internet to obtain the OCP release image's dependencies.

- A valid pull secret for the registry involved in downloading the container images is required. You can obtain Red Hat's pull secret from the Red Hat console UI.

If your systems are in a strictly disconnected environment, you can execute the download process from a connected jump-host and then copy the artifacts and metadata information to the target server's partition.

Red Hat GitOps ZTP leverages Red Hat Advanced Cluster Management for Kubernetes to provision multiple managed clusters. The RHACM and MCE versions of the hub cluster will determine what assisted installer container images are used by the SNO to provision, report back inventory information, and monitor the installation progress of the managed cluster. Precache those images, too.

Check the version of RHACM and MCE by executing these commands in the hub cluster:

[user@hub]$ oc get csv -A -o=custom-columns=NAME:.metadata.name,VERSION:.spec.version \

| grep -iE "advanced-cluster-management|multicluster-engine"

multicluster-engine.v2.3.1 2.3.1

advanced-cluster-management.v2.8.1 2.8.1
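Because these two versions are passed to the download command later, it can be convenient to capture them in variables instead of copying them by hand. A minimal sketch, assuming the same `oc get csv` output format shown above (the `csv_version` helper is hypothetical):

```shell
# Print the VERSION column of the first CSV whose name starts with "<prefix>.v".
# Reads the output of the `oc get csv ... -o=custom-columns=...` command from stdin.
# With -A the same CSV is listed once per namespace, so only the first match is used.
csv_version() {
  local prefix="$1"
  awk -v p="$prefix" 'index($1, p ".v") == 1 { print $2; exit }'
}

# Example usage on the hub cluster:
#   csv_output=$(oc get csv -A -o=custom-columns=NAME:.metadata.name,VERSION:.spec.version)
#   ACM_VERSION=$(printf '%s\n' "$csv_output" | csv_version advanced-cluster-management)
#   MCE_VERSION=$(printf '%s\n' "$csv_output" | csv_version multicluster-engine)
```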

Next, copy a valid pull secret to access the container registry. Do this on the server that will be installed. If you are using a disconnected registry to pull down the images, the proper certificate is required, too:

[root@liveiso]$ mkdir /root/.docker

[root@liveiso]$ cp config.json /root/.docker/config.json

[root@liveiso]$ cp /tmp/rootCA.pem /etc/pki/ca-trust/source/anchors/.

[root@liveiso]$ update-ca-trust

If you are downloading the images from the Red Hat registry, you only need to copy the Red Hat pull secret. No extra certificates are required.

In recent versions, the factory-precaching-cli tool provides a feature for filtering unneeded images from the download for a given configuration. Below is an example of excluding images for a bare metal server installation:

---

patterns:

- alibaba

- aws

- azure

- cluster-samples-operator

- gcp

- ibm

- kubevirt

- libvirt

- manila

- nfs

- nutanix

- openstack

- ovirt

- sdn

- tests

- thanos

- vsphere
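The filter needs to live in a file that the container can read. One way to create it is a small helper like the sketch below, which writes the same patterns listed above; the function name is hypothetical, and /mnt/image-filters.txt is simply the path that the download command in this post passes to --filter.

```shell
# Write the exclusion patterns from this post to a filter file.
write_image_filters() {
  local out_file="$1"
  cat > "$out_file" <<'EOF'
---
patterns:
  - alibaba
  - aws
  - azure
  - cluster-samples-operator
  - gcp
  - ibm
  - kubevirt
  - libvirt
  - manila
  - nfs
  - nutanix
  - openstack
  - ovirt
  - sdn
  - tests
  - thanos
  - vsphere
EOF
}

# Example usage, once the data partition is mounted on /mnt:
#   write_image_filters /mnt/image-filters.txt
```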

The factory-precaching-cli tool will use parallel workers to download multiple images simultaneously to speed up the pulling process. You can now download the required artifacts locally to the /mnt partition.

As seen in the execution below, several host paths are mounted into the container:

- /mnt folder where the artifacts are going to be stored.

- $HOME/.docker folder where the registry pull secret is located.

- /etc/pki folder where the private registry certificate is stored.

Add the download parameter and the OCP release version to precache, along with the previously obtained RHACM and MCE versions of the hub cluster. Observe that some extra images can also be precached using the --img flag, and the --filter option is included, avoiding precaching unneeded container images.

[root@snonode ~]$ podman run -v /mnt:/mnt -v /root/.docker:/root/.docker -v /etc/pki:/etc/pki \

--privileged --rm quay.io/openshift-kni/telco-ran-tools:latest -- factory-precaching-cli \

download -r 4.13.11 --acm-version 2.8.1 --mce-version 2.3.1 -f /mnt \

--img quay.io/alosadag/troubleshoot --filter /mnt/image-filters.txt

… REDACTED …

gent-rhel8@sha256_d670e52864979bd31ff44e49d790aa4ee26b83feafb6081c47bb25373ea91f65

Downloaded artifact [165/165]: agent-service-rhel8@sha256_f2929eb704ae9808ea46baf3b3511a9e4c097dfca884046e5b61153875159469

Summary:

Release: 4.13.11

ACM Version: 2.8.1

MCE Version: 2.3.1

Include DU Profile: No

Workers: 64

Total Images: 165

Downloaded: 165

Skipped (Previously Downloaded): 0

Download Failures: 0

Time for Download: 2m32s

After a couple of minutes, depending on the network speed, a complete summary of the process is displayed. Finally, unmount the partition before starting the installation process.

[root@liveiso]$ sudo umount /mnt/
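When the precache runs unattended, it may be worth unmounting only after confirming that the summary reports no failures. The sketch below assumes you captured the download output in a log file (for example by piping the podman command through `tee`); the function names and the log path are hypothetical.

```shell
# Print the number on the "Download Failures:" line of a summary read from stdin.
# Returns non-zero when no such line is found.
download_failures() {
  awk '/Download Failures:/ { print $NF + 0; found = 1 } END { exit !found }'
}

# Unmount the partition only when the captured summary reports zero failures.
unmount_if_clean() {
  local log_file="$1" mount_point="$2" failures
  failures=$(download_failures < "$log_file") || {
    echo "no download summary found in $log_file" >&2
    return 1
  }
  if [ "$failures" -eq 0 ]; then
    umount "$mount_point"
  else
    echo "$failures download failure(s); keeping $mount_point mounted" >&2
    return 1
  fi
}

# Example usage:
#   unmount_if_clean /tmp/precache-download.log /mnt
```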

TIP: If you are installing multiple servers with the same configuration, you can speed up the process by uploading artifacts and metadata to an HTTP server reachable from the target devices. Then, download the content from there locally.

ZTP precaching configuration

In this final stage, you will prepare to provision one or multiple SNOs using the precached artifacts local to each cluster. You need to let Zero Touch Provisioning know that there are container images that do not have to be pulled from a registry before starting the installation steps.

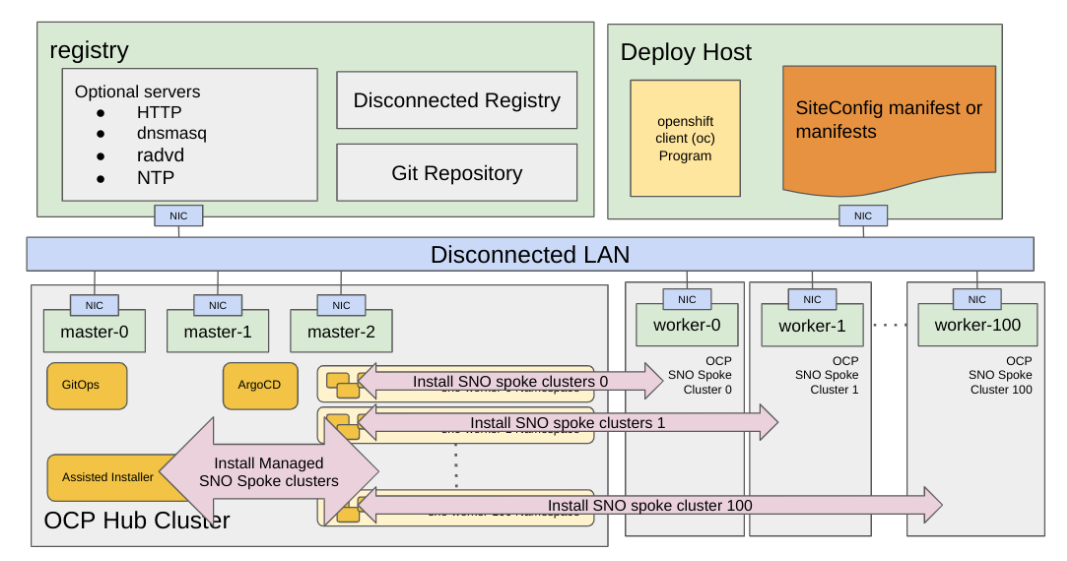

Red Hat GitOps ZTP allows you to provision OpenShift SNO, compact, or standard clusters with declarative configurations of bare-metal equipment at remote sites following a GitOps deployment set of practices. ZTP is a project to deploy and deliver Red Hat OpenShift 4 in a hub-and-spoke architecture (in a relation of 1-N), where a single hub cluster manages many managed or spoke clusters. The central or hub cluster will manage, deploy, and control the lifecycle of the spokes using Red Hat Advanced Cluster Management (RHACM).

Red Hat GitOps ZTP Workflow

Red Hat GitOps Zero Touch Provisioning (ZTP) leverages multiple components to deploy OCP clusters using a GitOps approach. The workflow starts when the node is connected to the network and ends with the workload deployed and running on the nodes. It is logically divided into two stages: Provisioning the SNO and applying the desired configuration.

The provisioning configuration is defined in a siteConfig custom resource that contains all the necessary information to deploy one or more clusters. The provisioning process includes installing the host operating system (RHCOS) on a blank server and deploying the OpenShift Container Platform. This stage is managed mainly by a ZTP component called Assisted Installer, which is part of the Multicluster Engine (MCE).

Once the clusters are provisioned, day-2 configuration can be optionally defined in multiple policies included in PolicyGenTemplates (PGTs) custom resources. That configuration will be applied to the specific managed clusters automatically.

Preparing the siteConfig

As mentioned, a siteConfig manifest defines in a declarative manner how an OpenShift cluster will be installed and configured. Here is an example single-node OpenShift SiteConfig CR. Unlike the regular ZTP provisioning workflow, the precaching workflow requires three extra fields:

- clusters.ignitionConfigOverride: This field adds an extra configuration in ignition format during the ZTP discovery stage. Find detailed information here.

- nodes.installerArgs: This field allows you to configure how the coreos-installer utility writes the RHCOS live ISO to disk. It helps to avoid overriding the precache partition labeled as data. You can find more information here.

- nodes.ignitionConfigOverride: This field adds similar functionality as the clusters.ignitionConfigOverride, but in the OCP installation stage. It allows the addition of persistent extra configuration to the node. Find more information here.

In recent versions of the factory-precaching-cli tool, things are much easier. You can provide a valid siteConfig custom resource, and the siteconfig sub-command helps verify that the extra fields are correct and that any updates to the pre-staging hooks (bug fixes, etc.) are reflected in the siteConfig custom resource.

The command writes the updated siteConfig to stdout, which you can redirect to a new file for comparison with the original before adoption.

# Update site-config, using default partition label

[root@liveiso]$ podman run --rm -i quay.io/openshift-kni/telco-ran-tools:latest -- factory-precaching-cli siteconfig \

<site-config.yaml >new-site-config.yaml
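The comparison with the original can be as simple as a diff plus a quick check that the extra field names are present. The helper below is a rough, text-level sketch with hypothetical function names; it does not parse the YAML, so it cannot tell the cluster-level ignitionConfigOverride from the node-level one.

```shell
# Report whether each precaching-related field name appears in a siteConfig file.
precache_fields_present() {
  local file="$1" field
  for field in ignitionConfigOverride installerArgs; do
    if grep -q "${field}:" "$file"; then
      echo "$field: present"
    else
      echo "$field: missing"
    fi
  done
}

# Show what the siteconfig sub-command changed, then report the fields.
review_siteconfig() {
  local original="$1" updated="$2"
  diff -u "$original" "$updated" || true   # diff exits 1 when the files differ
  precache_fields_present "$updated"
}

# Example usage:
#   review_siteconfig site-config.yaml new-site-config.yaml
```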

Start ZTP

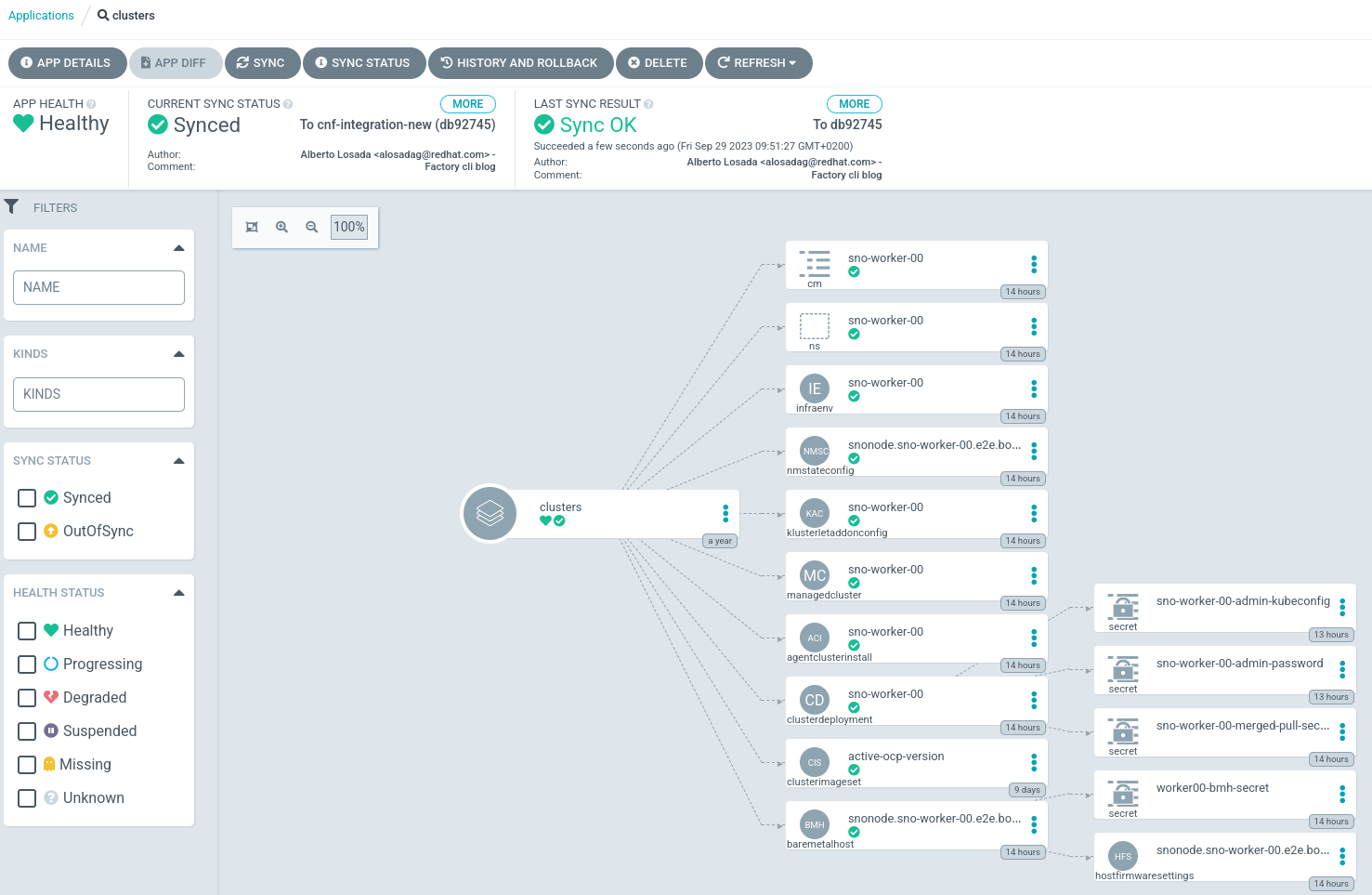

Once the resulting siteConfig is uploaded to the Git repo that OpenShift GitOps is monitoring, you are ready to push the sync button to start the whole process. Remember that the process should require Zero Touch.

OpenShift GitOps is configured by default on auto-sync, so you do not even have to click on any button. That's Zero Touch.

The OpenShift GitOps operator running in the hub cluster will sync the configuration from the remote Git repository and apply it. You will see this as green circles on every resource:

You can also verify that the resource has been applied using the oc command line on the hub cluster:

[user@hub]$ oc get bmh,clusterdeployment,agentclusterinstall,infraenv -A

NAMESPACE NAME STATE CONSUMER ONLINE ERROR AGE

baremetalhost.metal3.io/snonode.sno-worker-00.e2e.bos.redhat.com registering true 50s

NAMESPACE NAME INFRAID PLATFORM REGION VERSION CLUSTERTYPE PROVISIONSTATUS POWERSTATE AGE

sno-worker-00 clusterdeployment.hive.openshift.io/sno-worker-00 agent-baremetal Initialized 52s

NAMESPACE NAME CLUSTER STATE

sno-worker-00 agentclusterinstall.extensions.hive.openshift.io/sno-worker-00 sno-worker-00 insufficient

NAMESPACE NAME ISO CREATED AT

sno-worker-00 infraenv.agent-install.openshift.io/sno-worker-00 2023-09-29T09:05:24Z

Notice that the siteConfig custom resource is divided into multiple resources that the MCE operator can understand.

Monitor the process

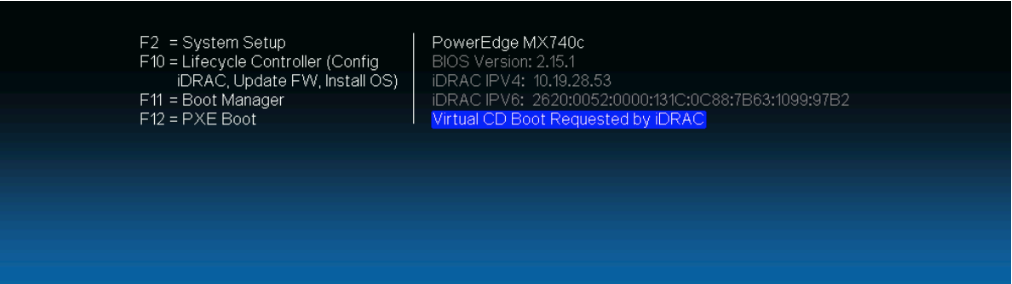

Monitoring the provisioning means examining the ZTP workflow with some additional verifications. First, a few minutes after syncing the configuration, notice that the server is rebooted. It boots from the virtual CD.

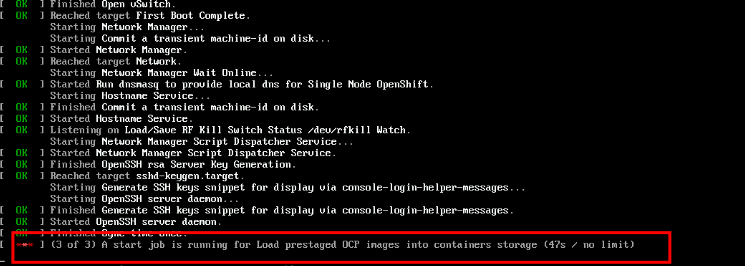

Before the boot process finishes, a systemd unit runs and extracts a few precached container images needed during the discovery stage of the ZTP process. You can monitor them from the console. The information is also logged into the journal log:

$ journalctl

…

Sep 29 09:10:52 snonode bash[2452]: extract-ai.sh: [DEBUG] Processing image quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:4d2959b34b95a21265c39be7fe3cd3029f98b6e65de8fd3b8a473af0bd2>

Sep 29 09:10:52 snonode bash[2884]: extract-ai.sh: [DEBUG] Extracting image quay.io/openshift-release-dev/ocp-v4.0-art-dev@sha256:3aeff89d5126708be7616aea34cb88470145a71c82b81646e64b8919d4b>

… REDACTED …

Sep 29 09:11:26 snonode systemd[1]: precache-images.service: Deactivated successfully.

Sep 29 09:11:26 snonode systemd[1]: Finished Load prestaged images in discovery stage.

Sep 29 09:11:26 snonode systemd[1]: precache-images.service: Consumed 3min 38.548s CPU time.
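To check for this from a script rather than by reading the journal, you could filter on the unit name that appears in the log above. A minimal sketch, with a hypothetical helper name:

```shell
# Succeeds when journal text on stdin shows precache-images.service finishing cleanly.
precache_unit_finished() {
  grep -q 'precache-images.service: Deactivated successfully'
}

# Example usage on the node:
#   journalctl -u precache-images.service --no-pager | precache_unit_finished \
#     && echo "discovery-stage images extracted"
```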

The discovery stage finishes after the RHCOS live ISO is written to the disk. The server will boot from the hard drive with the next restart, and the OCP installation stage begins. In this phase, the precached OCP release images are extracted and ready to be used during the installation. See the systemd service loading the precache image in the image below:

Finally, the cluster is installed. Download the kubeconfig and kubeadmin credentials from the multicloud console running on the hub cluster. The cluster is now ready.

Wrap up

The factory-precaching-cli tool is a promising utility when dealing with slow networks to provision one or multiple SNO clusters. It can also be useful when your corporate registry or network may be a bottleneck when installing many SNO clusters simultaneously. You can find more information in the official docs and the telco-ran-tools GitHub repository, where you can drive the future of the tool.
