
An upcoming release of Red Hat OpenStack Platform director will change how overcloud nodes are configured during deployment, and will make deployments faster by using custom Ansible strategy plugins.

Note: if you haven’t read about “config-download” yet, we suggest you take a look at this previous post ("Greater control of Red Hat OpenStack Platform deployment with Ansible integration") before reading this one.

This post takes a deep dive into how Ansible strategy plugins affect the way overcloud nodes are deployed at large scale, and presents a new feature that allows a certain number of nodes to fail during a deployment or day 2 operation.

How the Overcloud Deployment Executes

When a customer is deploying their cloud, the Red Hat OpenStack Platform director will construct a set of Ansible playbooks to execute the required actions and software configurations to manage their cloud. The Ansible playbook execution is governed by the task strategies defined as part of the playbooks.

The default Ansible execution strategy is the "linear" strategy. This strategy starts with the first task defined in the playbook and proceeds to execute it across all the target systems prior to moving on to the next task. Once all tasks have been completed, the cloud is fully deployed.

Ansible Strategy Plugins Explained

By default Ansible runs each task on all hosts targeted by a task in a play before starting the next task on any host. This linear strategy describes the way that the overall task execution is performed. The task execution is done per host batch as defined by the serial parameter (default is “all”). It will execute each task at the same time for the series of hosts until this host batch is done. Then it goes to the next task. Strategies also handle failure logic as part of the execution.
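To make the ordering concrete, here is a minimal Python sketch of the order in which a linear-style run would start work. It is only a model, not Ansible's implementation: the function name `linear_order` and the host and task names are hypothetical, and hosts inside a batch are listed one after another even though Ansible would run them in parallel.

```python
from typing import Iterator, List, Optional, Tuple


def linear_order(
    hosts: List[str], tasks: List[str], serial: Optional[int] = None
) -> Iterator[Tuple[str, str]]:
    """Yield (task, host) pairs in the order a linear-style run starts them.

    serial=None models the default of a single batch containing all hosts.
    """
    batch_size = serial or len(hosts) or 1
    for start in range(0, len(hosts), batch_size):
        batch = hosts[start:start + batch_size]
        # Inside a batch, a task is handed to every host before the
        # next task is started on any host.
        for task in tasks:
            for host in batch:
                yield task, host


if __name__ == "__main__":
    for task, host in linear_order(["ctl-0", "cmp-0", "cmp-1"], ["t1", "t2"], serial=2):
        print(task, host)
```

With `serial=2`, the first two hosts go through every task before the third host starts its first one.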

Ansible offers other strategies, which can be found on GitHub. Here is a short description of how they work:

debug: follows the same execution as linear, but is controlled by an interactive debug session.

free: task execution does not wait for other hosts to finish the current task. A host that is done with a task gets its next task queued right away, so it is never blocked by the other hosts, and slow nodes do not slow down the remaining tasks in a play for the other nodes.

Strategy Impacts at Scale

The linear strategy works well if you are describing a specific order of tasks across an entire environment. The strategy starts to impact the overall execution performance when these tasks are long running or there are more nodes than available Ansible playbook forks. Since the linear strategy has to wait for all of the tasks to complete on all of the hosts prior to moving on, many of the systems will sit idle either waiting for a task or having already completed their task while the other nodes are executing. If there are no inter-node dependencies between the tasks, this can lead to wasted time waiting for a task instead of working on the next bit of work.

Because the linear strategy may not be ideal for some playbook executions, Ansible provides a ‘free’ strategy that will move on to the next task for a host without waiting for the entire set of target hosts to complete a task. An end user can specify a specific set of tasks that can be run freely to completion by changing the playbook strategy to use the ‘free’ strategy. This can speed up the overall execution of a playbook by better parallelizing the execution across their nodes.
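The difference can be estimated with a small timing model. The sketch below is a simplification under stated assumptions: every host has a worker available (fork limits are not modeled), tasks have no inter-node dependencies, and the durations and host names are invented for illustration.

```python
from typing import Dict, List

# host -> duration of each task, in playbook order (hypothetical values)
Durations = Dict[str, List[float]]


def linear_makespan(durations: Durations) -> float:
    """Every task lasts as long as its slowest host, because all hosts wait."""
    per_task = zip(*durations.values())
    return sum(max(task_times) for task_times in per_task)


def free_makespan(durations: Durations) -> float:
    """Each host runs through its own tasks, so the slowest host sets the total."""
    return max((sum(host_times) for host_times in durations.values()), default=0.0)


if __name__ == "__main__":
    sample = {
        "fast": [1, 1, 1],
        "slow-first": [5, 1, 1],
        "slow-last": [1, 1, 5],
    }
    print("linear:", linear_makespan(sample))
    print("free:", free_makespan(sample))
```

In this model the linear run pays for the slowest host on every task, while the free run only pays for the single slowest host overall.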

Deploying OpenStack

The deployment and configuration of OpenStack requires some coordination at specific times during the installation, update, and upgrade processes. Because there are times when we have execution dependencies across the nodes, we cannot simply switch to the free strategy to run all the time.

For certain times during the deployment, we want the nodes to update in a controlled fashion prior to moving on to the next phase of the deployment. Historically this was controlled by the concept of our deployment steps in the Overcloud deployment. These steps have long been documented in the tripleo-heat-templates as follows:

Load Balancer and Cluster services

Core Shared service setup (Database, Messaging, Time)

Core OpenStack service setup (Authentication, etc.)

Other OpenStack service setup (Storage, Compute, Networking, etc.)

Service activation and final configurations

We can parallelize the execution within each of these steps; however, we need to pause the overall execution of the deployment at the end of each step until all hosts have finished. If we did not pause at the end of each step, we might try to access a service that has yet to be enabled on a different host.
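The pause at the end of each step behaves like a barrier. The following sketch models that idea only; the `StepPlan` structure, the function name, and the durations are hypothetical and are not taken from tripleo-heat-templates.

```python
from typing import Dict, List

# One dict per deployment step: host -> durations of that host's tasks.
StepPlan = List[Dict[str, List[float]]]


def step_boundaries(plan: StepPlan) -> List[float]:
    """Return the start time of every step, followed by the overall finish time."""
    boundaries = [0.0]
    clock = 0.0
    for step in plan:
        # Hosts do not wait for each other inside a step...
        slowest = max((sum(times) for times in step.values()), default=0.0)
        # ...but the next step cannot begin until the slowest host is done.
        clock += slowest
        boundaries.append(clock)
    return boundaries


if __name__ == "__main__":
    plan = [
        {"ctl-0": [2, 3], "cmp-0": [1]},
        {"ctl-0": [4], "cmp-0": [1, 1]},
    ]
    print(step_boundaries(plan))
```

Because no host starts a step before the previous boundary, a service configured on one host in an earlier step is in place before any other host depends on it.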

In previous versions of Red Hat OpenStack Platform (13 and below), Heat handled this part of the overall deployment orchestration. Heat would trigger each step across the cloud and wait until that step was completed before moving on to the next one. Starting with OpenStack Platform 14, we converted the overall deployment process to be handled by Ansible. The initial conversion to Ansible lost some of this step parallelization because of the linear strategy execution described above. This was fine for smaller-scale deployments; however, as we move to larger scales (500+ nodes), this parallelization is a must-have.

Developing New Strategies in TripleO

As part of our investigation to improve the overall execution at scale, we started looking into how we can improve the Ansible playbook execution. We developed a strategy similar to the upstream Ansible "free" strategy that more closely aligns with our expectation that steps run in parallel but failures stop the overall execution.

The overall deployment steps are defined as individual "plays" in the overall execution. This allows the strategy to halt at the end of a play if there is a failure, while still allowing the other nodes to complete their tasks. Additionally while investigating strategies, we also identified other areas that could be improved with the use of custom strategies (e.g. tolerating failures better).

We developed a "tripleo_free" strategy that allows Ansible to execute on the target hosts freely for a given playbook. These tasks will be executed assuming no dependencies on the other hosts that are running the same tasks. Failures on any given host will not stop future tasks from executing on other hosts, but it will cause the overall playbook execution to stop at the end of the play. This strategy is used for the execution of the deployment steps so that tasks run on all hosts (based on the number of workers) freely, without locking at each task.
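The failure behavior described above can be modeled in a few lines of Python. This is a sketch of the described semantics, not the plugin's code: the `Play` structure and `run_plays` function are hypothetical, task outcomes are given as booleans, and hosts are walked one after another where the real strategy would run them concurrently. It also assumes a failed host skips its own remaining tasks, as is usual in Ansible.

```python
from typing import Dict, List, Tuple

# host -> outcome of each of its tasks (True = success); hypothetical input
Play = Dict[str, List[bool]]
Executed = List[Tuple[int, str, int]]  # (play index, host, task index)


def run_plays(plays: List[Play]) -> Tuple[Executed, List[str]]:
    """Return the tasks that were executed and the hosts that failed."""
    executed: Executed = []
    failed: List[str] = []
    for play_index, play in enumerate(plays):
        for host, outcomes in play.items():
            for task_index, succeeded in enumerate(outcomes):
                executed.append((play_index, host, task_index))
                if not succeeded:
                    failed.append(host)
                    break  # only this host stops; other hosts keep going
        if failed:
            break  # the run halts at the end of the play, not mid-play
    return executed, failed


if __name__ == "__main__":
    plays = [
        {"ctl-0": [True, True], "cmp-0": [False, True]},
        {"ctl-0": [True], "cmp-0": [True]},
    ]
    executed, failed = run_plays(plays)
    print(executed)
    print(failed)
```

In the example, `ctl-0` still completes both tasks of the first play even though `cmp-0` fails on its first task, and the second play is never started.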

We also developed a "tripleo_linear" strategy that operates very similarly to the Ansible "linear" strategy, however it is used to handle a configurable failure percentage based on a TripleO node role. This is further detailed in the later section around this topic.

Results (before / after)

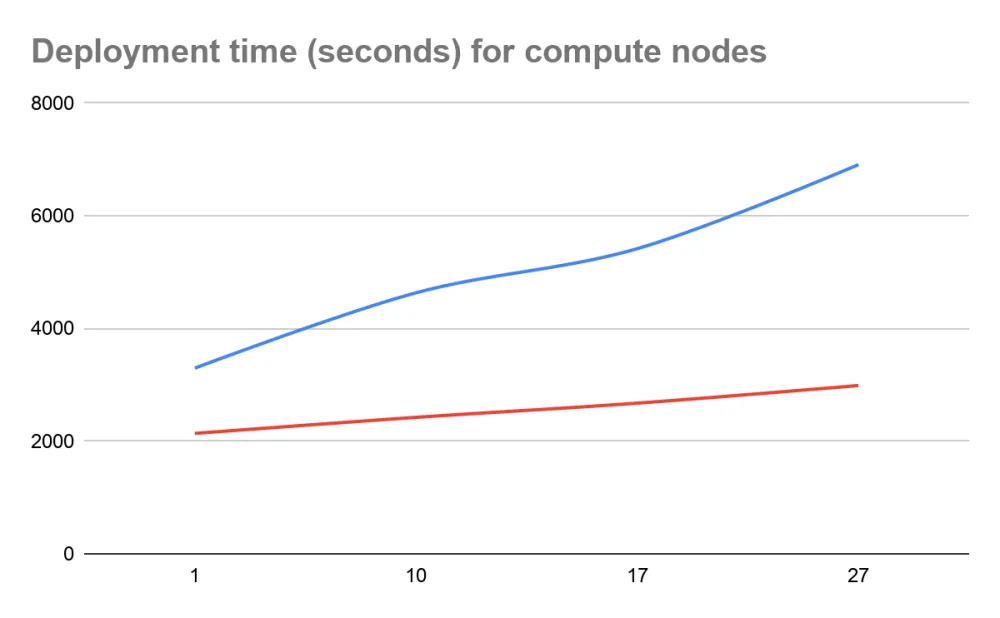

During the Ussuri cycle, we deployed several clouds to show the impact of the number of nodes on the overall execution time. By parallelizing the steps, we were able to reduce the overall execution time and reduce the impact of adding additional nodes to the cloud.

| Total Node Count | Architecture | Linear (default), h:mm:ss | TripleO Free (new!), h:mm:ss |
| --- | --- | --- | --- |
| 4 | 3 controllers, 1 compute | 0:54:55.900 | 0:35:37.660 |
| 13 | 3 controllers, 10 computes | 1:17:13.682 | 0:40:22.737 |
| 20 | 3 controllers, 17 computes | 1:30:17.337 | 0:44:34.960 |
| 30 | 3 controllers, 27 computes | 1:55:08.668 | 0:49:47.799 |

From the table, you can see that we were able to reduce the overall deployment time of a basic 30 node cloud to less time than it used to take to deploy a 4 node cloud. Additionally, the time added by each additional 10 nodes is much smaller: going from 20 to 30 nodes adds about 25 minutes with linear, but only about 5 minutes with TripleO Free.
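The arithmetic behind these observations can be reproduced from the published timings. In the sketch below, only the timings come from the results table; the helper names are illustrative.

```python
from datetime import timedelta
from typing import Dict, Tuple

# Deployment times copied from the results table: nodes -> (linear, tripleo_free).
RESULTS: Dict[int, Tuple[str, str]] = {
    4: ("0:54:55.900", "0:35:37.660"),
    13: ("1:17:13.682", "0:40:22.737"),
    20: ("1:30:17.337", "0:44:34.960"),
    30: ("1:55:08.668", "0:49:47.799"),
}


def parse(hms: str) -> timedelta:
    """Convert an h:mm:ss.fff string into a timedelta."""
    hours, minutes, seconds = hms.split(":")
    return timedelta(hours=int(hours), minutes=int(minutes), seconds=float(seconds))


def speedup(nodes: int) -> float:
    """How many times faster tripleo_free was than linear at this node count."""
    linear, free = (parse(value) for value in RESULTS[nodes])
    return linear / free


def added_seconds_per_node(column: int, small: int = 4, large: int = 30) -> float:
    """Average extra seconds per added node; column 0 is linear, 1 is tripleo_free."""
    delta = parse(RESULTS[large][column]) - parse(RESULTS[small][column])
    return delta.total_seconds() / (large - small)


if __name__ == "__main__":
    for nodes in RESULTS:
        print(f"{nodes} nodes: {speedup(nodes):.2f}x faster")
    print(f"linear: {added_seconds_per_node(0):.0f} s per added node")
    print(f"tripleo_free: {added_seconds_per_node(1):.0f} s per added node")
```

Between the 4 node and 30 node deployments, each added node costs roughly 139 seconds with linear and roughly 33 seconds with TripleO Free, and the speedup grows from about 1.5x to about 2.3x.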

From the chart, you can see the time it takes with linear in blue and with tripleo free in red. Besides being faster, tripleo free also grows more slowly than the default linear strategy as more compute nodes are added.

Future Work: Failure Tolerance

By implementing our own strategies, we are able to implement special logic to handle deployment failures based on node roles in the cloud. Many customers have expressed the desire to be able to deploy a cloud and allow for some failures on Compute related nodes. With the strategy efforts we are able to understand when a failure occurs on a node that is not a critical component of the cloud (e.g. a Compute can fail but a Controller cannot).

We implemented additional logic within the strategies that looks up the TripleO node role for the system that the task was executing on when it failed and checks if the number of failed nodes exceeds a specific threshold. This allows a user to define an acceptable number of failures for a node type before completely failing the overall deployment. This is most useful for Compute related nodes, as sometimes the hardware or systems may fail for some unrelated reason but the end user would like to configure what is available.

As an example, let’s say that we want to deploy a 13 node cloud. This cloud is configured with 3 Controllers and 10 Computes. As the owner, you need to get the cloud up as soon as possible but only really need 5 Compute nodes immediately.

You could configure ComputeMaxFailPercentage: 40 as part of the deployment. Suppose that, while the deployment tasks run against the cloud, 3 of the Compute nodes have an incorrect network configuration, so they fail during the network setup and become unreachable.

Prior to this strategy work, the overall cloud deployment would fail at the initial network setup for the entire cloud. You would have to troubleshoot the failed network on these nodes before continuing again. With the defined failure percentage, the overall deployment should continue, because 3 failed Compute nodes out of 10 (30%) does not exceed the 40% threshold. At the end of the deployment, the cloud would consist of 3 Controller and 7 Compute nodes. The cloud would be operational, and the deployer is free to troubleshoot the failed nodes before running a deployment update to add the resources into the cloud.
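The check in this example boils down to a percentage comparison per role. The sketch below is an illustration rather than the strategy plugin's code: `ROLE_MAX_FAIL_PERCENTAGE` and `deployment_can_continue` are hypothetical names, the Compute value mirrors ComputeMaxFailPercentage: 40 from the example, and the Controller value of 0 is an assumption reflecting that a Controller cannot fail.

```python
from typing import Dict

# Hypothetical per-role thresholds; only the Compute value comes from the example.
ROLE_MAX_FAIL_PERCENTAGE: Dict[str, float] = {
    "Controller": 0.0,  # assumption: no Controller failure is tolerated
    "Compute": 40.0,
}


def deployment_can_continue(role: str, total_nodes: int, failed_nodes: int) -> bool:
    """Return True while the role's failures do not exceed its threshold."""
    if total_nodes <= 0:
        raise ValueError("total_nodes must be positive")
    failed_percentage = 100.0 * failed_nodes / total_nodes
    # Roles without a configured threshold are treated as tolerating no failures.
    allowed = ROLE_MAX_FAIL_PERCENTAGE.get(role, 0.0)
    return failed_percentage <= allowed


if __name__ == "__main__":
    # 3 of 10 Computes failed: 30% is within the 40% threshold.
    print(deployment_can_continue("Compute", total_nodes=10, failed_nodes=3))
    # A single failed Controller is already too many.
    print(deployment_can_continue("Controller", total_nodes=3, failed_nodes=1))
```

Whether the real check treats a failure rate exactly equal to the threshold as acceptable is not stated in this post; the sketch assumes it does.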

Roadmap

While focusing on the deployment steps, we realized that other operations could benefit from this work, such as update and upgrade operations. This is currently under review and testing, but we think that the same strategies could be reused to execute these playbooks. Our hope is to make these operations faster at scale, and potentially reduce the maintenance window for our customers.

We are investigating backporting all these features to OpenStack Platform 16, which involves not just code backports but also testing (including performance benchmarks) and documentation. We hope to release these improvements in a future OpenStack Platform 16 minor release!

About the authors

A Principal Software Engineer with more than 17 years of development and operations experience with a wide range of environments from small startups to large enterprises. Focuses on stability and excels with troubleshooting and scaling applications. Currently a core reviewer and major contributor to multiple OpenStack projects. Responsibilities include infrastructure management, systems lifecycle management (Containers, OpenStack, Virtualization), configuration management (Ansible, Puppet) and CI/CD systems (Zuul, Jenkins).

Emilien has been contributing to OpenStack since 2011 when it was still a young project. He has helped customers have a better experience when deploying, upgrading and operating OpenStack at large scale. His current focus is the integration between OpenShift and OpenStack. He is a maintainer of core components including the Cluster API Provider for OpenStack and Gophercloud. A technical and team leader at Red Hat, he is developing leadership skills with a passion for teamwork and technical challenges. He loves sharing his knowledge and often gives talks at conferences.
