Red Hat OpenStack Platform 14 director changes how the overcloud nodes are configured during deployment. The biggest new feature is called “config-download”, and it uses Ansible to apply the overcloud software configuration.

This post looks at the operator- and deployer-facing changes that come with config-download, and shares some tips and tricks for interacting with and controlling an OpenStack deployment with director.

config-download Explained

Let’s quickly explain a little bit about the config-download feature.

With config-download, Ansible is used to replace the communication and transport of the software configuration deployment data between Heat and the Heat agent (os-collect-config) on the overcloud nodes. The usage of Ansible provides a more familiar operator experience and can give more flexibility for interacting with the deployment, which we’ll cover shortly.

Heat is still used to create the stack and the OpenStack resources. The same parameter values and environment files are passed to Heat as they were previously. However, instead of the Heat agent polling the Heat API for software configuration, Ansible is used to apply the software configuration with the undercloud taking the role of the Ansible control node. ansible-playbook is run from the undercloud and it configures all the overcloud nodes via a push model with an SSH transport.

The whole deployment process is still automated behind the API and the single CLI command openstack overcloud deploy. However, new options give the operator more control, as we’ll show here.

config-download related CLI arguments

First, it's important to realize that config-download was designed to introduce no backwards-incompatible interface changes. Existing CLI parameters, Heat environments, and Heat parameters should continue to be specified in the same manner as with Red Hat OpenStack Platform 13 director.

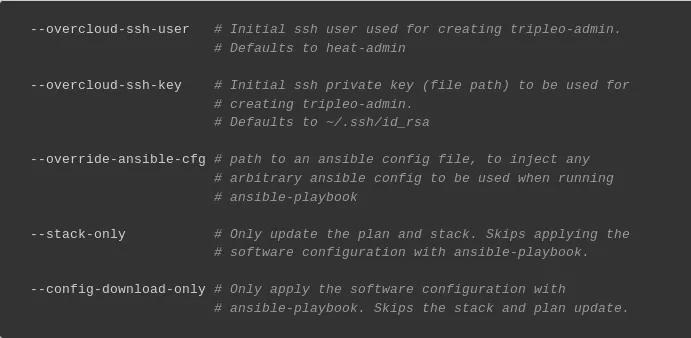

Some new CLI arguments have been added, however. The first two are --overcloud-ssh-user and --overcloud-ssh-key. Since the Ansible control node is the undercloud, it needs to connect via SSH to each of the overcloud nodes to perform the configuration. These CLI parameters control how that connection is made.

The --overcloud-ssh-user parameter defaults to heat-admin, but can be set to any username needed for the SSH connection. The --overcloud-ssh-key parameter defaults to ~/.ssh/id_rsa, but likewise can be set to the path of the SSH private key needed for the connection.
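As a rough illustration of the two SSH parameters, the sketch below assembles an openstack overcloud deploy command line in Python and prints it instead of running it. The helper name deploy_command and the use of --templates with no other arguments are assumptions made for the example; a real deployment would pass its usual environment files as well.

```python
import os
import shlex


def deploy_command(ssh_user="heat-admin", ssh_key="~/.ssh/id_rsa", extra_args=()):
    """Return an argv list for `openstack overcloud deploy` with explicit SSH settings.

    deploy_command is a hypothetical helper for this post, not part of director.
    """
    return [
        "openstack", "overcloud", "deploy",
        "--templates",
        "--overcloud-ssh-user", ssh_user,
        # Expand "~" here because no shell does it when argv is passed directly.
        "--overcloud-ssh-key", os.path.expanduser(ssh_key),
        *extra_args,
    ]


cmd = deploy_command()
# Printed for review; subprocess.run(cmd, check=True) would execute it.
print(shlex.join(cmd))
```

The defaults in the helper mirror the documented defaults, so passing them explicitly only matters when a different user or key is needed.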

Running Ansible manually (using --stack-only)

You can think of the Heat stack as being responsible for deployment of bare metal resources such as node provisioning and network configuration. Ansible is responsible for the software configuration, such as applying manifests, generating config files, and orchestrating containers.

Suppose you want to interact with Ansible more directly to take advantage of some of its operator facing features. config-download gives you a way to separate the phases of the Heat stack operation from the Ansible phase of the deployment.

The way to do that is to pass --stack-only to openstack overcloud deploy. This will stop the overcloud deployment after the Heat stack is complete, and give you the chance to run Ansible manually with more available customizations.
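A minimal sketch of the Heat-only phase follows. It builds the deploy command with --stack-only appended and prints it. The helper name and the environment file name my-environment.yaml are placeholders invented for the example.

```python
import shlex


def stack_only_command(environment_files=()):
    """Build a deploy command that stops once the Heat stack is complete."""
    cmd = ["openstack", "overcloud", "deploy", "--templates"]
    for env_file in environment_files:
        # Environment files are passed exactly as in a normal deployment.
        cmd += ["-e", env_file]
    cmd.append("--stack-only")
    return cmd


# my-environment.yaml is a hypothetical environment file name.
print(shlex.join(stack_only_command(["my-environment.yaml"])))
```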

Generating the Ansible inventory

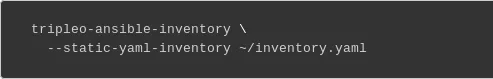

After running with --stack-only, the first thing to do is to generate the Ansible inventory file with the tripleo-ansible-inventory command (use --help to see a full list of options):

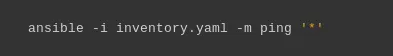
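The inventory step might look like the sketch below, which builds the tripleo-ansible-inventory command and prints it. The option names --ansible_ssh_user and --static-yaml-inventory are taken from the upstream TripleO documentation for this release as best recalled, so confirm them with --help; the output file name inventory.yaml matches the name used later in this post.

```python
import shlex


def inventory_command(ssh_user="heat-admin", output="inventory.yaml"):
    """Build the command that writes a static YAML inventory for the overcloud."""
    return [
        "tripleo-ansible-inventory",
        "--ansible_ssh_user", ssh_user,
        "--static-yaml-inventory", output,
    ]


print(shlex.join(inventory_command()))
```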

Testing connectivity

At this point, it's a good idea to test connectivity to the nodes in the inventory. Use Ansible with the ping module to do so:
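One way to script the connectivity check is sketched below. It builds an ad hoc ansible command that runs the ping module against every host in the inventory, and only executes it when the ansible executable is on PATH. The helper names are hypothetical, and inventory.yaml is assumed to be in the current directory.

```python
import shlex
import shutil
import subprocess


def ping_command(inventory="inventory.yaml", pattern="all"):
    """Build an ad hoc Ansible command that runs the ping module."""
    return ["ansible", "-i", inventory, pattern, "-m", "ping"]


def run_if_installed(cmd):
    """Run cmd and return its exit code, or None when the executable is missing."""
    if shutil.which(cmd[0]) is None:
        return None
    return subprocess.run(cmd).returncode


print(shlex.join(ping_command()))
```

Calling run_if_installed(ping_command()) on the undercloud would perform the check; a non-zero exit code indicates that at least one host did not respond.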

Resolve any connection issues before moving forward.

Creating the Ansible project directory

The next step is to download the software configuration data from Heat. The openstack overcloud config download command is used to do that (use --help to see a full list of options):

This command creates an Ansible project directory at the path specified by --config-dir.
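A sketch of the download step is shown below. The directory name config-download is an assumption chosen to match the directory this post changes into later; any path can be given to --config-dir.

```python
import shlex


def config_download_command(config_dir="config-download"):
    """Build the command that downloads the software configuration from Heat."""
    return [
        "openstack", "overcloud", "config", "download",
        "--config-dir", config_dir,
    ]


print(shlex.join(config_download_command()))
```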

Running ansible-playbook

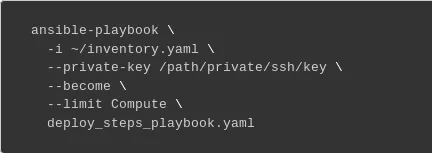

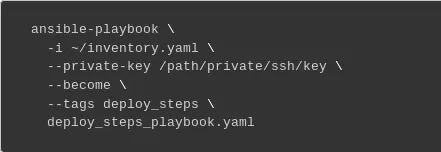

Once the configuration has been downloaded and the inventory generated, change to the config-download directory and run ansible-playbook to configure the overcloud nodes.

The deploy_steps_playbook.yaml playbook is used to fully configure the overcloud nodes. This command should apply the full software configuration of the overcloud nodes:
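The full run could be assembled as in the sketch below. It assumes the command is launched from inside the Ansible project directory, that inventory.yaml is reachable at the given path, and that --become is wanted for privilege escalation on the nodes; the helper name and the example key path are hypothetical.

```python
import shlex


def playbook_command(inventory="inventory.yaml", private_key=None, extra_args=()):
    """Build an ansible-playbook command for deploy_steps_playbook.yaml."""
    cmd = ["ansible-playbook", "-i", inventory]
    if private_key:
        # Only needed when the key is not the one SSH would pick by default.
        cmd += ["--private-key", private_key]
    cmd += ["--become", "deploy_steps_playbook.yaml", *extra_args]
    return cmd


# /path/to/private/key is a placeholder, not a real location.
print(shlex.join(playbook_command(private_key="/path/to/private/key")))
```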

Limiting Hosts

Certain hosts can be included or excluded from any changes by using the --limit option with ansible-playbook. As an operator, you may wish to limit which nodes are affected by changes in the deployment. This feature may be useful when dealing with hardware issues, enforcing change control policy in your environment, or debugging certain nodes. Using --limit can also give faster feedback, since not all changes need to be applied to all nodes.

For example, to apply changes only to hosts in the compute group from the inventory file, the following command could be used:
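A sketch of such a limited run follows. It assumes the inventory group is named Compute, after the Heat role; check inventory.yaml for the group names in your deployment. The BASE list and the with_limit helper are illustrative only.

```python
import shlex

# Base command, assumed to be run from the Ansible project directory.
BASE = ["ansible-playbook", "-i", "inventory.yaml", "--become", "deploy_steps_playbook.yaml"]


def with_limit(cmd, pattern):
    """Return a copy of cmd restricted to hosts matching an Ansible pattern."""
    return [*cmd, "--limit", pattern]


print(shlex.join(with_limit(BASE, "Compute")))
```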

--limit takes an Ansible pattern value that matches against the set of hosts defined in the inventory file. For more information on specifying a pattern, see "Working with Patterns" in the Ansible docs. Open the inventory file called inventory.yaml to see the available host groups that can be used as patterns.

Running specific tasks

Running only specific tasks (or skipping certain tasks) can be done from within the Ansible project directory. Specifying particular tasks to run could be used to debug tasks that may have failed previously, or to rerun certain tasks if you know other tasks have already been applied. It can also be used to resume a previously failed deployment after the issue has been resolved. By controlling what tasks are run, subsequent deployment updates can complete more quickly.

This can be useful during troubleshooting and debugging scenarios, but should be used with caution as it can result in an overcloud that is not fully configured. All tasks that are part of the deployment eventually need to be run, and in the proper order. Note that it’s recommended that all changes to the deployed cloud still be applied through the Heat templates and environment files passed to the openstack overcloud deploy command. Doing so ensures that the deployed cloud is kept in sync with the state of the templates and the state of the Heat stack.

The way to control which tasks run is with --tags, --skip-tags, or --start-at-task.

Tags

Tags can be used to only run certain tasks that have been tagged with the specified tag(s).

For example, to run just the five deployment steps used for service configuration, the following command could be used:
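A sketch of a tag-restricted run is shown below. It assumes the deploy_steps tag covers the five deployment steps and joins multiple tags with commas, which is the form ansible-playbook accepts. The helper is hypothetical.

```python
import shlex

# Base command, assumed to be run from the Ansible project directory.
BASE = ["ansible-playbook", "-i", "inventory.yaml", "--become", "deploy_steps_playbook.yaml"]


def with_tags(cmd, tags=(), skip_tags=()):
    """Return a copy of cmd that runs only `tags` and/or skips `skip_tags`."""
    result = list(cmd)
    if tags:
        result += ["--tags", ",".join(tags)]
    if skip_tags:
        result += ["--skip-tags", ",".join(skip_tags)]
    return result


print(shlex.join(with_tags(BASE, tags=["deploy_steps"])))
```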

The playbooks use tagged tasks for finer-grained control of what to apply if desired. As shown above, tags can be used with the ansible-playbook CLI arguments --tags or --skip-tags to control what tasks are executed. --list-tags can also be used to view what tags have been enabled on which plays and tasks. At a high level, the enabled tags are:

| Tag | Description |
| --- | --- |
| facts | fact gathering |
| common_roles | ansible roles common to all nodes |
| overcloud | all plays for overcloud deployment |
| pre_deploy_steps | deployments that happen pre deploy_steps |
| host_prep_steps | host preparation steps |
| deploy_steps | deployment steps |
| post_deploy_steps | deployments that happen post deploy_steps |
| external | all external deployments |
| external_deploy_steps | external deployments that run on the undercloud |

Server specific pre and post deployments

The list of server specific pre and post deployments run during the Server deployments and Server Post Deployments plays (see deploy_steps_playbook.yaml) is dependent upon what custom roles and templates are used with the deployment.

The list of these tasks is defined in an Ansible group variable that applies to each server in the inventory group named after the Heat role. From the Ansible project directory, the value can be seen within the group variable file named after the Heat role:

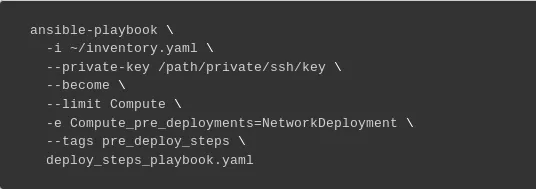

<Role>_pre_deployments is the list of pre deployments, and <Role>_post_deployments is the list of post deployments. To specify the specific task to run for each deployment, the value of the variable can be defined on the command line when running ansible-playbook, which will overwrite the value from the group variable file for that role. For example:
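As a sketch of such an override, the code below builds a command that sets Compute_pre_deployments to the single value NetworkDeployment with -e, restricts the plays with --tags pre_deploy_steps, and restricts the hosts with --limit Compute. The helper name is hypothetical, and the variable is passed in key=value form, so it arrives as a single string rather than a list.

```python
import shlex


def single_pre_deployment(role, deployment, inventory="inventory.yaml"):
    """Build a command that applies one pre deployment to one role's hosts."""
    return [
        "ansible-playbook", "-i", inventory, "--become",
        "deploy_steps_playbook.yaml",
        "--limit", role,
        # Overrides the value from the role's group variable file.
        "-e", f"{role}_pre_deployments={deployment}",
        "--tags", "pre_deploy_steps",
    ]


print(shlex.join(single_pre_deployment("Compute", "NetworkDeployment")))
```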

Using the above example, only the task for the NetworkDeployment resource would get applied since it would be the only value defined in Compute_pre_deployments, and --tags pre_deploy_steps is also specified, causing all other plays to get skipped. The task would only apply to Compute nodes since --limit is also specified.

Starting at a specific task

To start the deployment at a specific task, use the ansible-playbook CLI argument --start-at-task. To see the task names for a given playbook, use --list-tasks.
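The two options could be combined as in the sketch below: list the task names first, then resume from one of them. The TASK_NAME value is a placeholder, not a real task name; substitute a name exactly as --list-tasks prints it.

```python
import shlex

# Base command, assumed to be run from the Ansible project directory.
BASE = ["ansible-playbook", "-i", "inventory.yaml", "--become", "deploy_steps_playbook.yaml"]

# Placeholder only: replace with a task name reported by --list-tasks.
TASK_NAME = "<task name from --list-tasks>"

list_tasks = [*BASE, "--list-tasks"]
resume = [*BASE, "--start-at-task", TASK_NAME]

# shlex.join quotes the task name, which matters because names contain spaces.
print(shlex.join(list_tasks))
print(shlex.join(resume))
```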

Previewing changes

Changes can be previewed to see what will be changed before any changes are applied to the overcloud. As an operator you may want to see how a change in the templates affects values in configuration files, or see what other configuration data has changed.

To preview changes, pass the --check CLI argument to ansible-playbook. The extent to which changes can be previewed depends on many factors, such as the underlying tools in use (puppet, docker, etc.) and the support for Ansible check mode in the given Ansible module.

The --diff option can also be used with --check to show the differences that would result from changes.
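A preview run might be assembled as in the sketch below, which appends --check and, optionally, --diff to the usual playbook command. The helper name is hypothetical.

```python
import shlex

# Base command, assumed to be run from the Ansible project directory.
BASE = ["ansible-playbook", "-i", "inventory.yaml", "--become", "deploy_steps_playbook.yaml"]


def preview_command(cmd, show_diff=True):
    """Return a copy of cmd that runs in check mode, optionally showing diffs."""
    preview = [*cmd, "--check"]
    if show_diff:
        preview.append("--diff")
    return preview


print(shlex.join(preview_command(BASE)))
```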

Status and Error reporting

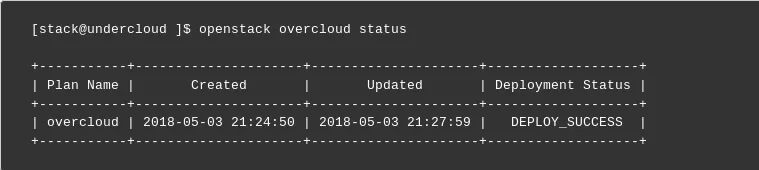

There are two new commands that are useful for status and error reporting when dealing with config-download. The first is openstack overcloud status, which shows the status of the deployment, taking into account both the Heat and Ansible phases of the deployment:

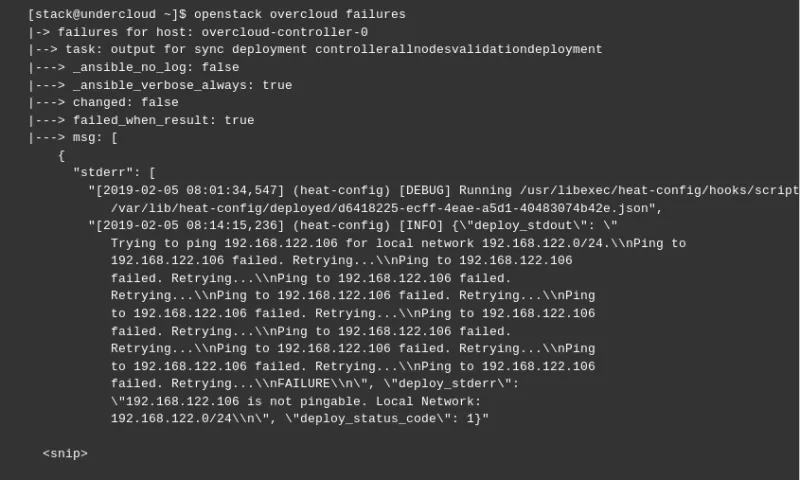

The second command is openstack overcloud failures, which will show any errors that were encountered during the Ansible phase of the deployment:
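Both reporting commands can be wrapped as in the sketch below. It runs them without extra options, which assumes the default overcloud; the REPORT_COMMANDS mapping and the report helper are hypothetical, and the helper returns None when the openstack client is not installed.

```python
import shutil
import subprocess

REPORT_COMMANDS = {
    "status": ["openstack", "overcloud", "status"],
    "failures": ["openstack", "overcloud", "failures"],
}


def report(name):
    """Return the command's stdout, or None if the openstack client is missing."""
    cmd = REPORT_COMMANDS[name]
    if shutil.which(cmd[0]) is None:
        return None
    return subprocess.run(cmd, capture_output=True, text=True).stdout


for name, cmd in REPORT_COMMANDS.items():
    print(name, "->", " ".join(cmd))
```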

Conclusion

config-download gives the operator more control over certain aspects of the deployment through Ansible. If you’re deploying with Red Hat OpenStack Platform 14 director, these features should make it easier to debug and troubleshoot failed tasks and give you more flexibility with the deployment.

About the author

James Slagle is a Senior Principal Software Engineer at Red Hat. He's been working on OpenStack since the Havana release in 2013. His focus has been on OpenStack deployment and particularly OpenStack director. Recently, his efforts have been around optimizing director for edge computing architectures, such as the Distributed Compute Node offering (DCN).
