Since the first release of OpenShift 4, we have supported deployments using the “pre-existing infrastructure” method, also known as user provisioned infrastructure or UPI. This method relies on the administrator to provision the virtual machines and install Red Hat Enterprise Linux CoreOS (RHCOS) before handing them over to the OpenShift install process. We are very happy to announce that, with the release of OpenShift 4.5, the full-stack automated install experience, also known as installer provisioned infrastructure or IPI, is now available for VMware vSphere infrastructure.

The full-stack automated install process has several important and helpful differences from the other methods. First, you, the administrator, don’t need to create the virtual machines, install RHCOS, and then start the OpenShift install process. Nor do you need to manually manage the nodes, via the vCenter interface, when adding or removing nodes for capacity in the cluster. Instead, the installer (openshift-install) will use the credentials you provide to connect to vCenter, upload a template, then clone that template to be used for the bootstrap and control plane nodes.

This leads to the second major difference between the user-provisioned and installer-provisioned deployment methods. With the full-stack automated method, the vSphere cloud provider is deployed, configured, and used to create the worker nodes, both during the install process and afterward. To scale the cluster for additional compute capacity, or to create customized MachineSets, the administrator uses the integrated automation to change the number of nodes, manually or automatically, and relies on OpenShift to take action without further intervention.
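To make the scaling step concrete, here is a minimal sketch of what "changing the number of nodes" amounts to: setting `spec.replicas` on a MachineSet. It only builds the JSON merge patch and prints an `oc patch` command. The helper name `replica_patch` and the MachineSet name `mycluster-worker` are placeholders for illustration and do not come from this article.

```python
import json


def replica_patch(replicas: int) -> str:
    """Return a JSON merge patch that sets spec.replicas on a MachineSet."""
    if replicas < 0:
        raise ValueError("replicas must be zero or greater")
    return json.dumps({"spec": {"replicas": replicas}})


if __name__ == "__main__":
    # "mycluster-worker" is a placeholder MachineSet name (an assumption).
    name = "mycluster-worker"
    patch = replica_patch(3)
    # MachineSets live in the openshift-machine-api namespace.
    print(f"oc patch machineset {name} -n openshift-machine-api "
          f"--type merge -p '{patch}'")
```

Once the replica count changes, OpenShift creates or removes the matching virtual machines on its own, which is the "without intervention" part described above.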

The video below shows the vSphere full-stack automated install process, highlighting the simplicity of installing using this method:

You may have noticed in the video that we did not need to create and configure a load balancer, and the only DNS entries we needed were for two IPs (outside of the DHCP range) for the OpenShift ingress and API endpoints. With each of the on-premises full-stack automation methods (vSphere, Red Hat Virtualization, and Red Hat OpenStack Platform), the OpenShift cluster uses an integrated load balancer and DNS solution, which is automatically configured and managed. Let’s dig into each of these a little bit.
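Because the two IPs have to sit outside the DHCP range, a quick pre-flight check can save a failed install. The sketch below is one way to do that with Python's standard `ipaddress` module. The function name `vip_problems` and the sample addresses are illustrative assumptions, and the rule that the two VIPs must differ is our reading of "two IPs" rather than a documented installer check.

```python
import ipaddress


def vip_problems(api_vip, ingress_vip, dhcp_start, dhcp_end):
    """Return a list of problems with the two VIPs (empty if none found)."""
    start = ipaddress.ip_address(dhcp_start)
    end = ipaddress.ip_address(dhcp_end)
    vips = {
        "API": ipaddress.ip_address(api_vip),
        "ingress": ipaddress.ip_address(ingress_vip),
    }
    problems = []
    if vips["API"] == vips["ingress"]:
        problems.append("API and ingress VIPs must be different addresses")
    for label, vip in vips.items():
        # A VIP inside the DHCP pool could be leased to another machine.
        if start <= vip <= end:
            problems.append(f"{label} VIP {vip} is inside the DHCP range")
    return problems


if __name__ == "__main__":
    # Sample addresses only; substitute the values for your own network.
    for problem in vip_problems("192.168.1.5", "192.168.1.150",
                                "192.168.1.100", "192.168.1.200"):
        print(problem)
```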

Load Balancer

There are typically three load-balanced endpoints needed when deploying OpenShift: the API (api.clustername.domainname), the internal API (api-int.clustername.domainname), and application ingress (*.apps.clustername.domainname). In a pre-existing infrastructure deployment, we ask the administrator to configure the load balancer to point to the appropriate nodes and ports at install time and then maintain the configuration as they add or remove nodes. With full-stack automation, the cluster can scale nodes, both up and down, at any time, which means that the load balancer’s configuration needs to be updated automatically.
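The naming pattern for the three endpoints is regular enough to generate. This small sketch derives them from a cluster name and base domain. `cluster_endpoints` is a hypothetical helper, and `ocp4` and `example.com` are placeholder values.

```python
def cluster_endpoints(cluster_name, base_domain):
    """Return the three load-balanced endpoint names for a cluster."""
    suffix = f"{cluster_name}.{base_domain}"
    return {
        "api": f"api.{suffix}",
        "api-int": f"api-int.{suffix}",
        "ingress": f"*.apps.{suffix}",
    }


if __name__ == "__main__":
    # Placeholder cluster name and domain, for illustration only.
    for role, name in cluster_endpoints("ocp4", "example.com").items():
        print(f"{role}: {name}")
```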

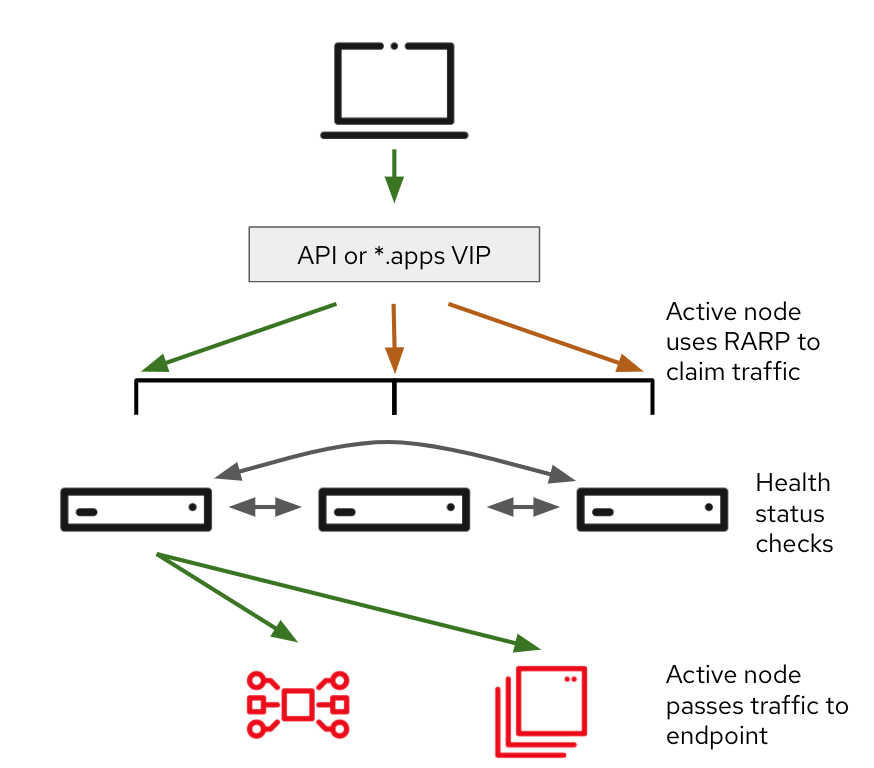

To address this issue, the cluster requests two virtual IPs (VIPs) during the install configuration: one for the API and API-INT endpoints and another for the ingress. The API VIP is managed by a Keepalived instance deployed to the control plane nodes. The node hosting the VIP is the one that receives the traffic when a new connection to the OpenShift API is created. From there, the traffic goes to an HAProxy instance, which also runs on each of the control plane nodes. This HAProxy instance forwards connections, using a round-robin policy, to each of the available control plane nodes in the cluster.
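The two-stage flow for the API VIP can be sketched in a few lines. This is a toy model, not how Keepalived or HAProxy are implemented: list order stands in for Keepalived's VRRP election as a simplifying assumption, and the node names are placeholders.

```python
from itertools import cycle


def vip_holder(nodes, healthy):
    """Pick the VIP holder: the first healthy node in list order."""
    for node in nodes:
        if node in healthy:
            return node
    raise RuntimeError("no healthy control plane node can hold the VIP")


def round_robin(backends):
    """Return an iterator that hands out backends in turn, endlessly."""
    return cycle(backends)


if __name__ == "__main__":
    control_plane = ["master-0", "master-1", "master-2"]  # placeholder names
    healthy = {"master-1", "master-2"}  # master-0 is down in this example
    print("VIP held by:", vip_holder(control_plane, healthy))
    backends = round_robin([n for n in control_plane if n in healthy])
    for _ in range(4):
        print("forward to:", next(backends))
```

The point of the sketch is the separation of concerns: one component decides where the VIP lives, and another spreads connections across whatever control plane nodes are available.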

This same process is used for the ingress VIP with two differences:

- The Keepalived instance is only active on worker nodes which are hosting a Router instance.

- The connections are forwarded to a Router instance, which takes action to pass the traffic to the deployed application, instead of another HAProxy instance.

This configuration enables simplified deployment and scaling of the OpenShift cluster without having an external dependency. However, several Red Hat partners, such as Citrix, F5, and Cisco, have certified Operators which can be integrated after deployment to replace the integrated load balancer configuration for the ingress endpoint. This can enable greater performance, additional features, and more based on the capabilities you decide to leverage from our partners.

DNS

The other change I highlighted from the video above is that we don’t need to configure DNS entries for each of the nodes or create SRV records for etcd, among several other requirements. Some of this, particularly the node entries, is addressed by DHCP, which is required for the full-stack automated deployment.

For the other DNS requirements, the OpenShift cluster will take advantage of mDNS, deployed to the control plane, when using full-stack automation with vSphere, Red Hat Virtualization, or Red Hat OpenStack Platform. This allows the nodes, and the services hosted on them, to publish DNS entries for each of their functions. When a service needs to find that function, it uses the mDNS multicast address to request the hostname or IP and waits for the mDNS responders on each of the nodes to respond with the data.

With the exception of the two entries noted above (ingress and API), this approach removes the DNS configuration entirely for etcd entries and the other services, instead making it an internally managed resource for the OpenShift cluster.
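For readers curious what an mDNS request looks like on the wire, the sketch below builds a one-question query for an A record using only the standard library. The multicast group and port are the standard IPv4 mDNS values from RFC 6762. The hostname `master-0.local` is a placeholder, not a name the cluster is known to publish, and this is not the cluster's own resolver code.

```python
import struct

MDNS_GROUP = "224.0.0.251"  # IPv4 mDNS multicast address (RFC 6762)
MDNS_PORT = 5353


def encode_name(hostname):
    """Encode a hostname as length-prefixed DNS labels ending in a zero byte."""
    out = b""
    for label in hostname.rstrip(".").split("."):
        raw = label.encode("ascii")
        if not 0 < len(raw) <= 63:
            raise ValueError(f"invalid DNS label: {label!r}")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"


def build_query(hostname):
    """Build a one-question mDNS query for the A record of hostname."""
    # Header fields: id, flags, question count, answer/authority/additional counts.
    header = struct.pack("!6H", 0, 0, 1, 0, 0, 0)
    # QTYPE=1 (A record), QCLASS=1 (IN).
    question = encode_name(hostname) + struct.pack("!2H", 1, 1)
    return header + question


if __name__ == "__main__":
    packet = build_query("master-0.local")  # placeholder hostname
    print(f"{len(packet)} bytes to send to {MDNS_GROUP}:{MDNS_PORT}")
```

Sending the packet is a single `sendto((MDNS_GROUP, MDNS_PORT))` on a UDP socket, after which the responders on the network answer with the matching records.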

OpenShift the Easy Way

With this addition, you can now deploy OpenShift clusters to VMware vSphere, Red Hat OpenStack Platform, and Red Hat Virtualization on-premises infrastructures using the same integrated automation for node deployment and management as with the hyperscale providers. Whether you need a small cluster for dev and test operations, or a cluster that is constantly scaling up and down to accommodate fluctuations in workload, the full-stack automation process, combined with features like MachineConfigPools and the OpenShift Router, makes deploying, managing, and scaling quick, easy, and repeatable.
