Introduction to the Compliance Operator

In part one of this series, we compared the Compliance Operator, Red Hat Advanced Cluster Management for Kubernetes (RHACM), and Open Policy Agent (OPA) to understand how the Compliance Operator secures and hardens clusters. This article covers the basics of the Compliance Operator’s architecture: the custom resources that represent security policy content, configuration, and results, how those resources relate to each other, and how the scanning process works, so that you are prepared to run scans. Let’s dive in.

Compliance as Code

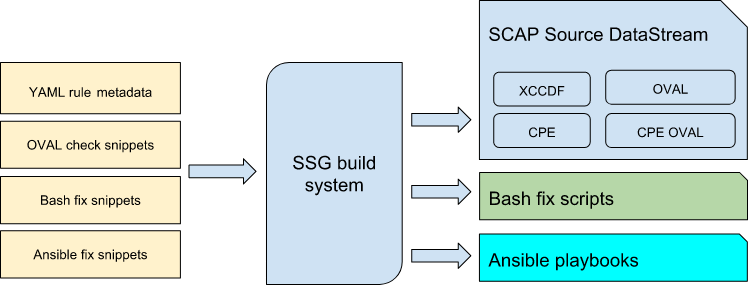

How does scanning run, and where do the security policies come from in the Compliance Operator? The Compliance Operator uses OpenSCAP, a scanner that is well established in the RHEL ecosystem, to scan the OpenShift cluster and the machines (nodes) running the cluster. The scan uses community-developed compliance content from the ComplianceAsCode/content project. That project produces security policy content for various platforms, such as Debian, Fedora, RHEL, and Ubuntu, and for security standards such as the CIS Benchmark, HIPAA, and PCI-DSS. The content expresses compliance benchmarks, such as appropriate file owners or permissions, and it is distributed as a container image, independent of the operator, so that the content can be updated rapidly. The content is made up of SCAP components: Extensible Configuration Checklist Description Format (XCCDF) for the security checklists, Open Vulnerability and Assessment Language (OVAL) for assertions about the state of the system, and DataStream for packaging the other components into a single file for distribution. All of the components are XML based.

Next, let’s see what custom resources are used in the operator, because each operator provides its own custom resource definitions, and it is important to understand the correlation between them.

Custom Resources for Profiles

The operator manages custom resource objects for both scan configuration and scan results, so you need to understand which objects you have to create yourself when you run a scan.

When the operator is deployed, it creates two ProfileBundle objects named ocp4 and rhcos4 by default: one for the cluster-level scan and another for the node-level scan. The ProfileBundle contains a reference to the container image with the content and a content file name, which makes it easier for users to discover what profiles are shipped in the image. For instance, the contentFile value in the ocp4 ProfileBundle is ssg-ocp4-ds.xml, the file that contains the platform checks, given as a path relative to the root of the image’s filesystem, while the contentImage value refers to the image at quay.io/complianceascode/ocp4:latest, as follows.

apiVersion: compliance.openshift.io/v1alpha1
kind: ProfileBundle
metadata:
  name: ocp4
  namespace: openshift-compliance
spec:
  contentFile: ssg-ocp4-ds.xml
  contentImage: quay.io/complianceascode/ocp4:latest
status:
  dataStreamStatus: VALID

Next, when a ProfileBundle object is created, the operator parses it and creates Profile objects. A profile is a set of rules that validate a specific compliance target, and each rule corresponds to a single check, so the Profile object lists a large number of rules in its rules section, as follows. Most of the rules are for RHCOS, and no rules for RHEL appear in the profile, because the operator is only available for RHCOS.

apiVersion: compliance.openshift.io/v1alpha1
description: |-
  This compliance profile reflects the core set of Moderate-Impact Baseline
  configuration settings for deployment of Red Hat Enterprise
  Linux CoreOS into U.S. Defense, Intelligence, and Civilian agencies.
  <...>
id: xccdf_org.ssgproject.content_profile_moderate
kind: Profile
metadata:
  annotations:
    compliance.openshift.io/product: redhat_enterprise_linux_coreos_4
    compliance.openshift.io/product-type: Node
  labels:
    compliance.openshift.io/profile-bundle: rhcos4
  <...>
  name: rhcos4-moderate
  namespace: openshift-compliance
  ownerReferences:
  - apiVersion: compliance.openshift.io/v1alpha1
    blockOwnerDeletion: true
    controller: true
    kind: ProfileBundle
    name: rhcos4
    <...>
rules:
- rhcos4-account-disable-post-pw-expiration
- rhcos4-accounts-no-uid-except-zero
- rhcos4-audit-rules-dac-modification-chmod
<...>
title: NIST 800-53 Moderate-Impact Baseline for Red Hat Enterprise Linux CoreOS

Rule objects are also exposed as API objects. They mostly contain informational data, which lets you audit what will be checked and how a failure could be fixed. Below is the Rule object for an auditd audit rule. Its fix is expressed as a MachineConfig object that writes the rule file, because the Machine Config Operator (MCO) is what applies persistent changes to the operating system.

apiVersion: compliance.openshift.io/v1alpha1
severity: medium
title: Record Events that Modify the System's Discretionary Access Controls - chmod
id: xccdf_org.ssgproject.content_rule_audit_rules_dac_modification_chmod
kind: Rule
availableFixes:
- disruption: medium
  fixObject:
    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    spec:
      config:
        ignition:
          version: 3.1.0
        storage:
          files:
          - contents:
              source: <...>
            mode: 420
            path: /etc/audit/rules.d/75-chmod_dac_modification.rules
<...>
metadata:
  name: rhcos4-audit-rules-dac-modification-chmod
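
The object names in these examples appear to follow a simple pattern: the ProfileBundle name, followed by the XCCDF rule identifier with its xccdf_org.ssgproject.content_rule_ prefix removed and underscores replaced by hyphens. The Python sketch below reproduces that mapping for the rule shown above. The function name rule_object_name is hypothetical, and the pattern is inferred from the examples in this article rather than taken from a documented guarantee of the operator.

```python
# Prefix that XCCDF rule identifiers carry in the examples in this article.
XCCDF_RULE_PREFIX = "xccdf_org.ssgproject.content_rule_"


def rule_object_name(bundle: str, xccdf_id: str) -> str:
    """Derive the Rule object name seen in the examples from an XCCDF rule id.

    Assumption: name = <bundle>-<id without prefix, '_' replaced by '-'>.
    """
    if not xccdf_id.startswith(XCCDF_RULE_PREFIX):
        raise ValueError(f"not an XCCDF rule id: {xccdf_id}")
    short_id = xccdf_id[len(XCCDF_RULE_PREFIX):]
    return f"{bundle}-{short_id.replace('_', '-')}"


name = rule_object_name(
    "rhcos4",
    "xccdf_org.ssgproject.content_rule_audit_rules_dac_modification_chmod",
)
print(name)
```

Going the other way (from object name back to XCCDF identifier) is ambiguous if a bundle name itself contains hyphens, which is one reason to treat this as a reading aid only.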

While the operator comes with ready-to-use profiles, the TailoredProfile object is useful to customize profiles by enabling or disabling rules to fit different requirements or needs in the organization. For instance, the TailoredProfile object below disables two rules about file permission and password expiration in the disableRules value.

apiVersion: compliance.openshift.io/v1alpha1
kind: TailoredProfile
metadata:
  name: rhcos4-with-usb
spec:
  extends: nist-moderate-modified
  title: My modified NIST moderate profile
  disableRules:
  - name: rhcos4-file-permissions-node-config
    rationale: This breaks X application.
  - name: rhcos4-account-disable-post-pw-expiration
    rationale: No need to check this as it comes from the IdP
  setValues:
  - name: rhcos4-var-selinux-state
    rationale: Organizational requirements
    value: permissive

Some of you may be curious why the security content is shipped as a container image that the ProfileBundle object refers to, rather than being read directly from the content repository or delivered some other way. To be scanned, SCAP content has to be compiled into a single, very large XML file called a DataStream, which bundles XCCDF, OVAL, and other SCAP-related files. Asking users to create lower-level API objects that point to the image and an XML file inside it, and then to start a scan by naming a compliance profile with an OpenSCAP-specific XCCDF identifier, would not be user friendly; it would be much like running the oscap xccdf eval --profile <selected_profile> <datastream_file> command by hand. Instead, the ProfileBundle object wraps the container image with the content, and the operator parses it into API objects that represent the data stream file and its elements, such as profiles, rules, and variables. You refer to these objects by name, so the API acts as an abstraction layer that keeps OpenSCAP terminology out of the way.

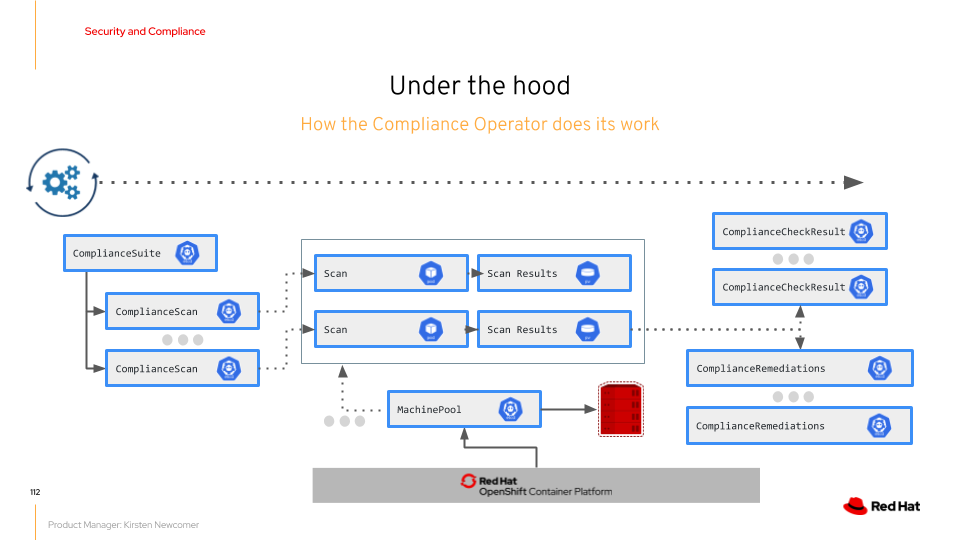

Custom Resources for Scan

The ScanSetting and ScanSettingBinding objects are straightforward: together they define which profiles to use and when to scan, and creating them launches the scanner pods. (More precisely, they generate the ComplianceSuite and ComplianceScan objects, which in turn create the scanner pods.) Below is an example of a ScanSettingBinding object that refers to two profiles in its profiles section: the rhcos4-with-usb tailored profile and the ocp4-moderate profile. The settingsRef value is a reference to a ScanSetting object, in this case my-companys-constraints.

apiVersion: compliance.openshift.io/v1alpha1
kind: ScanSettingBinding
metadata:
  name: my-companys-compliance-requirements
profiles:
  # Node checks
  - name: rhcos4-with-usb
    kind: TailoredProfile
    apiGroup: compliance.openshift.io/v1alpha1
  # Cluster checks
  - name: ocp4-moderate
    kind: Profile
    apiGroup: compliance.openshift.io/v1alpha1
settingsRef:
  name: my-companys-constraints
  kind: ScanSetting
  apiGroup: compliance.openshift.io/v1alpha1

The following ScanSetting object mainly contains the schedule, in the schedule value, and the target machines for the node-level scan, given in the roles value as node-role.kubernetes.io label values. Here the scan runs every day at 1:00 a.m., and the node-level scan runs on both the control plane machines (master) and the worker machines (worker). More importantly, the autoApplyRemediations value specifies whether fixes for failed checks are applied automatically; it is disabled in the example below. To apply all available fixes for a set of rules automatically, set autoApplyRemediations to true.

apiVersion: compliance.openshift.io/v1alpha1
kind: ScanSetting
metadata:
  name: my-companys-constraints
autoApplyRemediations: false
schedule: "0 1 * * *"
rawResultStorage:
  size: "2Gi"
  rotation: 10
roles:
  - worker
  - master
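
The schedule value uses the standard five-field cron layout. As a reading aid, the Python sketch below splits the expression from the example into named fields; the helper parse_cron and the field names are illustrative choices, not part of the operator.

```python
# The five positional fields of a standard cron expression, in order.
CRON_FIELDS = ("minute", "hour", "day_of_month", "month", "day_of_week")


def parse_cron(expression: str) -> dict:
    """Split a five-field cron expression into a dict of named fields."""
    parts = expression.split()
    if len(parts) != len(CRON_FIELDS):
        raise ValueError(f"expected {len(CRON_FIELDS)} fields, got {len(parts)}")
    return dict(zip(CRON_FIELDS, parts))


schedule = parse_cron("0 1 * * *")
# minute 0 of hour 1, with wildcards for the date fields: every day at 01:00.
print(schedule)
```

The sketch only names the fields; it does not validate ranges or expand step and list syntax.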

While it is recommended to use the ScanSetting and ScanSettingBinding objects, which generate the ComplianceSuite object for you, you need to create the ComplianceSuite object directly in the following situations. See Performing advanced Compliance Operator tasks - Compliance Operator | Security and compliance | OpenShift Container Platform 4.6 for further details.

- Scanning only a single rule, for testing or for debugging with increased OpenSCAP scanner verbosity. Debug mode is verbose, so limiting the scan to one rule keeps the amount of output manageable.

- Providing a custom nodeSelector. For a remediation to be applicable, the nodeSelector must match a pool.

- Pointing to a custom tailoring file with the TailoringConfigMap value, if your organization has been using OpenSCAP previously and wants to reuse an existing XCCDF tailoring file.

Below is an example of the ComplianceSuite object that scans worker machines with only a single rule:

apiVersion: compliance.openshift.io/v1alpha1
kind: ComplianceSuite
metadata:
  name: workers-compliancesuite
spec:
  scans:
    - name: workers-scan
      profile: xccdf_org.ssgproject.content_profile_moderate
      content: ssg-rhcos4-ds.xml
      contentImage: quay.io/complianceascode/ocp4:latest
      debug: true
      rule: xccdf_org.ssgproject.content_rule_no_direct_root_logins
      nodeSelector:
        node-role.kubernetes.io/worker: ""
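
The nodeSelector in this example follows the usual Kubernetes rule: a node is selected only if every key and value in the selector is present in the node's labels. The Python sketch below illustrates that rule with the selector from the example. The function name and the sample node labels (including the hostname) are made up for illustration.

```python
def selector_matches(node_selector: dict, node_labels: dict) -> bool:
    """Return True if every selector key/value pair appears in the node labels."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


# Selector taken from the ComplianceSuite example above.
worker_selector = {"node-role.kubernetes.io/worker": ""}

# Hypothetical label sets for two nodes.
worker_labels = {
    "node-role.kubernetes.io/worker": "",
    "kubernetes.io/hostname": "worker-0",
}
master_labels = {"node-role.kubernetes.io/master": ""}

print(selector_matches(worker_selector, worker_labels))
print(selector_matches(worker_selector, master_labels))
```

Note that the role label has an empty string as its value, so the match depends on the key being present with exactly that empty value.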

Custom Resources for Result and Remediation

Once the scan has finished, how do you see the result? The ComplianceSuite object keeps track of each scan; it is in effect a bundle of ComplianceScan objects, each of which represents a single scan. Most of the values in the spec section, such as schedule and scans, are derived from the ScanSetting and ScanSettingBinding objects. The status section shows the overall result: the Phase value indicates whether the entire suite has completed, the Result value indicates whether the suite as a whole passed, and the scanStatuses value records the state of each scan, such as the node-level and cluster-level scans.

apiVersion: compliance.openshift.io/v1alpha1
kind: ComplianceSuite
metadata:
  name: fedramp-moderate
spec:
  autoApplyRemediations: false
  schedule: "0 1 * * *"
  scans:
    - name: workers-scan
      scanType: Node
      profile: xccdf_org.ssgproject.content_profile_moderate
      content: ssg-rhcos4-ds.xml
      contentImage: quay.io/complianceascode/ocp4:latest
      rule: "xccdf_org.ssgproject.content_rule_no_netrc_files"
      nodeSelector:
        node-role.kubernetes.io/worker: ""
status:
  Phase: DONE
  Result: NON-COMPLIANT
  scanStatuses:
  - name: workers-scan
    phase: DONE
    result: NON-COMPLIANT

Because a ComplianceSuite object bundles ComplianceScan objects, each of which describes a single scan, the attributes of a ComplianceScan are the same as those in the scans section of the suite. The ComplianceScan object is comparable to a Pod in Kubernetes: you normally do not create it directly. It is created automatically when the ScanSetting and ScanSettingBinding objects are created. There are cases when you want to create one directly to run only a single scan; those will be covered later. Below is an example ComplianceScan object; after it, we look at the details of the result and the remediation:

apiVersion: compliance.openshift.io/v1alpha1
kind: ComplianceScan
metadata:
  name: worker-scan
spec:
  scanType: Node
  profile: xccdf_org.ssgproject.content_profile_moderate
  content: ssg-ocp4-ds.xml
  contentImage: quay.io/complianceascode/ocp4:latest
  rule: "xccdf_org.ssgproject.content_rule_no_netrc_files"
  nodeSelector:
    node-role.kubernetes.io/worker: ""
status:
  phase: DONE
  result: NON-COMPLIANT

The ComplianceCheckResult object represents the state for a specific rule referenced in the Rule object, while the ComplianceRemediation object indicates the state of a possible fix for the rule. When running a scan with a specific profile for the node-level or the cluster-level scans, several rules in the profiles will be validated, so the ComplianceCheckResult object is created for each Rule object. For example, the following object is the result of a rule to disable SSH login as the root user:

apiVersion: compliance.openshift.io/v1alpha1
kind: ComplianceCheckResult
metadata:
  labels:
    compliance.openshift.io/check-severity: medium
    compliance.openshift.io/check-status: FAIL
    compliance.openshift.io/suite: example-compliancesuite
    compliance.openshift.io/scan-name: workers-scan
  name: workers-scan-no-direct-root-logins
  namespace: openshift-compliance
  ownerReferences:
  - apiVersion: compliance.openshift.io/v1alpha1
    blockOwnerDeletion: true
    controller: true
    kind: ComplianceScan
    name: workers-scan
description: |-
  Direct root Logins Not Allowed
  Disabling direct root logins ensures proper accountability and multifactor
  authentication to privileged accounts. Users will first login, then escalate
  to privileged (root) access via su / sudo. This is required for FISMA Low
  and FISMA Moderate systems.
id: xccdf_org.ssgproject.content_rule_no_direct_root_logins
severity: medium
status: FAIL
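
Because the check status, suite, and scan name are exposed as labels, results can be filtered with an ordinary Kubernetes label selector. The Python sketch below builds such a selector from the labels in the example and prints an oc get command that uses it. The helper name is hypothetical, and the resource name compliancecheckresults is assumed to be the plural form of the ComplianceCheckResult kind.

```python
def label_selector(labels: dict) -> str:
    """Join label key/value pairs into an equality-based selector string."""
    return ",".join(f"{key}={value}" for key, value in labels.items())


# Labels taken from the ComplianceCheckResult example above.
selector = label_selector({
    "compliance.openshift.io/suite": "example-compliancesuite",
    "compliance.openshift.io/check-status": "FAIL",
})

# Assumed resource name; adjust if your cluster reports a different plural.
command = f"oc get compliancecheckresults -n openshift-compliance -l '{selector}'"
print(command)
```

Listing only the failed checks of one suite this way is usually a more convenient starting point than reading every result object.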

The ComplianceRemediation object, on the other hand, shows how to fix a failed rule; the fix is in the spec section. The apply value indicates whether the fix should be applied, and the object value contains the fix itself, here a MachineConfig object that writes a file under /etc/security/limits.d. Some fixes modify Kubernetes objects, while others, especially those for node-level scanning rules, are MachineConfig objects. To keep the machines from restarting once per remediation, the MachineConfig remediations are merged into a single object:

apiVersion: compliance.openshift.io/v1alpha1
kind: ComplianceRemediation
metadata:
  labels:
    compliance.openshift.io/suite: example-compliancesuite
    compliance.openshift.io/scan-name: workers-scan
    machineconfiguration.openshift.io/role: worker
  name: workers-scan-disable-users-coredumps
  namespace: openshift-compliance
  ownerReferences:
  - apiVersion: compliance.openshift.io/v1alpha1
    blockOwnerDeletion: true
    controller: true
    kind: ComplianceCheckResult
    name: workers-scan-disable-users-coredumps
    uid: <UID>
spec:
  apply: false
  object:
    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    spec:
      config:
        ignition:
          version: 2.2.0
        storage:
          files:
          - contents:
              source: data:,%2A%20%20%20%20%20hard%20%20%20core%20%20%20%200
            filesystem: root
            mode: 420
            path: /etc/security/limits.d/75-disable_users_coredumps.conf
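
Two values in this MachineConfig are easier to review once decoded: the source field is a percent-encoded data URL holding the file content, and mode is written in decimal rather than the octal notation familiar from chmod. The Python sketch below decodes both using only the standard library; the helper name decode_data_url is hypothetical, and it handles only the plain data:, form used in this example.

```python
from urllib.parse import unquote


def decode_data_url(source: str) -> str:
    """Decode the payload of a plain 'data:,' URL (no media type, no base64)."""
    prefix = "data:,"
    if not source.startswith(prefix):
        raise ValueError("expected a plain 'data:,' URL")
    return unquote(source[len(prefix):])


# Values copied from the ComplianceRemediation example above.
content = decode_data_url("data:,%2A%20%20%20%20%20hard%20%20%20core%20%20%20%200")
mode_octal = oct(420)

print(repr(content))  # a limits.conf entry: '*', 'hard', 'core', '0'
print(mode_octal)     # the decimal mode 420 expressed in octal
```

Decoded, the remediation writes a single limits entry that sets the hard core-dump limit to 0 for all users, in a file with mode 0644.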

Last but not least, the raw results are stored in a persistent volume (PV), and you can retrieve them with the oc cp command. They are kept in a volume because OpenSCAP writes its results in Asset Reporting Format (ARF), a very large XML file that includes all the details about the checks, profiles, and other information. So how does the actual scan begin? The ComplianceScan object starts scanner pods that run an OpenSCAP scan with the profile referenced in the ScanSettingBinding object, and the scan generates two kinds of results. One is summary data, such as pass or fail, exposed as a ConfigMap; the other is the full result in ARF, which ends up in the PV. You can see details at Retrieving Compliance Operator Raw results - Compliance Operator | Security and Compliance | OpenShift Container Platform 4.6. There is not yet a feature to publish the raw results automatically when a scan finishes, but an issue related to this has been filed.

Takeaway

We have looked at how scans run in the Compliance Operator and at the related custom resources for profiles, scans, results, and remediations. To wrap up, here are today’s takeaways:

- So far, the profiles are limited to checks for RHCOS (node level); cluster-level remediations will be implemented as a next step.

- The operator is only available for RHCOS; RHEL is not supported.

- Compliance profiles for the CIS OpenShift Benchmark, FISMA Moderate, PCI-DSS, and more are scheduled in the Compliance Profiles Roadmap.

While the operator is GA in 4.6, the security policies are still under development. So far only a limited set of checks for RHCOS is implemented, and additional compliance checks will be delivered roughly every two months. For production use, content coverage for security standards such as the CIS OpenShift Benchmark, FISMA Moderate, and PCI-DSS, as laid out in the Compliance Profiles Roadmap, will be the key factor.

Reference

- DevConf.US 2020 Compliance Operator (Slide)

- OpenShift Compliance & Security Operators (Slide)

- Automating Openshift Compliance Scanning (Recording)

- compliance-operator - The Custom Resource Definitions.

- Understanding the Compliance Operator - Compliance Operator | Security and compliance | OpenShift Container Platform 4.6
