Introduction

Red Hat uses the subscription model to allow customers to download Red Hat tested and certified enterprise software. This way customers are supplied with the latest patches, bug fixes, updates and upgrades.

A Red Hat subscription can also be leveraged when building container images; this blog calls such a build an entitled build. Customers want all the advantages of a Red Hat subscription to be available in RHEL-based containers as well, whether they are based on RHEL 7/8 or UBI 7/8.

UBI contains only a subset of the packages of a RHEL distribution. To get all the packages needed to build a sophisticated container image, the build needs access to all repositories, and this is where entitled builds can help.

Beware that entitled builds, which are using software beyond the UBI base set, are not publicly redistributable.

Entitled Builds on non-RHEL hosts

Entitled builds can be used on any non-RHEL host as long as the prerequisites are fulfilled in the container. With a UBI-based container, the only missing part is the subscription certificate.

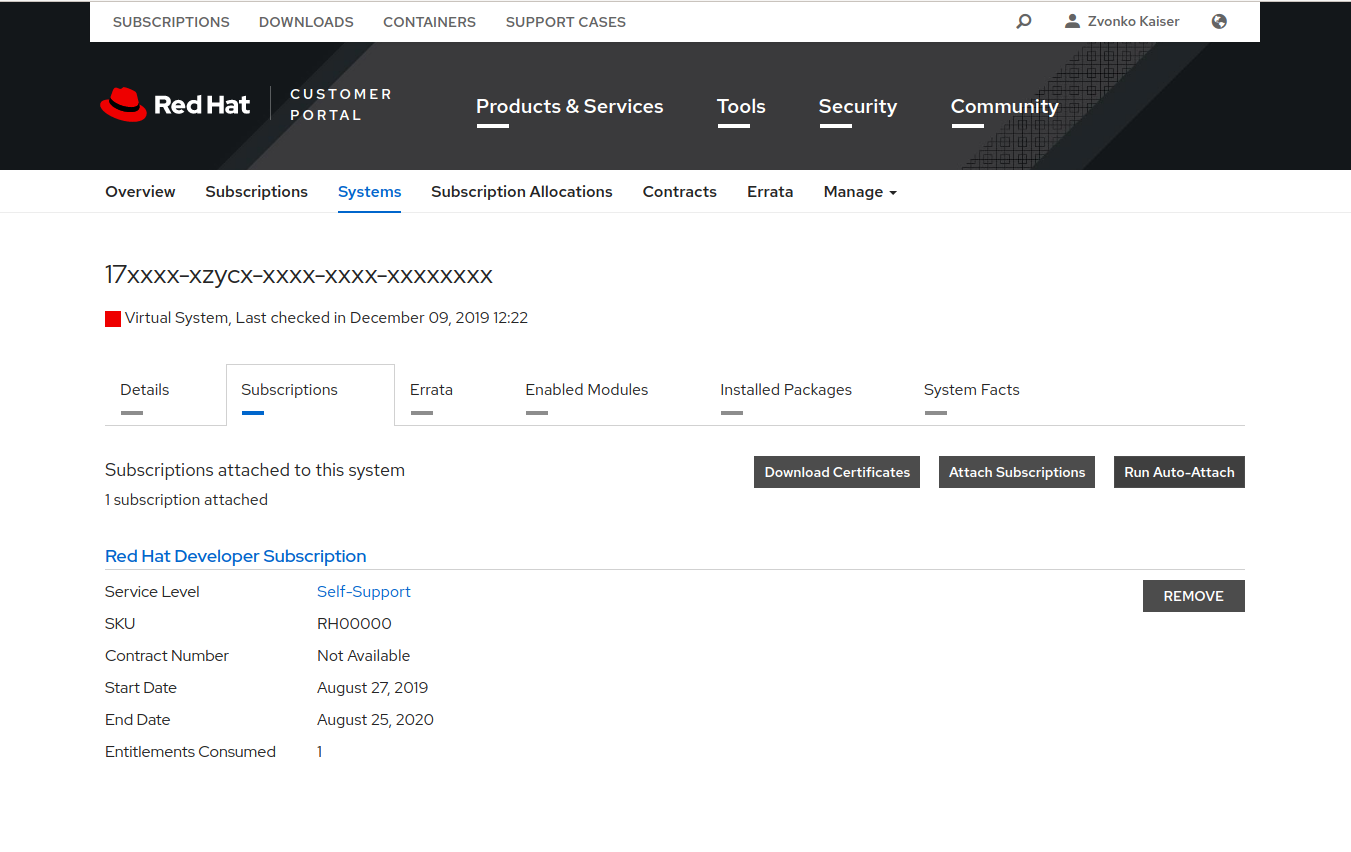

The Red Hat Customer Portal lets customers manage their subscriptions and access downloads and knowledge for products. Accessing the Customer Portal requires credentials. Once logged in, navigate to the subscription page to download the certificates needed for the next steps.

There are different flavours of subscriptions available: (1) paid subscriptions, (2) developer subscriptions and (3) NFR (not-for-resale) subscriptions for partners.

Download the subscription certificate from the Systems tab. Click Download Certificates and save the .zip file on the host where you want to build the entitled container image.

Extract all files and place the *.pem file at /root/entitlement/{ID}.pem.
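The extraction step can be sketched as a small shell helper. This is a minimal sketch, not the author's procedure: the function name `stage_pem_files` and the directory names in the usage comment are hypothetical, and it assumes the downloaded archive has already been unpacked into a directory tree containing one or more *.pem files.

```shell
# Hypothetical helper: copy every *.pem file found below an already
# extracted directory into a target directory, keeping the file names.
stage_pem_files() {
    src_dir=$1
    dest_dir=$2
    mkdir -p "$dest_dir"
    # Search nested directories too, since the layout of the archive is
    # not assumed here.
    find "$src_dir" -type f -name '*.pem' -exec cp {} "$dest_dir"/ \;
}

# Usage (paths are assumptions):
#   stage_pem_files ./extracted /root/entitlement
```

The helper only copies files; it does not rename them, so the {ID}.pem name from the download is preserved.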

Install podman according to your distribution's documentation. Here, we are using Ubuntu as the prime example.

# add-apt-repository -y ppa:projectatomic/ppa

# apt -y install podman

# mkdir -p /etc/containers

# curl https://raw.githubusercontent.com/projectatomic/registries/master/registries.fedora -o /etc/containers/registries.conf

# curl https://raw.githubusercontent.com/containers/skopeo/master/default-policy.json -o /etc/containers/policy.json

The next step is to run the UBI container and mount the *.pem file into the right directories. The *.pem file consists of several parts (private key, signature, …), but it can be used as a single file. As an example, we use dnf to search for kernel-devel packages, which illustrates that we can install packages that are not in UBI by default.

# podman run -ti --mount type=bind,source=/root/entitlement/{ID}.pem,target=/etc/pki/entitlement/entitlement.pem --mount type=bind,source=/root/entitlement/{ID}.pem,target=/etc/pki/entitlement/entitlement-key.pem registry.access.redhat.com/ubi8:latest bash -c "dnf search kernel-devel --showduplicates | tail -n2"

kernel-devel-4.18.0-147.0.3.el8_1.x86_64 : Development package for building kernel modules to match the kernel
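Because the same *.pem file is mounted as both entitlement.pem and entitlement-key.pem, it can be useful to see which parts the file actually contains. The following is a minimal sketch; the helper name `pem_sections` is hypothetical, and it only assumes that the parts are delimited by the usual `-----BEGIN …-----` marker lines.

```shell
# Hypothetical helper: print the name of every PEM section in a file,
# one per line, in the order they appear.
pem_sections() {
    # Keep only lines of the form "-----BEGIN <NAME>-----" and print <NAME>.
    sed -n 's/^-----BEGIN \(.*\)-----$/\1/p' "$1"
}

# Usage:
#   pem_sections /root/entitlement/{ID}.pem
```

If the output lists both a certificate section and a private key section, the single file can serve both mount targets.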

Entitled Builds on OpenShift with a Pod in a Namespace

Having downloaded the subscription certificate in the previous step we can use this file to populate a secret that can be used later by a Pod to get the entitlement in the container.

Let's first create a secret that is used in the Pod:

$ oc create secret generic entitlement --from-file=entitlement.pem={ID}.pem --from-file=entitlement-key.pem={ID}.pem

By default, a RHCOS or RHEL7 node is not entitled. CRI-O automounts the certificates from the host into containers. Since we do not want to place the subscription on the host, and the files needed are already in UBI, we're going to disable the automount with a MachineConfig.

file: 0000-disable-secret-automount.yaml

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: worker
      name: 50-disable-secret-automount
    spec:
      config:
        ignition:
          version: 2.2.0
        storage:
          files:
          - contents:
              source: data:text/plain;charset=utf-8;base64,Cg==
            filesystem: root
            mode: 0644
            path: /etc/containers/mounts.conf
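The `source` field of this MachineConfig is a base64 data URL. Decoding it shows what ends up in /etc/containers/mounts.conf: a single newline, i.e. an effectively empty file, which appears to be how the list of automounted host paths is cleared. The helper name `decode_data_url` is hypothetical, and `base64 -d` assumes GNU coreutils.

```shell
# Hypothetical helper: decode the payload of a base64 data URL,
# i.e. everything after the "base64," marker.
decode_data_url() {
    printf '%s' "${1#*base64,}" | base64 -d
}

# Decode the value used in the MachineConfig; od -c makes the raw
# bytes visible (a lone newline is shown as \n).
decode_data_url 'data:text/plain;charset=utf-8;base64,Cg==' | od -An -c
```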

To verify that the settings are applied, one can use oc debug node to spin up a debug container on the specified node. It is also possible to chroot /host and execute host binaries.

$ oc debug node/<worker>

Starting pod/ip-xx-x-xxx-xx-us-west-2computeinternal-debug ...

To use host binaries, run `chroot /host`

Pod IP: 10.x.xxx.xx

If you don't see a command prompt, try pressing enter.

sh-4.2# chroot /host

sh-4.4# ls -l /etc/containers/mounts.conf

sh-4.4#

The next step is to create the entitled Pod and use the secret previously created.

file: 0001-entitled-pod.yaml

    apiVersion: v1
    kind: Pod
    metadata:
      name: entitled-build-pod
    spec:
      containers:
        - name: entitled-build
          image: registry.access.redhat.com/ubi8:latest
          command: [ "/bin/sh", "-c", "dnf search kernel-devel --showduplicates" ]
          volumeMounts:
            - name: secret-entitlement
              mountPath: /etc/pki/entitlement
              readOnly: true
      volumes:
        - name: secret-entitlement
          secret:
            secretName: entitlement
      restartPolicy: Never

To verify that the Pod has access to entitled software packages, check its logs for the kernel-devel packages that the dnf search found.

$ oc logs entitled-build-pod |tail -n2

kernel-devel-4.18.0-147.0.3.el8_1.x86_64 : Development package for ...

Entitled Builds on OpenShift with a BuildConfig in a Namespace

One can reuse the secret that was created in the first step to enable entitled builds with a BuildConfig. Again dnf will be used to demonstrate that we can access kernel-devel packages from entitled repositories.

The following file contains an example BuildConfig for entitled builds: 0002-entitled-buildconfig.yaml.
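Since the referenced file is not reproduced here, the following is only a rough sketch of what such a BuildConfig could look like; it is not the author's manifest. It assumes the `entitlement` Secret from the previous section, an inline Dockerfile, and an existing ImageStream named `entitled-buildconfig`. The helper name `write_buildconfig_sketch` and the `etc-pki-entitlement` directory name are hypothetical.

```shell
# Hypothetical helper: write an illustrative BuildConfig manifest to the
# given path. The manifest is an assumption, not the original file.
write_buildconfig_sketch() {
    cat > "$1" <<'EOF'
apiVersion: build.openshift.io/v1
kind: BuildConfig
metadata:
  name: entitled-buildconfig
spec:
  source:
    type: Dockerfile
    dockerfile: |
      FROM registry.access.redhat.com/ubi8:latest
      # The secret is injected into the build context under destinationDir.
      COPY ./etc-pki-entitlement /etc/pki/entitlement
      RUN dnf search kernel-devel --showduplicates
    secrets:
      - secret:
          name: entitlement
        destinationDir: etc-pki-entitlement
  strategy:
    type: Docker
    dockerStrategy: {}
  output:
    to:
      kind: ImageStreamTag
      name: entitled-buildconfig:latest
  triggers:
    - type: ConfigChange
EOF
}

# Usage (file name is an assumption):
#   write_buildconfig_sketch entitled-buildconfig-sketch.yaml
#   oc create -f entitled-buildconfig-sketch.yaml
```

The ConfigChange trigger is included so that a first build starts as soon as the BuildConfig is created.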

Let's get the logs from the build container that runs the build and pushes the image to the internal registry and ImageStream.

$ oc logs entitled-buildconfig-1-build | tail -n2

kernel-devel-4.18.0-147.0.3.el8_1.x86_64 : Development package for ..

Cluster-Wide Entitled Builds on OpenShift

So far, we have only enabled entitled builds in a specific Namespace, because the Secret we created in the first step is namespaced. If entitled builds are needed in every namespace of the cluster, we have to take another approach.

Assuming we have a fresh cluster or that one has reverted the changes to the nodes and deleted all secrets, the following steps are needed to enable cluster-wide entitled builds.

RHCOS does not have the subscription-manager package installed, which means we're missing /etc/rhsm/rhsm.conf. This file is publicly available and we can get it from, e.g., a UBI image.

The first step is to create the needed files on the nodes. We omit the creation of the Secret here because we want the entitlement cluster-wide and are laying down the files on all workers.

To create the actual manifest, use this template, which has rhsm.conf already encoded: 0003-cluster-wide-machineconfigs.yaml.template.

One can now replace the BASE64_ENCODED_PEM_FILE with the actual {ID}.pem content and create the MachineConfigs on the cluster.

$ sed "s|BASE64_ENCODED_PEM_FILE|$(base64 -w 0 {ID}.pem)|g" 0003-cluster-wide-machineconfigs.yaml.template > 0003-cluster-wide-machineconfigs.yaml

$ oc create -f 0003-cluster-wide-machineconfigs.yaml
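One caveat when substituting base64 text with sed: the base64 alphabet includes `/`, so a `/`-delimited `s` expression can break once the encoded certificate contains that character. A minimal sketch of the substitution with a delimiter that cannot occur in base64 output follows; the helper name `render_template` is hypothetical, and `base64 -w 0` assumes GNU coreutils.

```shell
# Hypothetical helper: replace a placeholder in a template with the
# base64 encoding of a file and print the result to stdout.
render_template() {
    template=$1
    placeholder=$2
    payload_file=$3
    # -w 0 disables line wrapping so the value stays on one line.
    encoded=$(base64 -w 0 "$payload_file")
    # '|' is not part of the base64 alphabet, so it is a safe delimiter.
    sed "s|$placeholder|$encoded|g" "$template"
}

# Usage:
#   render_template 0003-cluster-wide-machineconfigs.yaml.template \
#       BASE64_ENCODED_PEM_FILE {ID}.pem > 0003-cluster-wide-machineconfigs.yaml
```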

Since we do not need the Secret anymore, our Pod YAML is really simple. One can use the following file to create the simplified Pod: 0004-cluster-wide-entitled-pod.yaml. Let's verify our new configuration by looking at the logs again. First create the Pod:

$ oc create -f 0004-cluster-wide-entitled-pod.yaml

$ oc logs cluster-entitled-build-pod | grep kernel-devel | tail -n 1

kernel-devel-4.18.0-147.0.3.el8_1.x86_64 : Development package for ...

Any pod based on RHEL can now execute entitled builds.

Building Entitled DriverContainers

DriverContainers are used more and more in cloud-native environments, especially when run on pure container operating systems to deliver hardware drivers to the host.

DriverContainers are not only a delivery mechanism. They are far more than that. They enable the complete user and kernel stack. They configure the hardware, start daemons that are essential for hardware to work and perform other important tasks.

One of the most important benefits is that we have no issues with SELinux, because we are interacting with the same SELinux context (container_file_t). Accessing host devices, libraries, or binaries from a container breaks the containment, as we have to allow containers to access host labels (that's why we have an SELinux policy for NVIDIA). With the DriverContainer, this policy is obsolete.

DriverContainers work on RHCOS, RHEL 7 and 8, and Fedora (on a laptop), and they would work on any other distribution that is able to launch containers, assuming there are builds for it.

With DriverContainers the host always stays "clean": there are no clashes between different library versions or binaries. Prototyping is far easier, and updates are done by pulling a new container. Loading and unloading is done by the DriverContainer, with checks on /proc, /sys and other files to make sure that all traces are removed.

To demonstrate entitled DriverContainer builds, we're going to use NVIDIA's DriverContainer to build GPU drivers for a cluster.

The BuildConfig: 0005-nvidia-driver-container.yaml uses as input a git repository with a Dockerfile. Since one has enabled the cluster-wide entitlement the BuildConfig can use any RHEL repository to build the DriverContainer.

The DriverContainer is exclusive to the namespace where it was built and is pushed to the internal registry, which is also namespaced. Create the BuildConfig:

$ oc create -f 0005-nvidia-driver-container.yaml

This creates a build Pod that one can use to monitor the progress of the build.

$ oc get pod

NAME READY STATUS RESTARTS AGE

nvidia-driver-internal-1-build 0/1 Init:0/2 0 2s

Looking at the logs one can verify that one is using an entitled build by examining the installed packages.

$ oc logs nvidia-driver-internal-1-build | grep kernel-devel | head -n 5

STEP 2: RUN dnf search kernel-devel --showduplicates

================ Name Exactly Matched: kernel-devel ====================

kernel-devel-4.18.0-80.1.2.el8_0.x86_64 : Development package for ...

kernel-devel-4.18.0-80.el8.x86_64 : Development package for building ...

kernel-devel-4.18.0-80.4.2.el8_0.x86_64 : Development package for ...

Entitled builds make sure that builds are reproducible and use only compatible and updated software (CVE fixes, bug fixes) in RHEL environments.

If you want to see DriverContainers in action, please have a look at: Part 1: How to Enable Hardware Accelerators on OpenShift
