
In this blog post, I discuss how to manage OpenShift clusters in isolated networks and demonstrate how to integrate Red Hat Advanced Cluster Management for Kubernetes (RHACM) with Web Application Firewalls (WAF) in edge environments. I describe why an organization may consider placing a WAF between a RHACM hub cluster and managed clusters at the edge, go through the challenges I encountered with such integrations, and provide possible ways to solve them.

Overview

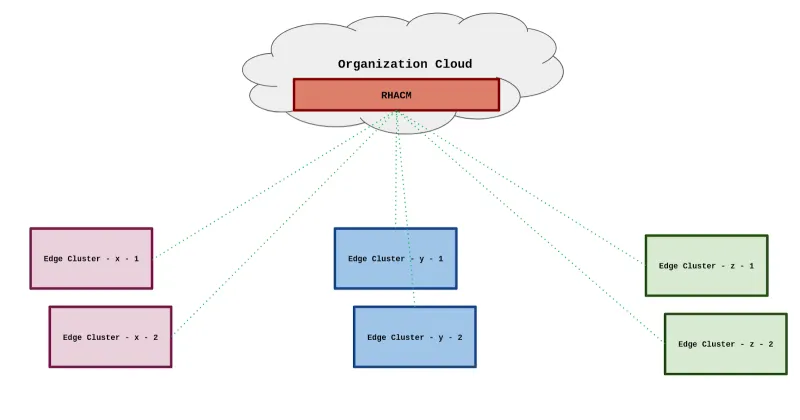

Deploying OpenShift at the edge became routine for many organizations. Red Hat provides the platform and tools to maintain a stable containerized environment closer to the organizational sources of data. You can deploy OpenShift on small 3 server setups or single node OpenShift. You can even deploy OpenShift on a Raspberry Pi 4! Furthermore, Red Hat allows you to maintain all of your clusters using RHACM, which will usually be deployed in a centralized data center or public cloud. See the following diagram:

It sounds good in theory, but it can be problematic in practice. In many cases, edge clusters are placed in unsecured remote sites, vehicles, ships, or even aircraft. As a result, the clusters are at risk of being compromised by attackers who gain physical access to the servers.

Because the Klusterlet agent (the RHACM agent on managed clusters) communicates directly with the OpenShift API server of the hub cluster, nothing stops an attacker who has taken control of a managed cluster from accessing the API of the hub cluster. By default, the Klusterlet authenticates with identities that have a minimal set of permissions, but misconfigurations on the hub cluster may allow an attacker to take over the hub cluster and gain control of the entire managed OpenShift fleet.

To add a security layer to the communication between the managed clusters and the hub cluster, organizations often place a WAF between the edge networks and the central organizational network. With a WAF, an organization can identify and address well-known threats that originate at the edge. The WAF scans the traffic and enforces Layer 7 (L7) policies when it detects malicious activity from edge clients.

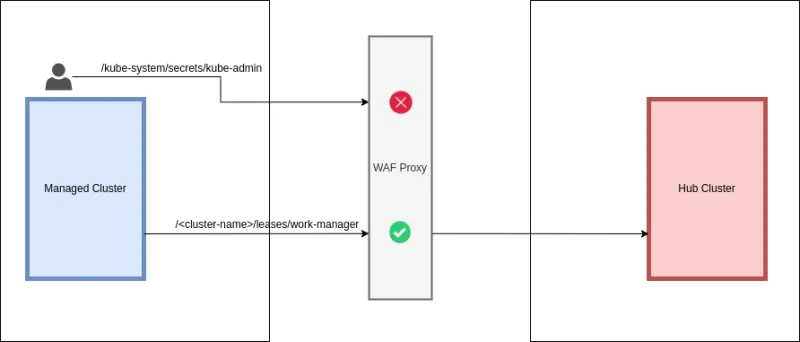

Furthermore, by using the WAF, you can control the API endpoints the Klusterlet agent has access to on the hub cluster. You can restrict the available API endpoints to the endpoints used by Klusterlet only. Even if an attacker knows the admin credentials of a hub cluster, they will not be able to access sensitive endpoints on the hub cluster API server. See the following diagram:
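As a rough illustration of such a restriction, the following Python sketch models a path allowlist that a WAF could enforce per managed cluster. The patterns are an assumption derived only from the ClusterRole permissions discussed later in this post; the real Klusterlet agent and its add-ons call additional endpoints that this sketch does not cover, the `renew` permission on `managedclusters/clientcertificates` is not modeled, and list or watch requests on collections are not allowed here. A production policy would have to be built from observed traffic. The function names are hypothetical.

```python
from __future__ import annotations

import re

# Hypothetical allowlist derived only from the permissions in the
# open-cluster-management:managedcluster:<cluster-name> ClusterRole. The real
# Klusterlet agent and its add-ons call more endpoints than these.
def build_allowlist(cluster: str) -> list[tuple[set[str], re.Pattern[str]]]:
    name = re.escape(cluster)
    prefix = r"^/apis/cluster\.open-cluster-management\.io/v1/managedclusters/"
    return [
        # CertificateSigningRequests used to obtain and rotate client certificates
        ({"GET", "POST"},
         re.compile(r"^/apis/certificates\.k8s\.io/v1/certificatesigningrequests(/[^/]+)?$")),
        # The cluster's own ManagedCluster resource
        ({"GET", "PUT"}, re.compile(prefix + name + "$")),
        # The status subresource of that ManagedCluster
        ({"PUT", "PATCH"}, re.compile(prefix + name + "/status$")),
    ]


def is_allowed(method: str, path: str, cluster: str) -> bool:
    """Return True if the WAF should forward METHOD PATH for CLUSTER."""
    path = path.split("?", 1)[0]  # match on the path only, ignore the query string
    return any(
        method.upper() in methods and pattern.match(path) is not None
        for methods, pattern in build_allowlist(cluster)
    )
```

Everything not explicitly listed is rejected, so an edge client with stolen admin credentials still cannot reach, for example, `/api/v1/secrets` through the WAF.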

Managed cluster communication flow

In this section, I demonstrate how to simulate the traffic that the managed cluster initiates against the API server of the RHACM hub cluster. The simulation lets me verify that the mechanism works properly and shows what changes once a WAF is added to the architecture.

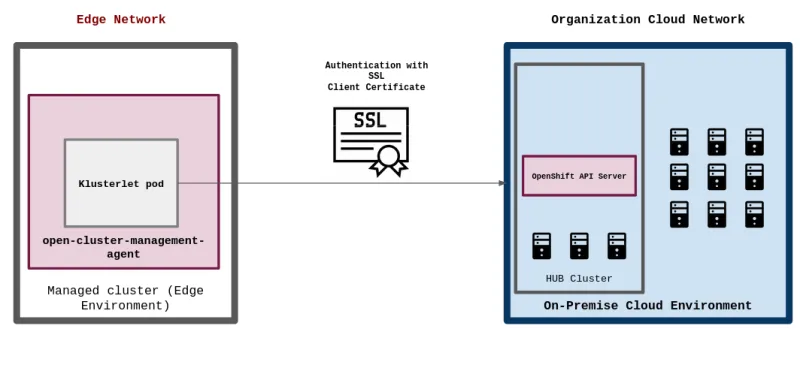

Before we try to integrate a WAF with RHACM, let's cover how a managed cluster communicates with a RHACM hub cluster. By default, most of the network flows between the RHACM hub cluster and managed cluster instances originate from the Klusterlet agent and add-ons which are installed on the managed cluster. The Klusterlet agent and add-ons on the managed clusters are using multiple client certificates to authenticate against the hub cluster. The client certificates contain the agent identity of the managed cluster and group affiliations. The following diagram demonstrates how the Klusterlet agent communicates with the RHACM hub cluster:

To extract the Klusterlet client certificate and private key, run the following command against the managed cluster:

```
<managed cluster> $ oc extract secret/hub-kubeconfig-secret --to=- -n open-cluster-management-agent
...
# tls.crt
-----BEGIN CERTIFICATE-----
MIIC4jCCAcqgAwIBAgIQG2GkwYx8O9K4mea6vKOCAzANBgkqhkiG9w0BAQsFADAm
MSQwIgYDVQQDDBtrdWJlLWNzci1zaWduZXJfQDE2NDE0NTg3NTQwHhcNMjIwMTEx
MTM1NDM3WhcNMjIwMjA1MDg0NTU1WjCBpjFqMDAGA1UEChMpc3lzdGVtOm9wZW4t
Y2x1c3Rlci1tYW5hZ2VtZW50Om9jcDQ5LXRlc3QwNgYDVQQKEy9zeXN0ZW06b3Bl
bi1jbHVzdGVyLW1hbmFnZW1lbnQ6bWFuYWdlZC1jbHVzdGVyczE4MDYGA1UEAxMv
c3lzdGVtOm9wZW4tY2x1c3Rlci1tYW5hZ2VtZW50Om9jcDQ5LXRlc3Q6YjVsODQw
WTATBgcqhkjPPQIBBggqhkjOPQMBBwNCAATs0y67RSCh0pSnlhTJ4BdoL2XNFdO7
JvtY3F8Pf7sHhp2ByvFCFb+fHZFj8zD1nLeg3SZKhybsN7CtMGRRXMRQo1YwVDAO
BgNVHQ8BAf8EBAMCBaAwEwYDVR0lBAwwCgYIKwYBBQUHAwIwDAYDVR0TAQH/BAIw
ADAfBgNVHSMEGDAWgBQXMy+4l6nCf17/JRvlWi0XaTM1/jANBgkqhkiG9w0BAQsF
AAOCAQEAQ4447yEvllb889wYnf4rL4LfWsdANbOjY4mEnHylG8I/rz0MSSAGtTYu
EA7hi58FBtyDRFr01h1sxrCW5fGf6qmmx1j94Nzg/bj4V7TbPkQV2WG2WeUnjFfD
pZZW2K8rff0jaD6j0ne7GvWRkbmfnCaKNxfN+IuxvOt+fuMQVgy+HtThgNNwYUXR
7Md5ADoAdnxNATzTmVACOgqR19Ns+GCy69p4C4d3myE2EOaY4xxRdzMOjgy9aZLM
4W6+/nd4pMj6fV1XggczVbmpO2RtQM3VUa+0Fomy1LY5KjDFZV5FRfcnDpQdGbOb
LVGRF6VZkX83F02U1Oa3a0ojCT2DZw==
-----END CERTIFICATE-----
# tls.key
-----BEGIN EC PRIVATE KEY-----
MHcCAQEEINPJakniAtmwqNGb/KbuG6CPBplqls+zM+yXQR8emzcKoAoGCCqGSM49
AwEHoUQDQgBEd5VtmmVgatumc1q3+fHlxjUQEZ0s068ztYWcbLXwP6zJ6ipJNb9B
4ZKdnb1xDBjfya7CVSqKhdmCaSIEITRjTA==
-----END EC PRIVATE KEY-----
...
```

Save the tls.crt and tls.key values to files (this post names them certificate.crt and private-key.key). To view the user identity that the certificate was issued for, run the following command:

```
<managed cluster> $ openssl x509 -in certificate.crt -text -noout
Certificate:
...
        Issuer: CN = kube-csr-signer_@1611657754
        Validity
            Not Before: Jan 11 13:54:37 2022 GMT
            Not After : Feb 5 08:45:55 2022 GMT
        Subject: O = system:open-cluster-management:<cluster-name> + O = system:open-cluster-management:managed-clusters, CN = system:open-cluster-management:<cluster-name>:<cluster-id>
```

Note that the certificate is associated with the system:open-cluster-management:<cluster-name>:<cluster-id> user and the system:open-cluster-management:<cluster-name> and system:open-cluster-management:managed-clusters groups.
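Kubernetes maps the CN of a client certificate to the user name and each O to a group. The following Python sketch applies that mapping to the Subject line printed by openssl. `parse_subject` is a hypothetical helper that assumes OpenSSL's default one-line output format and attribute values without commas or plus signs, which holds for the RHACM identities shown above.

```python
from __future__ import annotations


def parse_subject(line: str) -> tuple[str, list[str]]:
    """Return (user, groups) from an OpenSSL 'Subject:' line.

    Assumes "KEY = value" pairs, RDNs separated by "," and multi-valued RDNs
    joined by "+", with no commas or plus signs inside the values.
    """
    text = line.strip()
    if text.startswith("Subject:"):
        text = text[len("Subject:"):]
    user = None
    groups = []
    for rdn in text.split(","):
        for attribute in rdn.split("+"):
            key, _, value = attribute.partition("=")
            key, value = key.strip(), value.strip()
            if key == "CN":
                user = value  # CN becomes the Kubernetes user name
            elif key == "O":
                groups.append(value)  # each O becomes a Kubernetes group
    if user is None:
        raise ValueError("subject has no CN attribute")
    return user, groups
```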

As mentioned before, the managed cluster uses the identity defined in the certificate to access the API server of the RHACM hub cluster. On the hub cluster, a ClusterRoleBinding resource associates the system:open-cluster-management:<cluster-name> group with the open-cluster-management:managedcluster:<cluster-name> ClusterRole. To view the ClusterRoleBinding, run the following command on the hub cluster:

```
<hub cluster> $ oc get clusterrolebinding open-cluster-management:managedcluster:<cluster-name> -o yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: open-cluster-management:managedcluster:<cluster-name>
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: open-cluster-management:managedcluster:<cluster-name>
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:open-cluster-management:<cluster-name>
```

The open-cluster-management:managedcluster:<cluster-name> ClusterRole allows the system:open-cluster-management:<cluster-name> group to access the following resources on the hub cluster:

```
<hub cluster> $ oc get clusterrole/open-cluster-management:managedcluster:<cluster-name> -o yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: open-cluster-management:managedcluster:<cluster-name>
rules:
- apiGroups:
  - certificates.k8s.io
  resources:
  - certificatesigningrequests
  verbs:
  - create
  - get
  - list
  - watch
- apiGroups:
  - register.open-cluster-management.io
  resources:
  - managedclusters/clientcertificates
  verbs:
  - renew
- apiGroups:
  - cluster.open-cluster-management.io
  resourceNames:
  - <cluster-name>
  resources:
  - managedclusters
  verbs:
  - get
  - list
  - update
  - watch
- apiGroups:
  - cluster.open-cluster-management.io
  resourceNames:
  - <cluster-name>
  resources:
  - managedclusters/status
  verbs:
  - patch
  - update
```
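RBAC rules are additive: a request is allowed if any rule matches its verb, API group, resource, and, when resourceNames is set, the resource name. The following Python sketch is a simplified model of that evaluation applied to the rules above. It is not the kube-apiserver authorizer; it ignores non-resource URLs and the way list and watch requests interact with resourceNames, and the `Rule` and `authorized` names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    api_groups: tuple[str, ...]
    resources: tuple[str, ...]
    verbs: tuple[str, ...]
    resource_names: tuple[str, ...] = ()  # empty means "any name"


def rule_allows(rule: Rule, verb: str, api_group: str, resource: str, name: str = "") -> bool:
    return (
        (verb in rule.verbs or "*" in rule.verbs)
        and (api_group in rule.api_groups or "*" in rule.api_groups)
        and (resource in rule.resources or "*" in rule.resources)
        and (not rule.resource_names or name in rule.resource_names)
    )


def managed_cluster_rules(cluster: str) -> list[Rule]:
    """The rules of open-cluster-management:managedcluster:<cluster-name>."""
    return [
        Rule(("certificates.k8s.io",), ("certificatesigningrequests",),
             ("create", "get", "list", "watch")),
        Rule(("register.open-cluster-management.io",),
             ("managedclusters/clientcertificates",), ("renew",)),
        Rule(("cluster.open-cluster-management.io",), ("managedclusters",),
             ("get", "list", "update", "watch"), (cluster,)),
        Rule(("cluster.open-cluster-management.io",), ("managedclusters/status",),
             ("patch", "update"), (cluster,)),
    ]


def authorized(rules: list[Rule], verb: str, api_group: str, resource: str, name: str = "") -> bool:
    # RBAC has no deny rules: one matching rule is enough.
    return any(rule_allows(r, verb, api_group, resource, name) for r in rules)
```

The `resourceNames` restriction is what stops one managed cluster from reading or updating another cluster's ManagedCluster resource.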

Now that we know which resources the group can access, let's request one of them from the API server of the hub cluster, using the client certificate and private key extracted in the previous steps:

```
$ curl -k --url https://api.<hub-cluster-fqdn>:6443/apis/cluster.open-cluster-management.io/v1/managedclusters/<managed-cluster-name> --key private-key.key --cert certificate.crt
{"apiVersion":"cluster.open-cluster-management.io/v1","kind":"ManagedCluster","metadata":{"annotations":{"...
```

The request succeeds. This command simulates the traffic that the managed cluster initiates against the hub cluster: it retrieves the <cluster-name> ManagedCluster resource from the hub cluster by using the client certificate and private key of the managed cluster.

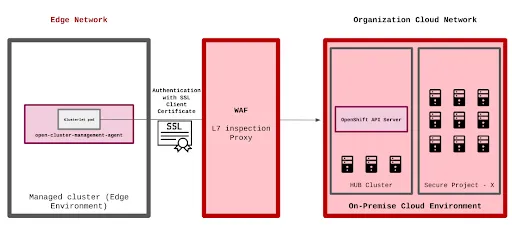

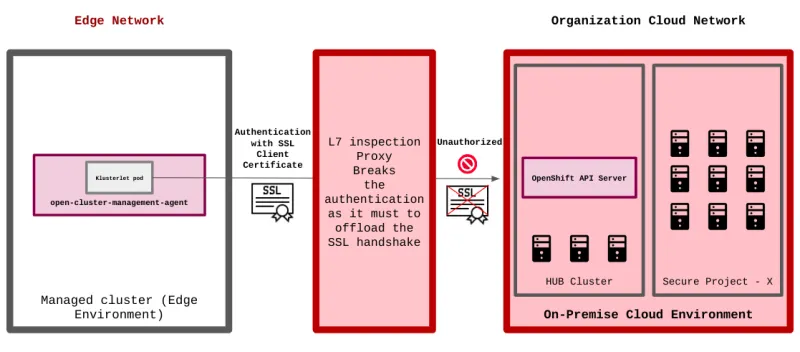

Adding WAF into the architecture

Now that we know how the mechanism works, let's add a WAF instance between the RHACM hub cluster and the managed cluster. On the WAF instance, I enable L7 traffic inspection and enforce a security policy. As soon as the WAF instance receives traffic from the managed cluster, it inspects the packet by stripping the client certificate off the packet. When the WAF finishes the packet inspection, it forwards the traffic towards the RHACM hub cluster. See the following diagram:

After placing the WAF instance between the clusters, let's initiate the same curl request I created in the previous section. This time, I send the request to the WAF instance that forwards it towards the RHACM hub cluster by running the following command:

$ curl -k --url https://<waf-endpoint>:6443/apis/cluster.open-cluster-management.io/v1/managedclusters/<managed-cluster-name> --key /tmp/private-key.key --cert /tmp/certificate.crt

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "managedclusters.cluster.open-cluster-management.io \"<managed-cluster-name>\" is forbidden: User \"system:anonymous\" cannot get resource \"managedclusters\" in API group \"cluster.open-cluster-management.io\" at the cluster scope",

"reason": "Forbidden",

"details": {

"name": "<managed-cluster-name>",

"group": "cluster.open-cluster-management.io",

"kind": "managedclusters"

},

"code": 403

}

Note that instead of receiving the definition of the requested resource, I get an 403 - Forbidden error. The error occurs because the WAF proxy breaks the tls during L7 inspection of the packets. The client certificate is stripped off the packet during inspection and no authentication parameters are forwarded toward the hub cluster API server. The hub cluster API server receives a packet with no authentication parameters and checks if the system:anonymous user has the rights to access the requested resource. The system:anonymous user does not have permissions to access the endpoint and receives a 403 - Forbidden error. See the following diagram:
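The following Python sketch is a simplified model of that decision. The `authenticate` function and its parameters are hypothetical: the real kube-apiserver chains several authenticators, but the outcome for a request that carries neither a verified client certificate nor a bearer token is the same, namely the system:anonymous user in the system:unauthenticated group (when anonymous authentication is enabled).

```python
from __future__ import annotations


def authenticate(cert_identity: tuple[str, list[str]] | None = None,
                 token_identity: tuple[str, list[str]] | None = None,
                 anonymous_enabled: bool = True) -> tuple[str, list[str]]:
    """Return (user, groups) the way a simplified API server would.

    cert_identity / token_identity are (user, groups) pairs already extracted
    from a verified client certificate or bearer token, or None if absent.
    """
    for identity in (cert_identity, token_identity):
        if identity is not None:
            user, groups = identity
            # Authenticated requests also receive the system:authenticated group.
            return user, [*groups, "system:authenticated"]
    if anonymous_enabled:
        # Behind the WAF, the request ends up here: no certificate, no token.
        return "system:anonymous", ["system:unauthenticated"]
    raise PermissionError("401 Unauthorized: no credentials presented")
```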

To solve this problem, the authentication parameters must be preserved after the request leaves the WAF instance. To preserve them, you can choose one of the following two methods:

- Recreate the client certificate on the WAF instance.

- Forward the authentication parameters as HTTP headers.

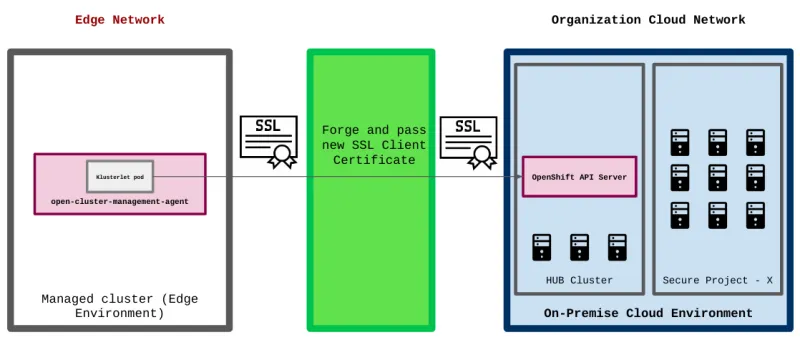

Method 1 - Recreate the client certificate

This method allows preserving the identity of the managed cluster by recreating its client certificate after the L7 inspection finishes on the WAF instance. The method requires the WAF instance to support such functionality (for example, BIG-IP C3D).

When enabled, traffic originating at the managed cluster will go through the steps described in the following diagram:

A major disadvantage of this method is that the certificate authority (CA) certificate and private key that sign the client certificates of the managed clusters have to be placed on the WAF instance so that it can recreate the certificates. In this case, the signing CA is the kube-csr-signer on the RHACM hub cluster, which means that anyone who obtains that private key from the WAF can issue client certificates that the API server of the hub cluster accepts. In addition, the kube-csr-signer CA expires every 30 days, so the new CA has to be copied to the WAF instance on every rotation for the mechanism to keep working. Any failure in the rotation procedure may cause authentication failures for the managed clusters against the RHACM hub cluster.
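Because the CA on the WAF must be replaced before it expires, the rotation procedure needs a check that flags when a sync is due. The following Python sketch shows one way to do this by parsing the Not After date in the format that `openssl x509 -text` prints. The five-day margin and both function names are assumptions for illustration, not an RHACM or OpenShift setting.

```python
from datetime import datetime, timedelta, timezone


def parse_openssl_date(text: str) -> datetime:
    """Parse a date as printed by `openssl x509 -text`, e.g. 'Feb 5 08:45:55 2022 GMT'."""
    parsed = datetime.strptime(text.strip(), "%b %d %H:%M:%S %Y %Z")
    return parsed.replace(tzinfo=timezone.utc)  # %Z does not set tzinfo itself


def waf_ca_sync_due(ca_not_after: datetime, now: datetime,
                    margin: timedelta = timedelta(days=5)) -> bool:
    """Hypothetical policy: True once the CA held by the WAF is within `margin` of expiry."""
    return now >= ca_not_after - margin
```

A scheduled job could run this check against the CA currently installed on the WAF and trigger the copy of the new kube-csr-signer CA when it returns True.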

Method 2 - Forward authentication parameters as HTTP headers

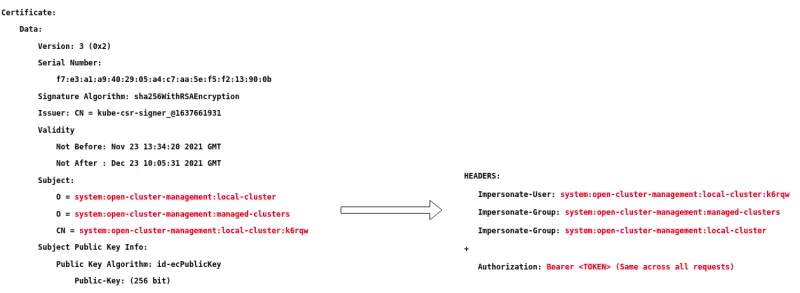

The next method allows preserving the identity of the managed cluster by swapping the authentication method in the WAF instance. Instead of preserving the client certificate, I tear it apart and place the authentication parameters that the client certificate contained into HTTP headers and forward them to the RHACM hub cluster.

In order for the mechanism to work, I use a Kubernetes feature called impersonation. By using impersonation, I provide a service account on the RHACM hub cluster with the ability to perform actions as other users, including the users and groups that represent the managed clusters on the RHACM hub cluster.

I configure the WAF instance to strip the CN and O fields off the client certificate and forward them as the Impersonate-User and Impersonate-Group HTTP headers. Alongside the impersonation headers, I configure the WAF instance to attach an additional Authorization HTTP header. The Authorization HTTP header contains the JWT token for an Impersonator service account. By defining this Authorization header in the outgoing HTTP request, I state that the Impersonator service account is the one who is going to authenticate against the RHACM hub cluster API server. And by defining the Impersonate-User and Impersonate-Group headers in the outgoing HTTP request, I state that the Impersonator service account is going to perform an action on behalf of the user and group specified in these headers. The original client certificate fields and resulting HTTP headers can be found in the following diagram:
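The mapping itself is small. The following Python sketch builds the header list that the WAF would send for one request. `impersonation_headers` is a hypothetical name, and the CN and O values are assumed to come from a client certificate that the WAF has already verified. The result is a list of pairs rather than a dictionary because Impersonate-Group is repeated once per group; as far as I know, the API server collects repeated Impersonate-Group headers and does not split a single comma-separated value into several groups.

```python
from __future__ import annotations


def impersonation_headers(cn: str, organizations: list[str], token: str) -> list[tuple[str, str]]:
    """Build the headers the WAF forwards to the hub API server (sketch).

    cn / organizations: CN and O fields of the verified client certificate.
    token: bearer token of the impersonator service account.
    """
    headers = [
        ("Authorization", f"Bearer {token}"),  # who authenticates
        ("Impersonate-User", cn),              # who the request acts as
    ]
    # One Impersonate-Group header per O field.
    headers += [("Impersonate-Group", org) for org in organizations]
    return headers
```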

To allow a service account to impersonate the users and groups of the managed clusters, run the following commands on the hub cluster:

```
$ git clone https://github.com/michaelkotelnikov/rhacm-l7-blog.git

# Creates a namespace for the "impersonator" service account
<hub cluster> $ oc apply -f rhacm-l7-blog/k8s-manifests/namespace.yaml
namespace/authentication-proxy created

# Creates the "impersonator" service account (proxy-authenticator)
<hub cluster> $ oc apply -f rhacm-l7-blog/k8s-manifests/serviceaccount.yaml
serviceaccount/proxy-authenticator created

# Creates a ClusterRole resource that allows impersonating all users in the cluster
<hub cluster> $ oc apply -f rhacm-l7-blog/k8s-manifests/clusterrole.yaml
clusterrole.rbac.authorization.k8s.io/impersonator created

# Creates a ClusterRoleBinding resource that associates the previously created ClusterRole with the "impersonator" service account
<hub cluster> $ oc apply -f rhacm-l7-blog/k8s-manifests/clusterrolebinding.yaml
clusterrolebinding.rbac.authorization.k8s.io/impersonator created
```
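The ClusterRole from the repository lets the service account impersonate all users, and its token will live on the WAF. One possible hardening step, sketched below under that assumption, is to narrow the role with `resourceNames`, which Kubernetes RBAC supports for the `impersonate` verb on `users` and `groups` in the core API group. The function, the `impersonator-scoped` role name, and the idea of listing each cluster's `<cluster-id>` (taken from the certificate subject shown earlier) are hypothetical; the output is a JSON manifest that `oc apply -f -` can read from standard input, and the role would then be bound to the proxy-authenticator service account instead of the broader ClusterRole.

```python
from __future__ import annotations

import json

# Group shared by all managed clusters (from the certificate subject shown earlier).
MANAGED_CLUSTERS_GROUP = "system:open-cluster-management:managed-clusters"


def scoped_impersonator_role(clusters: list[tuple[str, str]],
                             role_name: str = "impersonator-scoped") -> dict:
    """Build a ClusterRole that may impersonate only the given clusters.

    clusters: (cluster_name, cluster_id) pairs; role_name is a hypothetical name.
    """
    users = [f"system:open-cluster-management:{name}:{cid}" for name, cid in clusters]
    groups = [MANAGED_CLUSTERS_GROUP] + [
        f"system:open-cluster-management:{name}" for name, _ in clusters
    ]
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRole",
        "metadata": {"name": role_name},
        "rules": [
            # Users and groups are impersonated through the core ("") API group.
            {"apiGroups": [""], "resources": ["users"],
             "verbs": ["impersonate"], "resourceNames": users},
            {"apiGroups": [""], "resources": ["groups"],
             "verbs": ["impersonate"], "resourceNames": groups},
        ],
    }


if __name__ == "__main__":
    # Placeholder values; replace them with real cluster names and IDs.
    print(json.dumps(scoped_impersonator_role([("<cluster-name>", "<cluster-id>")]), indent=2))
```

The trade-off is maintenance: every newly imported cluster has to be added to the role, whereas the broad role from the repository needs no updates.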

To extract the JWT of the service account, run the following command on the hub cluster:

```
<hub cluster> $ oc sa get-token proxy-authenticator -n authentication-proxy
eyJhbGciOiJSUzI1NiIsImtpZCI6IkRMdnNDVWd5ekpySkJRVi00VHpnREV4TUtlLUV2RnhxcGdheGJCQmRyYWMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJhdXRoZW50aWNhdGlvbi1wcm94eSIsImt1YmVybmV0ZXMuaW8vc2Vydm...
```
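Before placing the token on the WAF, it can be useful to confirm which service account it belongs to. A JWT is three base64url segments separated by dots, and the middle one holds the claims; decoding the visible part of the token above, for example, shows `"iss":"kubernetes/serviceaccount"` and a namespace claim of `authentication-proxy`. The following Python sketch (the `jwt_claims` name is hypothetical) decodes the claims without verifying the signature, so it is only suitable for inspection, never for trust decisions.

```python
import base64
import json


def jwt_claims(token: str) -> dict:
    """Decode the payload of a JWT *without* verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a JWT: expected header.payload.signature")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore the stripped base64url padding
    return json.loads(base64.urlsafe_b64decode(payload))
```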

Now that the impersonation mechanism is set up on the RHACM hub cluster, you can test it by setting the impersonation and Authorization HTTP headers manually in a curl request. For example:

```
$ curl -k \
    -H "Authorization: Bearer <Impersonator Service Account Access Token>" \
    -H "Impersonate-User: system:open-cluster-management:<cluster-name>:<cluster-id>" \
    -H "Impersonate-Group: system:open-cluster-management:managed-clusters" \
    -H "Impersonate-Group: system:open-cluster-management:<cluster-name>" \
    https://api.<hub-cluster-fqdn>:6443/apis/cluster.open-cluster-management.io/v1/managedclusters/<cluster-name>
{"apiVersion":"cluster.open-cluster-management.io/v1","kind":"ManagedCluster","metadata":{"annotations":{"...
```

After you validate that everything works on the RHACM hub cluster side, configure the WAF instance to generate the HTTP headers from the authentication information in the client certificate. Each WAF product may have its own way to configure such a mechanism (for example, an F5 BIG-IP iRule).
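One detail worth considering in that configuration is that the edge client must not be able to choose the identity itself. If the WAF forwarded an Authorization or Impersonate-* header sent by a compromised managed cluster, the impersonator service account would act on the attacker's behalf. The following Python sketch illustrates the header rewrite in a language-neutral way; it is not an iRule (iRules use a Tcl-based language), and `rewrite_request_headers` is a hypothetical name. It assumes the CN and O values come from a client certificate that the WAF has verified.

```python
from __future__ import annotations

# Header name prefixes an edge client must never set itself (compared case-insensitively).
# "impersonate-" also covers Impersonate-Uid and Impersonate-Extra-* headers.
SPOOFABLE = ("authorization", "impersonate-")


def rewrite_request_headers(incoming: list[tuple[str, str]], cn: str,
                            organizations: list[str], token: str) -> list[tuple[str, str]]:
    """Return the header list to forward to the hub (hypothetical WAF logic).

    incoming: (name, value) pairs received from the managed cluster.
    cn / organizations: taken from the client certificate verified by the WAF.
    """
    forwarded = [
        (name, value) for name, value in incoming
        # Drop client-supplied credentials and impersonation headers.
        if not name.lower().startswith(SPOOFABLE)
    ]
    forwarded.append(("Authorization", f"Bearer {token}"))
    forwarded.append(("Impersonate-User", cn))
    forwarded.extend(("Impersonate-Group", org) for org in organizations)
    return forwarded
```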

After you configure the WAF instance, send a request through the WAF and make sure that it forwards the authentication parameters to the RHACM hub cluster correctly:

```
$ curl -k --url https://<waf-endpoint>:6443/apis/cluster.open-cluster-management.io/v1/managedclusters/<managed-cluster-name> --key /tmp/private-key.key --cert /tmp/certificate.crt
{"apiVersion":"cluster.open-cluster-management.io/v1","kind":"ManagedCluster","metadata":{"annotations":{"...
```

Now that everything is configured, you can import clusters from the edge networks to the central RHACM hub and scan all traffic using a WAF instance along the way.

Conclusion

In this blog, I went through the reasons an organization may consider placing a WAF between a RHACM hub cluster and OpenShift clusters at the edge. I described how managed clusters authenticate against the hub cluster and the challenges that come up with the authentication process when placing a WAF between the management and edge clusters. I proposed two solutions for the authentication challenges and demonstrated the procedures an organization would go through when implementing them.

Even though the solutions are not officially supported by Red Hat, they provide a practical way for organizations to deal with constraints that come up when dealing with OpenShift environments at the edge.
