Determine and Mitigate Impact of Docker Hub Pull Request Limits starting Nov 2nd

If you are using Docker Hub to distribute your containerized software project, you will by now have received at least two emails about the new image pull consumption tiers. While the initially planned image retention policy (stale images are deleted after 6 months) has been postponed to mid-2021, pull request limits will be enforced starting November 2nd.

How your users are going to be impacted

What this means is that, if you are using the free tier of Docker Hub, all your images will be subject to a pull request limit of 100 pulls per six hours, enforced per client IP for anonymous clients. Anonymous clients are all users who do not have a Docker Hub account or do not log in via docker login before pulling an image. Anonymous pulls are also very common in CI/CD systems that build software from popular, public base images.

Pulls from authenticated users on the free tier of Docker Hub are limited to 200 per six hours.
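To make the two tiers concrete, here is a minimal sketch of a per-client counter using the numbers above (100 anonymous and 200 authenticated pulls per six hours). The class and method names are hypothetical, and the sliding window is an assumption made for illustration; the text does not say how Docker Hub implements its accounting.

```python
from collections import deque

WINDOW_SECONDS = 6 * 60 * 60  # six hours, as announced
LIMITS = {"anonymous": 100, "authenticated": 200}  # free-tier limits from the text


class PullBudget:
    """Tracks pulls for one client (an IP or an account).

    Assumption: a sliding window; the real service may count differently.
    """

    def __init__(self, tier):
        self.limit = LIMITS[tier]
        self.timestamps = deque()

    def try_pull(self, now):
        """Record a pull at time `now` (in seconds); return False if over the limit."""
        # Drop pulls that have aged out of the six-hour window.
        while self.timestamps and now - self.timestamps[0] >= WINDOW_SECONDS:
            self.timestamps.popleft()
        if len(self.timestamps) >= self.limit:
            return False
        self.timestamps.append(now)
        return True
```

With this model, an anonymous client that pulls once per second is refused on its 101st pull and is only allowed again once the oldest pull is six hours old.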

What counts as a pull?

The new limits are enforced on a per-manifest basis. While in the early days of containers one image corresponded to one manifest, in today’s world of multi-arch images a container image is actually a list of manifests, with one manifest/image per supported system architecture (e.g. x86_64, aarch64, arm64v8, etc).

Starting November 2nd, each request for a single manifest counts as one pull. For multi-arch images, however, most clients download only the one manifest that matches the system they are running on, so this still counts as a single pull.
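The selection step can be sketched as follows. The field names (manifests, digest, platform, architecture, os) follow the manifest list layout used for multi-arch images; the function name and the digests are hypothetical placeholders. This is a simplified model of what a client does, not the code of any particular client.

```python
def select_manifest(manifest_list, architecture, os_name="linux"):
    """Return the digest of the first entry matching the platform, or None."""
    for entry in manifest_list["manifests"]:
        platform = entry.get("platform", {})
        if (platform.get("architecture") == architecture
                and platform.get("os") == os_name):
            return entry["digest"]
    return None


# Hypothetical multi-arch image; the digests are placeholders, not real ones.
example_list = {
    "manifests": [
        {"digest": "sha256:aaa", "platform": {"architecture": "amd64", "os": "linux"}},
        {"digest": "sha256:bbb", "platform": {"architecture": "arm64", "os": "linux"}},
    ]
}

print(select_manifest(example_list, "arm64"))  # sha256:bbb
```

Only the one selected manifest is then requested, which is why a multi-arch image does not multiply the pull count by the number of architectures it supports.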

It is important to note, however, that a pull is also counted if the client system already has all the image layers present and nothing is actually downloaded. That means that image caching does not reduce the number of pulls counted against the limit.

How to determine if you reached the pull request limit

From a user perspective, since the pull limits are enforced per client IP, it might be hard to predict if and when limits will be reached. You can, however, simulate what happens when that is the case. There are two test repositories available that already have the limits enforced, one of which is permanently at the rate limit. Clients react differently to these.

$ docker pull docker.io/ratelimitalways/test:latest

Error response from daemon: toomanyrequests: Too Many Requests. Please see https://docs.docker.com/docker-hub/download-rate-limit/

This test repository has rate limiting enabled and always in effect. The pull request immediately aborts because the registry returned HTTP 429 (toomanyrequests).
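A CI script that wraps the client could tell this failure apart from other pull errors by looking for the error code in the output. The sketch below assumes the message format shown above; the function name is hypothetical, and matching on message text is a heuristic rather than a stable interface.

```python
def is_rate_limited(error_output):
    """Heuristic: True if the client output mentions the toomanyrequests error."""
    return "toomanyrequests" in error_output.lower()


message = (
    "Error response from daemon: toomanyrequests: Too Many Requests. "
    "Please see https://docs.docker.com/docker-hub/download-rate-limit/"
)
print(is_rate_limited(message))  # True
```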

If you are a podman user, the behavior is different:

$ podman pull docker.io/ratelimitalways/test:latest

Trying to pull docker.io/ratelimitalways/test:latest...

This command will initially seem to hang but will eventually return after about 15 minutes. With a more verbose log level we can see what is going on:

$ podman --log-level debug pull docker.io/ratelimitalways/test:latest

INFO[0000] podman filtering at log level debug

...

[some lines omitted]

...

DEBU[0000] GET https://registry-1.docker.io/v2/ratelimitalways/test/manifests/latest

DEBU[0001] Detected 'Retry-After' header "60"

DEBU[0001] Too many requests to https://registry-1.docker.io/v2/ratelimitalways/test/manifests/latest: sleeping for 60.000000 seconds before next attempt

As you can see, the registry not only returns the HTTP 429 (toomanyrequests) status code but also specifies a desired retry interval of 60 seconds via a response header. By default, podman retries 5 times on HTTP 429 while respecting the pause duration specified in the “Retry-After” header. After 5 retries it gives up and considers the attempt failed. On top of that, podman by default retries failed pulls 3 times, hence the overall duration of roughly 15 minutes.
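The 15-minute figure follows from the numbers in this paragraph: 60 seconds times 5 retries times 3 passes. The sketch below simulates that loop without actually sleeping. It is not podman's code: the function names are hypothetical, and it assumes three passes in total, each consisting of an initial request plus up to five retries.

```python
def simulate_pull(fetch, retries_per_attempt=5, pull_attempts=3):
    """Return (succeeded, total seconds a client would have slept)."""
    waited = 0
    for _ in range(pull_attempts):
        # One initial request plus up to `retries_per_attempt` retries.
        for attempt in range(retries_per_attempt + 1):
            status, retry_after = fetch()
            if status != 429:
                return True, waited
            if attempt < retries_per_attempt:
                waited += retry_after  # a real client would sleep here
    return False, waited


def always_limited():
    """Stand-in for a registry that always answers 429 with Retry-After: 60."""
    return 429, 60


print(simulate_pull(always_limited))  # (False, 900)
```

900 seconds is 15 minutes of waiting alone; the time spent on the requests themselves comes on top of that.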

It eventually fails like the docker client:

DEBU[0977] Error pulling image ref //ratelimitalways/test:latest: Error initializing source docker://ratelimitalways/test:latest: Error reading manifest latest in docker.io/ratelimitalways/test: toomanyrequests: Too Many Requests. Please see https://docs.docker.com/docker-hub/download-rate-limit/

At the time of writing, there is also the ratelimitpreview/test repository, which has request counting enabled and is supposed to enforce the announced limits once they are exceeded. However, the author has not yet been able to trigger the rate limit on it.

Impact

Assessing the impact will be challenging. Anonymous pulls from Docker Hub are widely used in the FOSS community, especially in CI/CD systems. Almost everybody has image references to public images on Docker Hub in their container platforms and many software build pipelines create containerised software from base images in public repositories.

Container platforms like Kubernetes and OpenShift might run into these limits when trying to scale or reschedule a deployment from such an image, even when the nodes have the image cached. These events occur constantly in any container orchestration environment and are very likely to rapidly exhaust the quota of 100/200 pulls in 6 hours, which might cause a service outage. CI/CD pipelines might also start failing to build and roll out your software, even though they are usually the recovery tool of choice for such outages.
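A back-of-the-envelope estimate shows how quickly this can happen when several nodes share one public IP address. The node count and pull frequency below are made-up illustration values, not measurements, and the function names are hypothetical.

```python
WINDOW_HOURS = 6  # length of the rate-limit window from the announcement


def pulls_per_window(nodes_behind_ip, pulls_per_node_per_hour):
    """Pulls that one public IP generates within a six-hour window."""
    return nodes_behind_ip * pulls_per_node_per_hour * WINDOW_HOURS


def over_limit(nodes_behind_ip, pulls_per_node_per_hour, limit):
    """True if the estimated pulls exceed the given limit."""
    return pulls_per_window(nodes_behind_ip, pulls_per_node_per_hour) > limit


# Hypothetical cluster: 10 nodes behind one NAT gateway, 2 pulls per node per hour.
print(pulls_per_window(10, 2))  # 120
print(over_limit(10, 2, 100))   # True: above the anonymous limit
print(over_limit(10, 2, 200))   # False: still below the authenticated limit
```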

Mitigation strategies

For an enterprise DevOps practice, relying on a free-tier offering for such a critical service is usually not acceptable. Especially for on-premise environments, an ongoing dependency on an online service is not considered a long-term solution.

For these environments, enterprise users can leverage Red Hat Quay to provide a scalable and secure container registry platform on top of any supported on- and off-premise infrastructure. It provides massive performance in container image distribution, combined with the ability to scan container image contents for security vulnerabilities, while providing strict multi-tenancy.

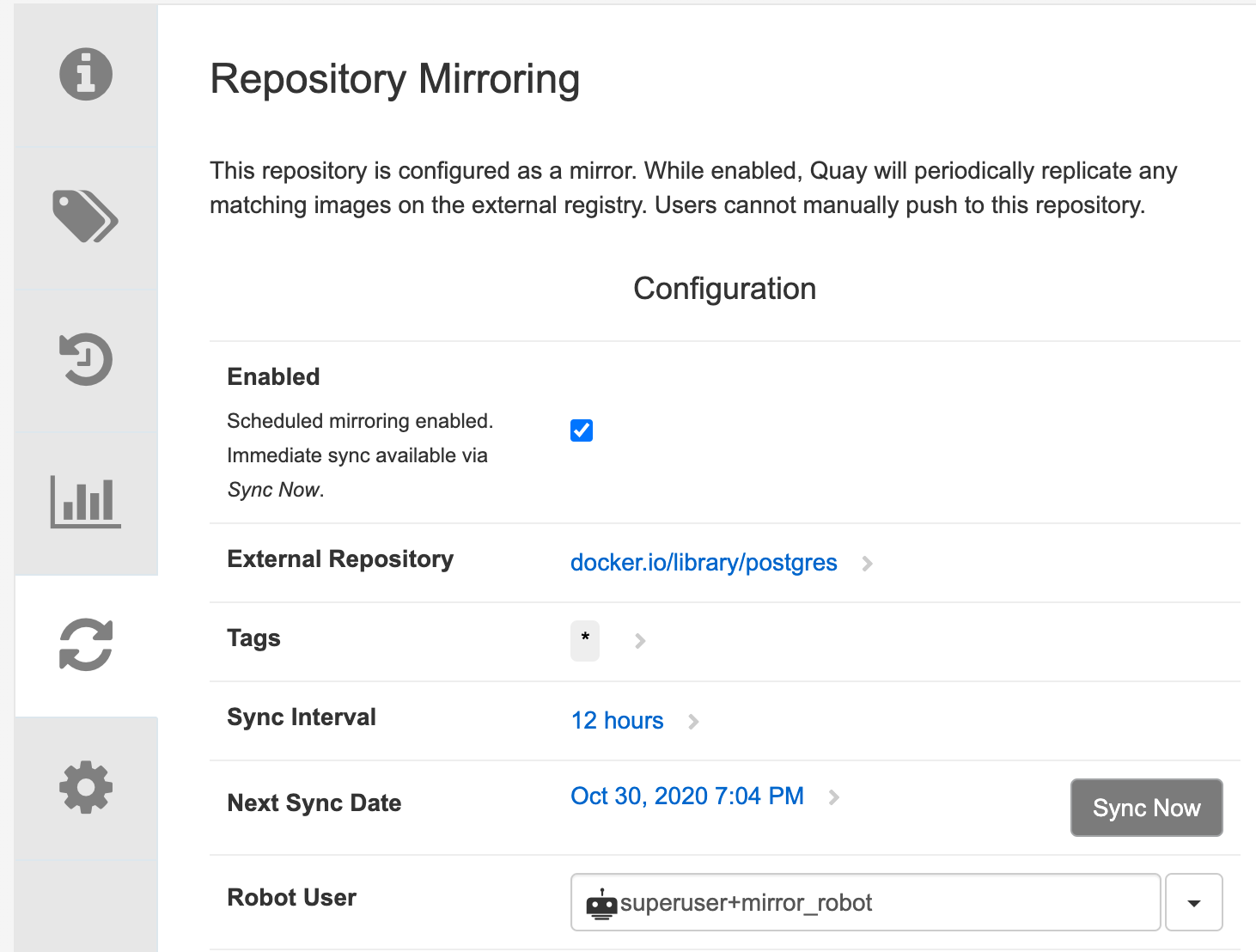

Such a deployment is not limited to a single data center or cloud region but can be scaled across the globe using geo-replication. On top of that, content can be copied into a Red Hat Quay instance on a continuous basis from any other container registry via repository mirroring, so you can provide a fast, local cache of public image repositories. For the future we are also planning to have Red Hat Quay run as a transparent proxy cache.

Example of a repository mirroring configuration in Red Hat Quay

On the other end of the spectrum there are customers that do not need their own registry service. And then there are the thousands of volunteers maintaining open source projects and containerized software.

For these audiences there is the online version of Red Hat Quay available at Quay.io. This is a public container registry service that shares the same code base as Red Hat Quay and has a proven track record among the open source community for more than 6 years. In August this year this platform served over 6 billion container image pulls with 100% uptime.

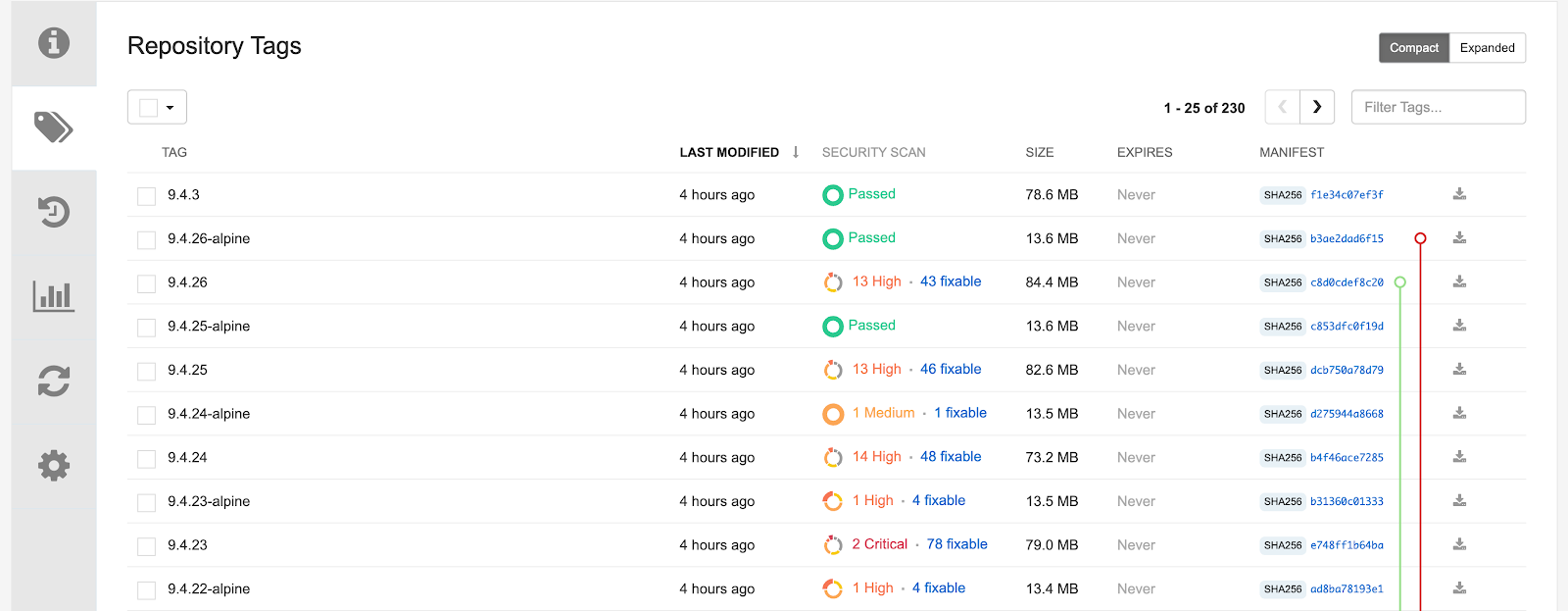

Quay.io not only hosts your container images and serves them to any OCI-compatible client (docker, podman, etc.) but can also build your software. It connects to a source code management system of your choice (e.g. GitHub or GitLab) and builds images from your Dockerfile on every commit. At the same time it provides image content scanning, so you are made aware when your published images contain known security vulnerabilities. This scanning covers a variety of package managers (apt, apk, yum, dnf) and language package managers (Python pip) used inside container images.

Overview of the security vulnerabilities found in the official PostgreSQL container images by Red Hat Quay

Another alternative for CI/CD systems is to use a different base image from a different registry, like the Universal Base Image which contains a basic Red Hat Enterprise Linux environment, free to use.

Migrating images with skopeo

In case you want to migrate your existing images to another registry like Quay.io you can leverage skopeo. Like podman and buildah it is part of a toolchain that enables working with containers and images without the need for a docker daemon to be running and without requiring elevated privileges or root access on your OS.

skopeo can be used to easily copy your container images from one registry to another, like so:

$ skopeo login docker.io

Username: dmesser

Password:

Login Succeeded!

$ skopeo login quay.io

Username: dmesser

Password:

Login Succeeded!

$ skopeo sync --src docker --dest docker docker.io/dmesser/nginx quay.io/dmesser/

INFO[0000] Tag presence check imagename=docker.io/dmesser/nginx tagged=false

INFO[0000] Getting tags image=docker.io/dmesser/nginx

INFO[0001] Copying image tag 1/1 from="docker://dmesser/nginx:latest" to="docker://quay.io/dmesser/nginx:latest"

Getting image source signatures

Copying blob bc51dd8edc1b done

Copying blob 66ba67045f57 done

Copying blob bf317aa10aa5 done

Copying config 2073e0bcb6 done

Writing manifest to image destination

Storing signatures

INFO[0012] Synced 1 images from 1 sources

This is all it takes to sync an entire repository called nginx, including all tags, from Docker Hub to Quay.io.

$ podman pull quay.io/dmesser/nginx

Trying to pull quay.io/dmesser/nginx...

Getting image source signatures

Copying blob bf317aa10aa5 done

Copying blob 66ba67045f57 done

Copying blob bc51dd8edc1b done

Copying config 2073e0bcb6 done

Writing manifest to image destination

Storing signatures

2073e0bcb60ee98548d313ead5eacbfe16d9054f8800a32bedd859922a99a6e1

For mass migration of entire repositories, skopeo has great facilities for automation; check out the skopeo-sync documentation. It is suitable for one-off migrations as well as for regular synchronization of incremental changes as part of a simple cron job.
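As a starting point for such automation, the sketch below builds one skopeo sync command per repository, using exactly the invocation shown earlier. The function name and the second repository name (redis) are hypothetical, and the script only prints the commands rather than running them.

```python
import shlex


def sync_commands(source_namespace, repositories, destination):
    """Build one `skopeo sync` argument list per repository."""
    commands = []
    for repo in repositories:
        commands.append([
            "skopeo", "sync", "--src", "docker", "--dest", "docker",
            f"{source_namespace}/{repo}", destination,
        ])
    return commands


# "redis" is a made-up second repository, used for illustration only.
for argv in sync_commands("docker.io/dmesser", ["nginx", "redis"], "quay.io/dmesser/"):
    print(shlex.join(argv))
```

After logging in to both registries, each argument list could be passed to subprocess.run(argv, check=True) from a scheduled job.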

Notice that by default, Quay.io repositories are private after creation. You can make them public in the settings menu of the repository. This is a default setting we plan to make configurable in the future.

Quay.io comes with a free tier which does not incur any cost and allows unlimited public container images. Subscription models are available, ranging from developers who need private repositories all the way to offerings suitable for entire organizations or companies; check out the available plans.
