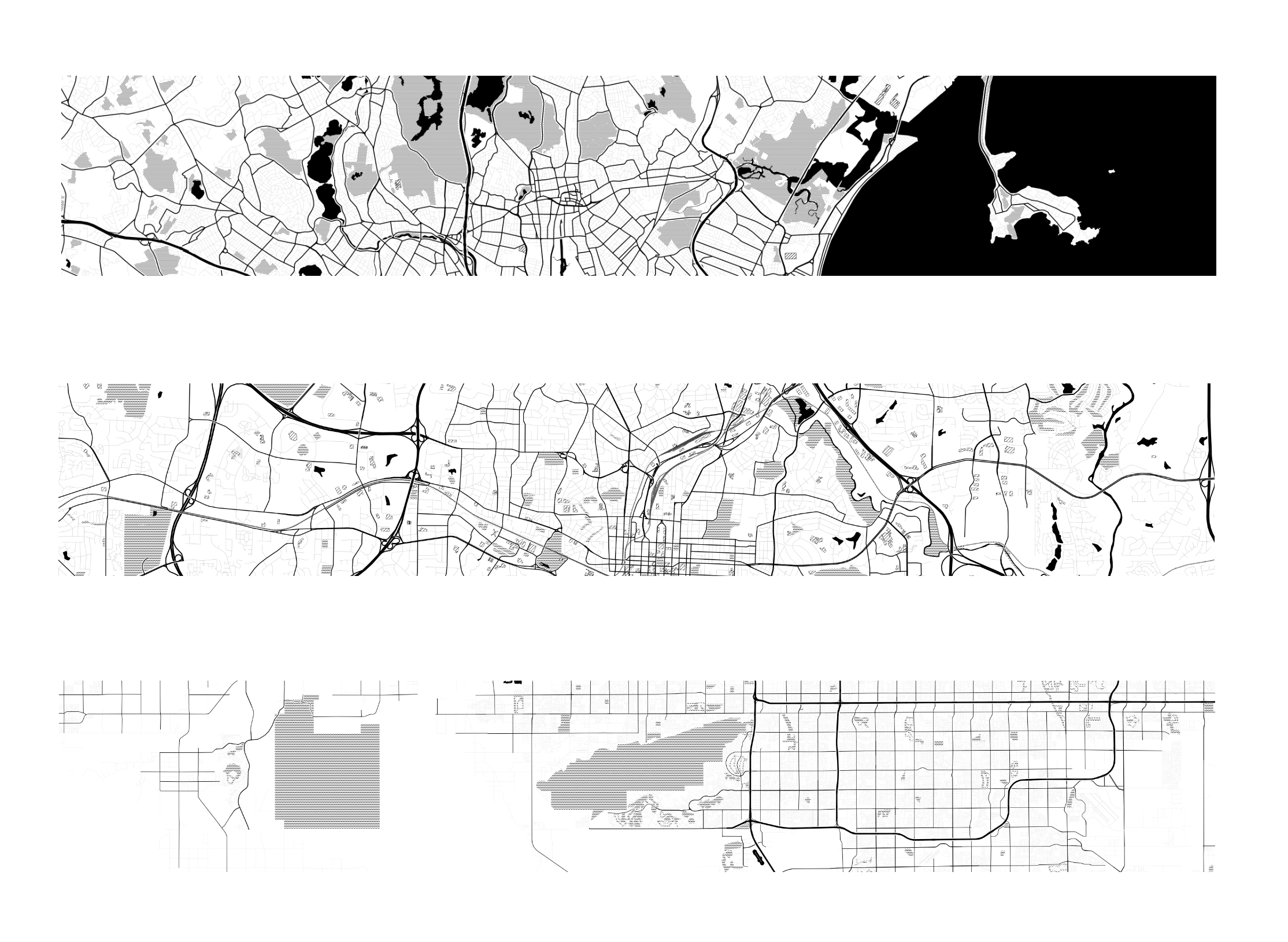

Image composed of map tiles created by Stamen Design, under CC BY 3.0. Data by OpenStreetMap, under ODbL. Map tiles are, from top to bottom, Boston, MA, Raleigh, NC, and Phoenix, AZ

According to the OpenShift installation guide,

You can deploy an OpenShift Container Platform 4 cluster to both on-premise hardware and to cloud hosting services, but all of the machines in a cluster must be in the same datacenter or cloud hosting service.

Even when they reside in a single data center or cloud region, an OpenShift cluster's nodes may span multiple failure domains, such as power domains (a subset of generator or UPS feeds in a data center) or a cloud provider's availability zones within a region. Kubernetes provides node selection and node affinity mechanisms that let applications span failure domains and keep running through a planned or unplanned outage.

Set up and label nodes

The test cluster for this demonstration includes six nodes, named wkr0, mcp0, wkr1, mcp1, wkr2, and mcp2. For illustration purposes only, we pretend these nodes span three separate data centers in three cities in two geographical regions:

$ oc label node wkr0 topology.kubernetes.io/region=us-east topology.kubernetes.io/zone=bos

$ oc label node mcp0 topology.kubernetes.io/region=us-east topology.kubernetes.io/zone=bos

$ oc label node wkr1 topology.kubernetes.io/region=us-east topology.kubernetes.io/zone=rdu

$ oc label node mcp1 topology.kubernetes.io/region=us-east topology.kubernetes.io/zone=rdu

$ oc label node wkr2 topology.kubernetes.io/region=us-mntn topology.kubernetes.io/zone=phx

$ oc label node mcp2 topology.kubernetes.io/region=us-mntn topology.kubernetes.io/zone=phx

Additionally, to demonstrate adding hardware hints to a subset of nodes, all wkr* nodes are labeled as having faster SSD storage available:

$ oc label nodes wkr0 wkr1 wkr2 disktype=ssd

Assign virtual machines to nodes

Virtual machines in OpenShift follow similar node selection and affinity criteria to Pods, with one notable exception: Pods can select a node by name by using nodeName, but this is not implemented for VirtualMachine resources. There are a number of use cases for nodeSelector and affinity rules; we will start with one of the simplest.

NodeSelector

The nodeSelector for a VirtualMachine belongs at the same level as the domain object, under the path spec.template.spec as seen here:

apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: boston
spec:
  template:
    spec:
      nodeSelector:
        topology.kubernetes.io/zone: bos
[ remainder of VM omitted ]

This nodeSelector will cause the VM to require one of the nodes labeled with the zone bos. In this case, either wkr0 or mcp0 may run this VM.

$ oc get vmi boston

NAME AGE PHASE IP NODENAME READY

boston 7m16s Running 10.129.2.107 wkr0 True
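To make the matching rule concrete, here is a minimal Python sketch of how a nodeSelector narrows the set of candidate nodes. It is a toy model, not the real scheduler: the `NODE_LABELS` dictionary and the `eligible_nodes` helper are hypothetical names introduced for illustration, and only the zone and disktype labels from the commands above are modeled.

```python
# Toy model of nodeSelector matching; not the real Kubernetes scheduler.
# Zone and disktype labels mirror the `oc label` commands above (region omitted).
ZONE = "topology.kubernetes.io/zone"
NODE_LABELS = {
    "wkr0": {ZONE: "bos", "disktype": "ssd"},
    "mcp0": {ZONE: "bos"},
    "wkr1": {ZONE: "rdu", "disktype": "ssd"},
    "mcp1": {ZONE: "rdu"},
    "wkr2": {ZONE: "phx", "disktype": "ssd"},
    "mcp2": {ZONE: "phx"},
}


def eligible_nodes(node_selector, node_labels=NODE_LABELS):
    """Return the sorted names of nodes carrying every key/value pair in node_selector."""
    return sorted(
        name
        for name, labels in node_labels.items()
        if all(labels.get(key) == value for key, value in node_selector.items())
    )


print(eligible_nodes({ZONE: "bos"}))  # ['mcp0', 'wkr0']
```

Both Boston nodes qualify, which matches the VM landing on wkr0 above while mcp0 remains available as an alternative.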

Should work need to be done on wkr0, start by cordoning and draining the node as outlined in the OpenShift Understanding node rebooting documentation:

$ oc adm cordon wkr0

node/wkr0 cordoned

$ oc adm drain wkr0 --ignore-daemonsets --delete-emptydir-data --force

node/wkr0 already cordoned

[ skipping updates of all the evicted pods ]

error when evicting pods/"virt-launcher-boston-pnbcd" -n "database" (will retry after 5s): Cannot evict pod as it would violate the pod's disruption budget.

[ skipping repeats of above message ]

pod/virt-launcher-boston-pnbcd evicted

node/wkr0 drained

While the drain command runs, it outputs error messages showing that it fails to immediately evict the VM's virt-launcher Pod. Behind the scenes, the eviction request has triggered a VM migration, which we can see afterwards with:

$ oc get vmim

NAME PHASE VMI

kubevirt-evacuation-5xg8b Succeeded boston

Next, check the migrated VM landed in the other bos node, mcp0:

$ oc get vmi

NAME AGE PHASE IP NODENAME READY

virtualmachineinstance.kubevirt.io/boston 8m27s Running 10.131.0.34 mcp0 True

To return the node to service use:

$ oc adm uncordon wkr0

The nodeSelector field could also be used with the disktype or topology.kubernetes.io/region labels or even multiple labels at once, provided the logic required is AND:

apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: boston
spec:
  template:
    spec:
      nodeSelector:
        topology.kubernetes.io/zone: bos
        disktype: ssd
[ remainder of VM omitted ]

Only the node wkr0 satisfies both nodeSelector labels, so attempts to migrate the VM result in a failed migration:

$ oc get vmi,vmim

NAME AGE PHASE IP NODENAME READY

virtualmachineinstance.kubevirt.io/boston 6m21s Running 10.129.3.182 wkr0 True

NAME PHASE VMI

virtualmachineinstancemigration.kubevirt.io/kubevirt-migrate-vm-k77r5 Failed boston
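The failed migration can be reasoned about with a small Python sketch. It is a simplified model under two assumptions: all nodeSelector entries must match (logical AND), and a migration target must be a different node from the one the VM currently runs on. The `NODE_LABELS` dictionary and `migration_targets` helper are hypothetical names for illustration only.

```python
# Toy model: which nodes could receive a migrating VM under a nodeSelector?
# Assumes AND semantics across selector entries and that the source node
# cannot also be the migration target.
ZONE = "topology.kubernetes.io/zone"
NODE_LABELS = {
    "wkr0": {ZONE: "bos", "disktype": "ssd"},
    "mcp0": {ZONE: "bos"},
    "wkr1": {ZONE: "rdu", "disktype": "ssd"},
    "mcp1": {ZONE: "rdu"},
    "wkr2": {ZONE: "phx", "disktype": "ssd"},
    "mcp2": {ZONE: "phx"},
}


def migration_targets(node_selector, current_node, node_labels=NODE_LABELS):
    """Return nodes other than current_node whose labels satisfy every selector entry."""
    return sorted(
        name
        for name, labels in node_labels.items()
        if name != current_node
        and all(labels.get(key) == value for key, value in node_selector.items())
    )


# Zone only: mcp0 can take over from wkr0.
print(migration_targets({ZONE: "bos"}, "wkr0"))                     # ['mcp0']
# Zone AND disktype: no other node qualifies, so the migration cannot succeed.
print(migration_targets({ZONE: "bos", "disktype": "ssd"}, "wkr0"))  # []
```

An empty target list is the model's equivalent of the Failed phase shown above.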

Assign virtual machines to nodes using affinity rules

When more nuanced control is required, affinity rules come into play. Affinity rules fall into three categories: nodeAffinity, podAffinity, and podAntiAffinity. The first behaves much like the nodeSelector above, but with more options. All three affinity rule categories further subdivide into preferredDuringSchedulingIgnoredDuringExecution and requiredDuringSchedulingIgnoredDuringExecution. Ignored during execution means these rules cannot affect the behavior of running VMs. In other words, changes to a node's labels while VMs are running will not cause a migration. The difference between preferred and required indicates whether the scheduler will make a best-effort attempt to schedule according to the weighted selectors (preferred), or will require that all selectors be true and fail to schedule the VM if this is impossible (required).

As an example, we can adapt the above nodeSelector with the failed migration as preferredDuringSchedulingIgnoredDuringExecution, giving a weight of 75 to staying in Boston and a weight of 50 to having an SSD disk. Weights are relative values from 1 to 100, not percentages:

apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: boston
spec:
  template:
    spec:
      affinity:
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - preference:
              matchExpressions:
              - key: topology.kubernetes.io/zone
                operator: In
                values:
                - bos
            weight: 75
          - preference:
              matchExpressions:
              - key: disktype
                operator: In
                values:
                - ssd
            weight: 50
[ remainder of VM omitted ]

As before, the VM schedules on wkr0, which is the only node that satisfies both conditions:

$ oc get vmi,vmim

NAME AGE PHASE IP NODENAME READY

virtualmachineinstance.kubevirt.io/boston 4m54s Running 10.129.3.188 wkr0 True

Now when the VM is migrated, it will allow itself to run on node mcp0 which, while it is in Boston, does not carry the SSD label:

$ oc get vmi,vmim

NAME AGE PHASE IP NODENAME READY

virtualmachineinstance.kubevirt.io/boston 11m Running 10.131.0.56 mcp0 True

NAME PHASE VMI

virtualmachineinstancemigration.kubevirt.io/kubevirt-migrate-vm-jqdl4 Succeeded boston
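The effect of the two weights can be sketched in Python. This is only a toy model of the preferred node-affinity score: it sums the weights of the preference terms a node satisfies, whereas the real scheduler normalizes that value and combines it with other scoring factors. The `NODE_LABELS`, `PREFERENCES`, `affinity_score`, and `ranked_nodes` names are hypothetical and exist only for this illustration.

```python
# Toy model of the preferred node-affinity score only; the real scheduler
# normalizes this value and combines it with other scoring plugins.
ZONE = "topology.kubernetes.io/zone"
NODE_LABELS = {
    "wkr0": {ZONE: "bos", "disktype": "ssd"},
    "mcp0": {ZONE: "bos"},
    "wkr1": {ZONE: "rdu", "disktype": "ssd"},
    "mcp1": {ZONE: "rdu"},
    "wkr2": {ZONE: "phx", "disktype": "ssd"},
    "mcp2": {ZONE: "phx"},
}
# (weight, label key, accepted values) for each preference term in the VM above.
PREFERENCES = [(75, ZONE, {"bos"}), (50, "disktype", {"ssd"})]


def affinity_score(labels, preferences=PREFERENCES):
    """Sum the weights of every preference term the node's labels satisfy."""
    return sum(weight for weight, key, values in preferences if labels.get(key) in values)


def ranked_nodes(exclude=(), node_labels=NODE_LABELS):
    """Rank nodes by descending score (name breaks ties), skipping excluded nodes."""
    scores = {
        name: affinity_score(labels)
        for name, labels in node_labels.items()
        if name not in exclude
    }
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


print(ranked_nodes()[:2])                 # [('wkr0', 125), ('mcp0', 75)]
print(ranked_nodes(exclude={"wkr0"})[0])  # ('mcp0', 75)
```

With wkr0 excluded as the migration source, mcp0 scores highest because the Boston preference (75) outweighs the SSD preference (50), which is consistent with the VM landing on mcp0 above.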

Pod affinity and anti-affinity

The podAffinity selector covers the case where a VM must stay on the same node, in the same availability zone, or in the same region as a related service. An example might be a latency-sensitive front-end application that should run close to its corresponding back-end service. The following Pod and VM definitions always place the Pod on node mcp0 and, because the topologyKey is topology.kubernetes.io/zone, allow the VM to migrate between the two bos nodes: wkr0 (preferred due to its disktype=ssd label) and mcp0.

apiVersion: v1
kind: Pod
metadata:
  name: httpd
  labels:
    app: low-latency
spec:
  nodeName: mcp0
  containers:
  - name: httpd
    image: httpd
    imagePullPolicy: IfNotPresent
---
apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: back-end
spec:
  template:
    spec:
      affinity:
        podAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - low-latency
            topologyKey: topology.kubernetes.io/zone
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - preference:
              matchExpressions:
              - key: disktype
                operator: In
                values:
                - ssd
            weight: 50
[ remainder of VM omitted ]

An example of this running would look like the following:

$ oc get vmi

NAME AGE PHASE IP NODENAME READY

back-end 12m Running 10.129.3.15 wkr0 True

$ oc get pod httpd -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

httpd 1/1 Running 0 30m 10.131.0.60 mcp0 <none> <none>

Note that changes to the Pod do not trigger an effect in the VM. As an example, we delete the httpd Pod and recreate it on mcp2:

$ oc get po httpd -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

httpd 1/1 Running 0 2m9s 10.128.3.198 mcp2 <none> <none>

The back-end VM stays running where it was, but if we migrate it now, it follows the Pod into the phx zone and lands on wkr2:

$ virtctl migrate back-end

VM back-end was scheduled to migrate

$ oc get vmi

NAME AGE PHASE IP NODENAME READY

back-end 18m Running 10.128.4.26 wkr2 True
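The role of the topologyKey in this behavior can be sketched in a few lines of Python. This is a simplified model of a required podAffinity rule only: a node is acceptable if it shares a zone with at least one node that runs a matching Pod. The `NODE_ZONES` mapping and `nodes_satisfying_pod_affinity` helper are hypothetical names for illustration.

```python
# Toy model of required podAffinity with topologyKey set to the zone label.
# A node qualifies if its zone hosts at least one Pod matching the labelSelector.
NODE_ZONES = {
    "wkr0": "bos", "mcp0": "bos",
    "wkr1": "rdu", "mcp1": "rdu",
    "wkr2": "phx", "mcp2": "phx",
}


def nodes_satisfying_pod_affinity(pod_nodes, node_zones=NODE_ZONES):
    """pod_nodes: names of nodes currently running a Pod that matches the selector."""
    zones_with_pod = {node_zones[node] for node in pod_nodes}
    return sorted(name for name, zone in node_zones.items() if zone in zones_with_pod)


print(nodes_satisfying_pod_affinity(["mcp0"]))  # ['mcp0', 'wkr0']
print(nodes_satisfying_pod_affinity(["mcp2"]))  # ['mcp2', 'wkr2']
```

Once the Pod is recreated on mcp2, only the Phoenix nodes qualify, and the SSD preference then favors wkr2 over mcp2.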

Finally, consider the case where a clustered application like a database requires three cluster members, and for maximum protection, it is desired to keep those all in separate zones. Translating this to an anti-affinity rule would look something like:

apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  name: db01
spec:
  template:
    metadata:
      labels:
        app: database
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - database
            topologyKey: topology.kubernetes.io/zone

A collection of VMs with the above anti-affinity rule and app: database labels will arrange themselves into nodes in the bos, rdu, and phx zones:

$ oc get vmi

NAME AGE PHASE IP NODENAME READY

db01 58m Running 10.130.2.173 wkr1 True

db02 58m Running 10.128.4.27 wkr2 True

db03 57m Running 10.131.0.62 mcp0 True
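A rough Python sketch shows why the three VMs end up in three different zones, and what would happen to a fourth. It is a greedy toy model, not the scheduler's actual algorithm: VMs are placed one at a time, and the choice of the alphabetically first candidate node is an arbitrary assumption, so the specific nodes differ from the output above. The `NODE_ZONES` mapping and `place_with_zone_anti_affinity` helper are hypothetical names.

```python
# Toy model of required podAntiAffinity with topologyKey set to the zone label.
# Each VM must land in a zone that does not already host a VM with the same label.
NODE_ZONES = {
    "wkr0": "bos", "mcp0": "bos",
    "wkr1": "rdu", "mcp1": "rdu",
    "wkr2": "phx", "mcp2": "phx",
}


def place_with_zone_anti_affinity(vm_names, node_zones=NODE_ZONES):
    """Greedily assign each VM to a node in a zone that is not yet occupied."""
    placement = {}
    used_zones = set()
    for vm in vm_names:
        candidates = sorted(n for n, zone in node_zones.items() if zone not in used_zones)
        if not candidates:
            placement[vm] = None  # no zone left: the VM would remain unscheduled
            continue
        node = candidates[0]  # arbitrary pick; the real scheduler scores candidates
        placement[vm] = node
        used_zones.add(node_zones[node])
    return placement


placement = place_with_zone_anti_affinity(["db01", "db02", "db03", "db04"])
print(placement)
```

In this model the first three VMs occupy bos, rdu, and phx, and a fourth VM with the same rule has nowhere to go, because the rule is required rather than preferred.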

Caveats

As mentioned above, none of the affinity rules currently have any effect during execution. In other words, a running VM continues running even if the affinity rules suggest it should move to another node.

For both nodeSelector and affinity rules, it is not possible to alter the set of rules applied to a VirtualMachineInstance and then migrate it according to the new rules. Instead, a shutdown and restart of the VM is required to propagate the changes. For a single VM, this could mean some minutes' worth of interruption. For a clustered application like a database, this still could allow an admin to work around planned maintenance or unplanned emergencies without interrupting the clustered service. Work to update the KubeVirt API to allow propagating nodeSelector and affinity rules is scheduled for a future release, and can be tracked here.

On the subject of future work, this blog was written based on version 4.11 of the OpenShift Virtualization operator. In 4.12, there will be an additional mechanism available to control the distribution of virtual machines across a cluster: topology spread constraints. The Kubernetes documentation explains how this works for Pods today.

Conclusion

Whether your goal is to avoid losing service during standard maintenance or to make sure certain VMs always have particular hardware available, node selection and affinity rules are the way to go.

For more documentation about virtual machines and node assignment, see the upstream documentation at the KubeVirt User Guide.
