When customers start their Kubernetes journey, I try to establish a GitOps approach for application deployment and cluster configuration from the beginning. I work on the infrastructure side, focusing on environment configuration: cluster configuration, Operator installation, and so on.

In this article, I will share how I deal with cluster configuration when certain Kubernetes objects depend on each other and how to use Kubernetes and Argo CD features to resolve these dependencies.

This article assumes you have installed and configured the openshift-gitops Operator, which provides Argo CD.

The idea

The basic concept is that everything should be treated as code and be deployable in a repeatable way. Using GitOps, all code is stored in Git, and from there, a GitOps agent validates and synchronizes changes to one or more clusters.

With OpenShift, Red Hat supports Argo CD using the openshift-gitops Operator. This approach provides everything needed to deploy an Argo CD instance. The only thing you need to take care of is a Git repository, whether it is GitHub, GitLab, Bitbucket, etc.

The problem

Sometimes Kubernetes objects depend on each other. This is especially true when you want to install and configure Operators, where the configuration, based on a Custom Resource Definition (CRD), can only happen after the Operator has been installed and is ready.

Why is that? When you want to deploy an Operator, you store a Subscription object in Git. Argo CD takes this object and applies it to the cluster. However, creating the Subscription object is just the first step for an Operator. Many other steps are required before the Operator is ready. Unfortunately, Argo CD cannot easily verify whether the installation was successful. All it sees is that the Subscription object has been created. It then immediately tries to deploy the custom resource that configures the Operator. This fails because the CRD behind that resource is not yet available on the system: the Operator is still installing it.

Even Argo CD features like Sync Waves would not cause Argo CD to wait until the Operator is successfully installed because, for Argo CD, "success" is the existence of the Subscription object. Custom health checks inside Argo CD also do not help.

The Argo CD synchronization process will subsequently fail. You could attempt to automatically "Retry" the sync or use multiple Argo CD applications that you execute one after the other. However, I was not fully happy with that and tried a different approach.

My solution

There are different options to solve the problem. I use a Kubernetes Job that runs some commands to verify the Operator status. Suppose I want to deploy and configure the Compliance Operator. The steps would be:

- Install the Operator.

- Wait until the Operator is ready.

- Configure Operator-specific custom resources (for example, ScanSettingBinding).

Waiting until the Operator is ready is the tricky part for Argo CD. What I have done is the following:

1. Install the Operator. This is the first step and occurs during Sync Wave 0.

2. Create a Kubernetes Job that verifies the Operator status. This Job also requires a ServiceAccount and a role with a binding. They are configured during Sync Wave 1.

Moreover, I use a Hook (another Argo CD feature) with the deletion policy HookSucceeded. This ensures that the Job, ServiceAccount, Role, and RoleBinding are removed once the status has been verified. The verification is successful when the Operator status is Succeeded. All the Job does is execute some oc commands, such as:

oc get clusterserviceversion openshift-gitops-operator.v1.8.0 -n openshift-gitops -o jsonpath='{.status.phase}'

Succeeded

3. Finally, during the next Sync Wave (2+), the custom resource can be deployed. In this case, I deploy a ScanSettingBinding object. Everything is then correctly synchronized in Argo CD, and the Operator and its configuration are in place.
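The check in step 2 can be pictured as a small polling loop around the oc query shown above. The following is a minimal sketch, not the script shipped in the helper-status-checker chart: the function name wait_for_csv, the number of attempts, and the delay are assumptions made for illustration.

```shell
#!/bin/sh
# Sketch only: wait_for_csv is a hypothetical helper, not the chart's actual script.
# Usage: wait_for_csv <csv-name> <namespace> [max-attempts] [seconds-between-attempts]
wait_for_csv() {
  csv="$1"
  ns="$2"
  attempts="${3:-30}"
  delay="${4:-10}"
  i=1
  while [ "$i" -le "$attempts" ]; do
    # Query the CSV phase; errors (for example, CSV not created yet) yield an empty phase.
    phase=$(oc get clusterserviceversion "$csv" -n "$ns" \
      -o jsonpath='{.status.phase}' 2>/dev/null || true)
    echo "attempt $i/$attempts: phase=${phase:-unknown}"
    if [ "$phase" = "Succeeded" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "CSV $csv did not reach phase Succeeded" >&2
  return 1
}
```

Inside the Job container, such a function would be called with the CSV name and the Operator namespace. A non-zero exit code lets the Job fail, which in turn marks the hook, and therefore the sync, as failed instead of letting the next Sync Wave start too early.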

This approach helps me to install and configure any Operator in one Argo CD Application. While it is not 100% the GitOps way, the Job that is executed is repeatable.

You can find more information about Sync Waves and Hooks in the official Argo CD documentation: Sync Phases and Waves.

View the process in action

Prerequisites:

- OpenShift cluster 4.x.

- openshift-gitops is installed and ready to be used.

- Access to GitHub (or your own repository).

As an example repository for Helm charts, I am using https://charts.stderr.at/ and from there, the following charts:

- compliance-operator-full-stack

- helper-operator (sub chart): Responsible for installing the Operators.

- helper-status-checker (sub chart): Responsible for checking the Operator status (and maybe approve the InstallPlan if required).

Argo CD Application

I have the following Application configured in Argo CD:

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: in-cluster-install-compliance-scans
  namespace: openshift-gitops
spec:
  destination:
    namespace: default
    server: 'https://kubernetes.default.svc'  # <1>
  info:
    - name: Description
      value: Deploy and configure the Compliance Scan Operator
  project: in-cluster
  source:
    path: charts/compliance-operator-full-stack  # <2>
    repoURL: 'https://github.com/tjungbauer/helm-charts'
    targetRevision: main

1. Installing on the local cluster where Argo CD is installed.

2. Git configuration, including path and revision.

The Application would synchronize the objects:

- Subscription

- OperatorGroup

- Namespace (openshift-compliance)

- ScanSettingBinding (custom resource)

Where are the objects needed for the Job? They do not show up here because they exist only during the Sync hook. They appear while the Job is running and disappear again after the Operator status is verified.

Helm Chart configuration

The Helm Chart gets its configuration from a values file. You can verify the whole file on GitHub.

The key point is that some variables are handed over to the appropriate Sub Charts.

Operator configuration

This part is handed over to the Chart helper-operator.

helper-operator:
  operators:
    compliance-operator:
      enabled: true
      syncwave: '0'
      namespace:
        name: openshift-compliance
        create: true
      subscription:
        channel: release-0.1
        approval: Automatic
        operatorName: compliance-operator
        source: redhat-operators
        sourceNamespace: openshift-marketplace
      operatorgroup:
        create: true
        notownnamespace: true

It is executed during Sync Wave 0 and defines whether a Namespace (openshift-compliance) shall be created (true) and the specification of the Operator, which you need to know upfront:

- channel: Defines which channel will be used. Some operators offer different channels.

- approval: Either Automatic or Manual. It defines whether the Operator will be updated automatically or require approval.

- operatorName: The actual Operator name (compliance-operator).

- source: The catalog source this Operator comes from (redhat-operators).

- sourceNamespace: In this case, openshift-marketplace.

You can fetch these values by looking at the PackageManifest:

oc get packagemanifest compliance-operator -o yaml
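Instead of reading the full YAML output, the individual values can be pulled out with jsonpath expressions. The sketch below assumes the usual PackageManifest status fields (packageName, defaultChannel, catalogSource, catalogSourceNamespace, channels); the function name print_subscription_values is made up for this example.

```shell
#!/bin/sh
# Sketch only: print_subscription_values is a hypothetical helper name.
# Usage: print_subscription_values <package-name>
print_subscription_values() {
  pkg="$1"
  # Each jsonpath expression reads one field from the PackageManifest status.
  echo "operatorName:    $(oc get packagemanifest "$pkg" -o jsonpath='{.status.packageName}')"
  echo "channel:         $(oc get packagemanifest "$pkg" -o jsonpath='{.status.defaultChannel}')"
  echo "source:          $(oc get packagemanifest "$pkg" -o jsonpath='{.status.catalogSource}')"
  echo "sourceNamespace: $(oc get packagemanifest "$pkg" -o jsonpath='{.status.catalogSourceNamespace}')"
  echo "all channels:    $(oc get packagemanifest "$pkg" -o jsonpath='{.status.channels[*].name}')"
}
```

Calling print_subscription_values compliance-operator prints one line per value. Note that the line labeled channel shows the default channel, which is not necessarily the one you want to subscribe to, so compare it against the list of all channels.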

Status checker configuration

This part is handed over to the Helm sub chart helper-status-checker. The main values here are the operatorName and the namespace where the Operator is installed.

What is not visible here is the Sync Wave, which is set to 1 by default in the Helm Chart. You can configure it in this section if you need to override it.

helper-status-checker:
  enabled: true  # <1>
  approver: false  # <2>
  # use the value of the currentCSV (packagemanifest) but WITHOUT the version !!
  operatorName: compliance-operator  # <3>
  # where operator is installed
  namespace:
    name: openshift-compliance  # <4>
  serviceAccount:  # <5>
    create: true
    name: "sa-compliance"

1. Is the status checker enabled or not?

2. Enable/disable automatic InstallPlan approver.

3. The name of the operator as it is reported by the value currentCSV inside the PackageManifest.

4. The namespace where the Operator has been installed.

5. The name of the ServiceAccount that is created temporarily.

The operatorName here is sometimes different from the Operator name required for the helper-operator chart. It seems that the value of currentCSV must be used, but without the version number. The Job looks up the version itself.
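To find that value, you can read currentCSV for the chosen channel and cut off the version. The sketch below assumes that the CSV name follows the common <name>.v<version> pattern, which is a convention rather than a guarantee; both function names are hypothetical.

```shell
#!/bin/sh
# Sketch only: current_csv and csv_base_name are hypothetical helper names.

# Print the currentCSV of one channel of a PackageManifest.
# Usage: current_csv <package-name> <channel>
current_csv() {
  oc get packagemanifest "$1" \
    -o jsonpath="{.status.channels[?(@.name==\"$2\")].currentCSV}"
}

# Strip the version suffix from a CSV name of the form <name>.v<version>.
# Usage: csv_base_name <csv-name>
csv_base_name() {
  # %% removes the longest suffix that starts with ".v" followed by a digit.
  printf '%s\n' "${1%%.v[0-9]*}"
}
```

For example, csv_base_name "$(current_csv compliance-operator release-0.1)" would print the name to use as operatorName, provided the CSV name follows the assumed pattern. A name without a version suffix is returned unchanged.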

Operator CRD configuration

The final section of the values file manages the configuration for the Operator itself. This section does not use a sub chart. Instead, the variables are used in the main chart. In this example, only a ScanSettingBinding is configured, during Sync Wave 3. That is all that's needed for basic functionality.

compliance:
  scansettingbinding:
    enabled: true
    syncwave: '3'  # <1>
    profiles:  # <2>
      - name: ocp4-cis-node
      - name: ocp4-cis
    scansetting: default

1. Define the Sync Wave. This value must be higher than the Sync Wave of the helper-status-checker.

2. ScanSettingBinding configuration. Two profiles are used in this example.

Here is a full example of the values file: https://github.com/tjungbauer/helm-charts/blob/main/charts/compliance-operator-full-stack/values.yaml

Synchronizing Argo CD

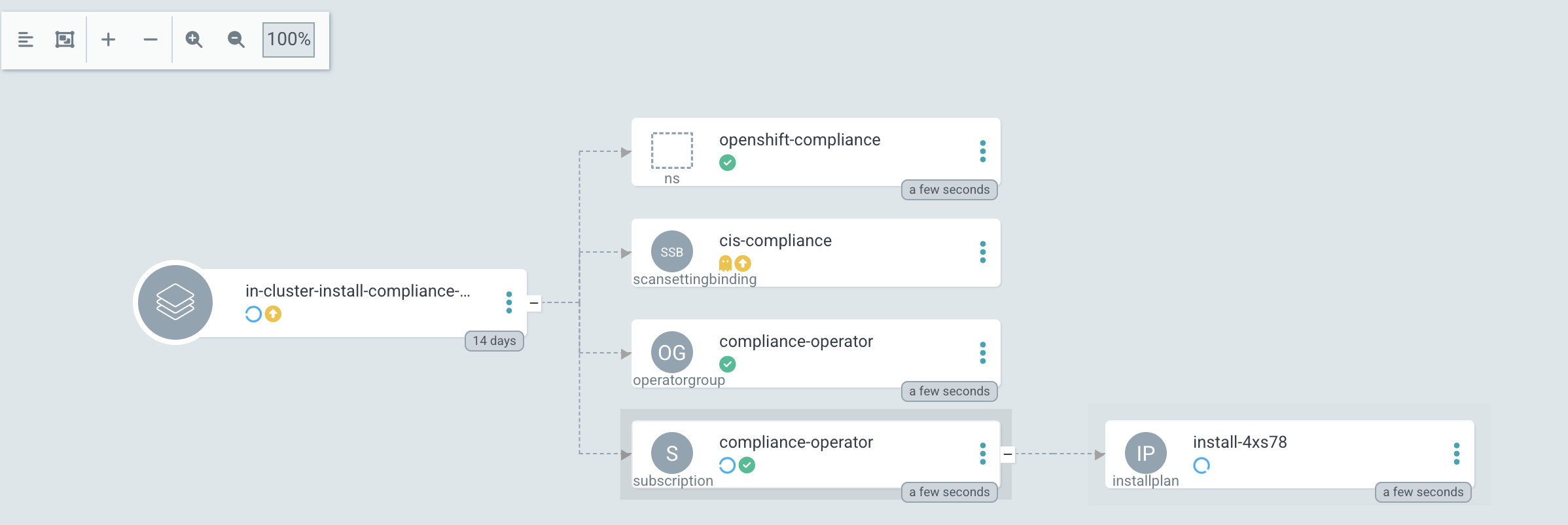

1. Basic Application in Argo CD before synchronization:

2. Sync Wave 0: Synchronization has started. Namespace and Subscription are deployed.

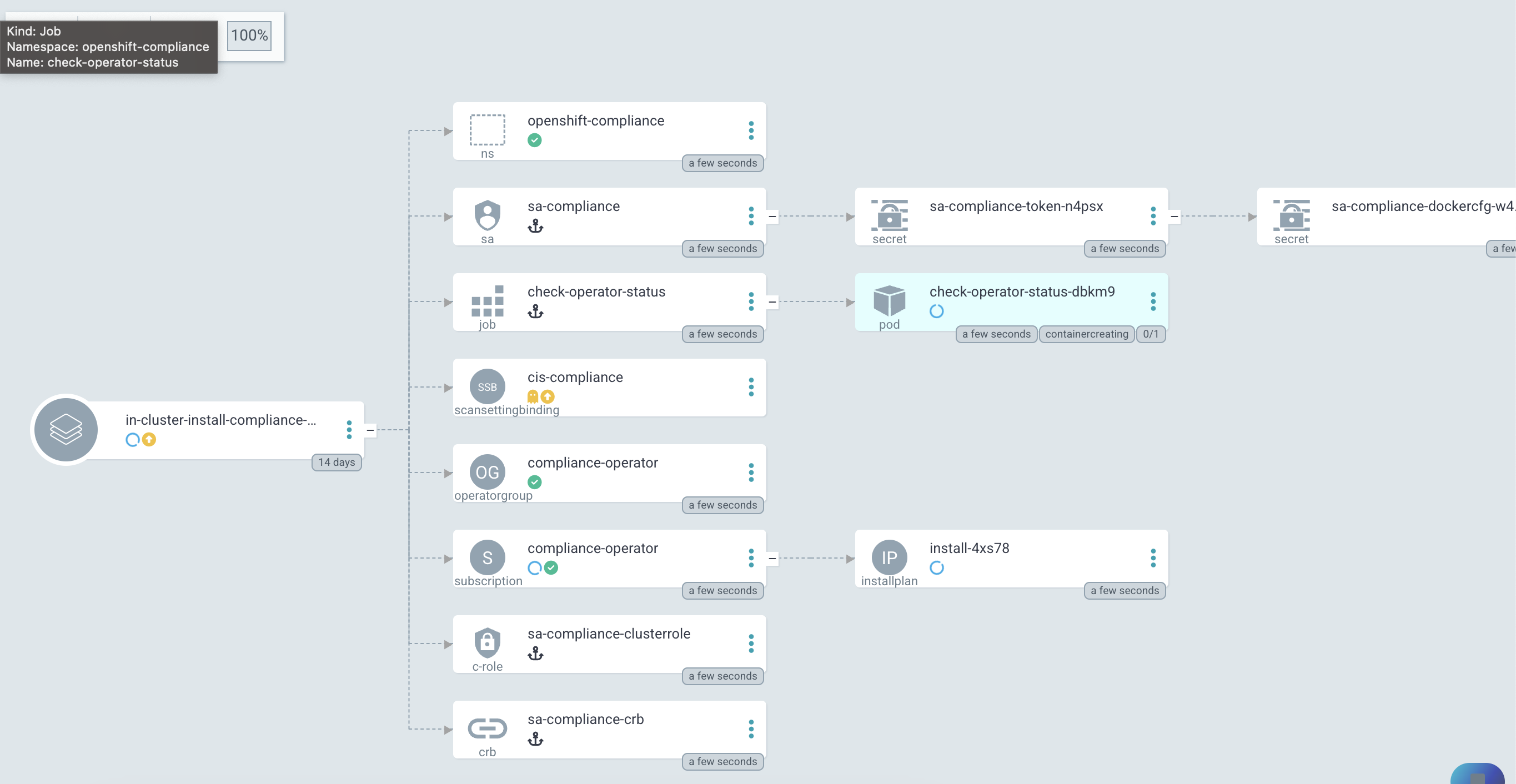

3. Sync Wave 1: Status Checker Job has started and tries to verify the Operator.

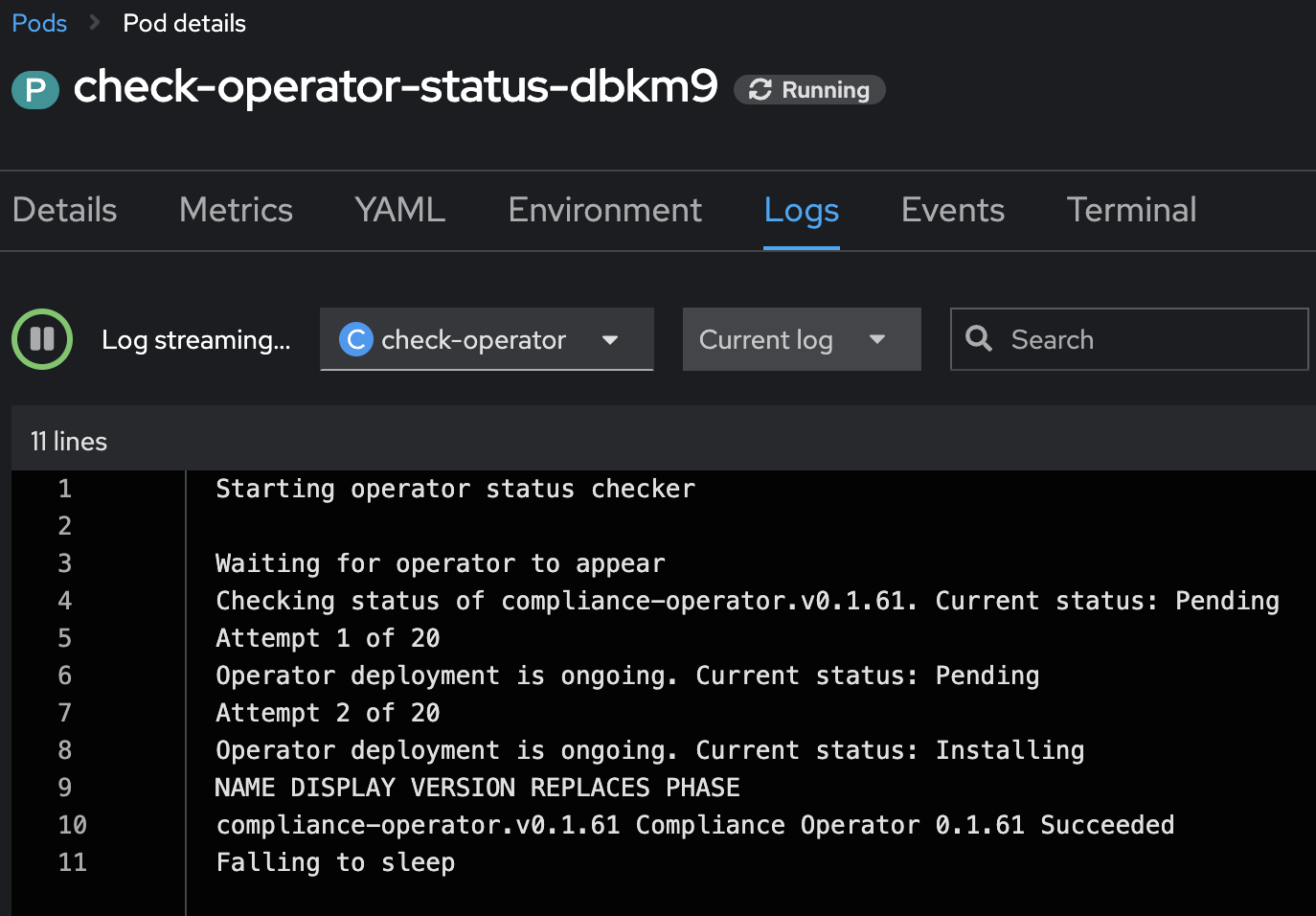

4. The log output of the Operator. You can see that the status switches from Pending to Installing to Succeeded.

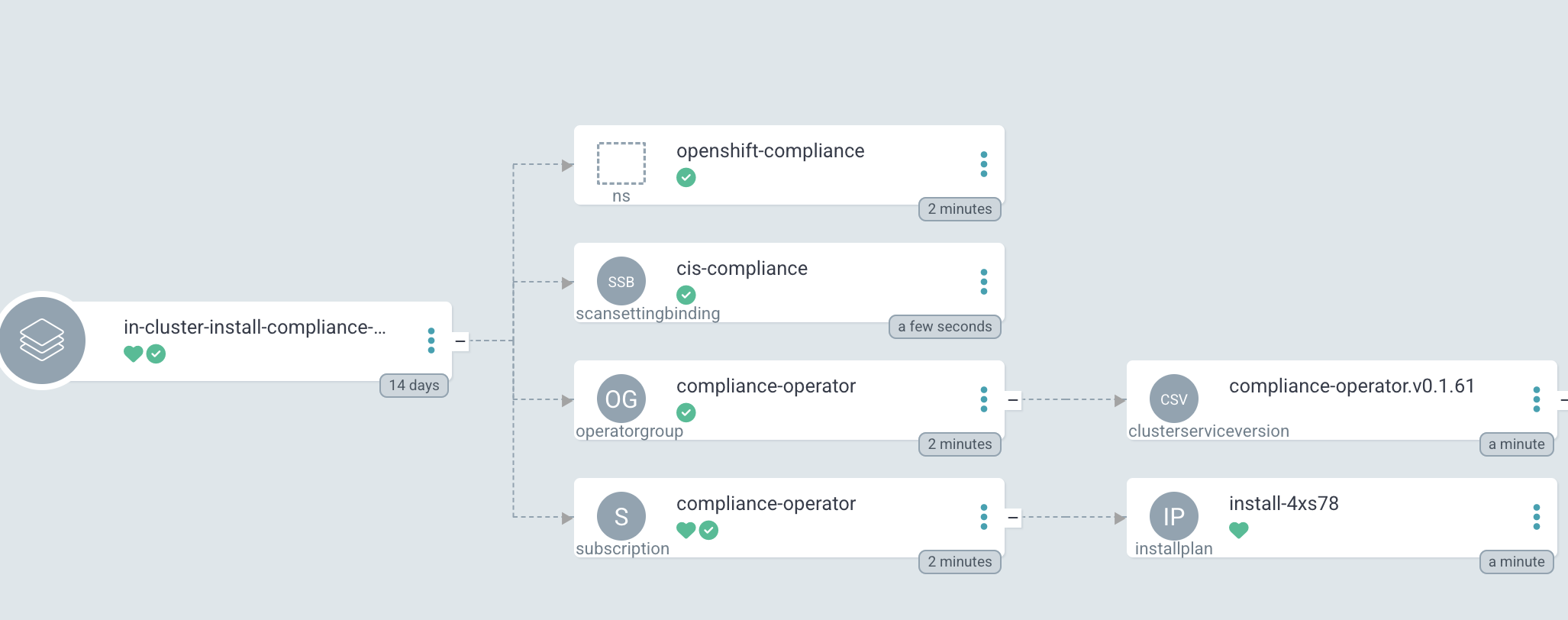

5. After Sync Wave 3, the whole Application was synchronized, and the Checker Job was removed.
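The same end state can also be checked from the command line. This is a minimal sketch under the assumption that the Application, the ScanSettingBinding, and the checker Job live in the namespaces used in this article; the function name show_sync_result is hypothetical.

```shell
#!/bin/sh
# Sketch only: show_sync_result is a hypothetical helper name.
# Usage: show_sync_result <application> <argocd-namespace> <operator-namespace>
show_sync_result() {
  app="$1"
  app_ns="$2"
  target_ns="$3"
  # Sync and health status as recorded on the Argo CD Application resource.
  oc get applications.argoproj.io "$app" -n "$app_ns" \
    -o jsonpath='{.status.sync.status}{" / "}{.status.health.status}{"\n"}'
  # The custom resource created in the last Sync Wave.
  oc get scansettingbinding -n "$target_ns"
  # With the HookSucceeded deletion policy, the checker Job should no longer be listed.
  oc get jobs -n "$target_ns"
}
```

For the example in this article, the call would be show_sync_result in-cluster-install-compliance-scans openshift-gitops openshift-compliance.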

Wrap up

This is one way to install and configure an Operator using a single Argo CD Application. It works on different installations and for different setups. Other options might still be feasible, like using retries or multiple Applications. I prefer this solution since I only manage a single App.
