We’re pleased to announce Red Hat OpenShift 4.14 is now generally available. Based on Kubernetes 1.27 and CRI-O 1.27, this latest version accelerates modern application development and delivery across the hybrid cloud while keeping security, flexibility, and scalability at the forefront.

In this blog, we cover the top highlights of Red Hat OpenShift 4.14. A comprehensive list of the Red Hat OpenShift 4.14 innovations and updates can be found in the OpenShift 4.14 Release Notes.

What’s New in Red Hat OpenShift 4.14 Infographic by Sunil Malagi

Optimize TCO via Hosted Control Planes for On-Premises Multi-cluster Deployments

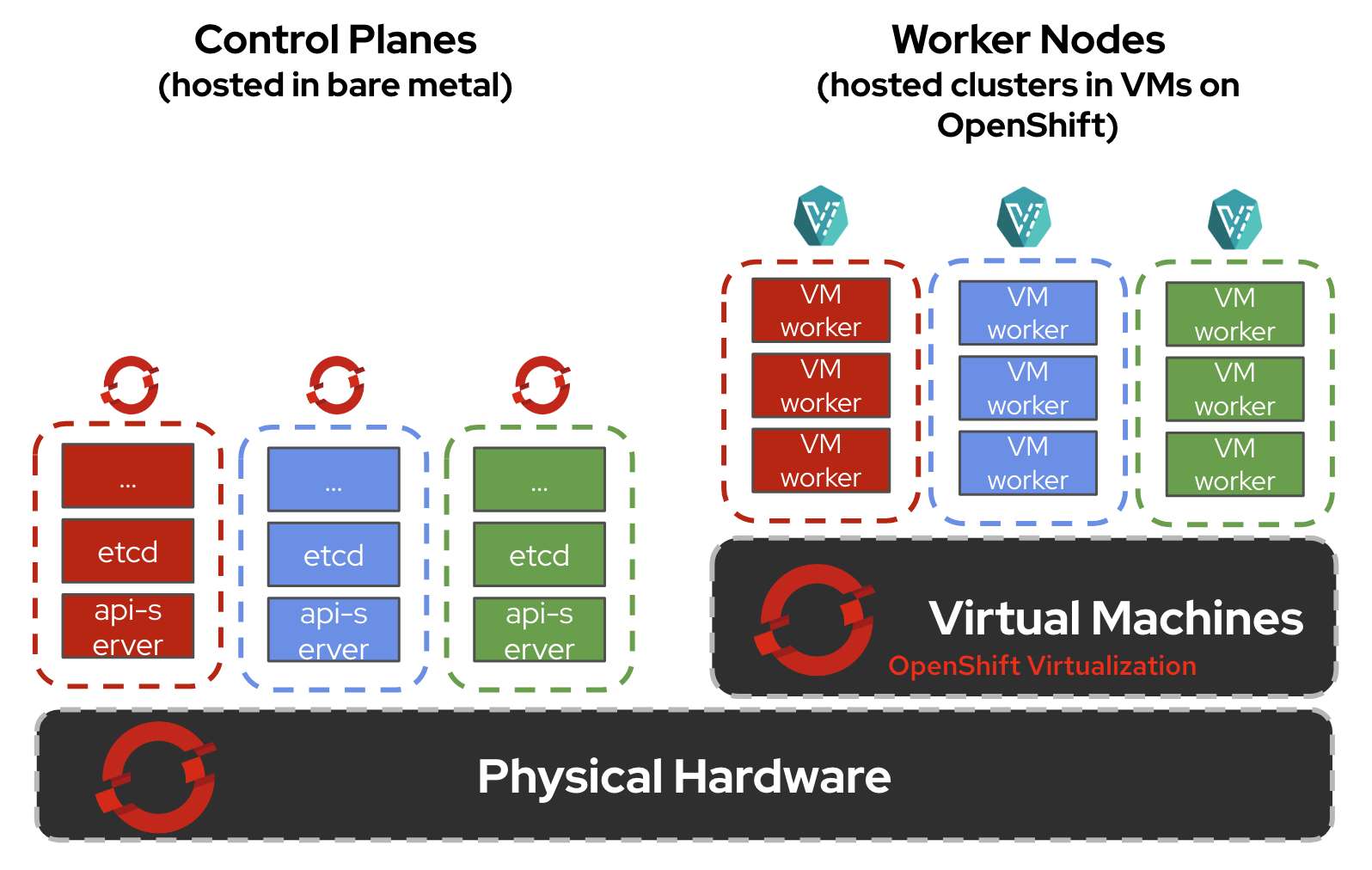

We’re pleased to announce hosted control planes on bare metal is generally available in this release. With hosted control planes, you can achieve 3x infrastructure cost savings by hosting multiple cluster control planes as workloads on the hosting service's cluster nodes, along with provisioning that is twice as fast, increased reliability, and greater resiliency. Unlike a standalone cluster, where some of the Kubernetes services in the control plane run as systemd services, hosted control planes are deployed as just another workload: each is placed in its own dedicated namespace and can be scheduled on any available node. Hosted control planes on bare metal is enabled through the multicluster engine for Kubernetes operator version 2.4.

In addition, hosted control planes with OpenShift Virtualization will be generally available in the coming weeks. This lets you run hosted control planes and OpenShift Virtualization virtual machines on the same underlying base OpenShift cluster. The combination of hosted control planes with OpenShift Virtualization lets you run and manage virtual machine workloads alongside container workloads, while taking advantage of more efficient resource utilization by packing multiple hosted control planes and hosted clusters in the same underlying bare metal infrastructure.

Hosted control planes on bare metal with OpenShift Virtualization

24-Month Red Hat OpenShift Lifecycle for Extended Update Support Releases on All Architectures

Previously, we announced that Red Hat OpenShift Extended Update Support (EUS) releases were supported for 24 months for OpenShift deployments that use the x86_64 architecture, beginning with Red Hat OpenShift 4.12 and continuing on all subsequent even-numbered releases. Beginning with OpenShift Container Platform 4.14, the 24-month EUS term also covers the 64-bit ARM, IBM Power (ppc64le), and IBM Z (s390x) platforms and will continue on all subsequent even-numbered releases. More information on Red Hat OpenShift EUS is available in OpenShift Life Cycle and OpenShift EUS Overview.
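The eligibility rule described above can be sketched as a small lookup. This is only an illustration of the rule as stated in this post, not an official support matrix: the function name, the architecture keys (including `aarch64` as a label for 64-bit ARM), and the restriction to 4.x versions are assumptions made for the example.

```python
# First 4.x minor release with the 24-month EUS term, per architecture,
# as described in this post. The keys are illustrative labels (assumption).
EUS_24_MONTH_START = {
    "x86_64": 12,
    "aarch64": 14,   # 64-bit ARM
    "ppc64le": 14,   # IBM Power
    "s390x": 14,     # IBM Z
}


def has_24_month_eus(version: str, arch: str) -> bool:
    """Return True if a 4.x release on `arch` falls under the 24-month EUS term.

    Hypothetical helper: it encodes only the rule stated in the post
    (even-numbered minor releases, starting at a per-architecture minor).
    """
    major, minor = (int(part) for part in version.split(".")[:2])
    start_minor = EUS_24_MONTH_START.get(arch)
    if major != 4 or start_minor is None:
        return False  # outside the scope of this sketch
    return minor % 2 == 0 and minor >= start_minor


if __name__ == "__main__":
    for version, arch in [("4.12", "x86_64"), ("4.12", "s390x"), ("4.14", "s390x")]:
        print(version, arch, has_24_month_eus(version, arch))
```

Always check the OpenShift Life Cycle page for the authoritative dates before planning an upgrade path.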

Secure your Infrastructure and Workloads

Red Hat OpenShift 4.14 continues to reinforce secure application development across the hybrid cloud with new security features and enhancements:

- Config maps and secrets can now be shared across OpenShift namespaces via the OpenShift Shared Resource CSI Driver. This enables you to more easily provide entitlements to Red Hat products as images are built.

- You can now bring your existing AWS security groups in conjunction with your existing Amazon Virtual Private Cloud (VPC) and apply them to your control plane and compute machines in your own OpenShift cluster in AWS.

- For self-managed OpenShift on Azure, you can now create and manage OpenShift clusters with temporary, limited-privilege credentials. Use Azure managed identities for Azure resources for authentication in conjunction with Azure Active Directory (AD) workload identities to securely access Azure cloud resources. See Configuring an Azure cluster to use short-term credentials for details.

- For confidential computing in the public cloud, installing a cluster on Google Cloud Platform (GCP) using Confidential VMs is now generally available. You can also install a cluster on Azure using Confidential VMs, which is available as a Technology Preview.

- Ingress Node Firewall Operator is now generally available.

- We are excited to introduce the Secrets Store CSI (SSCSI) Driver Operator as a Technology Preview. The SSCSI Driver Operator integrates with secret storage systems such as AWS Secrets Manager, AWS Systems Manager Parameter Store, and Azure Key Vault, which customers widely use to securely manage application secrets. This operator is aimed at security-conscious customers who require secrets and sensitive data to be managed in a centralized secrets management solution that meets their regulatory requirements around encryption and access. Such a solution is typically managed outside of OpenShift clusters, so customers need to pull secrets into the cluster for application use. The SSCSI driver mounts external secrets into the application pod as ephemeral in-line volumes, so that when the pod is deleted, the volume and secret content do not persist in the cluster. The SSCSI driver needs an SSCSI provider to integrate with the external secret stores, and we look forward to working with our partners to bring the end-to-end solution in future releases.

- You can use security context constraints (SCCs) to control permissions for the pods in your cluster. Default SCCs are created during installation, as well as when you install Operators or other OpenShift platform components. As a cluster administrator, you can create your own SCCs by using the OpenShift CLI (oc). However, these custom SCCs can sometimes lead to preemption issues that result in the following:

  - Modifications of out-of-the-box SCCs can cause core workloads to malfunction.

  - Addition of new higher priority SCCs that overrule existing pinned out-of-the-box SCCs during SCC admission can cause core workloads to malfunction.

- Secure token usage with the oc CLI: When you log in with an access token, pasting the token on the command line with the oc login --token command is insecure. The token is recorded in the Bash history and can be exposed with a simple “ps” command. Moreover, the token is searchable by any system administrator. To deliver a secure option for CLI-based logins to a user’s OpenID Connect (OIDC) identity provider, we’ve added the oc login --web command in this release. This command launches a web browser in which the user logs in to their OIDC identity provider securely. Once login is successful, the user can run oc commands to access the OpenShift APIs.

- The Security Technical Implementation Guide (STIG) from the U.S. Defense Information Systems Agency (DISA STIG) is now available for Red Hat OpenShift 4. In conjunction, the Compliance Operator includes the DISA-STIG profile, as well as the latest CIS 1.4 benchmark for OpenShift.

- To protect your Red Hat OpenShift environments and Kubernetes services in all major cloud providers, such as Amazon Elastic Kubernetes Service (EKS), Microsoft Azure Kubernetes Service (AKS), and Google Kubernetes Engine (GKE), you can take advantage of Red Hat Advanced Cluster Security Cloud Service, which includes a complimentary 60-day trial. Red Hat Advanced Cluster Security Cloud Service is a fully managed software-as-a-service offering that protects containerized applications and Kubernetes across the full application lifecycle: build, deploy, and runtime.
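To make the Secrets Store CSI item in the list above more concrete, here is a minimal sketch of a pod manifest that mounts external secrets through an ephemeral in-line CSI volume. It builds the manifest as a Python dictionary so it can be printed or adapted. The pod name, image, mount path, and `SecretProviderClass` name are hypothetical placeholders, and the sketch assumes a provider and a matching `SecretProviderClass` are already installed in the cluster, which this post does not cover.

```python
import json

# Driver name registered by the Secrets Store CSI driver.
SSCSI_DRIVER = "secrets-store.csi.k8s.io"


def pod_with_external_secrets(pod_name, image, provider_class,
                              mount_path="/mnt/secrets-store"):
    """Build a pod manifest with an in-line (ephemeral) CSI secrets volume.

    All argument values are illustrative; `provider_class` must name a
    SecretProviderClass that exists in the pod's namespace.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "containers": [{
                "name": "app",
                "image": image,
                # The secret content appears as read-only files under mount_path.
                "volumeMounts": [{
                    "name": "secrets-store",
                    "mountPath": mount_path,
                    "readOnly": True,
                }],
            }],
            "volumes": [{
                "name": "secrets-store",
                # An in-line CSI volume lives and dies with the pod, so the
                # mounted secret content is not kept once the pod is deleted.
                "csi": {
                    "driver": SSCSI_DRIVER,
                    "readOnly": True,
                    "volumeAttributes": {"secretProviderClass": provider_class},
                },
            }],
        },
    }


if __name__ == "__main__":
    manifest = pod_with_external_secrets(
        "demo-app", "registry.example.com/demo:latest", "demo-provider")
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be applied like any other manifest. Because the feature is a Technology Preview in this release, treat the sketch as a starting point for experimentation rather than a production pattern.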

Modernize your Virtual Machines with Red Hat OpenShift Virtualization in AWS and ROSA

Red Hat OpenShift Virtualization is now supported on AWS and Red Hat OpenShift Service on AWS (ROSA). You can bring your virtual machines (VMs) using the Migration Toolkit for Virtualization and run your VMs side-by-side with containers on AWS or ROSA, while simplifying management and improving time to production. This lets you preserve your existing virtualization investments, while taking advantage of the simplicity and speed of a modern application platform.

Improved Scaling and Stability with Open Virtual Network (OVN) Optimizations

Red Hat OpenShift 4.14 introduces an optimization of Open Virtual Network Kubernetes (OVN-K): its internal architecture was modified to reduce operational latency and remove barriers to the scale and performance of the networking control plane. Network flow data is now localized on every cluster node instead of being held centrally by a heavyweight control plane. This reduces operational latency and cluster-wide traffic between worker and control plane nodes, so cluster networking scales linearly with node count, which benefits larger clusters. Because network flow is localized on every node, RAFT leader election of control plane nodes is no longer needed, and a primary source of instability is removed. An additional benefit of localized network flow data is that the effect of any single node loss on networking is limited to the failed node and has no bearing on the rest of the cluster’s networking, thereby making the cluster more resilient to failure scenarios. See OVN-Kubernetes architecture for more information.

Boost AI and Graphics Workloads with Red Hat OpenShift and NVIDIA L40S and NVIDIA H100

Red Hat and NVIDIA have partnered to unlock the power of AI for every business by delivering an end-to-end enterprise platform optimized for AI workloads. As part of that work, we’re pleased to announce support for the NVIDIA L40S and NVIDIA H100 GPU accelerators with Red Hat OpenShift across the hybrid cloud. Together, Red Hat OpenShift and NVIDIA help power next-generation data center workloads, such as generative artificial intelligence (AI) and large language model (LLM) inference and training, intelligent chatbots, graphics, and video processing applications.

VMware vSphere Container Storage Interface (CSI) Automatic Migration

Existing OpenShift on VMware vSphere clusters that were initially deployed with Red Hat OpenShift 4.12 or earlier will need to undergo the vSphere CSI migration after upgrading to Red Hat OpenShift 4.14. The vSphere CSI migration is automatic and seamless. Migration does not change how you use any of your existing API objects, such as persistent volumes, persistent volume claims, and storage classes.

Cloud native sustainability for Red Hat OpenShift

Monitoring and optimizing power consumption in Kubernetes environments is crucial for efficient resource management. To address this need, OpenShift 4.14 includes the Developer Preview of power monitoring for Red Hat OpenShift. Power monitoring is the product realization of Kepler, the Kubernetes-based Efficient Power Level Exporter. Available at the OperatorHub on OpenShift, it uses eBPF to compute and export granular power consumption for Pods, Namespaces and Nodes. It provides data measured from Intel’s Running Average Power Limit (RAPL) or Advanced Configuration and Power Interface (ACPI), together with the ability to add pre-trained models for setups in which this information is not available. By leveraging this model, Kepler provides estimations of workload energy consumption in the public cloud and in private data centers, aiding in future steps towards resource planning and optimization.
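As a small worked example of how exported energy data turns into a power figure, the sketch below converts two samples of a cumulative energy counter into average power. It assumes the exporter publishes energy as an ever-increasing counter in joules, which is an assumption not stated in this post, and the function name and sample values are made up for illustration.

```python
def average_power_watts(joules_start: float, joules_end: float,
                        seconds: float) -> float:
    """Average power over an interval, from two cumulative energy readings.

    Power (W) = energy consumed (J) / elapsed time (s).
    Hypothetical helper; the counter semantics are an assumption.
    """
    if seconds <= 0:
        raise ValueError("interval must be positive")
    if joules_end < joules_start:
        # A cumulative counter only goes down if it was reset (e.g. a restart).
        raise ValueError("counter went backwards; it was probably reset")
    return (joules_end - joules_start) / seconds


if __name__ == "__main__":
    # Illustrative numbers: 300 J consumed by a pod over a 60 s window.
    print(average_power_watts(1200.0, 1500.0, 60.0))  # 5.0
```

The same arithmetic applies whether the counter is reported per pod, per namespace, or per node; only the scope of the readings changes.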

OpenShift across the Hybrid Cloud

You can now centrally deploy and manage on-premises bare metal clusters from Red Hat Advanced Cluster Management (RHACM) running in AWS, Azure, and Google Cloud. This hybrid cloud solution extends the reach of your central management interface to deliver bare metal clusters into restricted environments. In addition, RHACM features an improved user experience for deploying OpenShift in Nutanix, expanding the range of partnerships providing metal infrastructure where you need it.

For resiliency across the hybrid cloud, Red Hat OpenShift Data Foundation (ODF) 4.14 introduces general availability for regional disaster recovery (DR) for Red Hat OpenShift workloads. Coupled with RHACM, we address business continuity needs for stateful workloads and enable administrators to provide DR solutions for geographically distributed clusters. Asynchronous replication can be set at the application level of granularity to achieve the right Recovery Point Objective (RPO) and Recovery Time Objective (RTO) for mission critical workloads.

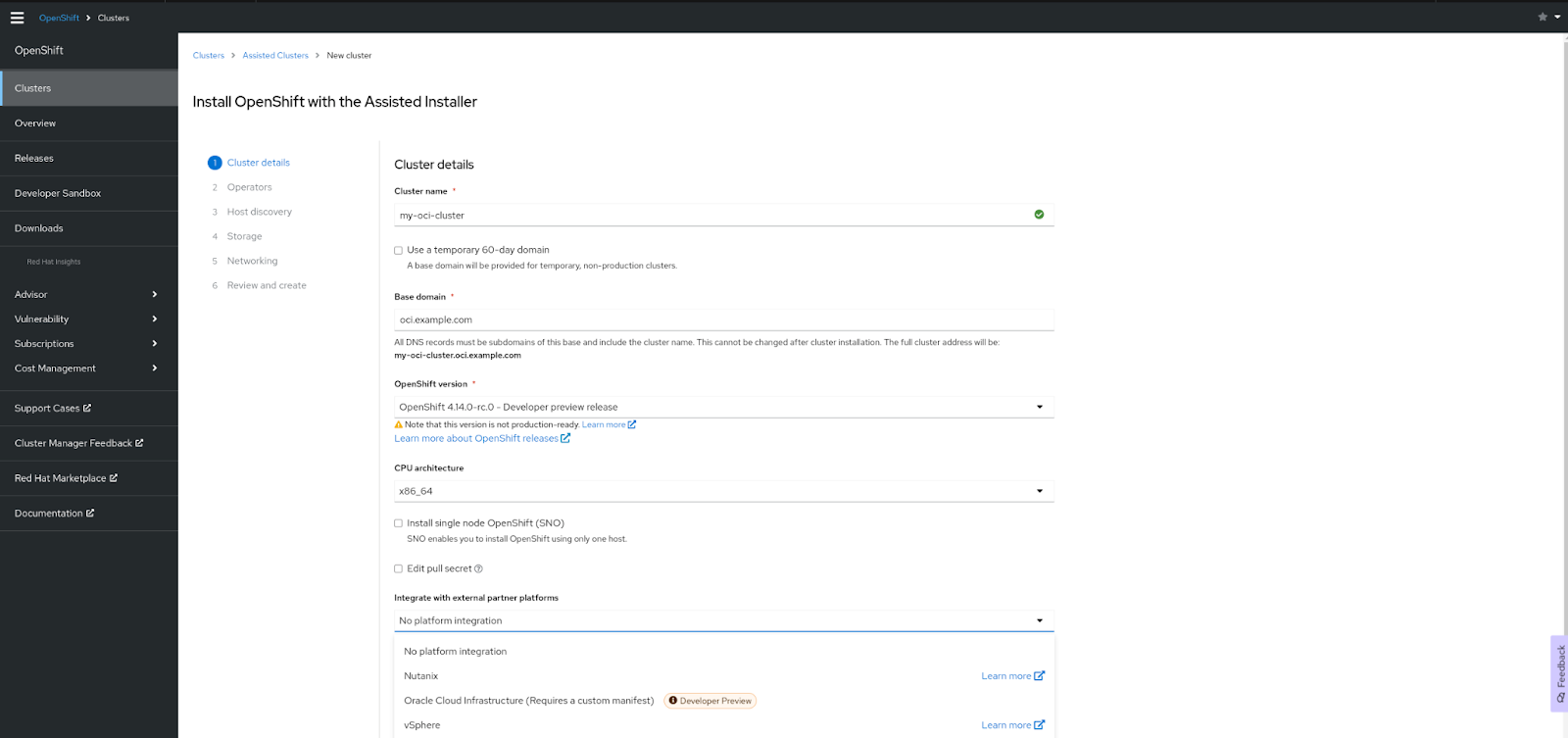

We’re adding the capability to deploy OpenShift on Oracle Cloud Infrastructure, which is available as a Developer Preview. You can deploy OpenShift on Oracle Cloud Infrastructure using the Assisted Installer for connected deployments or the Agent-based Installer for restricted network deployments. Request access to try OpenShift on Oracle Cloud Infrastructure by filling out this form.

Red Hat OpenShift on Oracle Cloud Infrastructure (Developer Preview)

This release includes MicroShift 4.14, which is used in Red Hat Device Edge. MicroShift enables deployments at the far edge. It provides enterprise-ready, lightweight Kubernetes container orchestration, extending operational consistency across edge and hybrid cloud environments no matter where devices are deployed in the field. Being lightweight is critical in supporting different use cases and workloads on small, resource-constrained devices at the farthest edge. It balances the efficiency of low resource utilization with the familiar tools and processes that Kubernetes users are already accustomed to, as a natural extension of their Kubernetes environments. Example use scenarios include assembly lines, IoT gateways, points of sale/information, and vehicle systems that have to operate with limited computing resources, power, cooling, and connectivity.

Try Red Hat OpenShift 4.14 Today

Get started today at https://console.redhat.com/ and take advantage of the latest features and enhancements. To find out what’s next, check out the following resources:

- What’s New and What’s Next in Red Hat OpenShift

- OpenShift YouTube Channel

- OpenShift Blogs

- OpenShift Commons community

- Red Hat Developer Blogs

- Red Hat Portfolio Architecture Center

Thank you for reading about what’s new in Red Hat OpenShift 4.14. Please comment or send us feedback through your Red Hat contacts, or create an issue on OpenShift in GitHub.

About the author

Ju Lim works on the core Red Hat OpenShift Container Platform for hybrid and multi-cloud environments to enable customers to run Red Hat OpenShift anywhere. Ju leads the product management teams responsible for installation, updates, provider integration, and cloud infrastructure.
