At Red Hat, performance and scale are treated as first-class citizens, and a lot of time and effort goes into making sure our products scale. We have a dedicated team of performance and scale engineers who work closely with product management, developers, and quality engineering to identify performance regressions, provide product performance data and guidance to customers, develop tuning recommendations, and test Red Hat OpenStack Platform deployments at scale. As much as possible, we test our products to match real-world use cases and scale.

In the past, we had scale tested director-based Red Hat OpenStack Platform deployments to about 300 bare metal overcloud nodes in external labs with the help of customers and partners. While the tests helped surface issues, when trying to go past 300 nodes we were more often limited by hardware availability than by product scale.

Over the last few years, we have built capabilities internally to scale test Red Hat OpenStack Platform deployments with more than 500 bare metal nodes. We hope to test, identify and fix issues before our customers run at this scale. While there is no theoretical limit to the number of nodes that can be deployed and run by Red Hat OpenStack Platform director, the sizing and configuration of the environment, including the undercloud and overcloud controllers, can affect scale, which is why it is important to run these kinds of tests.

In the past few weeks, the performance and scale team successfully completed an enormous effort to deploy, run and test Red Hat OpenStack Platform 13, which is our current Long Term Supported Release, at a scale of 510 bare metal nodes, all deployed and managed through Red Hat OpenStack Platform director.

So, how did we do it?

Red Hat OpenStack Director

The Red Hat OpenStack Platform director is a toolset for installing and managing a complete OpenStack environment. Director is based primarily on the OpenStack project TripleO, which is an abbreviation of "OpenStack-On-OpenStack." This project consists of OpenStack components that you can use to install a fully operational OpenStack environment. This includes Red Hat OpenStack Platform components that provision and control bare metal systems to use as Red Hat OpenStack Platform nodes.

Over the years, director has grown to be a versatile, highly customizable (including configuring with Ansible) and robust deployment tool serving a wide variety of customer use cases, be it for general purpose cloud, NFV or Edge. The director host is also known as the “undercloud” and is used to deploy and manage the “overcloud,” which is the actual workload cloud.

Hardware

We used a total of 10 different models of Dell and Supermicro servers, spanning from Ivy Bridge to Skylake and with different NIC layouts, to get to this scale. All were deployed using the Composable Roles feature of director, which makes deploying across non-homogeneous nodes easy.

For the undercloud host, which hosts director, we used a Supermicro 1029P Skylake server with 32 cores/64 threads and 256 GB of memory. Similar Supermicro 1029P machines were also used for the Red Hat OpenStack Platform controllers, which host the APIs and other clustered services such as Galera, RabbitMQ and HAProxy. The compute nodes were a mix of all 10 available server varieties.

Testing

Our goal was simple: to get to as many compute nodes as possible while identifying and debugging issues. In the process, we tried several tunings to determine their impact on scale and performance. We also ran control plane tests once we achieved a 500+ node overcloud.

- Deploy an undercloud sized for a large-scale overcloud deployment.
  - Set network ranges large enough for the control plane subnet.
  - Use a 10G NIC for provisioning.
- Deploy a bare minimum of 3 overcloud controllers and 1 compute node of each server variety to validate flavors/nic-configs.
- Deploy and scale up compute nodes incrementally using the default method of deployment (the 500+ bare metal nodes were not all available at once; they were provided incrementally).
- Once at the 500+ overcloud node mark, measure how much time it takes to add a compute node to a large, pre-existing overcloud.
- Run control plane tests, such as creating a large number of networks/routers and VMs, after the final scale-out of the overcloud (reaching 500+ nodes).
- Delete the overcloud stack.
- Repeat the scale deployment test using config-download (Technology Preview for Red Hat OpenStack Platform 13 using Ansible configuration).
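To make "large enough" concrete for the control plane subnet, here is a minimal Python sketch that checks whether a given range can hold a target node count. The CIDRs, the `reserved` headroom and the function name are illustrative assumptions, not values from our deployment.

```python
import ipaddress


def subnet_fits(cidr: str, node_count: int, reserved: int = 20) -> bool:
    """Return True if `cidr` has room for `node_count` nodes.

    `reserved` is a hypothetical headroom for the undercloud itself,
    virtual IPs and introspection addresses; adjust it for your environment.
    """
    network = ipaddress.ip_network(cidr, strict=True)
    # Subtract the network and broadcast addresses from the total.
    usable = network.num_addresses - 2
    return usable >= node_count + reserved


if __name__ == "__main__":
    # Example ranges only; neither is taken from the test environment.
    for cidr in ("192.168.24.0/24", "192.168.0.0/22"):
        print(cidr, subnet_fits(cidr, node_count=510))
```

With these example values, a /24 is too small for 510 nodes while a /22 leaves room to grow, which is why the range should be decided before the undercloud is installed.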

Findings

With the out-of-the-box configuration/tuning for the undercloud, we were able to scale up to an overcloud of 252 nodes.

Scaling up from 252 to 367 nodes through a stack update was when we first started seeing issues, as captured in this bug. The update would not progress and failed with the following reason: “RemoteError: resources.UpdateConfig: Remote error: DiscoveryFailure Could not determine a suitable URL for the plugin”.

Upon closer investigation, it was evident that Keystone requests from Heat were timing out. By default, we deploy 12 Keystone admin and 12 Keystone main workers on the undercloud, which was not enough to handle all of the authentication requests from the nested stacks in such a large overcloud stack. We intentionally limit the Keystone workers to 12, as each additional worker consumes not only additional memory but also persistent connections to the database. However, in large-scale clusters like this, it makes sense to increase the number of Keystone workers as needed.

We raised the number of Keystone admin workers to 32 and main workers to 24. We also had to enable caching with memcached to improve Keystone performance. Even after our tweaks, Keystone admin was consuming only 3-4 GB of memory and Keystone main was consuming 2-3 GB, so we determined that these are safe tunings without memory consumption tradeoffs for large-scale clusters.
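As a rough sanity check on those numbers, the following Python sketch divides the observed memory ranges (3-4 GB across 32 admin workers, 2-3 GB across 24 main workers) by the worker counts. It is simple arithmetic on the figures reported in this post, and the helper name is our own.

```python
def per_worker_mb(total_gb: float, workers: int) -> float:
    """Average resident memory per worker process, in MB (1 GB = 1024 MB)."""
    return total_gb * 1024 / workers


# Observed ranges from this test: (low GB, high GB, worker count).
observed = {
    "keystone-admin": (3.0, 4.0, 32),
    "keystone-main": (2.0, 3.0, 24),
}

for name, (low_gb, high_gb, workers) in observed.items():
    low = per_worker_mb(low_gb, workers)
    high = per_worker_mb(high_gb, workers)
    print(f"{name}: {low:.0f}-{high:.0f} MB per worker")
```

Both services land at roughly 85-128 MB per worker, which is small next to the 256 GB available on the undercloud host.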

After these changes, the scale up to 367 nodes succeeded. On further scale ups, we ran into a few issues with connection timeouts to Keystone and DBDeadlock errors, which were patched to increase the number of retries.
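The general idea of retrying on a deadlock can be sketched in a few lines of Python. This is not the code from the patches mentioned above: `DBDeadlock` here is a stand-in exception class, and `retry_on_deadlock` and `make_flaky` are hypothetical helpers written only for illustration.

```python
import time


class DBDeadlock(Exception):
    """Stand-in for a database deadlock error (hypothetical class)."""


def retry_on_deadlock(func, max_retries=5, base_delay=0.0):
    """Call `func`, retrying on DBDeadlock up to `max_retries` extra times."""
    attempt = 0
    while True:
        try:
            return func()
        except DBDeadlock:
            if attempt >= max_retries:
                raise
            attempt += 1
            # Linear backoff between attempts; 0 keeps the demo instant.
            time.sleep(base_delay * attempt)


def make_flaky(failures):
    """Return a function that raises DBDeadlock `failures` times, then succeeds."""
    state = {"left": failures}

    def operation():
        if state["left"] > 0:
            state["left"] -= 1
            raise DBDeadlock("simulated deadlock")
        return "committed"

    return operation


if __name__ == "__main__":
    print(retry_on_deadlock(make_flaky(3), max_retries=5))
```

Raising the retry budget lets a transient deadlock resolve itself instead of failing the whole stack update, at the cost of a longer wait when the contention is persistent.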

Once we finished deploying with Heat/os-collect-config (default method), and running some control plane tests at the 500+ node overcloud scale, we deleted the stack and retried deploying using config-download (the Ansible-based configuration).

In OpenStack Queens, director/TripleO defaults to using an agent called os-collect-config that runs on each overcloud node. This agent periodically polls the undercloud Heat API for software configuration changes that need to be applied to the node. The os-collect-config agent runs os-refresh-config and os-apply-config as needed whenever new software configuration changes are detected.

This model is a “pull” style model given each node polls the Heat API and pulls changes, then applies them locally. With the Ansible-based config-download, director/TripleO has switched to a “push” style model. Heat is still used to create the stack (and all of the Red Hat OpenStack resources) but Ansible is used to replace the communication and transport of the software configuration deployment data between Heat and the Heat agent (os-collect-config) on the overcloud nodes.

Instead of os-collect-config running on each overcloud node and polling for deployment data from Heat, the Ansible control node applies the configuration by running ansible-playbook with an Ansible inventory file and a set of playbooks and tasks.
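The difference between the two models can be illustrated with a toy Python simulation. It does not use the Heat or Ansible APIs; `ConfigStore` and `Node` are hypothetical stand-ins, and the three polling rounds are an arbitrary choice. It only counts how many reads hit the central store in each model.

```python
class ConfigStore:
    """Toy stand-in for the Heat API that holds the desired configuration."""

    def __init__(self):
        self.version = 0
        self.requests = 0  # number of reads served

    def publish(self):
        self.version += 1

    def fetch(self):
        self.requests += 1
        return self.version


class Node:
    """Toy overcloud node that records the last configuration it applied."""

    def __init__(self, name):
        self.name = name
        self.applied = 0

    def poll(self, store):
        # Pull: the node itself asks the store, whether or not anything changed.
        latest = store.fetch()
        if latest != self.applied:
            self.applied = latest

    def apply(self, version):
        # Push: the control node hands the configuration to the node.
        self.applied = version


def pull_rounds(store, nodes, rounds):
    for _ in range(rounds):
        for node in nodes:
            node.poll(store)
    return store.requests


def push_once(store, nodes):
    version = store.fetch()  # a single read by the control node
    for node in nodes:
        node.apply(version)
    return store.requests


if __name__ == "__main__":
    for label, runner in (("pull", lambda s, n: pull_rounds(s, n, rounds=3)),
                          ("push", push_once)):
        store = ConfigStore()
        nodes = [Node(f"compute-{i}") for i in range(510)]
        store.publish()
        print(label, "reads against the store:", runner(store, nodes))
```

In this simplified model, the pull approach generates load on the central API that grows with both node count and polling frequency, while the push approach reads the configuration once and moves the per-node work to the control node.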

While running with config-download, we ran into issues that made the deployment slower than with the Heat/os-collect-config method and caused timeouts during the SSH enablement workflow. Both issues were fixed in collaboration with the development teams, which allowed the deployment to progress.

Tuning

To achieve the 500-plus overcloud node scale, a lot of tuning needed to be done to the undercloud. Several of these tunings should be available as a default on or around the time this is published as we continue our effort to ship the product with out-of-the-box tuning for scale. However, there are a few other cases, like RPC timeouts, in which tuning only makes sense at scale.

Here is the summary of the tunings that we did on the undercloud to get to the 500+ node scale.

Keystone

- In /etc/httpd/conf.d/10-keystone_wsgi_admin.conf:
  - WSGIDaemonProcess keystone_admin display-name=keystone-admin group=keystone processes=32 threads=1 user=keystone
    - Admin processes: 32
    - Admin threads: 1
- In /etc/httpd/conf.d/10-keystone_wsgi_main.conf:
  - WSGIDaemonProcess keystone_main display-name=keystone-main group=keystone processes=24 threads=1 user=keystone
    - Main processes: 24
    - Main threads: 1
- Keystone processes do not take a substantial amount of memory, so it is safe to increase the process count. Even with 32 admin worker processes, Keystone admin takes around 3-4 GB of memory, and with 24 processes, Keystone main takes around 2-3 GB of RSS memory.
- Enable caching using memcached.
  - The token subsystem is Red Hat OpenStack Platform Identity’s most heavily used API. As a result, all types of tokens benefit from caching, including Fernet tokens. Although Fernet tokens do not need to be persisted, they should still be cached for optimal token validation performance.
  - [cache]
    - enabled = true
    - backend = dogpile.cache.memcached
- Set the notification driver to noop in /etc/keystone/keystone.conf to ease pressure on RabbitMQ (we recommend not using telemetry; this can also be done by setting enable_telemetry to false in undercloud.conf before the undercloud install).
  - driver=noop
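To double-check the worker settings after editing the Apache configuration, a small Python helper can parse the WSGIDaemonProcess directives shown above. This is only a sketch: the function name is our own, and it handles just the simple `key=value` form used in these two lines.

```python
def parse_wsgi_daemon(line: str) -> dict:
    """Parse a WSGIDaemonProcess directive into its name and key=value options."""
    tokens = line.split()
    if len(tokens) < 2 or tokens[0] != "WSGIDaemonProcess":
        raise ValueError("not a WSGIDaemonProcess directive")
    options = dict(tok.split("=", 1) for tok in tokens[2:] if "=" in tok)
    return {"name": tokens[1], **options}


ADMIN = ("WSGIDaemonProcess keystone_admin display-name=keystone-admin "
         "group=keystone processes=32 threads=1 user=keystone")
MAIN = ("WSGIDaemonProcess keystone_main display-name=keystone-main "
        "group=keystone processes=24 threads=1 user=keystone")

if __name__ == "__main__":
    total = 0
    for line in (ADMIN, MAIN):
        parsed = parse_wsgi_daemon(line)
        total += int(parsed["processes"])
        print(parsed["name"], "processes:", parsed["processes"],
              "threads:", parsed["threads"])
    print("total Keystone worker processes:", total)
```

With the tuned values, the two directives add up to 56 Keystone worker processes on the undercloud, compared with 24 in the default configuration.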

Heat

- In /etc/heat/heat.conf:
  - num_engine_workers=48
  - executor_thread_pool_size = 48
  - rpc_response_timeout=1200
- Enable caching in /etc/heat/heat.conf:
  - [cache]
    - backend = dogpile.cache.memcached
    - enabled = true
    - memcache_servers = 127.0.0.1
- Set the notification driver to noop in /etc/heat/heat.conf to ease pressure on RabbitMQ (we recommend not using telemetry; this can also be done by setting enable_telemetry to false in undercloud.conf before the undercloud install).
  - driver=noop
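One way to confirm that the Heat tunings are in place is to compare the configuration file against the recommended values. The sketch below uses Python's configparser purely for illustration (the services themselves read these files with oslo.config), and it assumes the three worker and timeout options live in the [DEFAULT] section, which the list above does not state. The sample text is made up for the demo.

```python
import configparser

# Recommended values from this post; the [DEFAULT] placement is an assumption.
RECOMMENDED = {
    ("DEFAULT", "num_engine_workers"): "48",
    ("DEFAULT", "executor_thread_pool_size"): "48",
    ("DEFAULT", "rpc_response_timeout"): "1200",
    ("cache", "enabled"): "true",
    ("cache", "backend"): "dogpile.cache.memcached",
    ("cache", "memcache_servers"): "127.0.0.1",
}

# Hypothetical file contents with two settings left untuned.
SAMPLE = """\
[DEFAULT]
num_engine_workers = 48
executor_thread_pool_size = 48
rpc_response_timeout = 60

[cache]
enabled = true
backend = dogpile.cache.memcached
"""


def find_mismatches(conf_text, recommended=RECOMMENDED):
    """Return {(section, key): current_value} for settings that differ."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    mismatches = {}
    for (section, key), want in recommended.items():
        have = parser.get(section, key, fallback=None)
        if have != want:
            mismatches[(section, key)] = have
    return mismatches


if __name__ == "__main__":
    for (section, key), have in find_mismatches(SAMPLE).items():
        print(f"[{section}] {key}: found {have!r}")
```

To check a real file, read its contents and pass the text to `find_mismatches`; an empty result means every listed setting matches.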

MySQL

- In /etc/my.cnf.d/galera.cnf:
  - [mysqld]
    - innodb_buffer_pool_size = 5G

Neutron

- Set the notification driver to noop in /etc/neutron/neutron.conf to ease pressure on RabbitMQ (we recommend not using telemetry; this can also be done by setting enable_telemetry to false in undercloud.conf).
  - notification_driver=noop

Ironic

- In /etc/ironic/ironic.conf:
  - sync_power_state_interval = 180
- This reduced the frequency at which ironic-conductor CPU usage peaks.

Mistral

- In /etc/mistral/mistral.conf:
  - [DEFAULT]
    - rpc_response_timeout=600
- For config-download, execution_field_size_limit_kb in /etc/mistral/mistral.conf needs to be raised:
  - [engine]
    - execution_field_size_limit_kb=32768

Nova

- In /etc/nova/nova.conf, disable notifications/telemetry to ease pressure on RabbitMQ on the undercloud (we recommend not using telemetry; this can also be done by setting enable_telemetry to false in undercloud.conf).
  - driver=noop

On top of the above tunings, we recommend that Ironic Node Cleaning be enabled (by setting clean_nodes=true in undercloud.conf) and root device hints be used when overcloud nodes have more than one disk.

Observations

-

Using Heat/os-collect-config method (default) we eventually scaled out to 510 overcloud nodes comprised of 3 controllers and 507 compute nodes. The resulting overcloud had close to 20,000 cores.

-

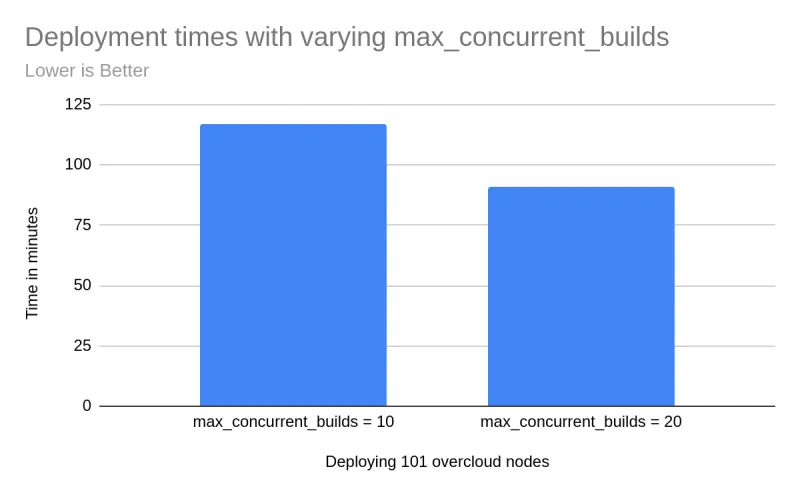

During deployment, the value of

max_concurrent_buildscan be tweaked on the undercloud innova.conf. This can result in faster deployment times provided that you have a performant provisioning interface (10Gb recommended). When deploying 101 overcloud nodes, we observed that it takes significantly less time whenmax_concurrent_buildswas set to20instead of the default10.

-

To add 1 compute node to a 500+ node overcloud using the default Heat/os-collect-config method, the stack update took approximately 68 minutes. The time taken for stack update as well as the amount of CPU resources consumed by the heat-engine can be significantly cut down by passing the

--skip-deploy-identifierflag to the overcloud deploy which prevents puppet from running on previously deployed nodes where no changes are required. In this example, the time taken to add 1 compute node was reduced from 68 to 61 minutes along with reduced CPU usage byheat-engine. -

While running control plane scalability tests on the large overcloud by creating 1000s of networks/routers/VMs we ran into Out of Memory errors on the controllers. Based on this testing, we were able to establish that the number of HA neutron routers that can be created on any overcloud is limited by the available memory on the controllers.

-

Config-Download was only tested up to 200 overcloud nodes.
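Two of the numbers above can be put in perspective with a little arithmetic. The Python sketch below uses a deliberately simplified model in which nodes are built in fully serialized batches of max_concurrent_builds, which is an assumption on our part since real scheduling overlaps builds. It also computes the relative saving from --skip-deploy-identifier using the 68 and 61 minute figures reported here.

```python
import math


def provisioning_waves(nodes: int, max_concurrent_builds: int) -> int:
    """Number of serialized build batches needed (simplified model)."""
    return math.ceil(nodes / max_concurrent_builds)


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100


if __name__ == "__main__":
    for limit in (10, 20):
        print(f"max_concurrent_builds={limit}: "
              f"{provisioning_waves(101, limit)} waves for 101 nodes")
    print(f"stack update time saved: {percent_reduction(68, 61):.1f}%")
```

Under this model, doubling max_concurrent_builds cuts the 101-node deployment from 11 batches to 6, and skipping the deploy identifier saves about 10% of the stack update time when adding a single node.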

What’s next?

With this effort to scale test Red Hat OpenStack Platform director, we successfully uncovered and resolved issues that we believe will improve the overall scalability of the platform. We filed a total of 17 bugs and more than 19 patches have been proposed to 7 OpenStack projects (keystone, keystoneauth, tripleo-heat-templates, heat, tripleo-common, instack-undercloud and tripleo-ansible) as a direct result of this effort, improving the product scalability as well as customer experience. Open source benefits everyone!

Our next long term release is Red Hat OpenStack Platform 16, which is planned to include the improvements from the findings described here and will be further tested for performance and scale, aiming at a larger number of nodes.

About the authors

Sai is a Senior Engineering Manager at Red Hat, leading global teams focused on the performance and scalability of Kubernetes and OpenShift. With over a decade of deep expertise in infrastructure benchmarking, cluster tuning, and real-world workload optimization, Sai has driven critical initiatives to ensure OpenShift scales seamlessly across diverse deployment footprints, including bare metal, cloud, and edge environments.

Sai and his team are committed to making Kubernetes the premier platform for running mission-critical workloads across industries. A strong advocate for open source, he has played a pivotal role in bringing performance and scale testing tools like kube-burner into the CNCF ecosystem. He holds 8 U.S. patents in systems and performance engineering and has published at leading conferences focused on systems performance and cloud infrastructure.

Sai is passionate about mentoring engineers, fostering open collaboration, and advancing the state of performance engineering within the cloud-native community.
