Today, Red Hat announced a developer preview of Red Hat Enterprise Linux AI (RHEL AI), a foundation model platform to seamlessly develop, test and run best-of-breed, open source Granite generative AI models to power enterprise applications. RHEL AI is based on the InstructLab open source project and combines open source-licensed Granite large language models from IBM Research and InstructLab model alignment tools, based on the LAB (Large-scale Alignment for chatBots) methodology, in an optimized, bootable RHEL image to simplify server deployments.

The main objective of RHEL AI and the InstructLab project is to empower domain experts to contribute directly to Large Language Models with knowledge and skills. This allows domain experts to more efficiently build AI-infused applications (such as chatbots). RHEL AI supports this goal by:

- taking advantage of community innovation via open source models and open source skills and knowledge for training

- providing user-friendly software tools and a workflow that target domain experts without data science experience and allow them to do training and fine-tuning

- packaging software and operating system with optimized AI hardware enablement

- offering enterprise support and intellectual property indemnification

Background

Large Language Models (LLMs) and services based on them (like GPT and ChatGPT) are well-known and increasingly adopted by enterprise organizations. These models are most often closed source or released under a custom license. More recently, a number of open models have started to appear (like Mistral, Llama, OpenELM). However, the openness of some of these models is limited (for example: limitations on commercial use and/or lack of openness when it comes to training data, transformer weights and other factors related to reproducibility). Perhaps the most important factor is the absence of ways in which communities can collaborate and contribute to the models to improve them.

LLMs today are large and general-purpose. Red Hat envisions a world of purpose-built, cost- and performance-optimized models, surrounded by world-class MLOps tooling that places data privacy, sovereignty, and confidentiality at the forefront.

The training pipeline to fine-tune LLMs requires data science expertise, and can be expensive: both in terms of resource usage for training, and also because of the cost of high-quality training data.

Red Hat (together with IBM and the open source community) proposes to change that. We propose to introduce the familiar open source contributor workflow and associated concepts like permissive licensing (e.g. Apache 2.0) to models, along with the tools for open collaboration that enable a community of users to create and add contributions to LLMs. This will also empower an ecosystem of partners to deliver offerings and value to enable extensions and the incorporation of protected information by enterprises.

Introducing Red Hat Enterprise Linux AI!

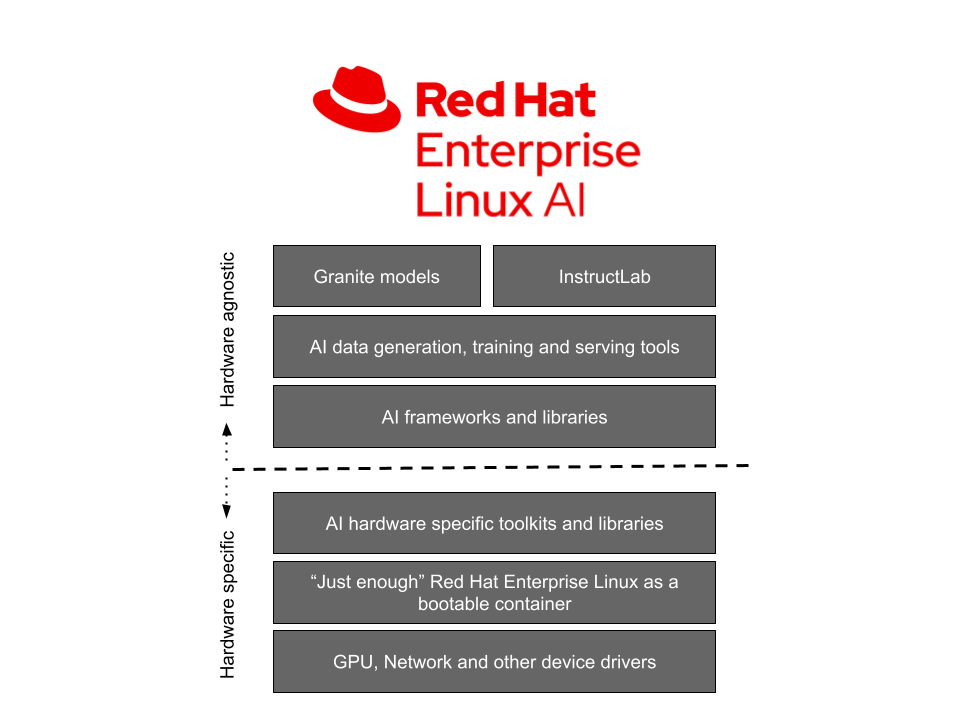

Red Hat Enterprise Linux AI comprises four distinct foundational components:

1. Open Granite models

RHEL AI includes highly performant, open source licensed, collaboratively developed Granite language and code models from the InstructLab community, fully supported and indemnified by Red Hat. These Granite models are Apache 2.0 licensed and provide transparent access to data sources and model weights.

Users can create their own custom LLM by training the base models with their own skills and knowledge. They can choose to either share the trained model and the added skills and knowledge with the community or keep them private. See more on that in the next section.

For the developer preview, users have access to the Granite 7B English language (base) model, and the corresponding Granite 7B LAB model (see below for more on LAB).

In the future, Red Hat Enterprise Linux AI will also include additional Granite models including the Granite code model family. Stay tuned for that.

2. InstructLab model alignment

LAB (Large-Scale Alignment for ChatBots) is a novel approach to instruction alignment and fine-tuning of large language models that is driven by a taxonomy and uses high-quality synthetic data generation. In simpler terms, it allows users to customize an LLM with domain-specific knowledge and skills. InstructLab then generates high-quality synthetic data that is used to train the LLM. A replay buffer is used to keep the model from forgetting what it has already learned. See section 3.3 of the academic paper for more details.
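To make the replay idea concrete, here is a minimal Python sketch that mixes a sample of earlier training data into a new training set, so the model keeps seeing old material while it learns new material. This is a generic illustration of replay, not the procedure described in the LAB paper. The function name `mix_with_replay`, the `replay_fraction` parameter and the toy data are all assumptions made for the example.

```python
import random


def mix_with_replay(new_samples, replay_buffer, replay_fraction=0.25, seed=0):
    """Return a shuffled training set of new samples plus replayed old ones.

    replay_fraction is the number of replayed samples expressed as a
    fraction of len(new_samples). Hypothetical helper, for illustration only.
    """
    rng = random.Random(seed)
    # Never ask for more replayed samples than the buffer holds.
    n_replay = min(len(replay_buffer), round(len(new_samples) * replay_fraction))
    replayed = rng.sample(replay_buffer, n_replay)
    mixed = list(new_samples) + replayed
    rng.shuffle(mixed)
    return mixed


# Toy data: strings stand in for instruction/response training pairs.
old_data = [f"old-{i}" for i in range(100)]
new_data = [f"new-{i}" for i in range(8)]

batch = mix_with_replay(new_data, old_data, replay_fraction=0.25)
print(len(batch), sum(s.startswith("old-") for s in batch))  # 10 2
```

With 8 new samples and a replay fraction of 0.25, the mixed set holds 10 samples, 2 of which come from the older data.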

The LAB technique contains four distinct steps (see diagram):

- Taxonomy-based skills and knowledge representation

- Synthetic data generation (SDG) with a teacher model

- Synthetic data validation with a critic model

- Skills and knowledge training on top of the student model(s)
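The four steps above can be sketched as a small Python pipeline. This is a toy illustration under stated assumptions, not InstructLab's implementation: the teacher, critic and training functions are simple stand-ins for real models, and every name here (`Sample`, `TAXONOMY`, `teacher_generate`, `critic_accepts`, `train_student`) as well as the taxonomy path is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    taxonomy_path: str
    question: str
    answer: str


# Step 1: a taxonomy maps a path to a handful of human-written seed examples.
# The path and the seed example are invented for illustration.
TAXONOMY = {
    "knowledge/products/rhel_ai": [
        ("What is RHEL AI?", "A foundation model platform from Red Hat."),
    ],
}


def teacher_generate(path, seeds, per_seed=3):
    """Step 2: stand-in for a teacher model that expands seeds into variants."""
    out = []
    for question, answer in seeds:
        for i in range(per_seed):
            # The last variant is deliberately left without an answer
            # so that the critic has something to reject.
            variant_answer = answer if i < per_seed - 1 else ""
            out.append(Sample(path, f"{question} (variant {i})", variant_answer))
    return out


def critic_accepts(sample):
    """Step 3: stand-in for a critic model; it only rejects empty answers."""
    return bool(sample.answer.strip())


def train_student(samples):
    """Step 4: stand-in for training; it only counts samples per taxonomy path."""
    counts = {}
    for s in samples:
        counts[s.taxonomy_path] = counts.get(s.taxonomy_path, 0) + 1
    return counts


generated = [s for path, seeds in TAXONOMY.items() for s in teacher_generate(path, seeds)]
validated = [s for s in generated if critic_accepts(s)]
report = train_student(validated)
print(len(generated), len(validated), report)
```

The point of the sketch is the data flow: seed examples are organized by taxonomy path, expanded by a teacher, filtered by a critic, and only the surviving samples reach training.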

InstructLab is the name of the software that implements the LAB technique. It consists of a command-line interface that interacts with a local git repository of skills and knowledge, including new ones that the user has added, to generate synthetic data, train the LLM, serve the trained model and chat with it.

For the developer preview, InstructLab uses a) a git workflow for adding skills and knowledge, b) Mixtral as the teacher model for generating synthetic data, c) DeepSpeed for phased training and d) vLLM as the inference server. In addition, the tooling offers gates that allow for human review and feedback. We are working to add more tooling to make the process easier and more scalable in the future. As with any open source project, you can help steer its direction!

InstructLab is also the name of the open source community project started by Red Hat in collaboration with IBM Research. The community project brings together contributions into a public, Apache 2.0-licensed taxonomy that is maintained by community members. A LAB-trained model that incorporates the community's taxonomy contributions is released to the community periodically.

3. Optimized bootable Red Hat Enterprise Linux for Granite models and InstructLab

The aforementioned Granite models and InstructLab tooling are downloaded and deployed on a bootable RHEL image with an optimized software stack for popular hardware accelerators from vendors like AMD, Intel and NVIDIA. Furthermore, these RHEL AI images will boot and run across the Red Hat Certified Ecosystem including public clouds (IBM Cloud validated at developer preview) and AI-optimized servers from Dell, Cisco, HPE, Lenovo and SuperMicro. Our initial testing indicates that 320GB of VRAM (4 x NVIDIA H100 GPUs) or equivalent is needed to complete an end-to-end InstructLab run in a reasonable amount of time.
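As a rough sizing aid, the arithmetic behind "or equivalent" can be written down in a few lines of Python. This sketch assumes 80 GB per H100, which is inferred from the 320GB / 4 GPU figure above. The helper name `gpus_needed` and the other memory sizes are hypothetical. It counts raw memory only and says nothing about compute throughput or interconnect speed, which also affect how long a run takes.

```python
import math

REQUIRED_VRAM_GB = 320  # figure quoted above from initial testing


def gpus_needed(vram_per_gpu_gb, required_gb=REQUIRED_VRAM_GB):
    """Smallest number of identical GPUs whose combined VRAM meets the target."""
    if vram_per_gpu_gb <= 0:
        raise ValueError("vram_per_gpu_gb must be positive")
    return math.ceil(required_gb / vram_per_gpu_gb)


# 80 GB per GPU is inferred from "320GB VRAM (4 x NVIDIA H100 GPUs)".
print(gpus_needed(80))  # 4
# Hypothetical accelerators with other memory sizes, for comparison only.
print(gpus_needed(48))  # 7
print(gpus_needed(24))  # 14
```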

4. Enterprise support, lifecycle & indemnification

At general availability (GA), Red Hat Enterprise Linux AI Subscriptions will include enterprise support, a complete product life cycle starting with the Granite 7B model and software, and IP indemnification by Red Hat.

Please note that for developer preview, Red Hat Enterprise Linux AI is community-supported with no Red Hat support and no indemnification.

From experimentation to production

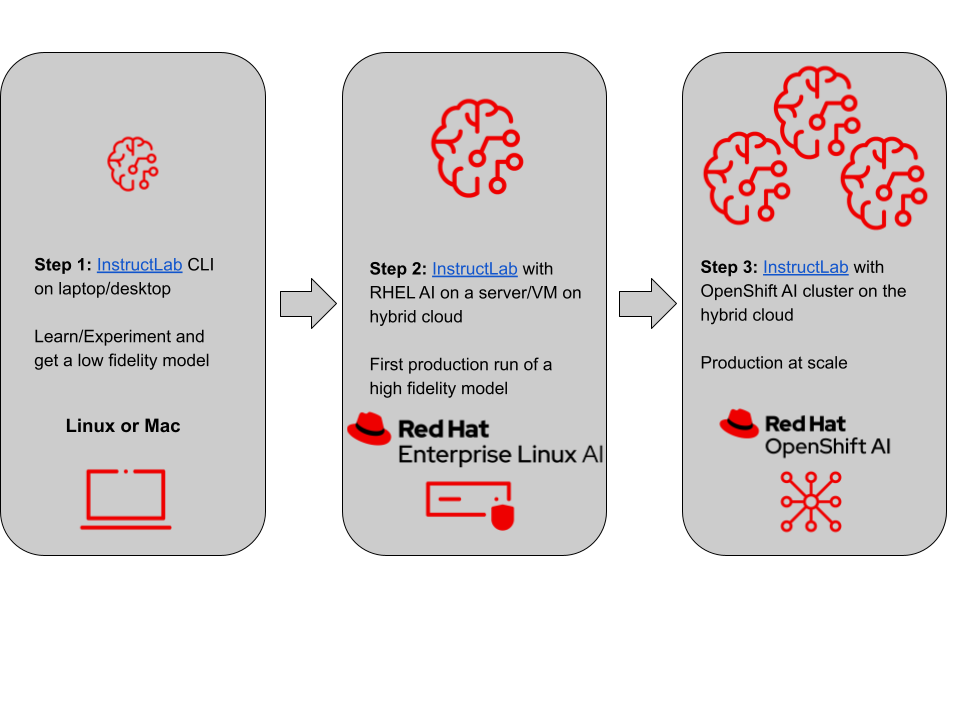

Here is a three-step phased approach that takes you from getting started, to first production deployments, to production at scale:

- Step 1: You can get started with your laptop (or desktop) using the open source InstructLab CLI. This will familiarize you with InstructLab and produce a low-fidelity model (QLoRA) with your skills and knowledge added.

- Step 2: You can boot Red Hat Enterprise Linux AI on a bare metal server or a virtual machine, on-premise or in the cloud. You can now add a corpus of skills and knowledge and train to get a high-fidelity trained and tuned model using InstructLab, which you can then chat with and integrate with your applications.

- Step 3: For production at scale, you can use the same methodology as above (with RHEL AI), but with the added benefit of being able to spread the training across multiple nodes for faster completion and for more throughput with OpenShift AI. Furthermore, OpenShift AI makes it much easier to integrate your trained model with your cloud native applications in production.

Conclusion

Red Hat, together with IBM, the open source community and our partners, has embarked on an incredible journey that will bring the power of open source innovation and collaboration to Large Language Models, and open enterprise software to companies and organizations. We believe this is only the beginning and there are lots of opportunities ahead.

We invite you to be part of that journey. Please join our open source community and start contributing!

You can get started training open source Granite models by downloading the InstructLab CLI on your laptop/desktop, or jump right to the RHEL AI developer preview. If you need help with the developer preview, please contact help-rhelai-devpreview@redhat.com.

References

- Press release

- Main Landing Page for Red Hat Enterprise Linux AI

- Watch a video on how InstructLab lowers barriers to AI adoption

- Learn more about the open source-licensed Granite models

- InstructLab Community Page

- RHEL AI developer preview

- LAB: Large-Scale Alignment for ChatBots

- Granite code model family

- IBM Research blog on Synthetic training for LLMs

- How to run RHEL AI on IBM Cloud

About the authors

A 20+ year tech industry veteran, Jeremy is a Distinguished Engineer within the Red Hat OpenShift AI product group, building Red Hat's AI/ML and open source strategy. His role involves working with engineering and product leaders across the company to devise a strategy that will deliver a sustainable open source, enterprise software business around artificial intelligence and machine learning.
