Kubernetes is the industry standard for orchestrating container workloads. Some of its success can be attributed to providing an easy way to declare workloads in YAML while hiding the complexity of a distributed system from the user. Kubernetes YAML exposes all kinds of control knobs for running containers and pods, such as the container image, the command, ports, volumes, secrets, and more. Think of it as command-line options baked into YAML.

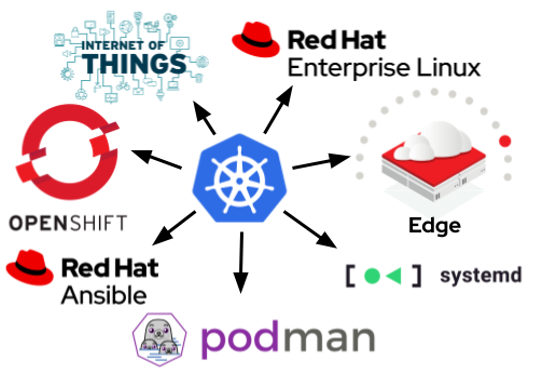

Podman has supported Kubernetes YAML since its early days via the podman-play-kube command. This command is extremely helpful for developers because it allows workloads to be developed on the local machine before being deployed to a cluster. It is also a widely used feature among administrators who are already familiar with the Kubernetes YAML specification and want to run workloads on single nodes instead of a cluster. We see huge interest in using Kubernetes YAML in other contexts beyond a traditional cluster, such as single-node server systems, Internet of Things (IoT) devices, and especially edge computing.

Having a single specification to declare containerized workloads is a big leap forward. Two of the most important advantages are portability and ease of adoption. First, a workload can achieve a high degree of portability in the form of Kubernetes YAML that organizations can deploy in various environments across the IT landscape. Second, it's helpful when developers, users, and administrators need to be familiar with only one specification—organizations can train their staff accordingly. While alternatives such as Compose are still widely used, I believe that Kubernetes YAML is the future for declaring container workloads.

Why run Kubernetes YAML in containerized systemd services?

One significant benefit of Podman is its integration with systemd. Systemd manages many tools and their dependencies, and containerized workloads should not be an exception. As we wrote in a previous article, Podman eliminates the complexities of running a container in systemd by allowing unit generation with the podman-generate-systemd command. Quadlet, which will soon be integrated with the Podman project, helps simplify it further.

The Podman feature set has grown over time. It can now run both containers and pods as systemd units. Podman's integration with systemd has also made auto updates and rollbacks possible, something IoT and edge computing commonly rely on. Since Podman version 4.2, Kubernetes YAML can run using Podman and systemd.

Running Kubernetes YAML in systemd has been a popular request by the community. The request resonated with our team's vision of having a single specification for containerized workloads that run in all kinds of environments, including systemd.

Run Kubernetes workloads in systemd with Podman

The workflow for running Kubernetes workloads in systemd with Podman is simple. All you need to do is start the new podman-kube@.service systemd template with a Kubernetes YAML file. Podman and systemd take care of the rest. Here's a closer look at how this works.

First, create a Kubernetes YAML file. The workload below powers a guestbook using two containers for the database and the PHP frontend:

    $ cat ~/guestbook.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: guestbook
    spec:
      containers:
      - name: backend
        image: "docker.io/redis:6"
        ports:
        - containerPort: 6379
      - name: frontend
        image: gcr.io/google_samples/gb-frontend:v6
        securityContext:
          privileged: true
        ports:
        - containerPort: 80
          hostPort: 8080
        env:
        - name: GET_HOSTS_FROM
          value: "env"
        - name: REDIS_SLAVE_SERVICE_HOST
          value: "guestbook-backend"
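Before handing the file to systemd, it can be worth a one-off run with Podman directly. The following is a minimal sketch, not part of the original workflow: the function name try_kube is made up for this illustration, and it assumes the YAML file is stored at ~/guestbook.yaml as shown above. It starts the workload with podman play kube, lists the resulting pods, and removes everything again with the --down option.

```shell
# Hypothetical helper (name chosen for this sketch): run a Kubernetes YAML
# file once with Podman, show the resulting pods, then tear it down again.
try_kube() {
    # Refuse to continue if the file is missing or unreadable.
    if [ ! -r "$1" ]; then
        echo "cannot read $1" >&2
        return 1
    fi
    # Create and start the pods and containers declared in the file.
    podman play kube "$1" || return 1
    # Show what was created.
    podman pod ps
    # Stop and remove the pods that were created from the file.
    podman play kube --down "$1"
}

# Usage: try_kube ~/guestbook.yaml
```

Tearing the workload down at the end leaves the pod name free for the systemd-managed run that follows.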

Next, put the Kubernetes YAML file into the systemd template. A systemd template allows for managing multiple units from a single service file. The idea is to pass the path of the Kubernetes YAML file to the template and let systemd and Podman take care of spinning up the systemd unit, pods, and containers. Note that you must escape the path using systemd-escape:

    $ escaped=$(systemd-escape ~/guestbook.yaml)
    $ systemctl --user start podman-kube@$escaped.service
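To see what systemd receives as the instance name, it can help to print the full unit name. This is a small sketch under stated assumptions: kube_unit_name is a hypothetical helper, and /home/alice is an assumed home directory. systemd-escape turns each / into - and encodes other special characters, and systemd reverses that escaping when it expands the instance name inside the template.

```shell
# Hypothetical helper (name chosen for this sketch): build the podman-kube@
# unit name that corresponds to a Kubernetes YAML file.
kube_unit_name() {
    # systemd-escape converts the path into a valid unit instance name.
    printf 'podman-kube@%s.service\n' "$(systemd-escape "$1")"
}

# Example with an assumed path:
#   kube_unit_name /home/alice/guestbook.yaml
# prints podman-kube@-home-alice-guestbook.yaml.service
```

Running systemctl --user cat podman-kube@.service should show how the installed template passes the instance name on to Podman.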

Make sure the service is active and that the pod is running:

    $ systemctl --user is-active podman-kube@$escaped.service
    active
    $ podman pod ps
    POD ID        NAME        STATUS      CREATED         INFRA ID      # OF CONTAINERS
    cd12d516cb6f  guestbook   Running     43 seconds ago  6c4d8a918ced  3

The service is active, and the guestbook pod is running. There are three containers running inside the guestbook pod:

- guestbook-backend is the database container running Redis.
- guestbook-frontend is the container running the Apache guestbook.
- 147333416a5c-infra is the so-called infra container keeping certain pod resources, such as namespaces and ports, open.
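If you only want the containers that belong to one pod, podman ps can filter by pod. A minimal sketch, with pod_container_names as a made-up helper name for this illustration:

```shell
# Hypothetical helper (name chosen for this sketch): print the names of the
# running containers that belong to the given pod, one per line.
pod_container_names() {
    podman ps --filter "pod=$1" --format '{{.Names}}'
}

# Usage: pod_container_names guestbook
```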

But as you can see below, there is a fourth container running. 5eabcc753cb5-service is a service container. Service containers, introduced with Podman v4.2, start before any pod or container during play kube and stop after them. They span the entire lifecycle of a Kubernetes workload and let systemd track and manage the service accordingly.

    $ podman ps
    CONTAINER ID  IMAGE                                        COMMAND               CREATED        STATUS            PORTS                 NAMES
    4306a0e4af64  localhost/podman-pause:4.2.0-dev-1658325496                        5 seconds ago  Up 3 seconds ago                        5eabcc753cb5-service
    1c8cd5e235ad  localhost/podman-pause:4.2.0-dev-1658325496                        5 seconds ago  Up 3 seconds ago  0.0.0.0:8080->80/tcp  147333416a5c-infra
    81d6414dce1a  docker.io/library/redis:6                    redis-server          3 seconds ago  Up 2 seconds ago  0.0.0.0:8080->80/tcp  guestbook-backend
    af0a26964fa9  gcr.io/google_samples/gb-frontend:v6         apache2-foregroun...  2 seconds ago  Up 2 seconds ago  0.0.0.0:8080->80/tcp  guestbook-frontend

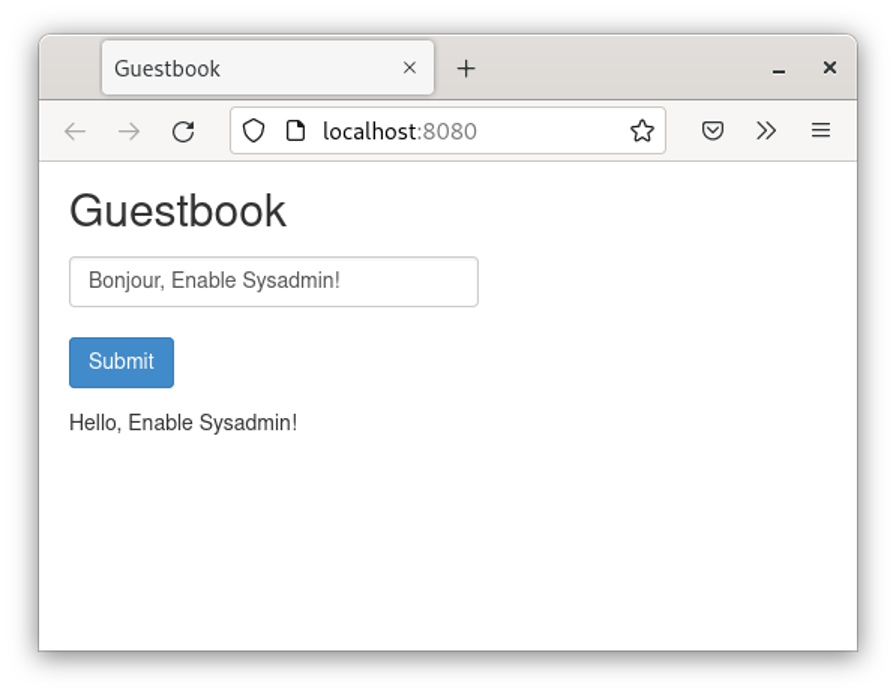

Check whether the guestbook works by visiting http://localhost:8080/ in your browser of choice.
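On a machine without a browser, a command-line check can stand in. This is a sketch only: http_ok is a hypothetical helper name, and it assumes the frontend answers requests for / with HTTP status 200.

```shell
# Hypothetical helper (name chosen for this sketch): succeed only when the
# given URL answers with HTTP status 200.
http_ok() {
    # -s silences progress output, -o discards the body, and -w prints
    # just the status code of the response.
    code=$(curl -s -o /dev/null -w '%{http_code}' "$1") || return 1
    [ "$code" = "200" ]
}

# Usage: http_ok http://localhost:8080/ && echo "guestbook is up"
```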

It works as expected. Once you are done testing, you can stop the service via systemctl:

    $ systemctl --user stop podman-kube@$escaped.service
    $ podman pod ps
    POD ID      NAME        STATUS      CREATED     INFRA ID    # OF CONTAINERS

We consider this workflow superior to the podman-generate-systemd workflow, which requires a user to create a container or pod first, generate a systemd unit for it, and then install that unit. With the podman-kube@.service template, users just need to pass their Kubernetes YAML file to the template; systemd and Podman take care of the rest. You can expect future releases of Podman to improve on this template-based approach along with best practices we want to develop with the community.
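For contrast, the older workflow for a single container might look roughly like the sketch below. The function name generate_unit_workflow and the container name and image in the usage line are assumptions made for this illustration, and the unit is installed into the per-user systemd directory.

```shell
# Sketch of the older podman-generate-systemd workflow for one container.
# The function name is hypothetical and chosen for this illustration.
generate_unit_workflow() {
    name="$1"
    image="$2"
    unit_dir="${XDG_CONFIG_HOME:-$HOME/.config}/systemd/user"

    # Step 1: create the container first.
    podman create --name "$name" "$image" || return 1
    # Step 2: generate a unit file for it in the current directory.
    podman generate systemd --files --name "$name" || return 1
    # Step 3: install the generated unit for the current user.
    mkdir -p "$unit_dir" || return 1
    mv "container-$name.service" "$unit_dir/" || return 1
    # Step 4: make systemd pick up the new unit and start it.
    systemctl --user daemon-reload
    systemctl --user start "container-$name.service"
}

# Usage (assumed name and image):
#   generate_unit_workflow web docker.io/library/redis:6
```

With the template, these steps collapse into the single systemctl --user start call shown earlier.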

The vision: Unifying containerized workloads

Imagine you are designing complex applications with multiple containers running within various pods. Declaring the workload using Kubernetes YAML enables portability to run the workload in all kinds of environments, from developing on a local workstation, to deploying it to single-node environments using Ansible, to running it in systemd.

Furthermore, the applications can also be deployed across different edge tiers, such as MicroShift or single-node OpenShift. However, this implies running extensive services on your devices, which can consume lots of memory and CPU time and may not work in all environments or use cases. Without a unified way of declaring container workloads across these environments (such as Kubernetes YAML), we had to use alternative and more complex approaches, such as Compose. Having multiple ways of running the same workload adds complexity to the architecture and increases the costs of training, tools development, testing, and maintenance. Using Podman for running the Kubernetes YAML is now a viable alternative, and systemd takes care of the lifecycle management.

Finally, the same workload can be deployed to Kubernetes offerings, including OpenShift, entailing all the benefits of a real distributed system, including failovers, replication, and more.

Wrap up

With the addition of Kubernetes YAML, Podman provides a unified solution to declare container workloads across environments and simplify complexity for developers and sysadmins. Feel free to test the feature on Fedora 36 and CentOS Stream 9 where Podman v4.2 is already available.

About the authors

Preethi Thomas is an Engineering Manager for the containers team at Red Hat. She has been a manager for over three years. Prior to becoming a manager, she was a Quality Engineer at Red Hat. She is passionate about open source software, software quality, and open management practices and has rich experience working with upstream communities and projects.

Daniel Walsh has worked in the computer security field for over 30 years. Dan is a Senior Distinguished Engineer at Red Hat. He joined Red Hat in August 2001. Dan has led the Red Hat Container Engineering team since August 2013, but has been working on container technology for several years.

Dan helped develop sVirt (Secure Virtualization) as well as the SELinux Sandbox, an early desktop container tool, back in RHEL 6. Previously, Dan worked on Netect/Bindview's Vulnerability Assessment Products and at Digital Equipment Corporation, working on the Athena Project and AltaVista Firewall/Tunnel (VPN) Products. Dan has a BA in Mathematics from the College of the Holy Cross and an MS in Computer Science from Worcester Polytechnic Institute.
