In recent years, application development has started to focus on a new concept: containers. No, these aren’t shipping containers. Most people working in a tech field have heard the term ‘container’ come up in a technical design meeting or in a discussion about ‘the future of technology.’ It has quickly become a buzzword and an important concept, but what actually is a container? What is all the excitement about? Why should we care?

Check out our YouTube video: Container fundamentals, security and usage in the enterprise.

What is a container?

Containers are units of software that package applications and their dependencies in isolation from their environment. They are independent, executable pieces of code that contain the dependencies required by an application. They share the host kernel with other containers and applications running on the host. This means that instead of each container needing its own operating system (OS), the containers use the operating system of the underlying host, which requires significantly fewer resources. While this is the technical definition of a container, an analogy may help make the concept easier to understand.

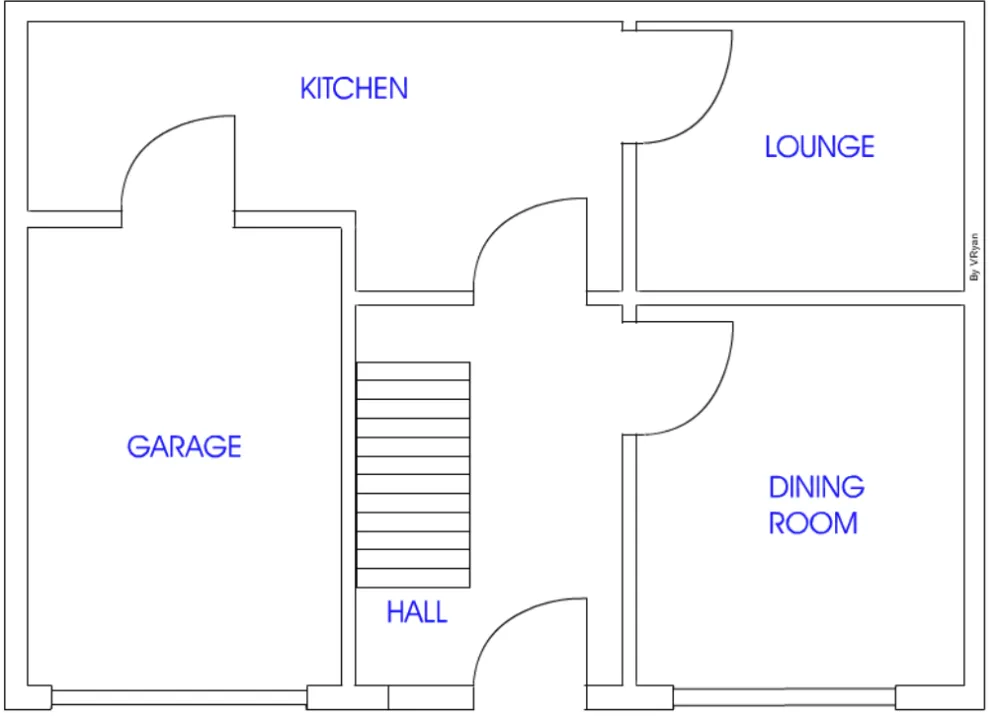

Think of a container as a single room in a house. This room is self-contained with everything it needs within its walls, yet it relies on the electricity and structure provided by the house, similar to how a container relies on the OS kernel of the underlying host. Each room is separate from other rooms, but is still a part of the house. For instance, in the diagram, the hall, lounge, kitchen and dining room are isolated rooms in the house, similar to the modular components of an application.

It sounds like a Virtual Machine…

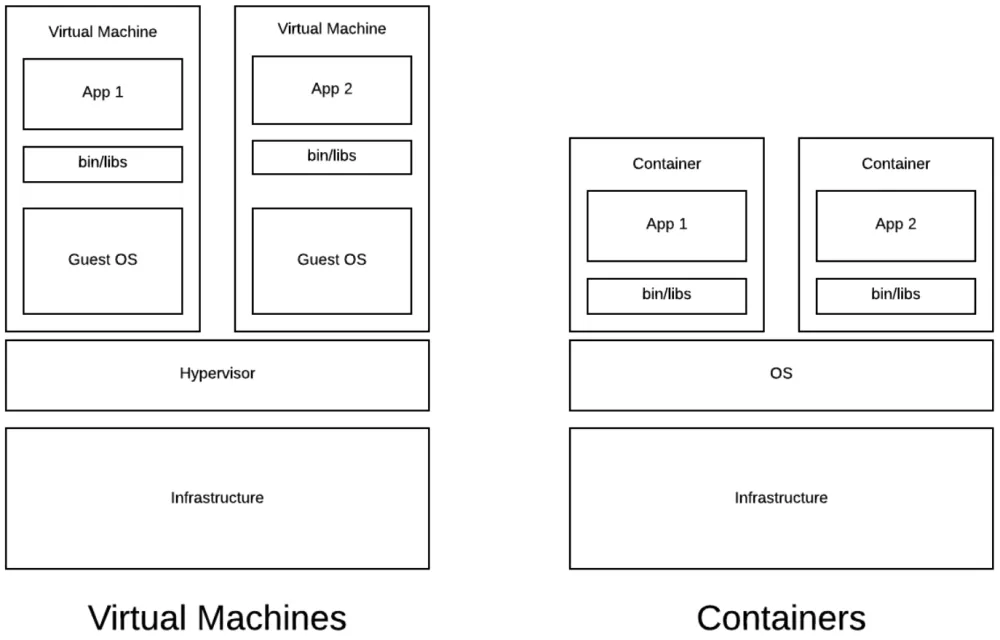

On the surface, a container sounds a lot like a virtual machine (VM). The two do have similarities; however, they differ in a few important ways.

VMs require a hypervisor and their own operating system, while containers share the operating system kernel of the underlying host. This key difference makes VMs more resource-heavy and slower to start. To start up a VM, you must first bring up the hypervisor, wait for the operating system for the specific VM to come up, and then start your application on the VM. But for a container, you just launch the container, and your application is up and running. Building off the house analogy, adding new functionality to a VM would be equivalent to building an entirely new house, while the container equivalent would just be adding a new room.

But surely containers aren't secure…

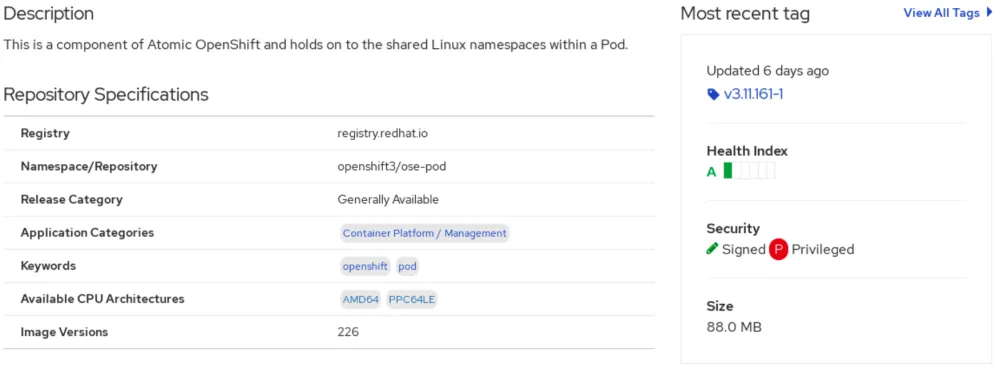

Containers can be incredibly secure if configured correctly. The security of the host operating system can impact the security of the containers running on it, so the operating system should be frequently patched and properly hardened. With the underlying operating system properly secured, a container’s security mainly depends on the image it uses. In the house analogy, an image would be the blueprint of a room, describing exactly what the room is. Image scanners such as OpenSCAP, an open source implementation of the Security Content Automation Protocol (SCAP), can be used to check container images. These scanners look for known vulnerabilities and for configuration settings that could be security risks. Some container image registries, such as Red Hat Quay, provide an at-a-glance view of security health, showing a red flag for an unhealthy image and a green flag for a good one.

There are three key differences between containers and VMs that give containers a security advantage.

First, containers are immutable: a running container is not affected by any changes to its image. A modified image produces a new container, leaving the original container unchanged and secure. Because of this, results produced at any given time can be reproduced using the same image.

Second, containers often have shorter life spans than VMs. A VM may run for months or years at a time, while a container likely runs for only hours or days. This shorter life span allows for easier security patching and updating, and it shortens the window in which a known vulnerability, tracked as a Common Vulnerabilities and Exposures (CVE) entry, can be attacked.

Third, containers make applications much more transparent than applications running on a VM. Because all software in a container must be explicitly defined in the container image, a list of everything in the container exists. This list can be obtained only by knowing the exact image the container uses and then inspecting that image, and an outside attacker most likely would not have this knowledge. In contrast, a VM has no defined list of software and can resemble a black box, making it difficult to know what is actually in it. While this lack of transparency also keeps an attacker from knowing what is running in a VM, it makes it harder for an administrator to consistently patch every package in the VM, possibly leaving vulnerabilities unpatched for a longer period of time. Knowing what is in a container makes it easier to tell when specific software needs to be patched due to known vulnerabilities.
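To make the transparency point concrete, here is a minimal Python sketch of checking an image's declared software against advisory data. Everything in it is an assumption for illustration: the `Package` type, the package names and versions, and the `KNOWN_VULNERABLE` table are made up, and real scanners work from published vulnerability feeds rather than a hand-written dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Package:
    name: str
    version: str


# Hypothetical manifest: the software an image explicitly declares.
# Names and versions are invented for this example.
IMAGE_MANIFEST = [
    Package("openssl-example", "1.0.1"),
    Package("webserver-example", "2.4.0"),
    Package("logging-example", "3.2.1"),
]

# Hypothetical advisory data: package name -> versions known to be vulnerable.
KNOWN_VULNERABLE = {
    "openssl-example": {"1.0.0", "1.0.1"},
    "logging-example": {"3.0.0"},
}


def packages_needing_patch(manifest, advisories):
    """Return the packages whose exact version appears in the advisory data."""
    return [
        pkg for pkg in manifest
        if pkg.version in advisories.get(pkg.name, set())
    ]


if __name__ == "__main__":
    for pkg in packages_needing_patch(IMAGE_MANIFEST, KNOWN_VULNERABLE):
        print(f"patch needed: {pkg.name} {pkg.version}")
```

Because the manifest is an explicit list, the check is a simple lookup. With a VM that has no defined software list, the administrator would first have to discover what is installed before any comparison like this could happen.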

Since containers offer process isolation, they are very reliable. If one container is somehow compromised, the other containers continue to function as normal. The other containers and the underlying host system are likely to remain unbreached, as long as those components don’t have additional security flaws. For additional protection, Security-Enhanced Linux (SELinux) should be enabled wherever possible. SELinux is a security enhancement to Linux that gives administrators finer-grained control over what users and processes can access.

Why use containers?

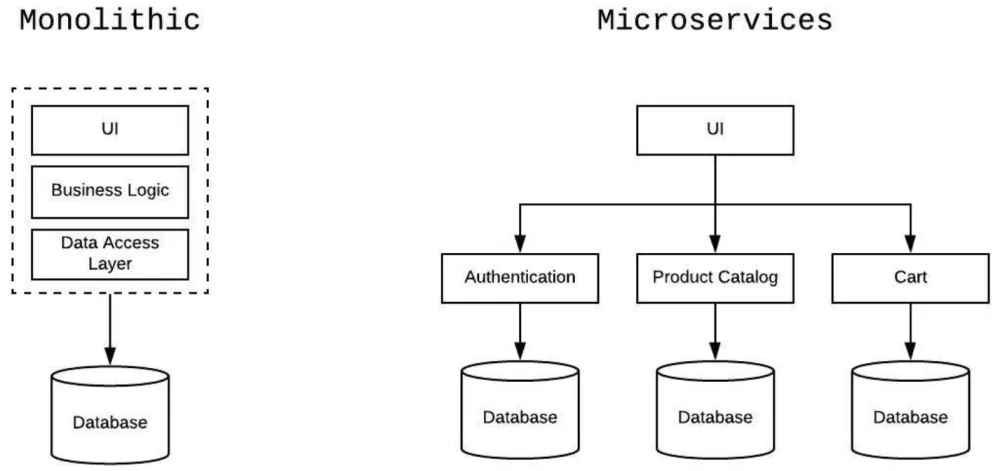

Containers can help break up a large monolithic application into a set of smaller, easier-to-manage applications. By using common application architecture design patterns, containerized applications can benefit from increased efficiency, reduced overhead, and reusability. This concept is known as microservices: an architecture that consists of many small applications that each perform one task with high efficiency. These smaller applications are easier to test, maintain, and reuse. Containers naturally lend themselves to a microservices architecture since each service is self-contained, light on resources, and extremely portable.

One simple example that all of us can relate to is an online eCommerce site. As you can see in this diagram, this application can be broken down into three microservices: the customer authentication service, a product catalog, and a shopping cart. Each of these microservices independently executes its tasks and interacts with the other components when needed. If we were to use containers for the eCommerce site, each microservice would run in its own container and have rules about which of the other microservices it can access. Breaking the application up this way allows each microservice to be updated and patched individually, rather than having to update the entire application.
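The idea of per-service access rules can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the service names mirror the three microservices above, but the specific rules in `ALLOWED_CALLS` and the `may_call` helper are hypothetical, and a real platform would enforce such rules at the network level rather than in application code.

```python
# Hypothetical allow-list: each service maps to the set of services it may call.
# The rules themselves are invented for this example.
ALLOWED_CALLS = {
    "shopping-cart": {"customer-auth", "product-catalog"},
    "product-catalog": set(),
    "customer-auth": set(),
}


def may_call(caller: str, callee: str) -> bool:
    """Return True only if the caller is explicitly allowed to reach the callee."""
    return callee in ALLOWED_CALLS.get(caller, set())


if __name__ == "__main__":
    print(may_call("shopping-cart", "product-catalog"))
    print(may_call("product-catalog", "customer-auth"))
```

The sketch denies by default: a service that is not listed, or a call that is not explicitly allowed, is rejected. Under these example rules the shopping cart can reach the catalog, while the catalog cannot reach the authentication service.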

Self-contained, portable services also make containers ideal for adopting Agile and DevOps methodologies. Developers can continuously develop, test, and rapidly deploy their containerized applications for integration tests. Since containers work reliably in different environments, they allow for smaller iterations and faster deployments. Containers can also make it easier to update software in a streamlined manner: updating one part of the application or a microservice and restarting its container does not change any other part of the application.

That seems like a lot to manage…

Luckily, there is software available to help manage containerized applications. For example, Kubernetes is an open source system that aids in container orchestration and automated deployments. It eases management and orchestration of containerized applications, even across clusters of many hosts. Kubernetes was originally developed by Google, building on its experience running containerized workloads at scale, and it scales very efficiently.
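One way to picture what an orchestrator does is as a loop that compares the state you asked for with the state that is actually running, then works out the difference. The Python sketch below is a toy model of that idea only. It is not Kubernetes code and uses no Kubernetes API; the `reconcile` function, the service names, and the replica counts are all assumptions made for illustration.

```python
from __future__ import annotations


def reconcile(desired: dict[str, int], running: dict[str, int]) -> list[str]:
    """Compare desired replica counts with what is running and list the actions needed."""
    actions = []
    # Look at every service mentioned in either the desired or the running state.
    for service in sorted(set(desired) | set(running)):
        want = desired.get(service, 0)
        have = running.get(service, 0)
        if have < want:
            actions.append(f"start {want - have} x {service}")
        elif have > want:
            actions.append(f"stop {have - want} x {service}")
    return actions


if __name__ == "__main__":
    # Hypothetical state: names and counts are made up for this example.
    desired = {"shopping-cart": 3, "product-catalog": 2}
    running = {"shopping-cart": 1, "product-catalog": 2, "old-service": 1}
    for action in reconcile(desired, running):
        print(action)
```

In this example the sketch would ask for two more shopping cart containers and stop the leftover service, while leaving the product catalog alone because it already matches. An orchestrator repeats this kind of comparison continuously, which is what makes deployments automated rather than manual.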

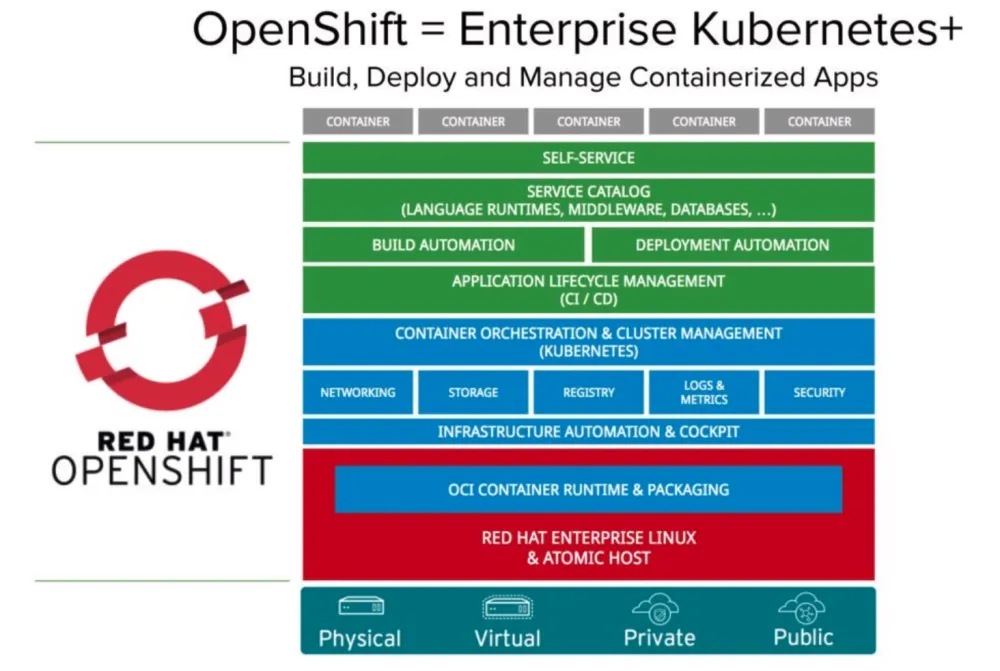

Red Hat OpenShift is Red Hat's Kubernetes distribution, providing an enterprise solution for container management. In a nutshell, OpenShift extends Kubernetes to provide additional security and scalability for container management. Unlike plain Kubernetes, OpenShift comes with an automated installer, greatly simplifying the process of adoption. Its web UI and command line client give developers tools for interacting with and managing their containerized applications, which can help accelerate application development. The web UI supports several authentication mechanisms, adding an extra layer of security.

OpenShift's built-in internal registry is compliant with the Open Container Initiative (OCI) and can take advantage of external registries as well, such as Docker Hub. With a multitude of capabilities, OpenShift brings security, reliability, and ease of use to container management. Learn more about OpenShift at https://www.redhat.com/en/openshift-4.

If you are interested in getting help with this solution, reach out to your existing Red Hat Account Executive or visit Red Hat Consulting to get a conversation started.
