By Jason Dickerson, Adam Miller, and Josh Swanson

As Red Hat pushes further out to the edge, more flexibility is required when building and running automation topologies to facilitate the automation of technology outside the standard hybrid cloud environment.

Recently, we've begun pushing an architecture pattern that involves managing discrete deployments of Ansible Automation Platform that are highly distributed and typically deployed within individual sites. While this approach brings freedom and flexibility to the automation topology, it also introduces complexity, especially when considering how to traverse network boundaries.

This post is a deep dive on Controller of Controllers as an architecture. It explores how you can use Red Hat Interconnect to address complexity and facilitate deployment into edge sites.

Compelling Factors

A few key points drive the requirement to deploy Ansible Automation Platform in a Controller of Controllers configuration:

- Remote sites will not allow inbound connections but will allow outbound connections.

- Automation autonomy should be maintained even if the external connection to the site is lost.

- The blast radius of a failure should be limited to the individual Controller instance at a site, rather than taking down the organization's entire automation capability.

- True multi-tenancy should be supported when deploying across multiple disjointed environments, such as a managed service provider managing numerous customers' environments.

Controller of Controllers meets all of these requirements while remaining a highly automated solution. Really, this becomes a bit of automation inception, with Ansible Automation Platform automating against Ansible Automation Platform.

Controller of Controllers

Controller of Controllers is an architectural approach to automation that uses a parent instance of Ansible Controller to instantiate, configure, and manage child instances of Ansible Controller. This is almost always accomplished via the consumption of Ansible Controller's API, which provides the ability to fully configure Controller and run automation.

The parent controller doesn't do direct automation against endpoints; instead, it configures the child controllers to do that automation. Since this is the Ansible ecosystem, typically, the configuration is defined as Ansible variables, then fed into collections such as ansible.controller and infra.controller_configuration.
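As a rough illustration of that flow, the playbook below is a minimal sketch of what the parent controller could run against each child. It assumes the `dispatch` role from infra.controller_configuration and that collection's `controller_hostname`, `controller_username`, `controller_password`, and `controller_validate_certs` connection variables. The `configs/` path and the `child_controller_password` variable are hypothetical names used only for this example.

```yaml
---
# Hypothetical playbook launched from the parent controller.
# Each inventory host represents one child controller API endpoint.
- name: Configure child controllers from declarative variables
  hosts: all
  gather_facts: false
  connection: local   # API calls originate from the execution environment
  vars:
    # Assumed connection details for the child controller's API.
    controller_hostname: "{{ inventory_hostname }}"
    controller_username: admin
    controller_password: "{{ child_controller_password }}"   # hypothetical variable
    controller_validate_certs: false   # assumption: lab certificates are untrusted
  tasks:
    - name: Load the controller_* variable lists for this child
      ansible.builtin.include_vars:
        file: "configs/{{ inventory_hostname }}.yml"   # hypothetical path

    - name: Apply every controller_* list that is defined
      ansible.builtin.include_role:
        name: infra.controller_configuration.dispatch
```

Keeping the desired state in variables means the same playbook can serve every child; only the variable file differs per site.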

Once the child controllers are configured, they begin the automation against the endpoints. This reflects the "traditional" automation model used in hybrid cloud deployments: Ansible Controller automating directly against endpoints.

When these two elements come together, they offer a highly scalable solution that can perform automation at a massive scale while retaining local autonomy for individual deployments of Controller and keeping the configuration and management of that automation fabric highly automated.

Red Hat Interconnect

While Controller of Controllers is very powerful as an architecture for highly distributed automation, one of the main stumbling blocks at implementation is having to span disparate network topologies. Typically, this includes low bandwidth, unreliable links, multiple layers of stateful firewalls that do not allow inbound access, constantly updating routing tables, and even traversing the public internet.

This is where Red Hat Interconnect enters the architecture. As a layer 7 interconnect, it can lash together services across these network topologies. It presents these services as true services within a Kubernetes namespace, even if the service runs far away at a remote site on a limited compute device within a highly protected network.

Using Red Hat Interconnect, a Controller of Controllers architecture can be secured and spanned across a full-scale edge deployment.

This demonstration uses a ROSA cluster running the parent controller and a RHEL virtual machine running the child controller. The virtual machine sits in a lab environment behind several firewalls and routers but can reach outbound.

Set Up the Parent Controller

First, I've deployed an instance of Ansible Controller via the Ansible Automation Platform operator on top of OpenShift. For demonstration purposes, I'm using the default aap namespace; however, this is configurable.

Within the parent controller instance, I've configured a few items to manage child controllers. First, I created an inventory of child controllers and added a host:

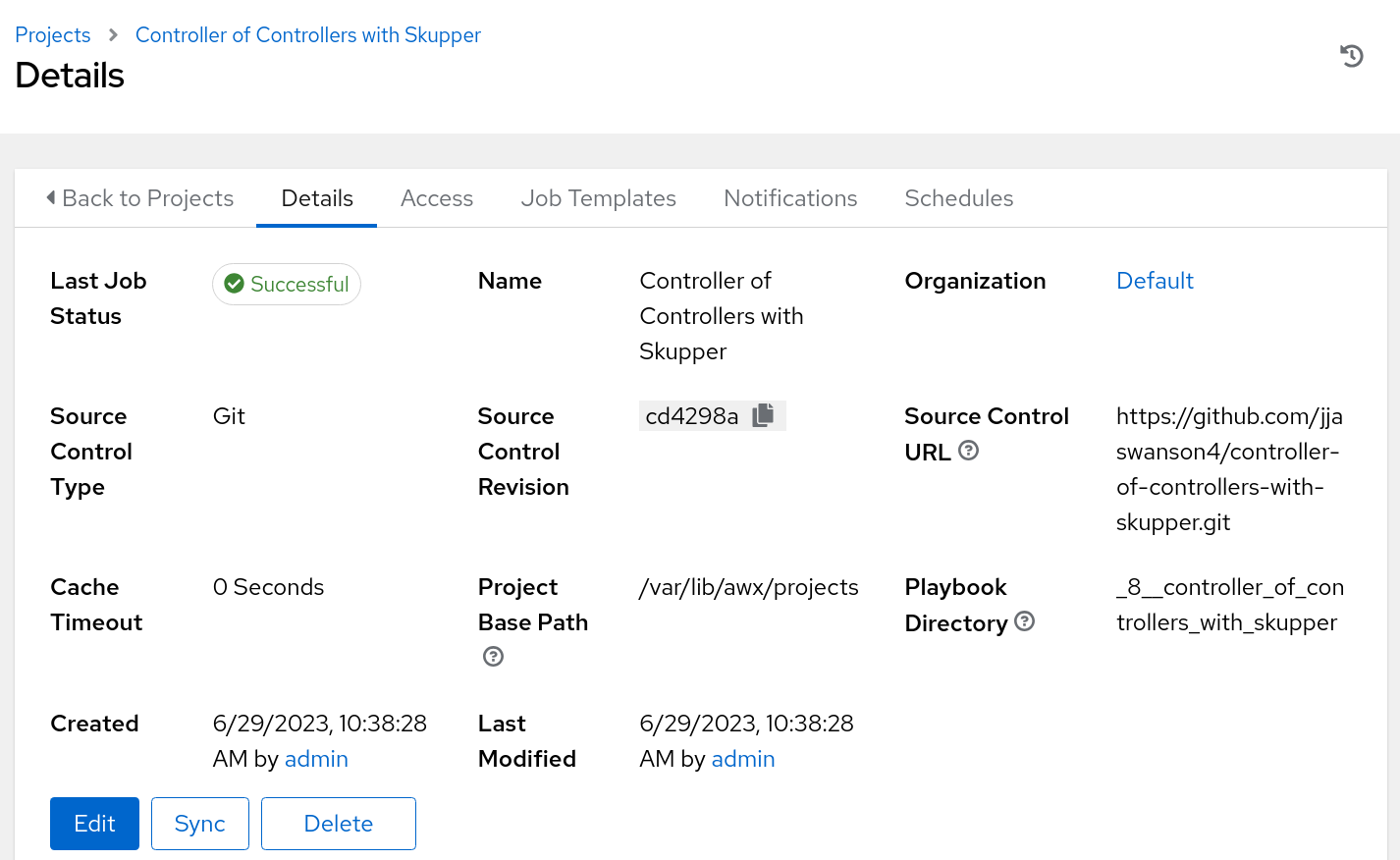

Next, I created a project containing the YAML configuration of the child controllers:

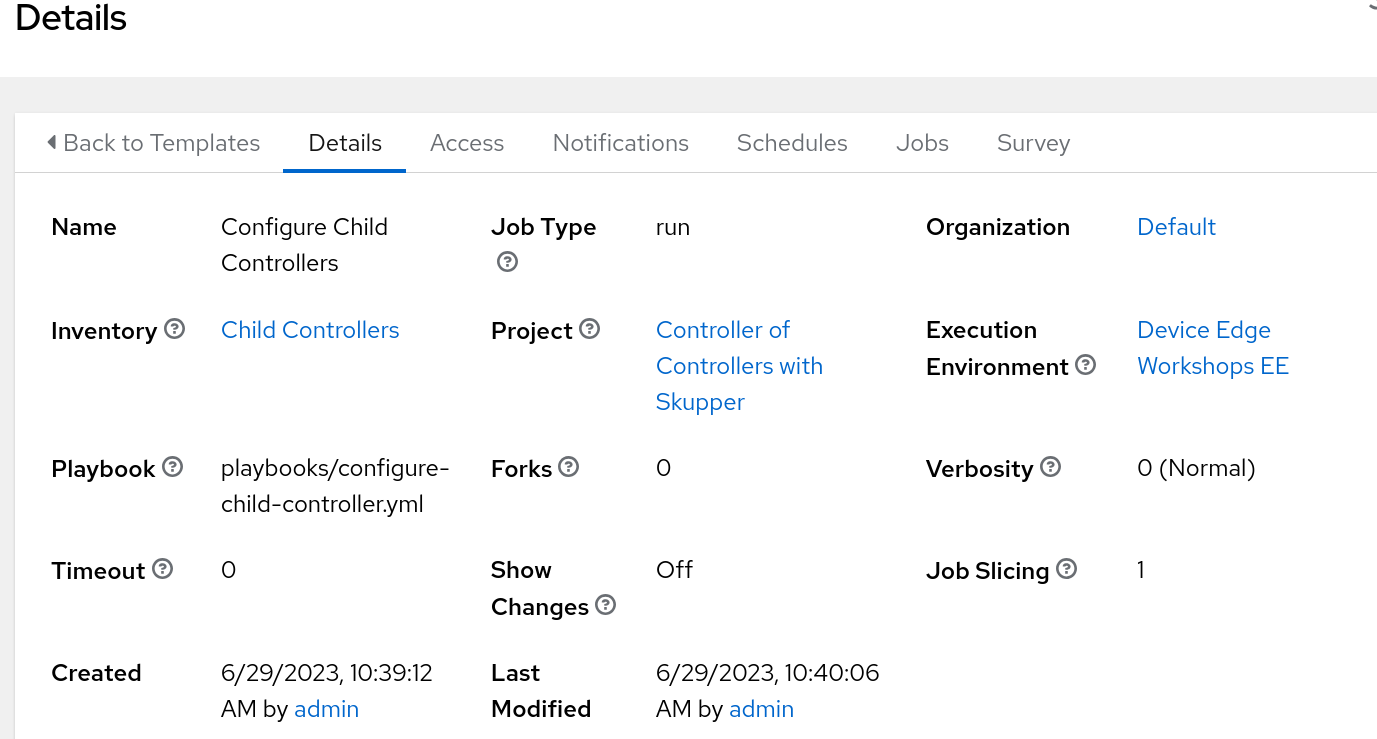

And finally, I created a job template that configures the child controllers:
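For readers who prefer configuration as code, the three parent-side objects above could also be described in the same `controller_*` variable format. This is only a sketch: the object names, the Git URL, and the playbook path are hypothetical placeholders, since the demonstration created these objects through the web interface.

```yaml
---
# Hypothetical parent controller objects; all names are illustrative.
controller_inventories:
  - name: Child Controllers
    organization: Default

controller_hosts:
  - name: site1-controller
    inventory: Child Controllers
    variables:
      # Assumption: the child is addressed by a service name in the namespace.
      controller_hostname: site1-controller
      controller_validate_certs: false

controller_projects:
  - name: Child Controller Configuration
    organization: Default
    scm_type: git
    scm_branch: main
    scm_url: https://git.example.com/automation/child-controller-config.git   # placeholder URL

controller_templates:
  - name: Configure Child Controllers
    organization: Default
    inventory: Child Controllers
    project: Child Controller Configuration
    playbook: playbooks/configure-child-controllers.yml   # hypothetical path
```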

Here is the child controller configuration for reference:

    controller_execution_environments:
      - name: Device Edge Workshops Execution Environment
        image: quay.io/device-edge-workshops/provisioner-execution-environment:latest
        pull: missing

    controller_inventories:
      - name: Edge Devices
        organization: Default
        variables:
          site: site1

    controller_hosts:
      - name: switch1
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.3
      - name: switch2
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.4
      - name: firewall1
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.1
      - name: firewall2
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.2
      - name: dcn1
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.10
      - name: dcn2
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.11
      - name: acp-node1
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.100
      - name: acp-node2
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.101
      - name: acp-node3
        inventory: Edge Devices
        variables:
          ansible_host: 10.15.108.102

    controller_groups:
      - name: switches
        inventory: Edge Devices
        hosts:
          - switch1
          - switch2
      - name: firewalls
        inventory: Edge Devices
        hosts:
          - firewall1
          - firewall2
      - name: dcns
        inventory: Edge Devices
        hosts:
          - dcn1
          - dcn2
      - name: acp
        inventory: Edge Devices
        hosts:
          - acp-node1
          - acp-node2
          - acp-node3

    controller_credentials:
      - name: Device Credentials
        organization: Default
        credential_type: Machine
        inputs:
          username: admin
          # Demo value only; keep real secrets out of source control.
          password: 'R3dh4t123!'

    controller_projects:
      - name: Controller of Controllers with Skupper
        organization: Default
        scm_type: git
        scm_branch: main
        scm_url: https://github.com/jjaswanson4/controller-of-controllers-with-skupper.git

    controller_templates:
      - name: Example Job
        organization: Default
        inventory: Edge Devices
        project: Controller of Controllers with Skupper
        playbook: playbooks/sample-playbook.yml

Establish the Connection between the Parent and Child Controller

At a remote site is an instance of Ansible Controller without any configuration. On that system, I've installed the CLI for Skupper (the upstream of Red Hat Interconnect).

First, authenticate to the OpenShift cluster with the `oc` CLI:

    [jswanson@site1-controller aap-containerized-installer]$ oc login --token=sha256~my-token-here --server=https://api.rosa-nmvzn.goqx.p1.openshiftapps.com:6443
    Logged into "https://api.rosa-nmvzn.goqx.p1.openshiftapps.com:6443" as "cluster-admin" using the token provided.

    You have access to 103 projects, the list has been suppressed. You can list all projects with 'oc projects'

    Using project "default".
    Welcome! See 'oc help' to get started.

Next, switch to the namespace where AAP is deployed (for example, with `oc project aap`) and initialize Red Hat Interconnect:

    [jswanson@site1-controller aap-containerized-installer]$ skupper init
    clusterroles.rbac.authorization.k8s.io "skupper-service-controller" not found
    Skupper is now installed in namespace 'aap'. Use 'skupper status' to get more information.
    [jswanson@site1-controller aap-containerized-installer]$ skupper status
    Skupper is enabled for namespace "aap" in interior mode. Status pending... It has no exposed services.
    [jswanson@site1-controller aap-containerized-installer]$ oc get pods | grep -i skupper
    skupper-router-c56685cc6-bbjs8                2/2   Running   0   28s
    skupper-service-controller-6c59c94fb9-mz79m   1/1   Running   0   26s

Here you can see that Interconnect has been initialized in my namespace, and a few pods are now running.

Now, initialize the gateway on the remote system running Ansible Controller:

    [jswanson@site1-controller aap-containerized-installer]$ skupper gateway init --type podman
    Skupper gateway: 'site1-controller-jswanson'. Use 'skupper gateway status' to get more information.
    [jswanson@site1-controller aap-containerized-installer]$ skupper gateway status
    Gateway Definition:
    ╰─ site1-controller-jswanson type:podman version:2.4.1

Once the gateway is initialized, create a service and expose it within the namespace:

    [jswanson@site1-controller aap-containerized-installer]$ skupper service create site1-controller 443
    [jswanson@site1-controller aap-containerized-installer]$ skupper gateway bind site1-controller localhost 443
    2023/06/28 18:23:21 CREATE io.skupper.router.tcpConnector site1-controller:443 map[address:site1-controller:443 host:localhost name:site1-controller:443 port:443 siteId:2c2edf54-4fcf-49d8-a7c3-9a66600a60c4]

Once complete, the connection between the namespace and the remote system running Controller has been established, and a service has been created within the namespace:

    [jswanson@site1-controller aap-containerized-installer]$ oc get svc | grep site1-controller
    site1-controller   ClusterIP   172.30.63.183   <none>   443/TCP   74s
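When many sites need the same treatment, the gateway steps above could be wrapped in a small playbook that runs locally on the system hosting the child controller. The following is a sketch under several assumptions: the `skupper` and `oc` CLIs are installed, `oc login` has already succeeded, the current project is the namespace where Interconnect was initialized, and the `site_service_name` and `site_service_port` variables are hypothetical names. The commands themselves are the ones shown in the transcript.

```yaml
---
# Hypothetical wrapper around the manual gateway steps; run on the remote site.
- name: Attach a child controller to the Interconnect network
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    site_service_name: site1-controller   # hypothetical variable name
    site_service_port: 443                # hypothetical variable name
  tasks:
    - name: Initialize the podman-based gateway
      ansible.builtin.command:
        cmd: skupper gateway init --type podman
      changed_when: true   # the CLI call is not idempotent, so always report a change

    - name: Create the service inside the namespace
      ansible.builtin.command:
        cmd: "skupper service create {{ site_service_name }} {{ site_service_port }}"
      changed_when: true

    - name: Bind the service to the local controller port
      ansible.builtin.command:
        cmd: "skupper gateway bind {{ site_service_name }} localhost {{ site_service_port }}"
      changed_when: true
```

Because these commands are not idempotent, a rerun against an already configured site would likely fail; a production version would need to check the existing gateway state first.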

To confirm, set up a route that allows access to the remote controller instance via the OpenShift ingress controller:

    kind: Route
    apiVersion: route.openshift.io/v1
    metadata:
      name: site1-controller
      namespace: aap
    spec:
      host: site1-controller.apps.rosa-nmvzn.goqx.p1.openshiftapps.com
      to:
        kind: Service
        name: site1-controller
      port:
        targetPort: 443
      tls:
        termination: passthrough
        insecureEdgeTerminationPolicy: None

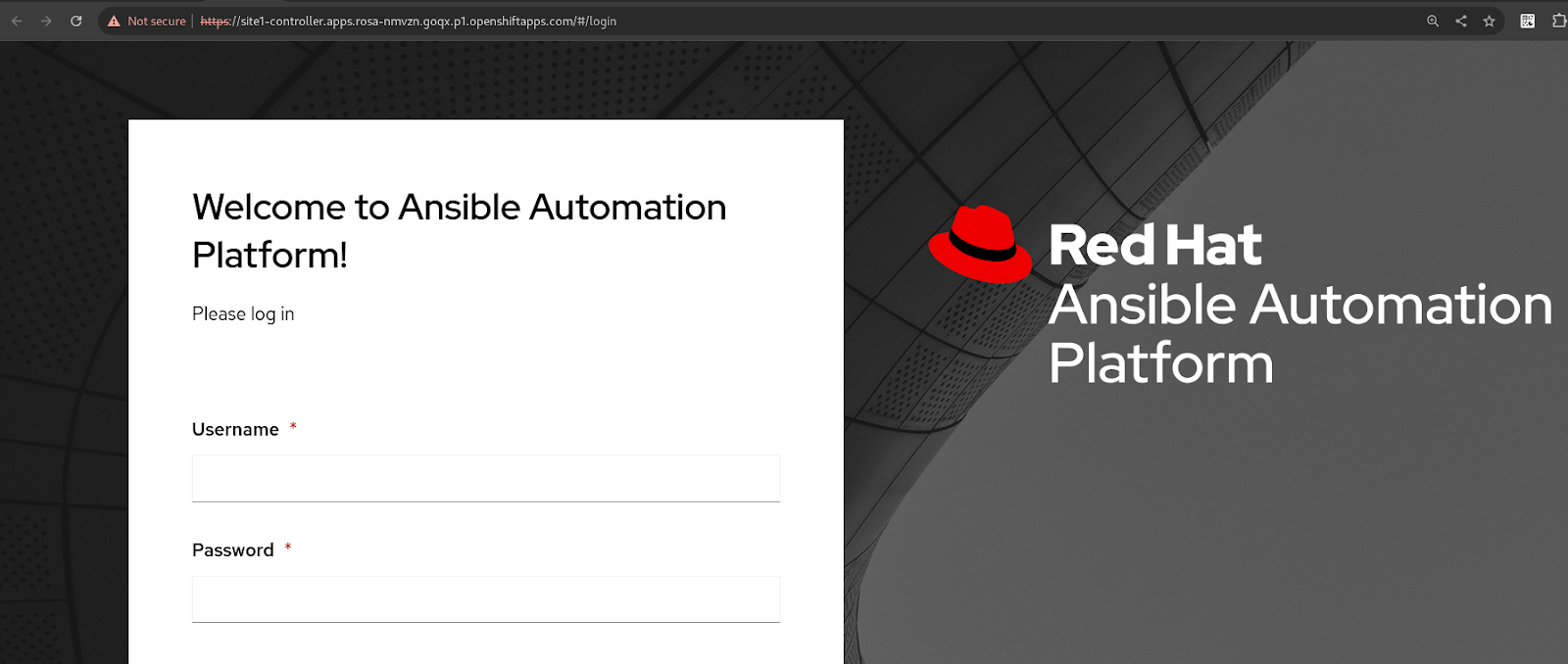

Once you have configured the route, confirm that it works by opening it in a web browser. You should be greeted by the Controller web interface:

Use the Parent Controller to Configure the Child Controller

With connectivity configured and functioning, the API of the child controller is now accessible to the parent controller, and you can begin automating against the child controller.
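Before launching real automation, a quick reachability check can be useful. The sketch below assumes the child controller serves the standard unauthenticated `/api/v2/ping/` endpoint, that the play runs somewhere the `site1-controller` service name resolves (for example, inside the namespace), and that the child's certificate is not trusted by the caller. The `child_api_host` variable is a hypothetical name.

```yaml
---
# Hypothetical reachability check against the child controller's API.
- name: Check that the child controller API answers through Interconnect
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    child_api_host: site1-controller   # assumption: in-namespace service DNS name
  tasks:
    - name: Query the ping endpoint, retrying while the link settles
      ansible.builtin.uri:
        url: "https://{{ child_api_host }}/api/v2/ping/"
        validate_certs: false   # assumption: untrusted lab certificate
        status_code: 200
      register: ping_result
      retries: 5
      delay: 10
      until: ping_result is succeeded

    - name: Show the version reported by the child controller
      ansible.builtin.debug:
        msg: "Child controller version: {{ ping_result.json.version }}"
```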

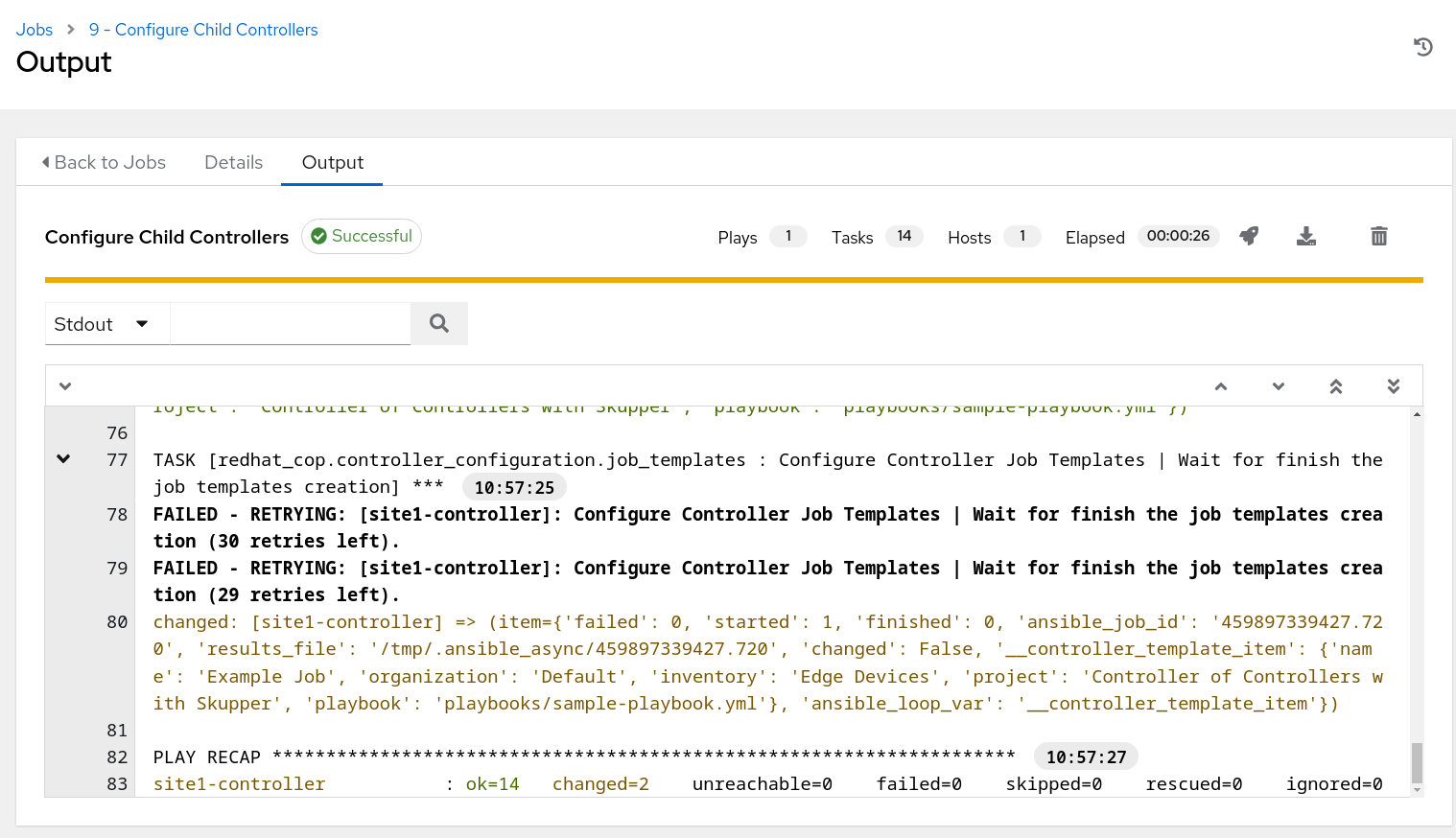

Launch the job template to configure the child controller. Red Hat Interconnect will transparently handle the traffic between the execution environment running the automation and the child controller's API.

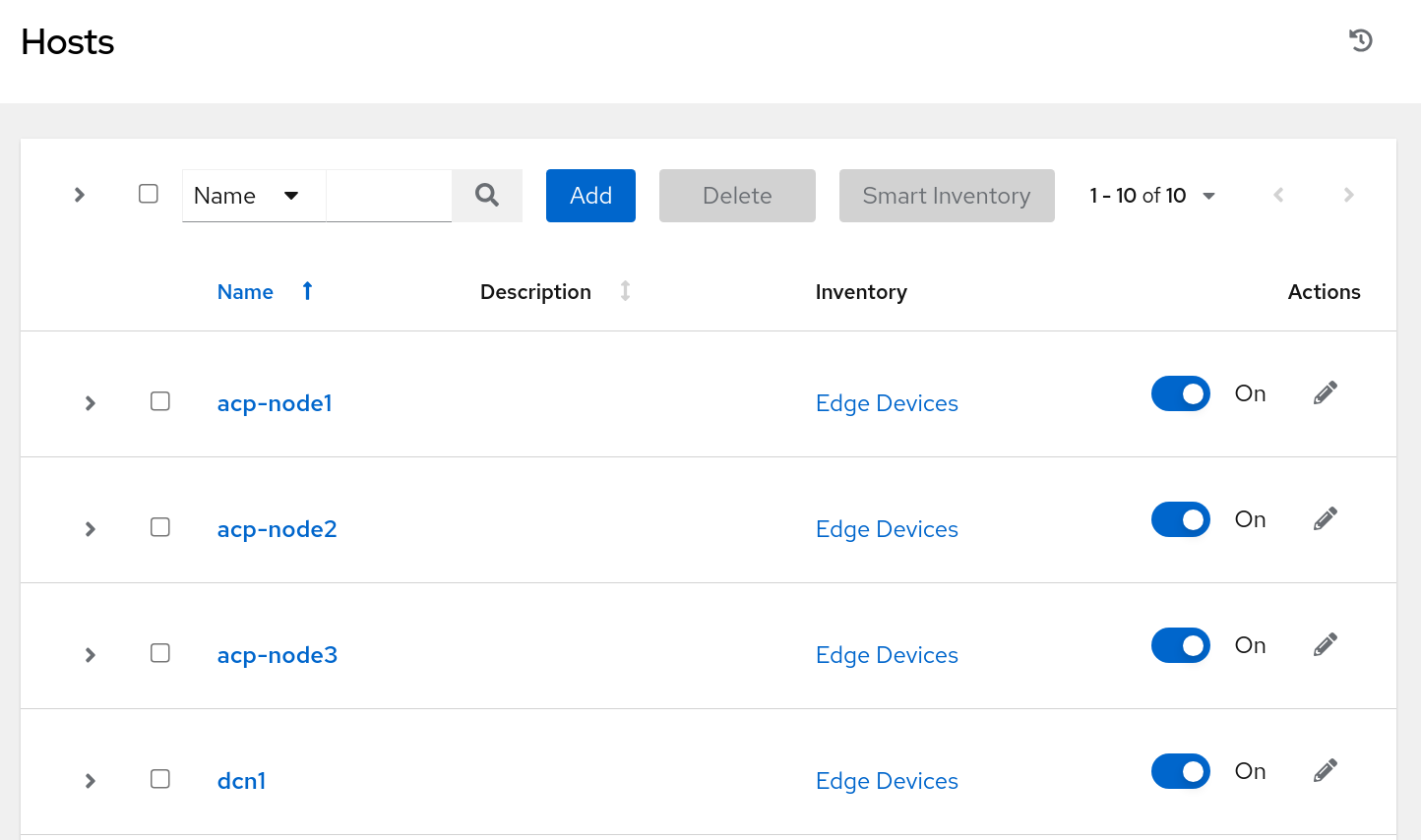

To confirm, visit the child controller's web interface and look for configured items, such as hosts:
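The same confirmation can be scripted against the API rather than checked by eye. This sketch assumes basic authentication is enabled on the child controller, that the password is supplied through a hypothetical `CHILD_CONTROLLER_PASSWORD` environment variable, and that the service name resolves from where the play runs. The expected host names are taken from the child configuration shown earlier.

```yaml
---
# Hypothetical post-configuration check of the child controller's hosts.
- name: Verify that expected hosts exist on the child controller
  hosts: localhost
  connection: local
  gather_facts: false
  vars:
    child_api_host: site1-controller   # assumption: in-namespace service DNS name
    child_api_user: admin
    child_api_password: "{{ lookup('ansible.builtin.env', 'CHILD_CONTROLLER_PASSWORD') }}"
    expected_hosts:
      - switch1
      - switch2
      - firewall1
      - firewall2
  tasks:
    - name: List hosts through the child controller API
      ansible.builtin.uri:
        url: "https://{{ child_api_host }}/api/v2/hosts/?page_size=200"
        url_username: "{{ child_api_user }}"
        url_password: "{{ child_api_password }}"
        force_basic_auth: true
        validate_certs: false   # assumption: untrusted lab certificate
      register: host_list

    - name: Assert that each expected host is present
      ansible.builtin.assert:
        that:
          - item in (host_list.json.results | map(attribute='name') | list)
        fail_msg: "Host {{ item }} is missing from the child controller"
      loop: "{{ expected_hosts }}"
```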

Wrap Up

This demonstration deployed the Controller of Controllers architecture across a highly disparate network topology, using Red Hat Interconnect as a connection overlay to enable connectivity between a centralized parent controller and a child controller.

In the future, this could be expanded to encompass additional services running in the central location, such as source control or image registries for execution environments, or it could be scaled up to include thousands of child controllers.

About the authors

Josh is Red Hat’s industrial edge architect on the global edge architecture team, focused on the industrial edge. He’s worked on the floor of manufacturing plants and built industrial control systems before moving over into enterprise architecture to handle IT/OT convergence. While at Red Hat, he’s worked with large automotive companies, the oil and gas supermajors, and major manufacturing companies on their approach to next generation compute at the industrial edge.
