This is part 1 of a five-part series. The parts of the series are:

- Introduction to OpenShift Pipelines

- Using Source 2 Image Build in Tekton

- Manage a Runtime Image

- Application Deployment and Pipeline Orchestration

- Using the Examples in this Series

Introduction

OpenShift Pipelines is a Continuous Integration / Continuous Delivery (CI/CD) solution based on the open source Tekton project. The key objective of Tekton is to enable development teams to quickly create pipelines of activity from simple, repeatable steps. A characteristic that differentiates Tekton from earlier CI/CD solutions is that each Tekton step executes within a container that is created specifically for that task. This provides a degree of isolation that supports predictable and repeatable task execution, and means that development teams do not have to manage a shared build server instance. Additionally, Tekton components are Kubernetes resources, which delegates the management, scheduling, monitoring and removal of those components to the platform.

This article is the first in a series that shows specific capabilities of Tekton by carrying out the following main activities:

- Build an application from source code using Maven and the OpenShift Source to Image process

- Create a runtime container image

- Push the image to the Quay image repository

- Deploy the image into a project on OpenShift (after first clearing out the old version)

Access to the source content

All assets required to create your own instance of the resources described in these articles can be found in the GitHub repository here. To save space and keep the article readable, only the important aspects of the Tekton resources are included within the text. Readers who want to see the context of a section of YAML should clone or download the Git repository and refer to the full version of the appropriate file.

A comment on names

The open source Tekton project has been brought into the OpenShift platform as OpenShift Pipelines.

The names ‘OpenShift Pipelines’ and ‘Tekton’ are often used interchangeably, and both are used in this article.

Introducing OpenShift Pipelines

OpenShift Pipelines is delivered to an OpenShift platform using the Operator framework, which takes care of installing and managing all of the cluster components for you. This keeps the system simple to install and maintain over time within an OpenShift cluster. As soon as the Tekton Operator is installed, users can start to add Tekton resources to their projects to create a build automation process.

Users can interact with OpenShift Pipelines using the web user interface, the command line interface, or a Visual Studio Code editor plugin. Plugins exist for other editors too, so check your editor's plugins page to see what it offers for Tekton. Command line access is a mixture of the OpenShift ‘oc’ command line utility and the ‘tkn’ command line utility for Tekton-specific commands. Both utilities can be downloaded from the OpenShift web user interface: press the white circle containing a black question mark near your name in the top right corner, then select Command Line Tools as shown in figure 1.

Figure 1 - OpenShift web user interface command line tools access

Tekton resources

The fundamental resource of the Tekton process is the task. A task contains at least one step to be executed and performs a useful function. It is possible to create a taskRun object that references a task and enables the user to invoke it directly. This is not covered in this article, since the purpose here is to create richer objects that can be used from within the OpenShift web user interface and called from a webhook as part of a CI/CD process. In the example presented here, tasks are grouped into an ordered execution using a pipeline resource.

Task execution hierarchy

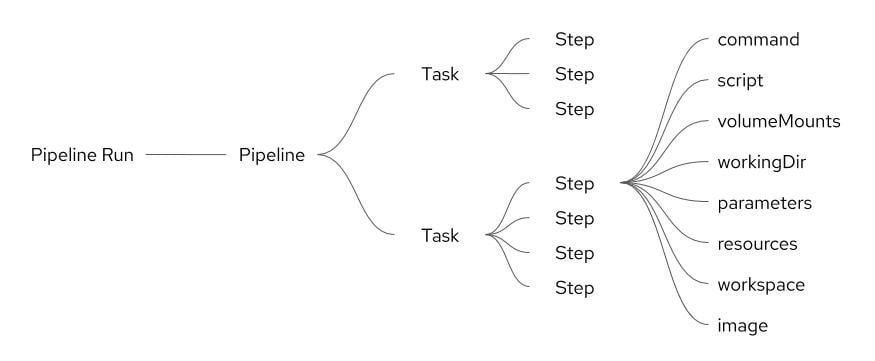

Tasks execute steps in the order in which they are written, with each step completing before the next step starts.

Pipelines execute tasks in parallel unless a task is directed to run after the completion of another task. This facilitates parallel execution of build / test / deploy activities and is a useful characteristic that guides the user in the grouping of steps within tasks.

A pipelineRun resource invokes the execution of a pipeline. This allows specific properties and resources to be used as inputs to the pipeline process, such that the steps within the tasks are configured for the requirements of the user or environment.
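
The ordering rules above can be sketched with a minimal pipeline and pipelineRun. This is an illustration rather than a file from the series repository: the pipeline, task and parameter names (example-pipeline, build-task, unit-test-task, lint-task, deploy-task, APP_NAME) are hypothetical, and the sketch assumes the tekton.dev/v1beta1 API, so adjust the apiVersion to whatever your cluster provides.

```yaml
# Hypothetical pipeline: every name below is a placeholder for illustration.
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: example-pipeline
spec:
  params:
    - name: APP_NAME
      type: string
  tasks:
    # 'build' has no runAfter, so it is eligible to start immediately.
    - name: build
      taskRef:
        name: build-task
      params:
        - name: APP_NAME
          value: $(params.APP_NAME)
    # 'unit-test' and 'lint' both wait for 'build',
    # then run in parallel with each other.
    - name: unit-test
      runAfter:
        - build
      taskRef:
        name: unit-test-task
    - name: lint
      runAfter:
        - build
      taskRef:
        name: lint-task
    # 'deploy' starts only after both 'unit-test' and 'lint' have completed.
    - name: deploy
      runAfter:
        - unit-test
        - lint
      taskRef:
        name: deploy-task
---
# The pipelineRun supplies a concrete value for the pipeline parameter.
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  generateName: example-pipeline-run-
spec:
  pipelineRef:
    name: example-pipeline
  params:
    - name: APP_NAME
      value: liberty-rest-app
```

Because the pipelineRun in this sketch uses generateName rather than a fixed name, it would be submitted with a create operation (for example oc create -f) rather than an apply.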

Tasks

Each step has a number of elements that define how it will execute the required command. Figure 2 shows the elements of the step and the relationship between the above resources.

Figure 2 - Tekton resource relationship

Elements of a step

The elements of the step are described below. Note that the snippets of YAML used to show the use of an element are not complete with respect to a working task, step or the command within the step.

command

The command to be executed. This takes the form of a list containing the command and its arguments, as shown below under the step name:

    - name: generate
      command:
        - s2i
        - build
        - $(params.PATH_CONTEXT)
        - registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift
        - '--image-scripts-url'
        - 'image:///usr/local/s2i'

script

An alternative to command is script, which can be useful if a single step is required to perform a number of command line operations, as shown below:

    - name: parse-yaml
      script: |-
        #!/usr/bin/env python3
        ...
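
To make the contrast with command concrete, here is a minimal sketch of a step that runs several shell commands from a single script. The step name, the image and the file written under /workspace are assumptions chosen for illustration and do not come from the series repository.

```yaml
steps:
  - name: inspect-workspace          # hypothetical step name
    image: registry.access.redhat.com/ubi8/ubi-minimal   # assumed image that provides a shell
    script: |
      #!/bin/sh
      # Stop at the first failing command so the step is reported as failed.
      set -e
      echo "Listing the shared workspace directory"
      ls -l /workspace
      # Leave a marker file that a later step in the same task could read.
      echo "inspected" > /workspace/marker.txt
```

The first line of the script is an interpreter line, so the same mechanism works for a Python or other interpreter as long as the step image contains it.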

volumeMounts

A volumeMount is a mechanism for adding storage to a step. Since each step runs in an isolated container, any data that is created by a step for use by another step must be stored appropriately. If the data is accessed by a subsequent step within the same task, the /workspace directory can hold any created files and directories. A further option for steps within the same task is an emptyDir volume, which can be useful for separating out different data content. If files are to be accessed by a subsequent step in a different task, a Kubernetes persistent volume claim is required.

Volumes are defined in a section of the task outside the scope of any steps, and then each step that needs the volume will mount it. The example below shows the volume definition and the use of a volume within a step.

    steps:
      - name: view-images
        volumeMounts:
          - name: pipeline-cache
            mountPath: /var/lib/containers
          - name: gen-source
            mountPath: /gen-source
    volumes:
      - name: gen-source
        emptyDir: {}
      - name: pipeline-cache
        persistentVolumeClaim:
          claimName: pipeline-task-cache-pvc

In the above example the view-images step uses an emptyDir volume called gen-source, which is mounted under the path /gen-source. Other steps within the task can also mount this volume and reuse any data placed there by this step.

A volume called pipeline-cache, backed by the persistent volume claim pipeline-task-cache-pvc, is mounted into the step at the path /var/lib/containers. Other steps within the task, and within other tasks of the pipeline, can also mount this volume and reuse any data placed there by this step. Note that the path used in this example is where the Buildah command expects to find a local image repository. As a result, any step that invokes a Buildah command mounts this volume at this location.

workingDir

The path within the container which is to be the current working directory when the command is executed.
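
As a brief sketch of workingDir, the hypothetical step below runs a Maven build from a directory under /workspace. The step name, the image, the directory /workspace/source and the Maven arguments are all assumptions for illustration.

```yaml
steps:
  - name: maven-build                  # hypothetical step name
    image: registry.access.redhat.com/ubi8/openjdk-11   # assumed image that provides Maven
    # The command below starts with this directory as its current working directory,
    # so Maven looks for pom.xml in /workspace/source.
    workingDir: /workspace/source
    command:
      - mvn
      - package
```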

parameters

Textual information that is required by a step, such as a path, the name of an object or a username. In a similar manner to volumeMounts, parameters are defined outside the scope of any step within a task and are then referenced from within the step. The example below shows the definition of the TLSVERIFY parameter with a name, description, type and default value, together with the use of the parameter using the syntax $(params.<parameter-name>).

    kind: Task
    spec:
      params:
        - name: TLSVERIFY
          description: Verify the TLS on the registry endpoint
          type: string
          default: 'false'
      steps:
        - name: build
          command:
            - buildah
            - bud
            - '--tls-verify=$(params.TLSVERIFY)'

Resources

Resources are similar to the parameters described above in that a reference to the resource is declared within the task and the steps then use the resource in commands. The example below shows the use of a resource called intermediate-image which is used as an output in a step within the task, meaning that the image is created by a command in the step. The example also shows the use of a Git input resource called source.

In Tekton there is no explicit Git pull command. Simply including a Git resource in a task definition will result in a Git pull action taking place, before any steps execute, which will pull the content of the Git repository to a location of /workspace/<git-resource-name>. In the example below the Git repository content is pulled to /workspace/source.

    kind: Task
    spec:
      resources:
        inputs:
          - name: source
            type: git
        outputs:
          - name: intermediate-image
            type: image
      steps:
        - name: build
          command:
            - buildah
            - bud
            - '-t'
            - $(resources.outputs.intermediate-image.url)

Resources may reference either an image or a Git repository and the resource entity is defined in a separate YAML file. Image resources may be defined as either input or output resources depending on whether an existing image is to be consumed by a step or whether the image is to be created by a step.

Examples of the definition of Git and image resources are shown below.

Git resource

    apiVersion: tekton.dev/v1alpha1
    kind: PipelineResource
    metadata:
      name: liberty-rest-app-source-code
      namespace: liberty-rest
    spec:
      params:
        - name: url
          value: https://github.com/marrober/pipelineBuildExample.git
      type: git

The Git resource can also have a revision parameter which can be a reference to either a branch, tag, commit SHA or ref. If a revision is not specified, the resource inspects the remote repository to determine the correct default branch from which to pull.
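
A sketch of the same Git resource with a revision parameter added is shown here. The branch name master is an assumption for illustration; a tag or commit SHA could be used in its place.

```yaml
apiVersion: tekton.dev/v1alpha1
kind: PipelineResource
metadata:
  name: liberty-rest-app-source-code
  namespace: liberty-rest
spec:
  params:
    - name: url
      value: https://github.com/marrober/pipelineBuildExample.git
    # Assumed branch name; replace with the branch, tag or commit SHA to build.
    - name: revision
      value: master
  type: git
```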

Image resource

    apiVersion: tekton.dev/v1alpha1
    kind: PipelineResource
    metadata:
      name: liberty-rest-app
      namespace: liberty-rest
    spec:
      params:
        - name: url
          value: [image registry url]:5000/liberty-rest/liberty-rest-app
      type: image

The image resource does not have to point to a valid image stream on OpenShift. It is possible to create images within a local Buildah registry (stored on a shared volume as described above), manipulate the image, and then push it to an OpenShift or Quay image repository when required. An example of an image resource that does not point to an image stream is shown below.

    apiVersion: tekton.dev/v1alpha1
    kind: PipelineResource
    metadata:
      name: intermediate
      namespace: liberty-rest
    spec:
      params:
        - name: url
          value: intermediate
      type: image
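
Resource definitions such as these are connected to a task through the pipeline and the pipelineRun. The sketch below shows one way that wiring might look, assuming the tekton.dev/v1beta1 API. The pipeline name, the task name build-task and the pipeline-level resource name app-source are hypothetical; the names source, intermediate-image, liberty-rest-app-source-code and intermediate come from the examples in this article.

```yaml
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: example-build-pipeline       # hypothetical pipeline name
spec:
  # Resources the pipeline expects to be given when it runs.
  resources:
    - name: app-source               # hypothetical pipeline-level name
      type: git
    - name: intermediate-image
      type: image
  tasks:
    - name: build
      taskRef:
        name: build-task             # hypothetical task declaring 'source' and 'intermediate-image'
      resources:
        inputs:
          # Task resource 'source' is satisfied by pipeline resource 'app-source'.
          - name: source
            resource: app-source
        outputs:
          - name: intermediate-image
            resource: intermediate-image
---
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  generateName: example-build-pipeline-run-
spec:
  pipelineRef:
    name: example-build-pipeline
  # Bind each pipeline resource to a PipelineResource object by name.
  resources:
    - name: app-source
      resourceRef:
        name: liberty-rest-app-source-code
    - name: intermediate-image
      resourceRef:
        name: intermediate
```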

Workspace

A workspace is similar to a volume in that it provides storage that can be shared across multiple tasks. A persistent volume claim is required to be created first and then the intent to use the volume is declared within the pipeline and task before mapping the workspace into an individual step such that it is mounted. Workspaces and volumes are similar in behaviour but are defined in slightly different places.
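
The three places in which a workspace is declared can be sketched as follows, assuming the tekton.dev/v1beta1 API. The task, pipeline, workspace and file names are hypothetical, and the persistent volume claim name is reused from the volume example earlier in this article purely for illustration.

```yaml
# 1. The task declares that it needs a workspace and uses its path in a step.
apiVersion: tekton.dev/v1beta1
kind: Task
metadata:
  name: write-message                # hypothetical task name
spec:
  workspaces:
    - name: shared-data
  steps:
    - name: write
      image: registry.access.redhat.com/ubi8/ubi-minimal   # assumed image that provides a shell
      script: |
        #!/bin/sh
        echo "hello" > $(workspaces.shared-data.path)/message.txt
---
# 2. The pipeline declares its own workspace and maps it onto the task's workspace.
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: workspace-example            # hypothetical pipeline name
spec:
  workspaces:
    - name: pipeline-storage
  tasks:
    - name: write
      taskRef:
        name: write-message
      workspaces:
        - name: shared-data
          workspace: pipeline-storage
---
# 3. The pipelineRun binds the pipeline workspace to an existing persistent volume claim.
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  generateName: workspace-example-run-
spec:
  pipelineRef:
    name: workspace-example
  workspaces:
    - name: pipeline-storage
      persistentVolumeClaim:
        claimName: pipeline-task-cache-pvc
```

Any other task in the pipeline that maps one of its workspaces to pipeline-storage would see the file written by this task.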

Image

Since each Tekton step runs within its own container, the image used to create that container must be referenced as shown in the example below.

    steps:
      - name: build
        command:
          - buildah
          - bud
          - '-t'
          - $(resources.outputs.intermediate-image.url)
        image: registry.redhat.io/rhel8/buildah

Summary

This article introduced some of the basic building block resources of a Tekton pipeline, and elements of the YAML that is used within those resources.

What’s next

The next article in this series covers the OpenShift ‘source 2 image’ process and how this innovative software build process can be used from a pipeline process in Tekton.
