In this article, I will demonstrate how to monitor an OpenShift cluster using Zabbix and Prometheus. We will collect the Prometheus metrics exposed in the openshift-monitoring project, register them as items in Zabbix, and create triggers that alert us to any problems.

If you have not read the first part of this article, which demonstrates how to install and configure an external Zabbix server and use the Zabbix Operator on OpenShift, click here to check it out.

In this monitoring setup, we will use the following Zabbix features:

- HTTP agent: used to collect our metrics.

- LLD (low-level discovery): automatically discovers objects and can create items and triggers for them.

Using an HTTP agent item, we will point Zabbix at a /metrics endpoint taken from the Prometheus target list; this item stores the raw /metrics output in Zabbix.

Using LLD, we will parse this output and filter the metrics that interest us. Once this is done, we will create rules for the automatic creation of items and triggers.

Now that we know which features we will use, let's get started:

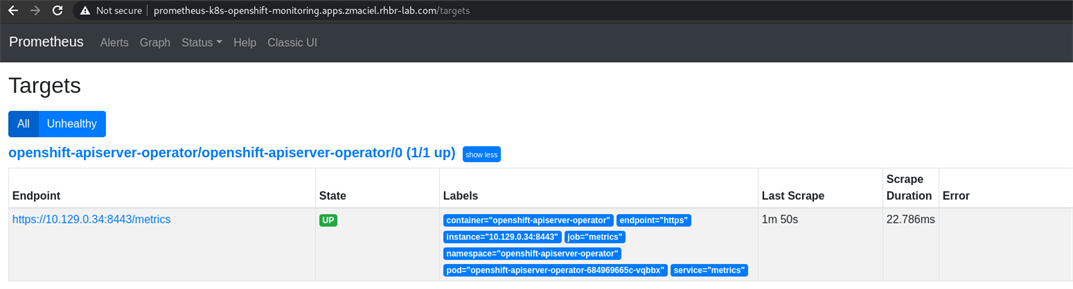

1. Get the Prometheus URL and open its /targets page to see which targets we can monitor with Zabbix:

```shell
$ oc get routes -n openshift-monitoring | grep prometheus-k8s
```

2. After identifying the Prometheus URL, open it in a browser and look for endpoints whose IP addresses are in the same range as the OpenShift nodes (in my example, 10.36.250.x).

In this example, we will monitor the status of the Cluster Operators, so we will use the endpoint that exposes their metrics.

3. When we open the URL of the cluster-version-operator target, we can see several metrics related to operators. In this example, we will use the cluster_operator_up metric, which reports 1 when the operator is OK and 0 when there is a problem.
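As a quick illustration of how cluster_operator_up can be read, here is a minimal Python sketch (not part of the Zabbix setup) that scans the plain-text /metrics output and lists the operators reporting 0. The function name operators_down and the sample values are hypothetical, and it assumes every sample carries a name label, as cluster_operator_up does in this article.

```python
import re
from typing import List

# Matches samples such as: cluster_operator_up{name="authentication",version="4.7.3"} 1
SAMPLE_RE = re.compile(r'^cluster_operator_up\{(?P<labels>[^}]*)\}\s+(?P<value>\S+)')
# Matches the name="..." label at the start of the label set or right after a comma.
NAME_RE = re.compile(r'(?:^|,)\s*name="(?P<name>[^"]*)"')


def operators_down(metrics_text: str) -> List[str]:
    """Return the operators whose cluster_operator_up sample is 0."""
    down = []
    for line in metrics_text.splitlines():
        match = SAMPLE_RE.match(line.strip())
        if not match:
            continue  # skips # HELP / # TYPE comments and every other metric
        name = NAME_RE.search(match.group("labels"))
        if name and float(match.group("value")) == 0:
            down.append(name.group("name"))
    return down


# Hypothetical excerpt of the /metrics output (values invented for illustration).
SAMPLE = """\
# a comment line, ignored by the parser
cluster_operator_up{name="authentication",version="4.7.3"} 1
cluster_operator_up{name="dns",version="4.7.3"} 0
"""

print(operators_down(SAMPLE))  # ['dns']
```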

4. In Zabbix, go to Configuration > Hosts > Create host and add a new host. For easy identification, give it a friendly, descriptive name, select the appropriate group, and click Add.

5. Now click the host we added, go to Items > Create item, fill in the fields listed below, and click Add:

| Option | Value |
|---|---|
| Name | Prometheus Metrics Operators |
| Type | HTTP agent |
| Key | prom_operators |
| URL | https://{{ IP ADDRESS }}:9099/metrics |
| Request type | GET |
| Request body type | Raw data |
| Type of Information | Text |
| Update Interval | 30s |

Note: values such as Name, Key, and Update Interval can be customized.

6. Now let's create our discovery rule: go to Configuration > Hosts, click the host we just created, then go to Discovery rules > Create discovery rule.

| Option | Value |
|---|---|
| Name | LLD Cluster Operator Up |
| Type | Dependent item |
| Key | lld.co.sts |
| Master item | Prometheus Metrics Operators |

Give the Name field a descriptive name. For Type, use Dependent item, since this LLD rule depends on the HTTP agent item created in the previous step. In Master item, select that item, Prometheus Metrics Operators.

7. Still on this screen, click Preprocessing. Under the preprocessing steps, click Add, select Prometheus to JSON, and in Parameters enter one of the metrics we want to collect, for example:

```
cluster_operator_up{name="authentication",version="4.7.3"}
```

Since we want the discovery to be automatic, we replace the name and version values with regular expressions, as in the example below:

```
cluster_operator_up{name=~".*",version=~".*"}
```

This step converts our plain-text metrics collection to JSON. To validate the result and identify the fields, we will use the Test option.

Click Test on the right side, paste into the Value field a metric as it is collected by Prometheus, and click Test. Then click Result: the metric is displayed in JSON format, and we now know which fields we need to map in the next step.

To make it easier to visualize, this is the structure of our metric in JSON format:

```json
[
  {
    "name": "cluster_operator_up",
    "value": "1",
    "line_raw": "cluster_operator_up{name=\"authentication\",version=\"4.7.3\"} 1",
    "labels": {
      "name": "authentication",
      "version": "4.7.3"
    },
    "type": "untyped"
  }
]
```
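The Prometheus to JSON step can be approximated in Python to see where these fields come from. This is a simplified sketch, not Zabbix's implementation: it assumes each sample fits on one line and that label values contain no closing brace, and it treats `=~` as a full-string regular expression match (as Prometheus does). The metric some_other_metric, the function name, and all values are invented for illustration.

```python
import json
import re
from typing import Dict, List

# One sample line: metric name, optional {labels}, value (an optional timestamp is ignored).
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?\s+(?P<value>\S+)'
)
# One label pair inside the braces, e.g. name="authentication".
LABEL_RE = re.compile(r'(?P<key>[a-zA-Z_][a-zA-Z0-9_]*)="(?P<val>(?:[^"\\]|\\.)*)"')


def prometheus_to_json(text: str, metric: str, label_regexes: Dict[str, str]) -> List[dict]:
    """Keep samples of `metric` whose labels fully match every regex in `label_regexes`."""
    types: Dict[str, str] = {}
    result = []
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# TYPE "):
            parts = line.split()
            if len(parts) >= 4:
                types[parts[2]] = parts[3]  # remember the declared metric type
            continue
        if not line or line.startswith("#"):
            continue  # blank lines, # HELP and other comments
        match = SAMPLE_RE.match(line)
        if not match or match.group("name") != metric:
            continue
        labels = {m.group("key"): m.group("val")
                  for m in LABEL_RE.finditer(match.group("labels") or "")}
        if all(re.fullmatch(rx, labels.get(key, "")) for key, rx in label_regexes.items()):
            result.append({
                "name": match.group("name"),
                "value": match.group("value"),
                "line_raw": line,
                "labels": labels,
                "type": types.get(metric, "untyped"),  # no # TYPE line -> untyped
            })
    return result


# Hypothetical /metrics excerpt; some_other_metric is invented to show the filtering.
TEXT = """\
cluster_operator_up{name="authentication",version="4.7.3"} 1
cluster_operator_up{name="dns",version="4.7.3"} 0
some_other_metric{name="dns"} 5
"""

# Counterpart of the parameter cluster_operator_up{name=~".*",version=~".*"}
rows = prometheus_to_json(TEXT, "cluster_operator_up", {"name": ".*", "version": ".*"})
print(json.dumps(rows[0], indent=2))
```

The printed object carries the same name, value, line_raw, labels, and type fields as the JSON structure above.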

8. Now click LLD macros. In this step, we map fields of our JSON to macros, that is, variables for Zabbix. The names in the LLD macro column can be customized as needed, as long as they keep the {#NAME} format. In the JSONPath column, specify each path according to our JSON, for example $['name'] and $.labels['name']. When done, click Add.

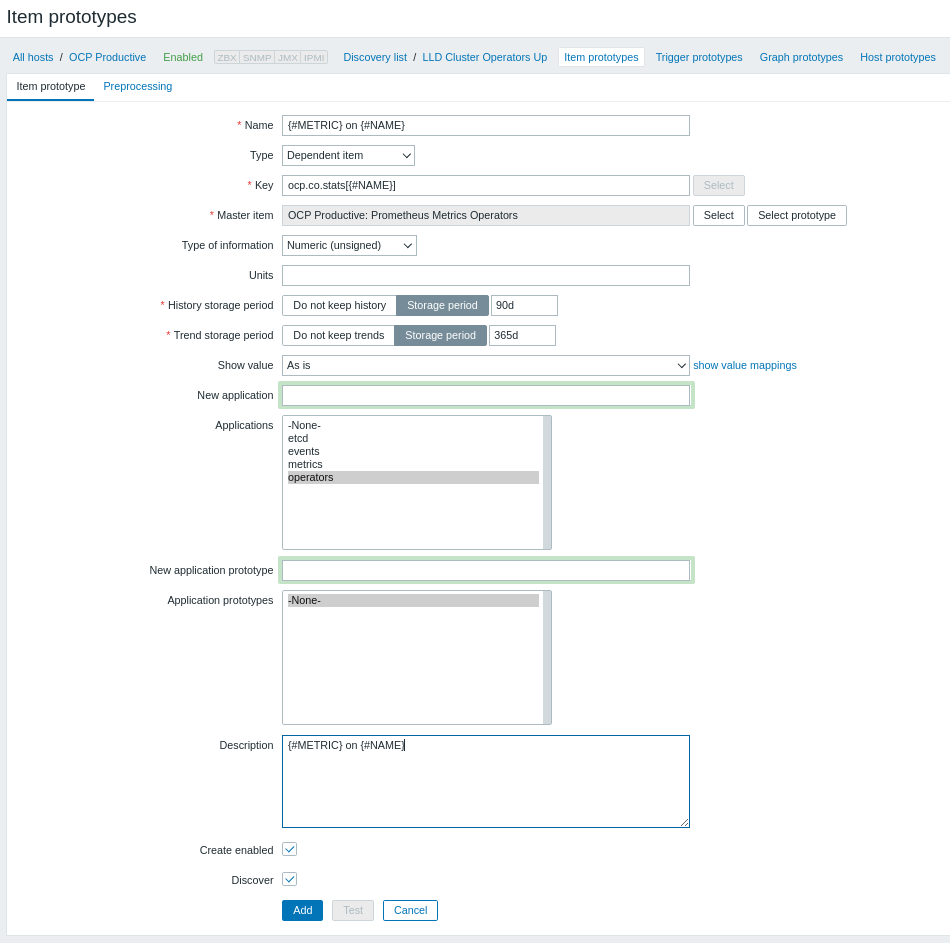
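The mapping from JSON fields to LLD macros can be pictured with the following sketch. It resolves only the two JSONPath forms used here, `$['name']` and `$.labels['name']`, rather than full JSONPath. The {#METRIC} and {#NAME} macros match the names used in the item prototype in step 9; the {#VERSION} mapping to `$.labels['version']` is an assumption inferred from the Prometheus pattern in step 10. Function names are hypothetical.

```python
import re
from typing import Any, Dict, List

# Only dot access (.labels) and bracket access (['name']) are supported here.
PATH_TOKEN = re.compile(r"\.([A-Za-z_]\w*)|\['([^']*)'\]")

# LLD macro -> JSONPath. {#VERSION} is an assumption (see the lead-in).
LLD_MACROS = {
    "{#METRIC}": "$['name']",
    "{#NAME}": "$.labels['name']",
    "{#VERSION}": "$.labels['version']",
}


def jsonpath_get(obj: Any, path: str) -> Any:
    """Resolve a simple JSONPath such as $['name'] or $.labels['name']."""
    if not path.startswith("$"):
        raise ValueError(f"JSONPath must start with $: {path}")
    pos = 1
    while pos < len(path):
        token = PATH_TOKEN.match(path, pos)
        if token is None:
            raise ValueError(f"unsupported JSONPath: {path}")
        key = token.group(1) if token.group(1) is not None else token.group(2)
        obj = obj[key]
        pos = token.end()
    return obj


def lld_rows(objects: List[Dict[str, Any]]) -> List[Dict[str, str]]:
    """Turn each discovered JSON object into one row of macro values."""
    return [{macro: str(jsonpath_get(o, path)) for macro, path in LLD_MACROS.items()}
            for o in objects]


discovered = [{
    "name": "cluster_operator_up",
    "value": "1",
    "line_raw": 'cluster_operator_up{name="authentication",version="4.7.3"} 1',
    "labels": {"name": "authentication", "version": "4.7.3"},
    "type": "untyped",
}]
print(lld_rows(discovered))
# [{'{#METRIC}': 'cluster_operator_up', '{#NAME}': 'authentication', '{#VERSION}': '4.7.3'}]
```

Each row corresponds to one discovered operator, and these macro values are what the prototypes in the next steps are filled with.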

9. After creating our LLD rule, we want it to create our collection items automatically, that is, one item for each operator discovered by the rule. To do so, go to Item prototypes > Create item prototype.

| Option | Value |
|---|---|
| Name | {#METRIC} on {#NAME} |
| Type | Dependent item |
| Key | ocp.co.stats[{#NAME}] |
| Master item | OCP Productive: Prometheus Metrics Operators |
| Type of Information | Numeric (unsigned) |
| New Application / Applications | Operators |
| Description | {#METRIC} on {#NAME} |

In the Name, Key, and Description fields, we use the macros created earlier to customize the items being created. The Key value can be customized as you see fit, but it must include a macro with a unique value so that no duplicate items are generated.

The New application option can be used to organize the created items by type of collection. Since this collection refers to operators, I added an application called Operators.

Note that these items have no collection interval of their own: as dependent items, they are updated each time the HTTP agent master item created in step 5 is collected.

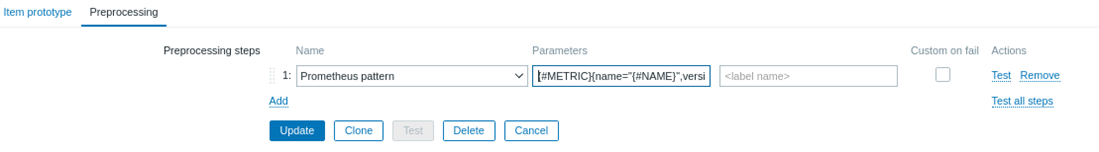
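To see why the key needs a macro with a unique value, here is a sketch of how one item prototype expands into one item per LLD row. Zabbix requires item keys to be unique per host; this sketch simply raises an error on a duplicate key to make the collision visible. The function names and the rows are hypothetical.

```python
from typing import Dict, List

# Item prototype fields from the table in step 9.
PROTOTYPE = {
    "name": "{#METRIC} on {#NAME}",
    "key": "ocp.co.stats[{#NAME}]",
    "description": "{#METRIC} on {#NAME}",
}


def expand(template: str, row: Dict[str, str]) -> str:
    """Replace every LLD macro in `template` with its value from `row`."""
    for macro, value in row.items():
        template = template.replace(macro, value)
    return template


def create_items(rows: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Expand the prototype once per LLD row, refusing duplicate item keys."""
    items, seen_keys = [], set()
    for row in rows:
        item = {field: expand(tpl, row) for field, tpl in PROTOTYPE.items()}
        if item["key"] in seen_keys:
            raise ValueError(f"duplicate item key: {item['key']}")
        seen_keys.add(item["key"])
        items.append(item)
    return items


rows = [
    {"{#METRIC}": "cluster_operator_up", "{#NAME}": "authentication"},
    {"{#METRIC}": "cluster_operator_up", "{#NAME}": "dns"},
]
for item in create_items(rows):
    print(item["key"], "->", item["name"])
# ocp.co.stats[authentication] -> cluster_operator_up on authentication
# ocp.co.stats[dns] -> cluster_operator_up on dns
```

If the key used only {#METRIC}, for example ocp.co.stats[{#METRIC}], both rows would produce ocp.co.stats[cluster_operator_up], which is exactly the duplication the key must avoid.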

10. Still in Item prototype, click Preprocessing. Under the preprocessing steps, click Add, select Prometheus pattern, and in Parameters enter the syntax below:

```
{#METRIC}{name="{#NAME}",version="{#VERSION}"}
```

This syntax follows the format in which the metric is displayed, but uses the macros created in step 8 instead of literal values. Click Add (or Update, if you already saved the item prototype).

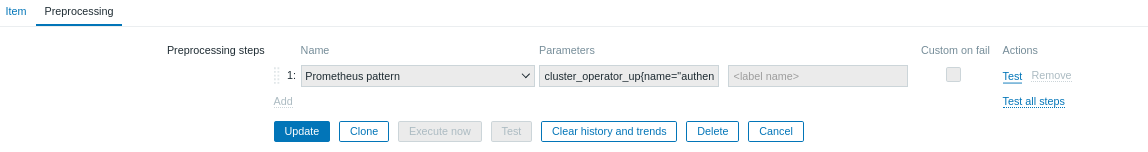

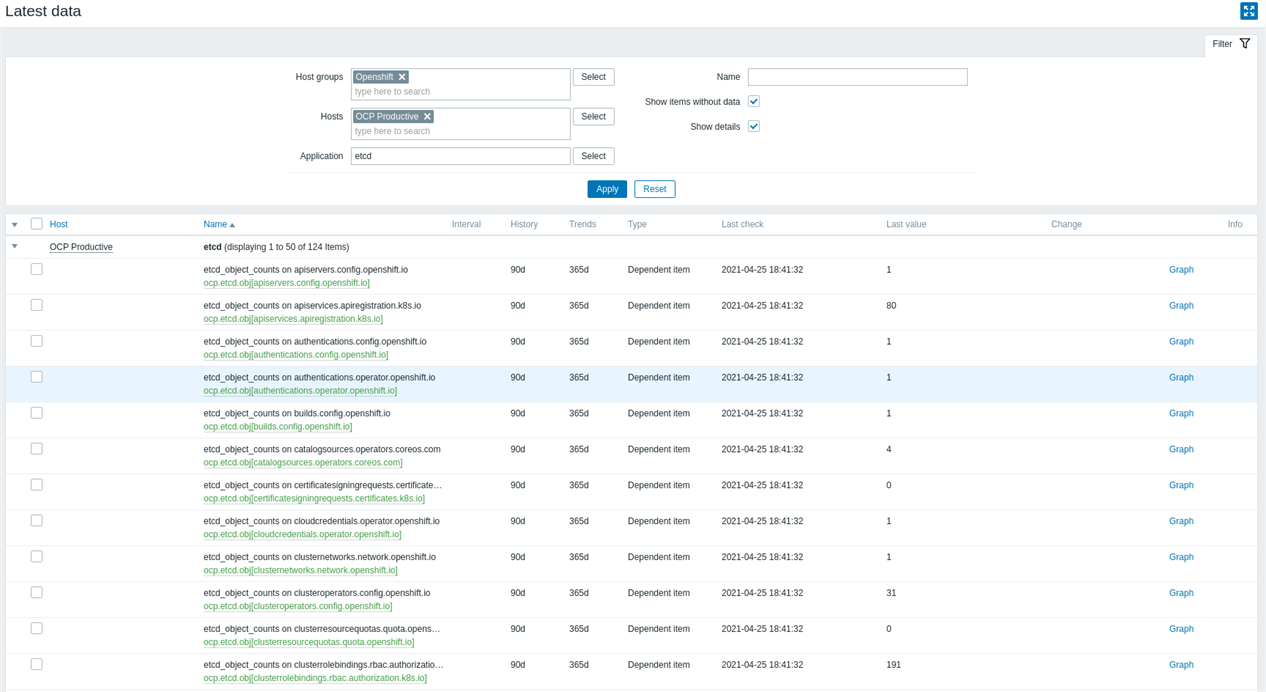
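Here is a rough Python sketch of the Prometheus pattern step: first the macros from an LLD row are substituted into the parameter, then the first sample whose metric name and labels all equal the pattern's is selected and its value returned. It models only exact label equality, which is what this pattern uses; the metric values and function names are hypothetical, and how Zabbix handles several matching lines is not modelled here.

```python
import re
from typing import Dict, Optional

SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?\s+(?P<value>\S+)'
)
PATTERN_RE = re.compile(r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?$')
LABEL_RE = re.compile(r'(?P<key>[a-zA-Z_][a-zA-Z0-9_]*)="(?P<val>(?:[^"\\]|\\.)*)"')

# Preprocessing parameter from step 10, before macro expansion.
PATTERN_TEMPLATE = '{#METRIC}{name="{#NAME}",version="{#VERSION}"}'


def parse_labels(text: Optional[str]) -> Dict[str, str]:
    """Parse 'a="1",b="2"' into {'a': '1', 'b': '2'}."""
    return {m.group("key"): m.group("val") for m in LABEL_RE.finditer(text or "")}


def prometheus_pattern(metrics_text: str, pattern: str) -> Optional[str]:
    """Return the value of the first sample whose name and labels equal the pattern's."""
    wanted = PATTERN_RE.match(pattern.strip())
    if wanted is None:
        raise ValueError(f"unsupported pattern: {pattern}")
    wanted_labels = parse_labels(wanted.group("labels"))
    for raw in metrics_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        sample = SAMPLE_RE.match(line)
        if sample is None or sample.group("name") != wanted.group("name"):
            continue
        labels = parse_labels(sample.group("labels"))
        if all(labels.get(k) == v for k, v in wanted_labels.items()):
            return sample.group("value")
    return None


METRICS = """\
cluster_operator_up{name="authentication",version="4.7.3"} 1
cluster_operator_up{name="dns",version="4.7.3"} 0
"""

# Hypothetical LLD row for the dns operator.
row = {"{#METRIC}": "cluster_operator_up", "{#NAME}": "dns", "{#VERSION}": "4.7.3"}
pattern = PATTERN_TEMPLATE
for macro, value in row.items():
    pattern = pattern.replace(macro, value)
print(pattern)                               # cluster_operator_up{name="dns",version="4.7.3"}
print(prometheus_pattern(METRICS, pattern))  # 0
```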

11. Now let's validate that our LLD is working: go to Monitoring > Latest data, use the filters to narrow the search (host group, host, and application), and click Apply.

If everything is correct, we will see our items with their collected values. To verify that an item was created correctly, click the item, open Preprocessing, and check the Parameters field, which was filled in automatically by the LLD.

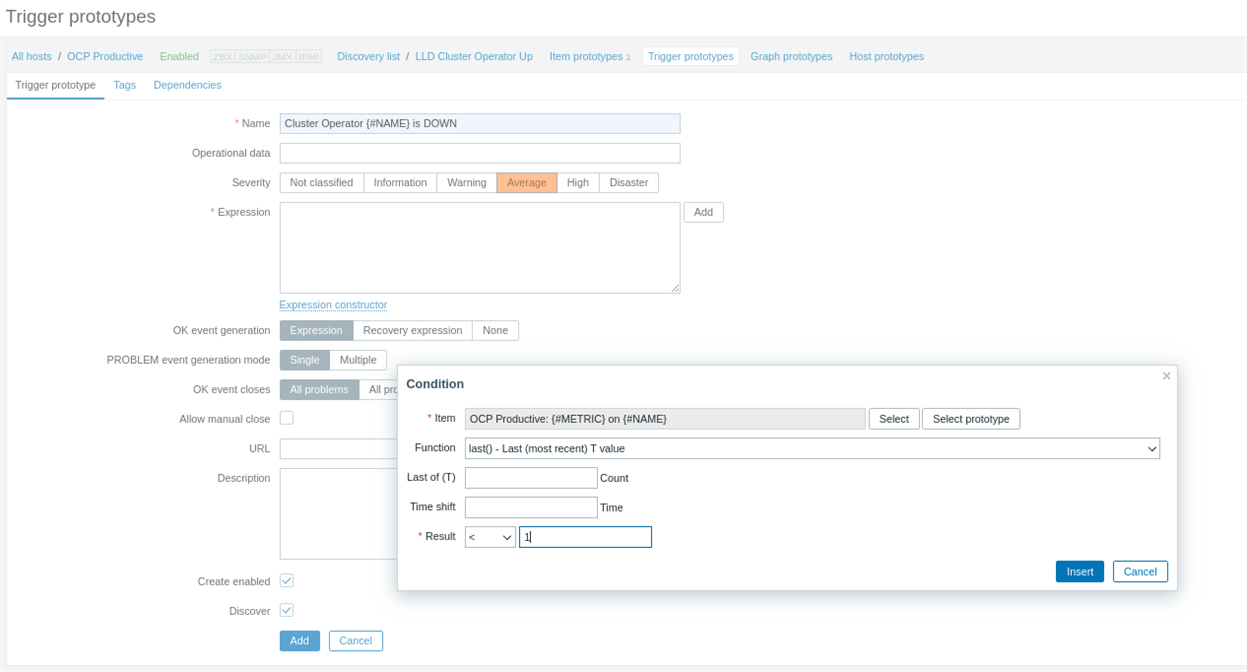

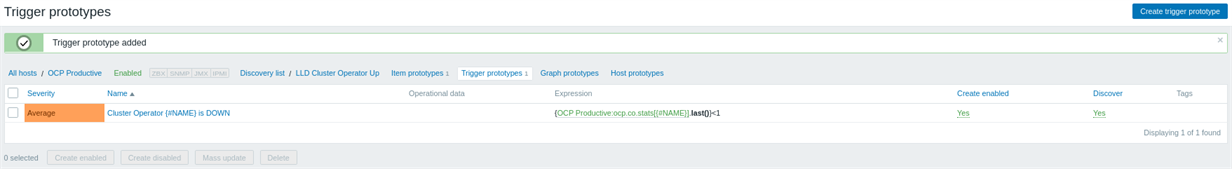

12. Now that our items are being collected, we need a trigger to let us know when there is a problem with any of our operators. Go to Configuration > Hosts, click the host we created, then Discovery rules, click the LLD rule we created, and go to Trigger prototypes > Create trigger prototype.

Set the trigger name and severity you want. In Expression, click Add; under Item, click Select prototype and choose our item prototype; in Function, keep last(); and in Result, choose < (less than) 1.

Remember that the metric reports 1 for OK and 0 for NOK (not OK).

Click Insert and then click Add.
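In the trigger expression syntax of Zabbix versions before 5.4 (the versions that still have applications), the condition built above should look roughly like `{<host>:ocp.co.stats[{#NAME}].last()}<1`, where `<host>` stands for your host name; Zabbix 5.4 and later write it as `last(/<host>/ocp.co.stats[{#NAME}])<1`. The sketch below only mimics the logic with invented values: last() looks at the most recent value, so an operator that has recovered no longer fires. The function names are hypothetical.

```python
from typing import Dict, List


def last(history: List[float]) -> float:
    """Counterpart of the trigger function last(): the most recent collected value."""
    return history[-1]


def firing(histories: Dict[str, List[float]]) -> List[str]:
    """Item keys whose trigger condition last() < 1 is currently true."""
    return sorted(key for key, values in histories.items() if values and last(values) < 1)


# Hypothetical collected values per discovered item (oldest first).
histories = {
    "ocp.co.stats[authentication]": [1, 1, 1],
    "ocp.co.stats[dns]": [1, 1, 0],   # went down on the latest collection
    "ocp.co.stats[etcd]": [0, 1],     # recovered, so last() is 1 again
}
print(firing(histories))  # ['ocp.co.stats[dns]']
```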

Now that we have our trigger created, let's test it.

13. To view our alerts, go to Monitoring > Dashboard, where any active problems from our triggers are displayed.

To check whether an alert is correct, we can access our /metrics endpoint again and check the values of the metrics added by our LLD.

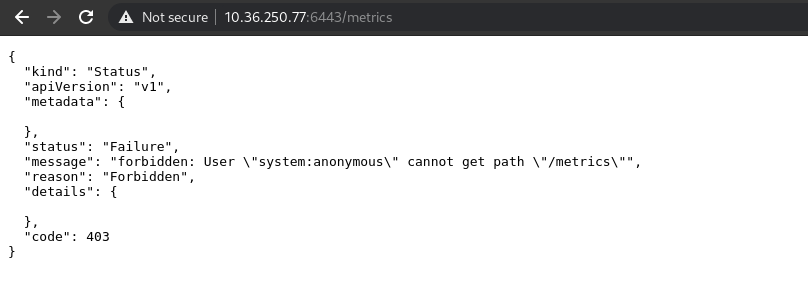

14. If you choose a metrics endpoint that requires authentication to monitor API data, such as kube-apiserver, first access it via a browser or cURL to confirm that an authentication error is returned:

```shell
$ curl -k https://10.36.250.77:6443/metrics
```

15. Now, in OpenShift, list the service accounts in the zabbix project:

```shell
$ oc project zabbix
$ oc get sa
```

Grant the cluster-reader role to the zabbix-agent service account so that it is authorized to read this endpoint:

```shell
$ oc adm policy add-cluster-role-to-user cluster-reader -z zabbix-agent
```

To validate that the service account can now authenticate, test with curl, passing the token in a bearer Authorization header:

```shell
$ TOKEN=$(oc sa get-token zabbix-agent)
$ curl -Ik -H "Authorization: Bearer $TOKEN" https://10.36.250.77:6443/metrics
HTTP/2 200
audit-id: 6d3ff3b6-b687-494c-ae04-ec46e813aea1
cache-control: no-cache, private
content-type: text/plain; version=0.0.4; charset=utf-8
x-kubernetes-pf-flowschema-uid: 3a22c354-288e-4c16-ac53-f828d6e66303
x-kubernetes-pf-prioritylevel-uid: a4bc8a8e-784c-45ab-b68b-1620bfb48ef5
date: Sun, 25 Apr 2021 21:25:34 GMT
```

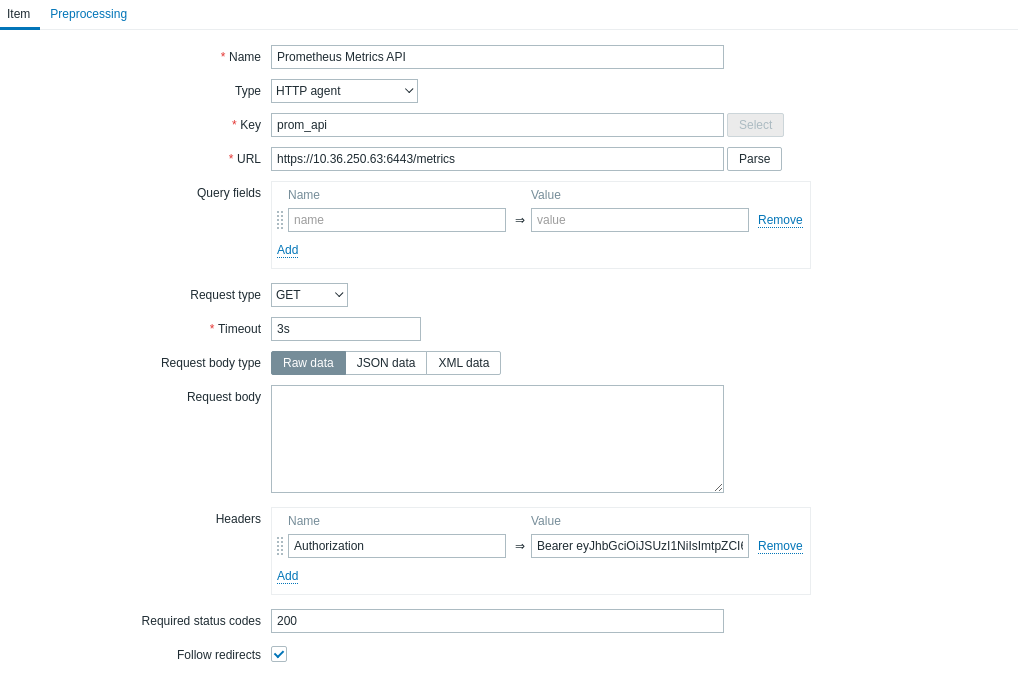

16. In Zabbix, register the metric collection item: go to Configuration > Hosts, select the host created earlier, then go to Items > Create item.

To authenticate with a bearer token, add it under Headers: set Name to Authorization and Value to Bearer followed by the token, as in the example below:

| Authorization | Bearer xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx |
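For comparison with the curl test in step 15, this Python sketch builds the same kind of GET request the HTTP agent item sends, with the token in the Authorization header. It uses only the standard library; the function names are hypothetical, the token is a placeholder, and `verify_tls=False` reproduces `curl -k`, which is only advisable in a lab.

```python
import ssl
import urllib.request


def build_metrics_request(url: str, token: str) -> urllib.request.Request:
    """GET request carrying the token in the Authorization header, like the item's Headers field."""
    return urllib.request.Request(
        url, method="GET", headers={"Authorization": f"Bearer {token}"}
    )


def fetch_metrics(url: str, token: str, verify_tls: bool = True, timeout: float = 10.0) -> str:
    """Send the request and return the plain-text /metrics body."""
    context = ssl.create_default_context()
    if not verify_tls:
        # Same effect as curl -k: skip hostname and certificate checks (lab use only).
        context.check_hostname = False
        context.verify_mode = ssl.CERT_NONE
    request = build_metrics_request(url, token)
    with urllib.request.urlopen(request, context=context, timeout=timeout) as response:
        return response.read().decode("utf-8")


# Placeholder token; in practice this would be the value of $TOKEN from step 15.
req = build_metrics_request("https://10.36.250.77:6443/metrics", "example-token")
print(req.get_method(), req.get_header("Authorization"))  # GET Bearer example-token
```

Calling `fetch_metrics(url, token)` against a reachable endpoint would return the same plain-text output that the item stores in Zabbix.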

Now repeat steps 6 through 12: analyze the available endpoints, validate which metrics make sense for monitoring your environment, and add them to Zabbix using these steps.

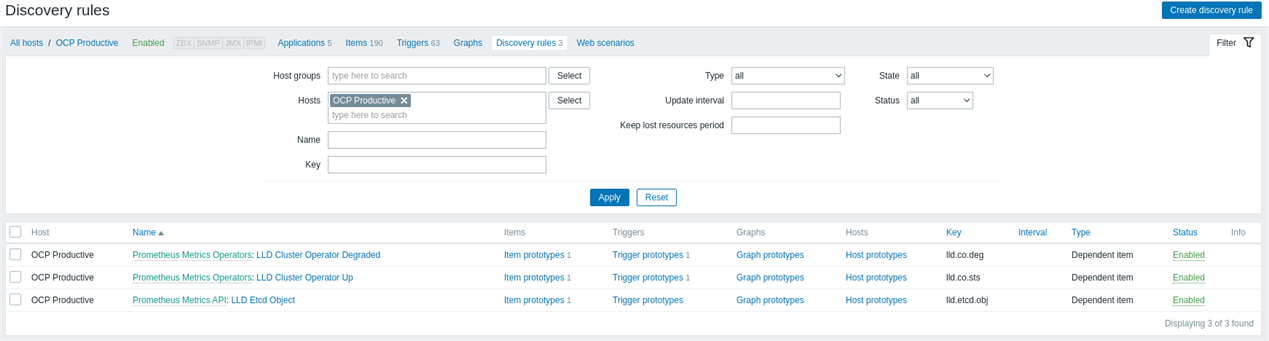

LLD rules for operators with Degraded status and for etcd objects

Etcd items created by the LLD, already showing their most recent values

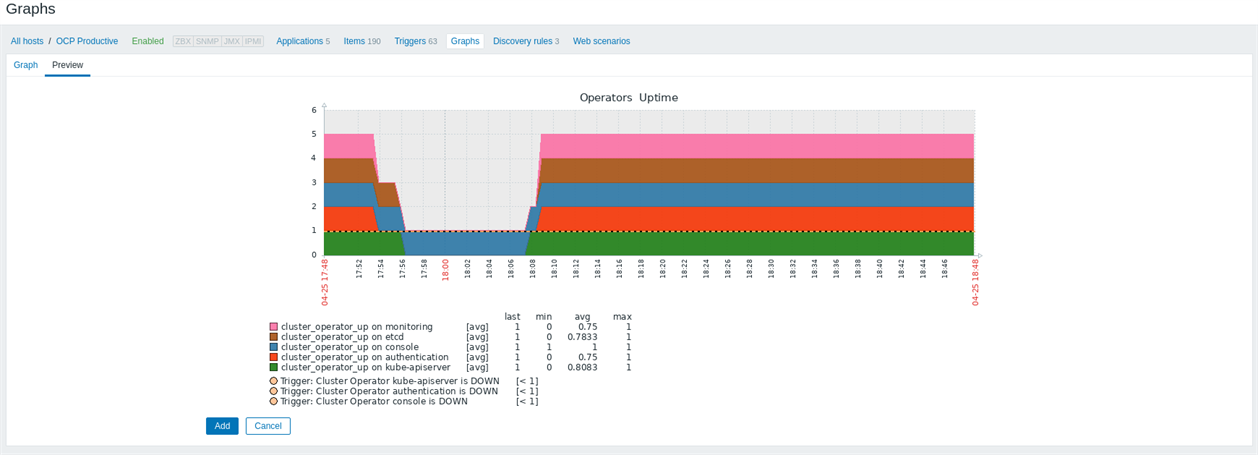

After creating all your collections and triggers, take the opportunity to create some graphs with indicators for your environment.

Conclusion

We can use Zabbix to collect the Prometheus metrics in the openshift-monitoring project, add graphs for a timeline view, and give the monitoring team a centralized view.
