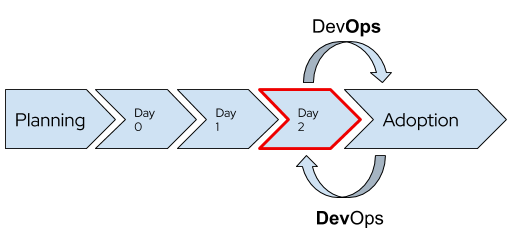

In the previous post we discussed how the installation process changed from OpenShift 3 to OpenShift 4, with many tasks moved from being a part of the installer to being post-install or “day 2” tasks. OpenShift 4 also introduced Operators as the core management paradigm for many features and functionality. This means that a substantial amount of customization to the cluster is done after initial deployment.

This change provides some interesting benefits. For example, because the post-install configuration is done using standard Kubernetes YAML objects instead of Ansible playbooks, I can now revision control each aspect of my deployment in a very granular fashion and adopt a GitOps management philosophy if desired. Additionally, it is easy to have a single “standard” deployment for the initial install, but then I can quickly customize the cluster for a specific purpose by choosing which of the revision controlled YAML files to apply. Since the configuration is applied by Operators, I do not have to be aware of specific dependencies; instead, I can rely on OpenShift itself to manage that for me.
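As a rough illustration of choosing which revision-controlled files to apply, one option is a Kustomize file that lists only the manifests a given cluster should receive. This is a sketch rather than something the post prescribes: the directory layout and every file name below are hypothetical.

```yaml
# kustomization.yaml (hypothetical layout; all file names are illustrative)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  # Applied to every cluster after the "standard" install
  - base/etcd-encryption.yaml
  - base/machine-health-check.yaml
  # Applied only to clusters customized for a specific purpose
  - gpu/machineset-gpu.yaml
```

Running `oc apply -k <directory>` against the directory holding this file would then create only the listed objects, and the Git history of that directory becomes the record of what changed and when.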

That being said, we understand that it is a change from before, and one of the most frequent requests is for a guide that helps organize the configuration that might need to be done post-install. With that in mind, the list below is an incomplete collection of potential tasks. All of this information is sourced from the documentation, but it has been organized here to make it easier for the admin team to discover and apply the configuration that is relevant to them.

Very few, if any, of these are mandatory; rather, they are items that improve the usability, security, and functionality of your deployment so that you, and your applications, can use the full potential of OpenShift.

Compute

- Cluster Tasks

  - If you incorrectly sized the worker nodes during deployment, adjust them by creating one or more new MachineSets (AWS, Azure, GCP), scaling them up, and then scaling the original MachineSets down before removing them.

  - This is also a good time, if desired, to create and configure additional MachineSets dedicated to specific workloads, such as recreating the infrastructure node concept.

  - Decide whether to enable or disable specific feature gates.

  - When using a full-stack automation capable platform, enable and configure cluster autoscaling.

  - Configure etcd encryption.

  - Review recommended etcd practices for large and dense clusters.

  - Back up etcd (and test the restore!).

  - Configure pod disruption budgets to prevent accidental outages.

- Node Tasks

  - Add RHEL 7 Server node(s), if needed.

  - Enable MachineHealthChecks.

  - Pre-deploy additional nodes if needed.

  - Review and apply the relevant recommended host practices.

  - Configure the node features / capabilities.

  - If desired, configure CPU manager.

  - Decide whether to use huge pages.

  - Configure device plugins.

  - Add labels and taints to nodes, using MachineSets, for controlling pod scheduling.

  - Configure node topology manager for NUMA awareness, etc.

  - Optionally, enable overcommitment.

  - Enable garbage collection for node resources.

  - Adjust the node tuning operator for your needs.

  - Adjust pods per node as needed for expected workload.
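Several of the compute tasks above come down to a small YAML object. The following is a minimal sketch of three of them (etcd encryption, cluster autoscaling, and a MachineHealthCheck), assuming the OpenShift 4 API groups as documented at the time of writing. The object names `example-worker-a` and `example-worker-health`, the replica counts, and the timeouts are illustrative assumptions, so check the field names and values against the documentation for your release.

```yaml
# All names and numeric values in this file are illustrative assumptions.
---
# etcd encryption: the APIServer object named "cluster" already exists,
# so this shows only the field you would set on it.
apiVersion: config.openshift.io/v1
kind: APIServer
metadata:
  name: cluster
spec:
  encryption:
    type: aescbc
---
# Cluster autoscaling: cluster-wide limits
apiVersion: autoscaling.openshift.io/v1
kind: ClusterAutoscaler
metadata:
  name: default
spec:
  resourceLimits:
    maxNodesTotal: 12
  scaleDown:
    enabled: true
---
# Cluster autoscaling: bounds for one MachineSet
# ("example-worker-a" is a hypothetical MachineSet name)
apiVersion: autoscaling.openshift.io/v1beta1
kind: MachineAutoscaler
metadata:
  name: example-worker-a
  namespace: openshift-machine-api
spec:
  minReplicas: 1
  maxReplicas: 6
  scaleTargetRef:
    apiVersion: machine.openshift.io/v1beta1
    kind: MachineSet
    name: example-worker-a
---
# MachineHealthCheck: remediate worker machines whose node stays NotReady
apiVersion: machine.openshift.io/v1beta1
kind: MachineHealthCheck
metadata:
  name: example-worker-health
  namespace: openshift-machine-api
spec:
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-machine-role: worker
  unhealthyConditions:
    - type: Ready
      status: "False"
      timeout: 300s
    - type: Ready
      status: "Unknown"
      timeout: 300s
  maxUnhealthy: "40%"
```

Keeping each object in its own file, rather than one combined file as shown here, makes it easier to apply only the pieces a particular cluster needs.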

Network

- If you’re using NetworkPolicy, configure as needed.

- Use the MachineConfig Operator to define and configure additional node networks, if not done at install.

- Configure private DNS, if needed.

- If needed and not done during install, enable and configure the cluster-wide proxy.

- Customize the cluster network, including the SDN, if needed.

- Configure additional networks, for example bridge, MACVLAN, host device, and SR-IOV networks to be attached to pods by Multus.

- Replace the Ingress / Router certificate with one that is not self-signed.

- Configure Ingress traffic for sharding, additional load balancer(s), and/or external IPs.

- If desired, deploy the service mesh.

- Review the “Optimizing routing” documentation.
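Two of the network tasks above lend themselves to a short example. The sketch below shows a pair of NetworkPolicy objects that deny ingress by default and then allow traffic between pods in the same namespace, followed by the cluster-wide Proxy object. The namespace `example-app`, the proxy URL, and the `noProxy` value are placeholders I have assumed for illustration, not values from the documentation.

```yaml
# The namespace, proxy URL, and noProxy value are hypothetical placeholders.
---
# Deny all ingress to every pod in the namespace
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: deny-by-default
  namespace: example-app
spec:
  podSelector: {}
  policyTypes:
    - Ingress
---
# Then allow ingress from pods in the same namespace
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-same-namespace
  namespace: example-app
spec:
  podSelector: {}
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector: {}
---
# Cluster-wide proxy: the Proxy object named "cluster" already exists,
# so this shows only the fields you would set on it.
apiVersion: config.openshift.io/v1
kind: Proxy
metadata:
  name: cluster
spec:
  httpProxy: http://proxy.example.com:3128
  httpsProxy: http://proxy.example.com:3128
  noProxy: .example.internal
```

NetworkPolicy objects are additive, so the second policy widens what the first one denies rather than replacing it.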

Storage

- If you are using OpenShift Container Storage (OCS), deploy it.

- Deploy and configure additional dynamic storage provisioners.

  - Some storage vendors publish and support their dynamic provisioner separately. Be sure to work with your storage team and vendor to determine if they have a CSI provisioner that works with OpenShift.

  - Several partners have created Operators and certified their storage offerings for OpenShift, which can be found in the Marketplace.

- Configure any additional storage class(es) for dynamic provisioner(s).

- Review the “Optimizing storage” documentation.
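For the storage class task above, an additional class for a dynamic provisioner might look roughly like the following. This is a sketch under assumptions: the class name `example-fast` and the provisioner name `csi.example.com` are hypothetical, and any `parameters` block is specific to the driver, so take the real values from your storage vendor's documentation.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: example-fast              # hypothetical class name
  annotations:
    # Leave the existing default storage class in place
    storageclass.kubernetes.io/is-default-class: "false"
provisioner: csi.example.com      # hypothetical CSI driver name
reclaimPolicy: Delete
volumeBindingMode: WaitForFirstConsumer
allowVolumeExpansion: true        # only meaningful if the driver supports expansion
```

A PersistentVolumeClaim then selects this class by setting `storageClassName: example-fast`.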

Making Rational Changes

OpenShift has an almost dizzying number of features and capabilities, which can be configured, customized, adjusted, and otherwise “fiddled with” endlessly. Fortunately, the defaults are sane and safe for almost all instances, so you can choose which are the most important for you and your applications to adjust, while trusting that the others are working just fine.

This article has covered a large swath of the options, but they are changing and growing with each release. It is important to keep up with the changes using the release notes and to periodically review the documentation, as a whole, for new and interesting capabilities that are relevant to you.
