What Is a Workload?

The Merriam-Webster dictionary defines a workload as “the amount of work performed or capable of being performed (as by a mechanical device) usually within a specific period.” In computing, the term typically refers to any program or application that runs on a computer. The definition is often loosely applied and can describe a simple “hello world” program or a complex monolithic application. Today, the terms workload, application, software, and program are used interchangeably. Because the definition is vague by design, the word alone does not always convey the context the speaker intends.

The Challenge in Defining Your Workloads

Since the term workload is vague by design, how we use and apply it can vary. Traditionally, there have been a few specific types of workloads when referring to our computer systems:

- CPU Workload

- Memory Workload

- I/O Workload

- Database Workload

While this is a traditional way of referring to workloads, it does not accurately describe our modern cloud solutions. With the rise of cloud computing technologies and virtualization, another layer of abstraction has been created, and with this added layer, our workload definition changes as well.

How We Traditionally Secured Our Workloads

Under the traditional on-premises IT infrastructure deployment, meeting organizational compliance requirements can take a significant amount of time. Failure to meet compliance requirements leads to delivery delays and slows the pace of business units. Compliance requirements also continue to change, so organizations must keep adapting to the evolving security landscape.

It takes dedicated professionals to keep up with security and compliance requirements. Developing and maintaining a controlled environment requires an ongoing investment at multiple levels of the IT stack. Depending on your stack, your IT requirements likely include:

This list is not exhaustive, and hardening a traditional data center is a constant and never-ending process, but we can make it easier. The way we develop, deploy, integrate, and manage IT is dramatically changing. The adoption of public cloud infrastructure allows its users to inherit the provider’s implementation of global security and compliance controls, helping to ensure high standards of privacy and data security. Offloading existing security controls and migrating workloads to the cloud necessitate a change in perspective.

Managing Your Workloads Has Changed

How we manage, deploy, and secure our workloads is dramatically changing. Virtual machines added process density and segmentation, increasing performance and development speed. Containers changed how we build and develop applications, producing portability, even greater development speed, and increased scalability. Kubernetes brought containers together as the de facto container orchestration tool for scaling, managing, and securing your containerized workloads.

Over the last decade, the emergence of cloud computing and container technologies has driven the development of more workload types, including software as a service (SaaS), microservices-based applications, and serverless computing. The range of cloud services is vast and complex, spanning various software architectures, operating systems, network stacks, and more. It is well worth taking the time to set up some boundaries to evaluate your workloads accurately moving forward.

Securing Your Workloads Has Changed

Public cloud providers also often have integrated security services, allowing aspects of monitoring and security to be automated by the appropriate unit in your organization. These managed services reduce the effort required to configure parts of the security infrastructure, supporting timely event response and an overall reduction in risk. By adopting multiple independent layers of security, the momentum and effectiveness of an attack are decreased, and mounting a successful attack becomes difficult and costly.
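The effect of stacking independent layers can be illustrated with a little arithmetic. The sketch below assumes, purely for illustration, that each layer blocks an attack with a fixed probability and that layers fail independently of one another. The function name and the block rates are hypothetical and are not measurements from any real system.

```python
from math import prod


def attack_success_probability(block_rates):
    """Chance that an attack gets past every layer.

    Assumes each layer blocks an attack independently with the given
    probability (an illustrative simplification, not a real threat model).
    """
    for rate in block_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"block rate must be between 0 and 1, got {rate}")
    # The attack succeeds only if it slips past every single layer.
    return prod(1.0 - rate for rate in block_rates)


# Hypothetical block rates for three layers (e.g. network, host, application).
single_layer = attack_success_probability([0.5])
three_layers = attack_success_probability([0.5, 0.5, 0.5])

print(f"one layer:    {single_layer:.3f}")
print(f"three layers: {three_layers:.3f}")
```

Under these assumptions, three layers that each stop half of all attempts let one attempt in eight through, compared with one in two for a single layer. Real layers are rarely fully independent, so treat the numbers as intuition rather than a prediction.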

A constant challenge with the adoption of public cloud infrastructure is the use of existing infrastructure. Migrations to the cloud create a challenge in managing workloads, with cloud workloads being handled differently than on-premises workloads. On top of this challenge, existing security tools need to be adapted for the challenges of hybrid and multicloud environments.

Kubernetes Workloads Are Managed Differently

Since 90% of the cloud runs on Linux operating systems, it follows that container technology is the natural technological progression after the large-scale migration to the cloud. Containers are native to Linux and allow for high-density processing and faster development, and the scalability that containers bring is immensely useful. Gartner predicts that 70% of enterprises will be running three or more applications in containers by 2023. However, containerization alone is not responsible for this shift. Companies are recognizing the development speed brought on by the accelerated build, deploy, run model enabled by managed cloud services.

Kubernetes brings a massive upside to the organizations that can take advantage of its technology and scalability. However, a common grievance is that the management of the orchestration software is complex and requires significant in-house knowledge to avoid pitfalls. This common complaint is the main driver behind the managed Kubernetes services you see today, such as Red Hat’s OpenShift Service on AWS. Kubernetes also complicates our workload definition even further.

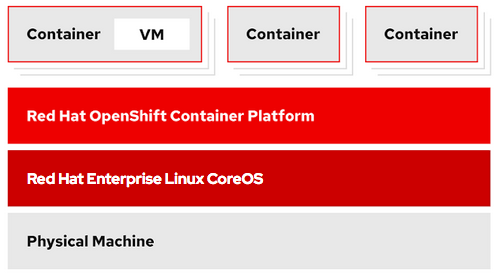

Kubernetes clusters can be deployed on a bare-metal server or on virtual machines. A sysadmin may have to manage virtual machines, containers, and Kubernetes clusters, along with all of the logging, monitoring, and networking aspects of running applications.

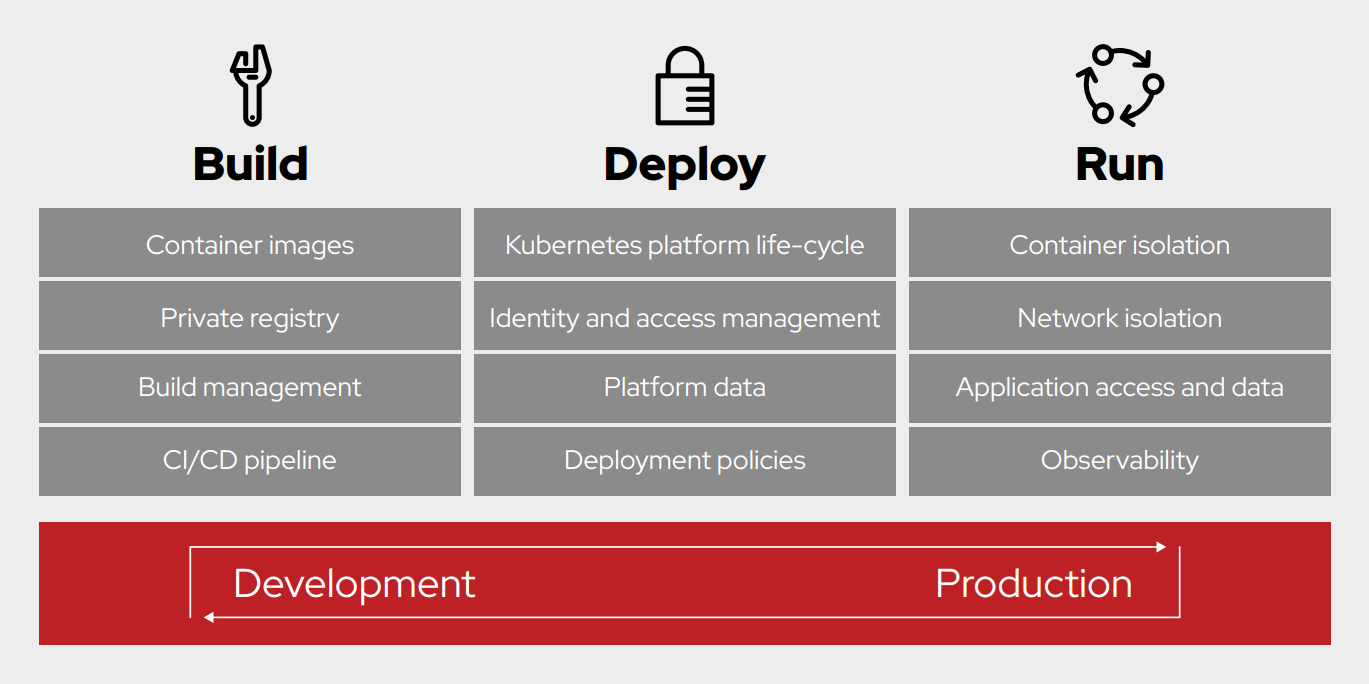

One method to manage this change in context is the build, deploy, run approach. This layered approach with Kubernetes allows for flexibility in the way your workloads are managed and scaled. It is the software layer that can be consistent between clouds and on-premises environments.

By having a consistent Kubernetes environment across cloud and on-premises environments, you simplify the management and security of your workloads. This model creates a shared context across teams and environments, enabling faster communication and standard solutions thanks to the simplified context. The final step is securing your workloads in this simplified context.

Kubernetes Workloads Are Secured Differently

The shared context provided by Kubernetes separates your workloads into two distinct categories, applications and infrastructure. Your applications are containerized, can be deployed in seconds, and require specific networking, security, and data considerations provided by the infrastructure. Your infrastructure seeks to support your applications with containerization technologies and all of the management tools necessary to manage, scale, and secure your applications.

Different workloads have different characteristics, and the best platform for a particular workload to run on depends on the nature of the specific workload. Workloads and their layers of abstraction need to be addressed independently since the workflow of their users will vary between use cases.

What Is Workload Security?

With the various levels of infrastructure and applications available today, we propose a layered approach to security. With different infrastructure layers and various cloud services available, it is essential to look at each stage and platform holistically. If you are using Kubernetes, you will want a security solution built specifically to secure Kubernetes clusters.

Workload security will need to come with a modifier. Cloud workload security, container workload security, and Kubernetes workload security all convey more meaningful information to the listener and make clear which layer is being referenced.
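One way to make the modifier explicit is to record the layer alongside each workload, so that any mention of its security names the layer automatically. The sketch below is a hypothetical illustration: the `Layer` enum, the `Workload` class, and the example workload names are assumptions made for this example and are not part of any Red Hat or Kubernetes API.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    """Layers taken from the modifiers discussed above."""
    CLOUD = "cloud"
    CONTAINER = "container"
    KUBERNETES = "Kubernetes"


@dataclass(frozen=True)
class Workload:
    name: str    # hypothetical identifier, e.g. a service or cluster name
    layer: Layer

    def security_label(self) -> str:
        # Always pair "workload security" with the layer it refers to.
        return f"{self.layer.value} workload security"


# Hypothetical inventory with one workload per layer.
workloads = [
    Workload("object-storage-bucket", Layer.CLOUD),
    Workload("payments-api-image", Layer.CONTAINER),
    Workload("prod-cluster", Layer.KUBERNETES),
]

for w in workloads:
    print(f"{w.name}: {w.security_label()}")
```

Tagging the layer at the point where a workload is described keeps conversations about monitoring, logging, and security tied to the layer they actually concern.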

In Summary

Organizations are moving through a transitional moment in IT. We can build, deploy, and manage infrastructure and applications more quickly and efficiently thanks to cloud computing and containerization. This change comes with management issues and communication hurdles distinct from those of traditional infrastructure.

Organizations should focus on a layered approach to security and platform management and move away from an ever-changing “workload” paradigm. Managed platforms, such as Kubernetes, allow for selecting monitoring, logging, and security solutions for the defined layer.

About the author

Michael Foster is a CNCF Ambassador, the Community Lead for the open source StackRox project, and Principal Product Marketing Manager for Red Hat based in Toronto. In addition to his open source project responsibilities, he utilizes his applied Kubernetes and container experience with Red Hat Advanced Cluster Security to help organizations secure their Kubernetes environments. With StackRox, Michael hopes organizations can leverage the open source project in their Kubernetes environments and join the open source community through stackrox.io. Outside of work, Michael enjoys staying active, skiing, and tinkering with his various mechanical projects at home. He holds a B.S. in Chemical Engineering from Northeastern University and CKAD, CKA, and CKS certifications.
