About Red Hat

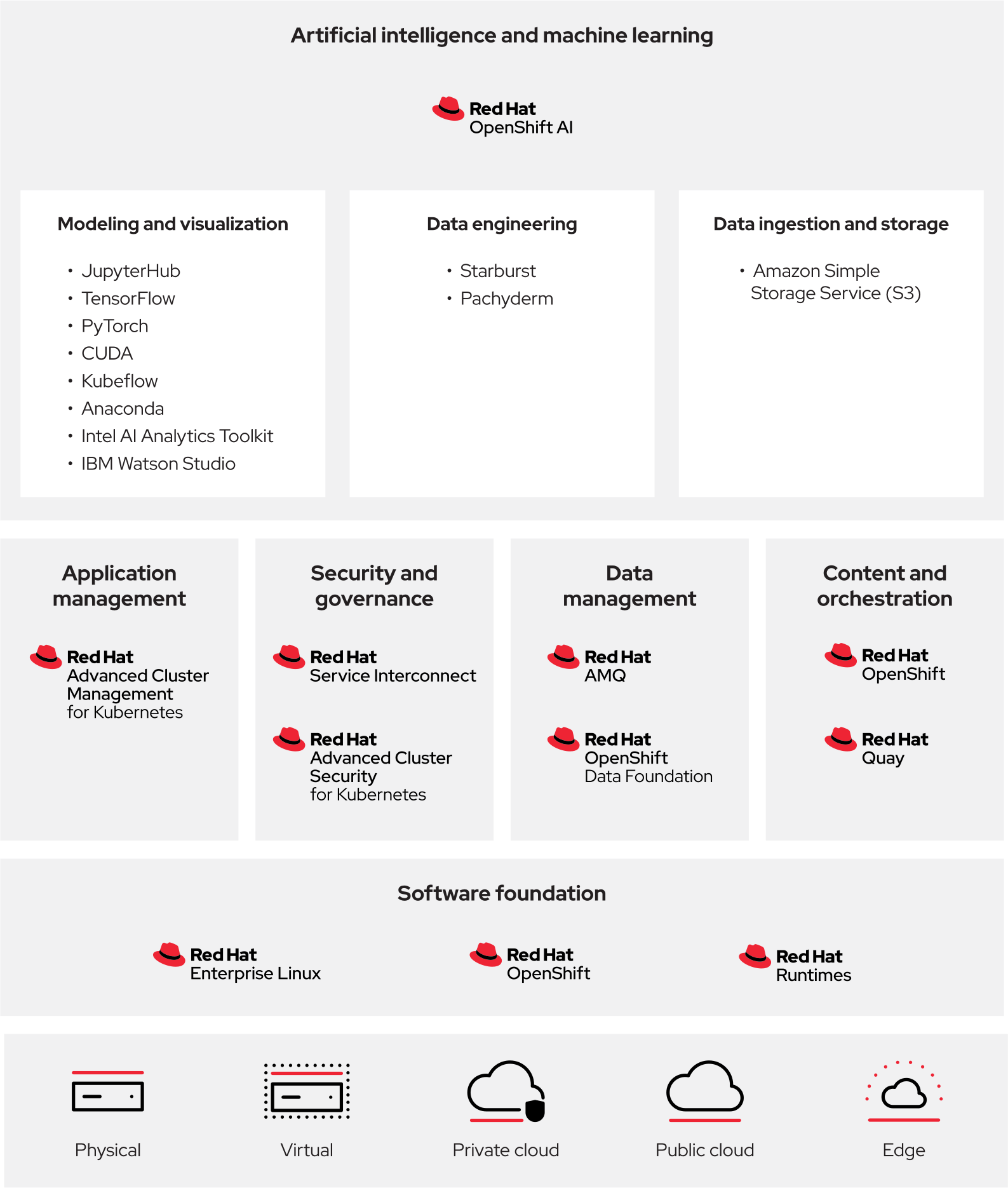

Red Hat is the open hybrid cloud technology leader, delivering a trusted, consistent and comprehensive foundation for transformative IT innovation and AI applications. Its portfolio of cloud, developer, AI, Linux, automation and application platform technologies enables any application, anywhere—from the datacenter to the edge. As the world's leading provider of enterprise open source software solutions, Red Hat invests in open ecosystems and communities to solve tomorrow's IT challenges. Collaborating with partners and customers, Red Hat helps them build, connect, automate, secure, and manage their IT environments, supported by consulting services and award-winning training and certification offerings.

- North America: 888-REDHAT1

- Asia Pacific: +65 6490 4200

- Latin America: +54 11 4329 7300

- Europe, Middle East, and Africa: 00800 7334 2835

Copyright © 2026 Red Hat. Red Hat, the Red Hat logo, Ansible, and OpenShift are trademarks or registered trademarks of Red Hat, LLC or its subsidiaries in the United States and other countries. Linux® is the registered trademark of Linus Torvalds in the U.S. and other countries. The OPENSTACK logo and word mark are trademarks or registered trademarks of OpenInfra Foundation, used under license. All other trademarks are the property of their respective owners.