Although pods and services in Kubernetes have their own IP addresses, those addresses are reachable only from within the cluster, not by outside clients. The Ingress object in Kubernetes, although still in beta, is designed to signal to the Kubernetes platform that a certain service needs to be accessible to the outside world. It also carries the configuration needed to do so, such as an externally reachable URL, SSL/TLS settings, and more.
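To make that configuration concrete, here is a minimal sketch of an Ingress that requests an externally reachable hostname with TLS. It uses the extensions/v1beta1 API of the Kubernetes generation discussed in this post. Every name in it (the host www.example.com, the Secret example-tls, the Service web) is a hypothetical placeholder, not something this walkthrough creates.

```yaml
# Sketch only: all names below are hypothetical placeholders.
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: example-ingress
spec:
  tls:
  - hosts:
    - www.example.com
    secretName: example-tls      # hypothetical TLS Secret holding tls.crt and tls.key
  rules:
  - host: www.example.com        # externally reachable hostname
    http:
      paths:
      - path: /
        backend:
          serviceName: web       # hypothetical Service inside the cluster
          servicePort: 8080
```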

Creating an ingress object has no effect on its own. An ingress controller must be running on the Kubernetes platform to fulfill the configuration that the ingress object defines.

Here at Red Hat, we saw the need for enabling external access to services before ingress objects were introduced in Kubernetes, and created a concept called Route for the same purpose, with additional capabilities such as splitting traffic between multiple backends and sticky sessions. Red Hat is one of the top contributors to the Kubernetes community and contributed the design principles behind Routes to the community, which heavily influenced the Ingress design.

When a Route object is created on OpenShift, it gets picked up by the built-in HAProxy load balancer, which exposes the requested service and makes it externally available with the given configuration. It’s worth mentioning that although OpenShift ships this HAProxy-based load balancer, its routing layer has a pluggable architecture that allows admins to replace it with NGINX (and NGINX Plus) or with external load balancers such as F5 BIG-IP.
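For comparison, a minimal Route might look like the sketch below. It assumes a hypothetical Service named web whose pods listen on port 8080, and a hostname you control. The fields shown (`host`, `to`, `port`) are standard Route fields, but treat the values as placeholders.

```yaml
# Sketch only: "web" and www.example.com are hypothetical.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: web
spec:
  # If host is omitted, OpenShift generates one from the route name,
  # the project name and the router's default subdomain.
  host: www.example.com
  to:
    kind: Service
    name: web            # Service whose endpoints receive the traffic
  port:
    targetPort: 8080     # port on the pods behind that Service
```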

At this point you might be thinking that this is confusing! Should you use the standard Kubernetes ingress objects or the OpenShift routes? What if you already have applications that use route objects? What if you are deploying an application written with Kubernetes Ingress in mind?

Worry not! Since the release of Red Hat OpenShift 3.10, ingress objects are supported alongside route objects. The Red Hat OpenShift ingress controller implementation watches ingress objects and creates one or more routes to fulfill the conditions they specify. If you change an ingress object, the ingress controller syncs the changes and applies them to the generated route objects, which are then picked up by the built-in HAProxy load balancer.

Create Ingress Objects on OpenShift

Let’s see how that works in action. First, download Minishift and use it to create a single-node local OKD cluster on your workstation (OKD is the community distribution of Kubernetes that powers Red Hat OpenShift):

```shell
$ minishift start --vm-driver=virtualbox --openshift-version=v3.10.0
```

Once OpenShift is up and running, log in as the developer user (password: developer) and create a new project (namespace):

```shell
$ oc login -u developer -p developer
$ oc new-project demo
```

Now we need a container image to deploy and later expose with an ingress object. Spring PetClinic, a sample Spring Boot application available on GitHub, is a good fit here. However, we need a container image for Spring PetClinic in order to deploy it on Kubernetes. Fortunately, that’s quite easy with Red Hat OpenShift, which has a built-in Source-to-Image (S2I) mechanism for building container images from application source code.

Import the Red Hat OpenJDK image stream into Minishift so that it can serve as the base Java image for Spring PetClinic:

```shell
$ oc create -f https://raw.githubusercontent.com/openshift/library/master/official/java/imagestreams/java-rhel7.json -n openshift --as=system:admin
```

Create a build object in order to build a container image from the Spring PetClinic source code on GitHub using the Java runtime:

```shell
$ oc new-build java:8~https://github.com/spring-projects/spring-petclinic.git

# check the build logs
$ oc logs -f bc/spring-petclinic
```

After the build finishes, you can see the Spring PetClinic image in the OpenShift built-in image registry:

```shell
$ oc get is
NAME               DOCKER REPO                             TAGS
spring-petclinic   172.30.1.1:5000/demo/spring-petclinic   latest
```

We can now deploy the image by creating Deployment and Service objects:

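```yaml
# Deployment: runs the Spring PetClinic image built by S2I above.
# apps/v1 (served since Kubernetes 1.9) requires an explicit selector.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: spring-petclinic
  labels:
    app: spring-petclinic
spec:
  replicas: 1
  selector:
    matchLabels:
      app: spring-petclinic       # must match the pod template labels
  template:
    metadata:
      labels:
        app: spring-petclinic
    spec:
      containers:
      - name: spring-petclinic
        # image location in the internal registry (see `oc get is`)
        image: 172.30.1.1:5000/demo/spring-petclinic:latest
        ports:
        - containerPort: 8080
---
# Service: gives the pods a stable in-cluster address on port 8080
apiVersion: v1
kind: Service
metadata:
  name: spring-petclinic
spec:
  selector:
    app: spring-petclinic
  ports:
  - name: http
    protocol: TCP
    port: 8080
    targetPort: 8080
```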

Save the above YAML definition in a file named petclinic.yml, then create the deployment and service:

```shell
$ oc create -f petclinic.yml
```

If you log in to the Red Hat OpenShift web console in your browser (hint: run `minishift console`), you should see that the Spring PetClinic application is deployed and running.

To route external traffic to the deployed app, we can now create an ingress object. The host attribute specifies the hostname that the load balancer should expose for external access to the services, and the path attributes map each context path to a service. If you are running on Minishift, you can use the generic nip.io wildcard DNS service together with your VM’s IP address (printed by `minishift ip`; 192.168.99.100 in this example). Otherwise, make sure the host matches the hostnames configured for your Red Hat OpenShift cluster:

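```yaml
# extensions/v1beta1 is the Ingress API version served by OpenShift 3.10
# (Kubernetes 1.10); newer clusters use networking.k8s.io/v1, which has a
# different backend syntax.
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: spring-petclinic
spec:
  rules:
  - host: petclinic.192.168.99.100.nip.io   # replace the IP with your `minishift ip`
    http:
      paths:
      - path: /                             # context path
        backend:
          serviceName: spring-petclinic     # Service created above
          servicePort: 8080
```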

Save it as ingress.yml and create it:

```shell
$ oc create -f ingress.yml
```

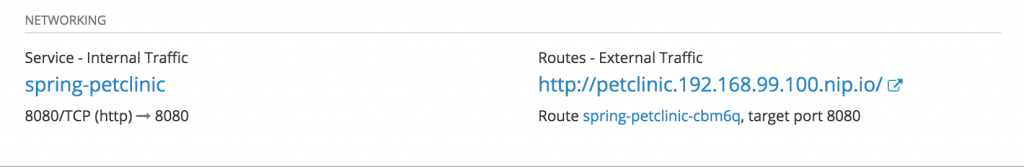

As soon as the ingress object is created, the built-in load balancer exposes an externally accessible URL for it, which you can use to open the Spring PetClinic application in your browser.
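The spring-petclinic ingress maps a single context path. Since each entry under `paths` maps a path to a service, one host can front several services. The sketch below is for illustration only and is not part of this walkthrough. It assumes a hypothetical second Service named petclinic-api, and it uses a separate nip.io hostname so that it would not collide with the spring-petclinic ingress.

```yaml
# Illustration only: petclinic-multi-path and petclinic-api are hypothetical.
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: petclinic-multi-path
spec:
  rules:
  - host: petclinic-multi.192.168.99.100.nip.io
    http:
      paths:
      - path: /                          # default context path: the web UI
        backend:
          serviceName: spring-petclinic
          servicePort: 8080
      - path: /api                       # intended for requests under /api
        backend:
          serviceName: petclinic-api     # hypothetical second Service
          servicePort: 8080
```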

If you list the ingress and route objects in the project, you’ll see a route object that the ingress controller generated from our ingress object. This route exposes the service, and the ingress controller keeps it in sync with the ingress.

```shell
$ oc get ingress
NAME               HOSTS                             ADDRESS   PORTS
spring-petclinic   petclinic.192.168.99.100.nip.io             80

$ oc get route
NAME                     HOST/PORT                         PATH   SERVICES           PORT
spring-petclinic-cbm6q   petclinic.192.168.99.100.nip.io   /      spring-petclinic   8080
```
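Because the generated route is an ordinary Route, you could also write an equivalent one by hand. The following is a sketch under that assumption, with a hypothetical name. It claims the same host and path as the generated route, so the router will most likely admit only one of the two. If you want to try it, delete the ingress first (`oc delete ingress spring-petclinic`).

```yaml
# Sketch of a hand-written Route roughly equivalent to spring-petclinic-cbm6q.
# The name spring-petclinic-manual is hypothetical.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: spring-petclinic-manual
spec:
  host: petclinic.192.168.99.100.nip.io   # same host as the ingress rule
  path: /                                 # same context path
  to:
    kind: Service
    name: spring-petclinic
  port:
    targetPort: 8080
```

From the command line, `oc expose service spring-petclinic --hostname=petclinic.192.168.99.100.nip.io` should create a similar Route.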

Should You Use Ingress or Route?

Ingress and Route, while similar, differ in maturity and capabilities. If you intend to deploy your application on multiple Kubernetes distributions at the same time, Ingress might be a good option, although you will still have to deal with the specific ways each distribution provides more complex routing capabilities such as HTTPS and TLS re-encryption. For all other applications, you may run into scenarios where Ingress does not provide the functionality you need. The following table summarizes the differences between Kubernetes Ingress and Red Hat OpenShift Routes.

| Feature | Ingress on OpenShift | Route on OpenShift |
|---|---|---|
| Standard Kubernetes object | X | |
| External access to services | X | X |
| Persistent (sticky) sessions | X | X |
| Load-balancing strategies (e.g. round robin) | X | X |
| Rate-limit and throttling | X | X |
| IP whitelisting | X | X |
| TLS edge termination for improved security | X | X |
| TLS re-encryption for improved security | | X |
| TLS passthrough for improved security | | X |
| Multiple weighted backends (split traffic) | | X |
| Generated pattern-based hostnames | | X |
| Wildcard domains | | X |
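To illustrate two of the Route-only rows, the sketch below defines one Route that splits traffic between two Services by weight and a second Route that uses TLS passthrough. The Services spring-petclinic-v2 and secure-app, the hostnames, the 90/10 weights and the whitelisted 192.168.99.0/24 range are all hypothetical. The annotations shown are OpenShift router annotations corresponding to the load-balancing and IP-whitelisting rows.

```yaml
# Weighted backends: the router splits traffic in proportion to the
# weights, roughly 90/10 here. spring-petclinic-v2 is hypothetical.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: petclinic-split
  annotations:
    haproxy.router.openshift.io/balance: roundrobin
    haproxy.router.openshift.io/ip_whitelist: 192.168.99.0/24   # assumed client range
spec:
  host: petclinic-split.192.168.99.100.nip.io
  to:
    kind: Service
    name: spring-petclinic
    weight: 90
  alternateBackends:
  - kind: Service
    name: spring-petclinic-v2
    weight: 10
  port:
    targetPort: 8080
---
# TLS passthrough: the router forwards the encrypted stream untouched,
# so the pods behind the hypothetical Service secure-app must terminate TLS.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: secure-app
spec:
  host: secure-app.192.168.99.100.nip.io
  to:
    kind: Service
    name: secure-app
  port:
    targetPort: 8443
  tls:
    termination: passthrough
```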

Conclusion

Red Hat OpenShift can act as an incubator for capabilities that don’t exist in Kubernetes yet, working with the upstream communities to contribute those capabilities back into Kubernetes. Route and Ingress objects are an example of this upstream collaboration: although you specify the rules differently for each, both aim at the same capability, which is allowing external clients to reach cluster services according to a set of defined rules.

As a Red Hat OpenShift user, you have the option to switch to the Kubernetes objects as these capabilities are introduced in Kubernetes, and to continue using the Red Hat OpenShift platform as you did before. That said, because of the feature-set differences between Ingress and Route, Route objects are my recommended approach on Red Hat OpenShift unless you need to deploy the same objects across multiple Kubernetes distributions.

You can read more about the new features available on Red Hat OpenShift 3.10 in the Release Notes.


About the author

Siamak Sadeghianfar is a member of the Hybrid Cloud product management team at Red Hat leading the cloud-native application build and delivery on OpenShift.
