Part 1: Demystifying the High Availability and Performance of etcd

Kubernetes has two base node roles: supervisors and workers. A cluster may have additional roles derived from the “worker” role based on their unique infrastructure characteristics (e.g., type of CPU, NICs, GPUs, FPGAs, etc.) or the applications they run (e.g., infrastructure services, developers, development team, etc.). The supervisor nodes comprise the Kubernetes control plane, which hosts components that make cluster-level decisions. These include scheduling, responding to nodes' events, storing artifacts of all cluster-level objects from application manifests and ServiceAccounts definitions to Secrets and extensions to Kubernetes APIs in the form of CustomResourceDefinitions, and much more.

![WC - [Blog] Demystifying etcd key-value store](/rhdc/managed-files/ohc/WC%20-%20%5BBlog%5D%20Demystifying%20etcd%20key-value%20store%20.png)

The components in the Kubernetes control plane are stateless. All cluster data is stored in the etcd key-value store.

The etcd key-value store is the single source of truth for the cluster and the single component that, if lost, leads to a catastrophic failure of the etcd cluster. Fortunately, the etcd component can run in configurations to maintain high failure tolerance and survive multiple failure scenarios.

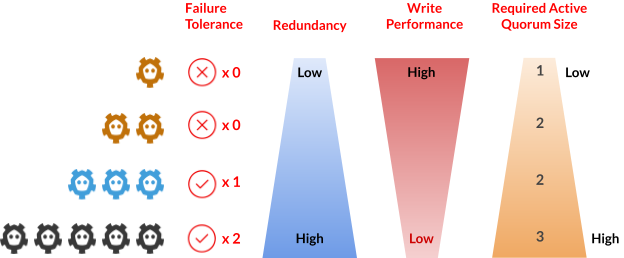

However, there is a delicate balance between failure tolerance and write performance or failure tolerance and the minimum required number of nodes to maintain quorum.

For example, a two-node etcd cluster is not high availability (HA) because if one node is lost, the cluster will have quorum loss and enter read-only mode. On the other hand, a three-node etcd cluster is the minimum high availability configuration but also requires a minimum of two nodes to remain operational. This time, it is an HA configuration. A three-node etcd cluster is the minimum HA configuration.

Write Performance and Quorum Size

What about using a higher quantity of etcd nodes? To answer this question, we have to look at the other columns.

As the number of etcd nodes increases, the write performance slows down. This is because there is more interaction over the networks and a larger number of disk writes that need to be completed by the quorum number of nodes before a "write" is committed across all etcd member nodes.

We must look at how an etcd writes works to understand the scenario better. But first, some background information:

- Only one leader node per etcd cluster is elected by consensus among etcd members.

- Only the leader node can coordinate a write to record a key-value pair into the etcd cluster.

- An etcd client randomly chooses an etcd member node to interact with, and it does not know in advance if the node is a leader or a follower.

- When a follower member receives a request to set or “write” a key-value pair, it proxies the request to the leader. It waits until the write is brokered and confirmed or rejected by the leader and then sends the confirmation back to the client.

- Only one leader node per etcd cluster is elected by consensus among etcd members.

- The leader must send periodic (HEARTBEAT_INTERVAL) heartbeat notifications to their followers to maintain leadership.

- When the follower nodes do not receive a leader heartbeat notification on a predefined interval (ELECTION_TIMEOUT), a new election process is triggered to choose a new leader

- Only the leader node can coordinate a write to record a key-value pair into the etcd cluster.

- During quorum loss or when no leader is available, the etcd cluster does not accept updates or writes. From the perspective of an etcd client, etcd becomes read-only.

With that background, let's explore the flow of a write or set of a key-value into etcd:

- Step 1: The leader appends the key-value entry into the Write Ahead Log (WAL). This action requires a fsync() writing to disk, hence impacted by the disk latency.

- Step 2a: The leader notifies the followers about the change. This action involves network communication, hence impacted by the network latency.

- Step 2b: Followers append entry into their local WAL. This action requires a fsync() writing to disk, hence impacted by disk latency.

- Step 2c: Followers notify the leader they have recorded the key-value entry in their WAL. This action requires network communication, hence impacted by the network latency.

- Step 3: The leader waits for confirmation from the majority (quorum) and commits the key-value entry. This action requires another fsync() writing to disk, hence impacted by the disk latency.

- Step 4a: The leader notifies followers that the entry is committed. This action requires network communication, hence impacted by the network latency.

- Step 4b: On confirmation from the leaders, the followers commit the key-value entry. This action requires a fsync() writing to disk, hence impacted by disk latency.

![WC - [Blog] Demystifying etcd key-value store (1)](/rhdc/managed-files/ohc/WC%20-%20%5BBlog%5D%20Demystifying%20etcd%20key-value%20store%20%20%281%29.png)

From the steps above, it is easy to see why the write performance of etcd is directly affected by the number of nodes in the etcd cluster. The more nodes, the higher the number of network interactions and disk writes required to achieve consensus. These disks and network interactions compound the etcd write latency, also affecting the number of write transactions per second that can be completed.

Beyond the write performance, disk and network latency plays an important role in the overall stability of the etcd cluster. We will explore this topic in more detail in Part 2 of this series.

Part 2: Demystifying Latency and etcd Cluster Stability

In Part 1, we explored the delicate balance between high availability and write performance on an etcd cluster. In this article, we'll explore how the disk write latency and the network latency impact the stability of an etcd cluster.

First, some important background information:

From the list above, we can identify two critical settings for etcd:

- HEARTBEAT_INTERVAL (default 100ms): Frequency with which the leader will notify followers that it is still the leader

- ELECTION_TIMEOUT (default 1000ms): How long a follower node will wait without hearing a heartbeat before attempting to become leader itself.

These timers not only influence the stability of the cluster but also drive architectural and deployment decisions for Kubernetes clusters.

Consider the heartbeat interval, which applies when communicating the leadership status between the leader and follower nodes. By default, this interval is set to 100ms. If the communication time exceeds this interval, the heartbeats may not arrive on time. However, the system is designed to handle heartbeats that are delayed or lost due to network issues. This is where the election timeout comes in. The default timeout is 1000ms (or one second), which is ten times longer than the heartbeat interval. This means that even if we lose up to nine consecutive heartbeats, the leader node can still maintain its role.

These two values are the main focus in discussions about deploying a Kubernetes control plane spanning multiple data centers. Focusing only on these two parameters ignores the impact of latency on the write performance of the etcd cluster, the impact of latency when interacting with the leader, and in general, how that impacts the transaction rate of the Kubernetes APIServer.

Latency, the Complete Picture

Understanding of the concepts from Part 1 on how disk latency and network latency affect the transaction latency when writing a key-value pair to the etcd store, and considering the etcd settings discussed in the previous section, we can take a more holistic view of the impact of latency for a cluster.

The upstream etcd project has recommendations about disk requirements for an etcd cluster and recommended values for etcd settings based on network latency. When considering these recommendations, remember that network and disk latencies are not fixed values; they vary over time. Many factors influence a network's latency (such as link saturation, fragmentation, packet loss & retransmissions, etc.), and disk writes' latency (such as a noisy neighbor, and saturation of write buffers, etc.) over time. This variance of the latency is known as the Jitter. More specifically, Jitter is the standard deviation of the latency over a certain amount of time.

Why is Jitter important? Consider a network with a Jitter of 15ms and a latency of 90ms between two nodes. This means the packets will arrive with a latency between 75ms to 105ms. In those cases where latency goes above 100ms, it can impact receiving the heartbeat within the predefined interval. Similarly, when it comes to storage, the Jitter will affect the write latency, increasing the time for the system to achieve consensus, hence affecting the write performance.

Let's bring all these values together and discuss them using various examples.

![WC - [Blog] Demystifying etcd key-value store (5)](/rhdc/managed-files/ohc/WC%20-%20%5BBlog%5D%20Demystifying%20etcd%20key-value%20store%20%20%285%29.png)

Example A is the regular deployment where the control plane nodes are co-located on the same data center. We will consider A as the “control group” where ideal network and disk conditions exist. For the purpose of this discussion, we will use the following values:

| Network Latency | Network Jitter | |

| Same location | 10ms | 2ms |

| White to yellow | 75ms | 10ms |

| White to green | 90ms | 15ms |

| Yellow to green | 80ms | 15ms |

| Disk Write Latency | Disk Write Jitter | |

| White | 5ms | 1ms |

| Yellow | 10ms | 3ms |

| Green | 20ms | 5ms |

Scenarios B and C represent deployments where the 3-node etcd is deployed across two data centers (white and yellow). The difference between these two scenarios is that is where the leader node resides at that moment in time. Remember, in the leader election, any node can become the leader if there is consensus among etcd members. In both cases (B and C), the etcd client is on the white location.

Consider the write flow for scenario lBl illustrated in the following figure:

![WC - [Blog] Demystifying etcd key-value store (4)](/rhdc/managed-files/ohc/WC%20-%20%5BBlog%5D%20Demystifying%20etcd%20key-value%20store%20%20%284%29.png)

Using the numbers from the table above, the latency experienced by a write from an etcd client in scenario B is 335ms ± 54ms. So, in this scenario, the system can commit between 2.5 to 3.5 writes per second.

How do we get there?

Step 1: (10ms ± 2ms) + (10ms ± 2ms) = 20ms ± 4ms

Step 2: (5ms ± 1ms) + (10ms ± 2ms) + (75ms ± 10ms) = 90ms ± 13ms

Step 3: (5ms ± 1ms) + (10ms ± 3ms) + (10ms ± 2ms) + (75ms ± 10ms) + (5ms ± 1ms) = 105ms ± 17ms

Step 4: (10ms ± 2ms) + (75ms ± 10ms) + (5ms ± 1ms) + (10ms ± 3ms) = 100ms ± 16ms

Step 5: (10ms ± 2ms) + (10ms ± 2ms) = 20ms ± 4ms

Then divide 1s (1000ms) by (335ms ± 54ms) and you will get the 2.5 to 3.5 range.

Note that for the example, we assume the etcd client is connecting to a local etcd node. Should that not be the case, the write latency will be higher.

If we do the same exercise with scenario C, where the only difference is that the leader is in the yellow location, the write latency experienced by a client is 660ms ± 94ms, which translates to a range of 1.3 to 1.7 writes per second. In other words, the write performance drops by ~50%.

To confirm the math, the total latency per step for scenario C are:

Step 1: 85ms ± 12ms

Step 2: 160ms ± 23ms

Step 3: 160ms ± 22ms

Step 4: 170ms ± 25ms

Step 5: 85ms ± 12ms

Finally, consider scenario D and illustrate with the case where the client is interacting with the follower in location green.

![WC - [Blog] Demystifying etcd key-value store (3)](/rhdc/managed-files/ohc/WC%20-%20%5BBlog%5D%20Demystifying%20etcd%20key-value%20store%20%20%283%29.png)

If we do the same math as before, in scenario D, we find the etcd client will experience a latency of 1775ms ± 153ms, or between 0.5 to 0.6 writes per second.

All these exercises are only using three-node etcd clusters. As explained in Part 1 of this series, by increasing the number of nodes, the write latency will increase exponentially. With the math presented here, it is easy to understand the write performance degradation that will be experienced by the etcd cluster.

As shown in this exercise and this series, the impact of the network latency, the disk latency, their corresponding Jitter, and the number of etcd members to use have much greater implications than we might think at first sight. Testing and validating a Kubernetes configuration with a specific etcd setting requires contemplating and considering how the system will work under those conditions. Furthermore, it requires a good understanding of the performance impact of changing the etcd attributes.

This is one of the reasons OpenShift 4 uses specific etcd settings profiles (HEARTBEAT_INTERVAL and ELECTION_TIMEOUT) optimized for the type of infrastructure for which they are being deployed. On infrastructures where we have found high variability in network latency or storage latency, OpenShift automatically sets profiles with higher timers. Otherwise, we use the etcd default settings for these parameters.

About the author

William is a Product Manager in Red Hat's AI Business Unit and is a seasoned professional and inventor at the forefront of artificial intelligence. With expertise spanning high-performance computing, enterprise platforms, data science, and machine learning, William has a track record of introducing cutting-edge technologies across diverse markets. He now leverages this comprehensive background to drive innovative solutions in generative AI, addressing complex customer challenges in this emerging field. Beyond his professional role, William volunteers as a mentor to social entrepreneurs, guiding them in developing responsible AI-enabled products and services. He is also an active participant in the Cloud Native Computing Foundation (CNCF) community, contributing to the advancement of cloud native technologies.

More like this

Confidential Containers workshop on Microsoft Azure Red Hat OpenShift: Learn interactively

Simplify Red Hat Enterprise Linux provisioning in image builder with new Red Hat Lightspeed security and management integrations

The Containers_Derby | Command Line Heroes

Can Kubernetes Help People Find Love? | Compiler

Browse by channel

Automation

The latest on IT automation for tech, teams, and environments

Artificial intelligence

Updates on the platforms that free customers to run AI workloads anywhere

Open hybrid cloud

Explore how we build a more flexible future with hybrid cloud

Security

The latest on how we reduce risks across environments and technologies

Edge computing

Updates on the platforms that simplify operations at the edge

Infrastructure

The latest on the world’s leading enterprise Linux platform

Applications

Inside our solutions to the toughest application challenges

Virtualization

The future of enterprise virtualization for your workloads on-premise or across clouds