Red Hat OpenShift for Windows Containers provided OpenShift administrators the ability to manage their heterogeneous application workloads. This gave administrators the ease and power to manage their Windows Container workloads alongside their Linux Container workloads using a centralized control plane: OpenShift. When first released, administrators were able to run Windows Containers on Azure, AWS, and vSphere environments that were using the IPI installation method leveraging the Windows Machine Config Operator (WMCO).

Read more about Windows Containers Bring Your Own Hardware (BYOH) in the Announcement Blog

With this latest release of the WMCO, administrators can now “bring your own host” (BYOH) when adding Windows Server nodes. With BYOH support, administrators no longer need to use premade images on the hyperscalers or create a generic template on vSphere. Now, an administrator can install Windows Server 2019 version 2004 and add it as an OpenShift node ad hoc. This provides support for platform-agnostic bare metal UPI installs and vSphere UPI installs as well.

In this blog, I will be going over how to prepare and install a Windows Server node for Windows Containers using the BYOH method on vSphere.

Prerequisites

Before setting up a Windows node to be a member of an OpenShift cluster, you must make sure that the prerequisites are in place. Some of these prerequisites must be set up as part of the cluster installation and cannot be applied afterward. So take special note of the prerequisites, and make sure they are done at the time of installation.

The prerequisites are as follows:

- OpenShift version 4.8+

- Use OVNKubernetes as the SDN (needs to be set up at installation)

- Set up Hybrid-Overlay Networking (needs to be set up at installation)

- Windows Server 2019 version 2004

The Windows Server-specific requirements will be covered in a later section. For more information on Windows Containers, please refer to the official documentation. Pay special attention to the networking prerequisites because, as noted earlier, they can only be configured at installation time.

Verifying Prerequisites

Once the cluster has been installed, you can verify if the prerequisites were set up correctly before proceeding with the Windows Containers setup. There are not many platform prerequisites, but they are important. So make sure you take the time to verify if they were installed correctly.

Make sure that OpenShift version 4.8 or newer is installed:

$ oc version

Client Version: 4.8.2

Server Version: 4.8.2

Kubernetes Version: v1.21.1+051ac4f
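If you would rather script this check than read the output by eye, the following is a minimal sketch of a version comparison. The function name version_at_least is hypothetical, and the sketch assumes a sort that supports -V (version sort), as GNU coreutils does.

```shell
#!/usr/bin/env bash
# Return success (0) when version $1 is greater than or equal to version $2.
# Assumes `sort -V` (version sort) is available, as in GNU coreutils.
version_at_least() {
  local have="$1" need="$2"
  # The lower of the two versions sorts first; if that is $need, then $have >= $need.
  [ "$(printf '%s\n%s\n' "$need" "$have" | sort -V | head -n 1)" = "$need" ]
}

# Example (requires a logged-in `oc` session), shown as a comment only:
#   server=$(oc version | awk '/^Server Version:/ {print $3}')
#   version_at_least "$server" "4.8" && echo "cluster is new enough"
```

The awk expression in the commented example simply picks the third field of the "Server Version:" line shown in the output above.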

Next, make sure that OVNKubernetes was installed as the SDN network type:

$ oc get network.operator cluster -o \
  jsonpath='{.spec.defaultNetwork.type}{"\n"}'
OVNKubernetes

Finally, make sure that hybrid-overlay networking was set up during installation:

$ oc get network.operator cluster -o \
  jsonpath='{.spec.defaultNetwork.ovnKubernetesConfig}{"\n"}' | jq -r
{
  "genevePort": 6081,
  "hybridOverlayConfig": {
    "hybridClusterNetwork": [
      {
        "cidr": "10.132.0.0/14",
        "hostPrefix": 23
      }
    ],
    "hybridOverlayVXLANPort": 9898
  },
  "mtu": 1400,
  "policyAuditConfig": {
    "destination": "null",
    "maxFileSize": 50,
    "rateLimit": 20,
    "syslogFacility": "local0"
  }
}

Note that hybridOverlayVXLANPort is only required for vSphere installations. It must not be set for other types of installations. Please see the Microsoft documentation for more information. This is also outlined in the OpenShift documentation.
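For reference, here is a rough sketch of how this configuration could be supplied at installation time. It assumes the approach described in the OpenShift documentation, where an extra manifest named cluster-network-03-config.yml is placed in the manifests directory generated by openshift-install create manifests. The function name write_hybrid_overlay_manifest is hypothetical, and the CIDR, host prefix, and port simply mirror the output shown above; adjust them for your environment.

```shell
#!/usr/bin/env bash
# Write a cluster network manifest that enables hybrid overlay networking.
# $1 is the installation directory; its manifests/ folder is normally created
# by `openshift-install create manifests --dir <dir>` (mkdir -p is a fallback).
write_hybrid_overlay_manifest() {
  local install_dir="$1"
  mkdir -p "${install_dir}/manifests"
  cat > "${install_dir}/manifests/cluster-network-03-config.yml" <<'EOF'
apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  defaultNetwork:
    ovnKubernetesConfig:
      hybridOverlayConfig:
        hybridClusterNetwork:
        - cidr: 10.132.0.0/14
          hostPrefix: 23
        # Only set the VXLAN port for vSphere installations.
        hybridOverlayVXLANPort: 9898
EOF
}
```

The SDN type itself (OVNKubernetes) is selected separately, through the networking.networkType field of install-config.yaml.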

For more information about installing an OpenShift 4 cluster, please consult the official documentation. In this blog, I am testing out a vSphere installation, but the networking prerequisites for Windows Containers are the same no matter what platform you end up installing on.

Installing the WMCO

Once the cluster is installed and you have verified that the prerequisites were satisfied, you can proceed to install the Windows Machine Config Operator (WMCO). The WMCO is the entry point for OpenShift administrators who want to run containerized Windows workloads on their clusters. You can install this Operator as a Day 2 task from the OperatorHub.

Log in to your cluster as a cluster administrator and navigate to Operators ~> OperatorHub using the left side navigation:

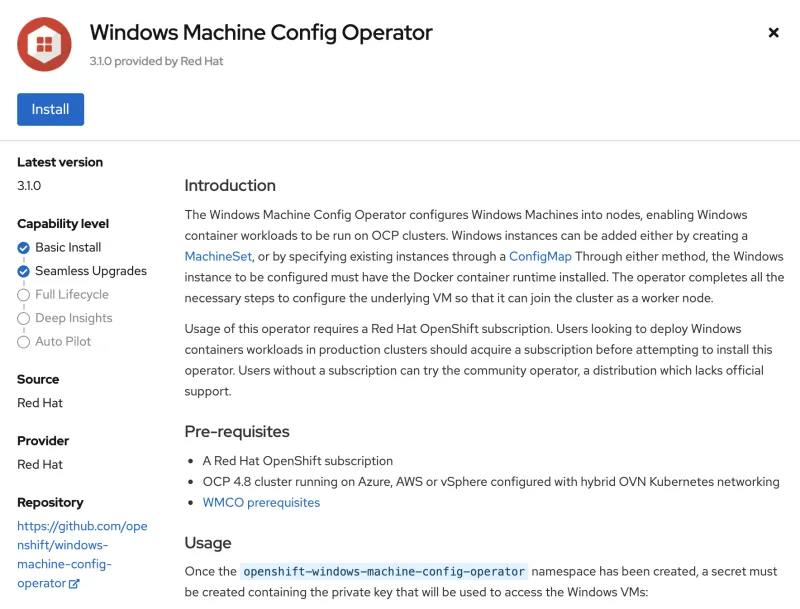

Now type Windows Machine Config Operator in the Filter by keyword … box. Click on the Windows Machine Config Operator card:

This will bring up the overview page. Here, you will click on the “Install” button:

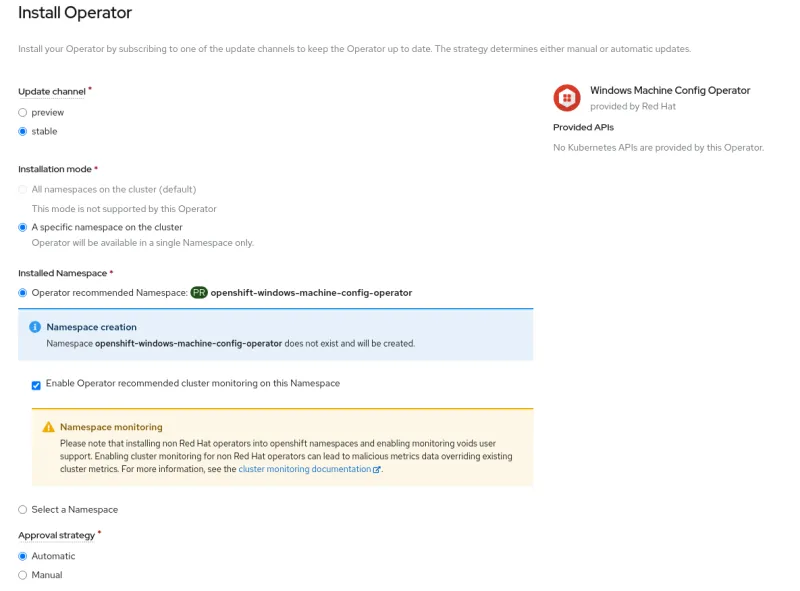

On the Install Operator overview page, make sure you have “stable” selected in the "Update channel" section. Also, in the "Installation mode" section, leave “A specific namespace on the cluster” selected. Leave the "Installed Namespace" section as “Operator recommended Namespace” and tick on “Enable Cluster Monitoring”. Finally, leave the "Approval strategy" as “Automatic”. Then click “Install”:

The "Installing Operator" status page will come up. This will stay up for the duration of the installation:

When the screen says "ready for use," the WMCO Operator is successfully installed:

You can verify the Operator has been installed successfully by checking to see if the Operator Pod is running:

$ oc get pods -n openshift-windows-machine-config-operator
NAME                                               READY   STATUS    RESTARTS   AGE
windows-machine-config-operator-749bb9db45-7vzfh   1/1     Running   0          148m

Once the WMCO is installed and running, you will need to create/provide an SSH key for the WMCO to use. This same SSH key is also going to be installed on the Windows node and will be the way the WMCO configures it for OpenShift. You can use an existing SSH key. If you do not have one, or would like to create one specifically for Windows Nodes, you can do so by running:

$ ssh-keygen -t rsa -f ${HOME}/.ssh/winkey -q -N ''

Once you have your key ready, add the private key as a secret for the WMCO to use in the openshift-windows-machine-config-operator namespace.

$ oc create secret generic cloud-private-key \
  --from-file=private-key.pem=${HOME}/.ssh/winkey \
  -n openshift-windows-machine-config-operator

Please note that you can only have one Windows Server SSH key pair in the cluster. There is no way, currently, to provide a key pair per individual Windows Server.

For more information about how to install the WMCO, please refer to the official documentation.

Setting Up the Windows Server

To use Windows Containers with BYOH, you will need to use either Windows Server 2019 version 2004 or Windows Server 2019 version 20H2. For more information about supportability, please see the upstream Kubernetes documentation. For this test, I will be using Windows Server 2019 version 2004. I have installed the Windows Server “Datacenter” edition.

The first step in setting up your Windows Node is to install the Docker runtime. I am running the following PowerShell script to install Docker:

# Powershell script to install docker: https://bit.ly/3DzUdsP

#

# Install NuGet provider to avoid the manual confirmation

Install-PackageProvider -Name NuGet -MinimumVersion 2.8.5.201 -Force

# configure repository policy

Set-PSRepository PSGallery -InstallationPolicy Trusted

# install module with provider

Install-Module -Name DockerMsftProvider -Repository PSGallery -Force

# install docker package

Install-Package -Name docker -ProviderName DockerMsftProvider -Force

Once you run the above script (or the individual commands), you will need to reboot the Windows node for docker to start working:

PS C:\Users\Administrator\Documents> Restart-Computer -Force

Once the Windows node is up and running, you should be able to run docker from the CLI:

PS C:\Users\Administrator\Documents> docker version -f '{{.Client.Version}}'

20.10.6

The next step is to install SSH and open port 22 on the Windows node. The WMCO uses SSH to set up and configure the Windows node as a Kubernetes node. I will be using the following script:

PS C:\Users\Administrator\Documents> wget https://red.ht/3gOAA6y -OutFile install-openssh.ps1

Please note that the script is shown only as an example, and is in no way supported by Red Hat.

This script requires the public key of the SSH key pair you created earlier, passed to it as an argument (here, the public key has been copied to the Windows node as windows_node.pub). The script will install SSH, enable the service to start on boot, create the firewall rule, and place the public key in the appropriate authorized_keys file:

PS C:\Users\Administrator> .\install-openssh.ps1 .\windows_node.pub

Path :

Online : True

RestartNeeded : False

LastWriteTime : 8/31/2021 2:01:50 PM

Length : 0

Name : administrators_authorized_keys

For more information about how to install and configure SSH on Windows Server, please see the official Microsoft documentation.

Next, make sure that port 10250 is open so that log collection can function. The following firewall rule, taken from an example script, opens the port:

PS C:\Users\Administrator> New-NetFirewallRule -DisplayName "ContainerLogsPort" -LocalPort 10250 -Enabled True -Direction Inbound -Protocol TCP -Action Allow -EdgeTraversalPolicy Allow

Finally, we must make sure that the hostname of the Windows node follows the RFC 1123 DNS label standard. In short, the hostname must:

- Contain only lowercase alphanumeric characters or '-'.

- Start with an alphanumeric character.

- End with an alphanumeric character.
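The rules above can be sketched as a small check to run against a candidate hostname before renaming the node. The function name is_rfc1123_label is hypothetical. The 63-character limit is not in the list above, but it is part of the DNS label standard, so the sketch enforces it as well.

```shell
#!/usr/bin/env bash
# Return success (0) when $1 is a valid RFC 1123 DNS label:
# only lowercase alphanumerics or '-', an alphanumeric first and last
# character, and a length of at most 63 characters.
is_rfc1123_label() {
  local name="$1"
  [ "${#name}" -le 63 ] || return 1
  # LC_ALL=C keeps the [a-z] range from matching uppercase letters in some locales.
  printf '%s' "$name" | LC_ALL=C grep -Eq '^[a-z0-9]([-a-z0-9]*[a-z0-9])?$'
}
```

For example, is_rfc1123_label "dhcp-host-85" succeeds, while is_rfc1123_label "DHCP-Host-85" fails because of the capital letters.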

Another thing to note is that the WMCO does not like capital letters in the hostname. Set the hostname to the short DNS name. You will also need to restart the Windows node:

PS C:\Users\Administrator> Rename-Computer -NewName "dhcp-host-85" -Force -Restart

Once the Windows node has rebooted, it is ready to be added as an OpenShift node.

Adding the Windows Server as an OpenShift Node

Now that the WMCO is installed and the Windows Node is set up, you can add it as an OpenShift Node. The WMCO watches for a ConfigMap named windows-instances to be created in the openshift-windows-machine-config-operator namespace, describing the instances that should be joined to a cluster. The required information to configure an instance is:

- An address to SSH into the instance with. This should be the IPv4 address of the node.

- The name of the administrator user

Each Windows node entry in the data section of the ConfigMap should be formatted with the IP address of the Windows node as the key, and a value with the format of username=<username>. In my environment, the configmap looks like this:

kind: ConfigMap
apiVersion: v1
metadata:
  name: windows-instances
  namespace: openshift-windows-machine-config-operator
data:
  192.168.1.85: |-
    username=Administrator
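If you manage several Windows hosts, you may prefer to generate this ConfigMap rather than write it by hand. The following is a minimal sketch; the function name render_windows_instances and its address=username argument format are hypothetical conveniences, not part of the WMCO.

```shell
#!/usr/bin/env bash
# Print a windows-instances ConfigMap for the given "address=username" pairs.
render_windows_instances() {
  cat <<'EOF'
kind: ConfigMap
apiVersion: v1
metadata:
  name: windows-instances
  namespace: openshift-windows-machine-config-operator
data:
EOF
  local pair address username
  for pair in "$@"; do
    address="${pair%%=*}"   # text before the first '='
    username="${pair#*=}"   # text after the first '='
    printf '  %s: |-\n    username=%s\n' "$address" "$username"
  done
}

# Example (requires a logged-in `oc` session), shown as a comment only:
#   render_windows_instances 192.168.1.85=Administrator | oc apply -f -
```

Each argument becomes one entry in the data section, keyed by the node's IP address.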

The installation/setup can take some time. You can follow the installation process by looking at the WMCO Pod logs:

$ oc logs -l name=windows-machine-config-operator \
  -n openshift-windows-machine-config-operator -f

After a while, you will see the following in the logs:

"Configured instance with address 192.168.1.85 as a worker node"

You should now see the Windows Server added as an OpenShift Node:

$ oc get nodes -l kubernetes.io/os=windows
NAME           STATUS   ROLES    AGE   VERSION
dhcp-host-85   Ready    worker   20m   v1.21.1-1396+38b3eccb7e23fd

To add another Windows Node, you just need to edit the configmap by running oc edit configmap/windows-instances -n openshift-windows-machine-config-operator and adding an entry. For example:

kind: ConfigMap
apiVersion: v1
metadata:
  name: windows-instances
  namespace: openshift-windows-machine-config-operator
data:
  192.168.1.85: |-
    username=Administrator
  192.168.1.92: |-
    username=myadminuser

Windows nodes can be removed by removing the instance's entry in the ConfigMap. This will revert the Windows node back to the state that it was in before, barring any logs and container runtime artifacts.

For example, in order to remove the instance 192.168.1.92 from the above example, the ConfigMap would be changed to the following:

kind: ConfigMap
apiVersion: v1
metadata:
  name: windows-instances
  namespace: openshift-windows-machine-config-operator
data:
  192.168.1.85: |-
    username=Administrator

For a Windows node to be cleanly removed, it must be accessible with the current private key provided to WMCO.

Deploying Sample Workload

Now that we have a Windows OpenShift Node up and running, we can test running a sample Windows Container. Before we begin, you will have to keep a few things in mind.

The Windows Container image you want to run must be compatible with the Windows Server host it runs on. For instance, you cannot run a Windows Container built on Server 2016 on Server 2019. Even more granular, you may not be able to run a container built on Windows 10 on Server 2019. Since we are running Server 2019 version 2004, we will be using the mcr.microsoft.com/windows/servercore:2004 container.

Please consult the official Microsoft documentation for more information about compatibility.

Another thing to keep in mind is that Windows Container images can be VERY large. In some cases, a small base image can be 8 GB in size. This can pose trouble for the Kubernetes scheduler, which has a default timeout of 2 minutes. To work around this, it is suggested to pre-pull any base images you need. For example, I can log in to my Windows node and pull the image with the following command:

PS C:\Users\Administrator> docker pull mcr.microsoft.com/windows/servercore:2004

After it has been pulled onto the host, you should see it in the list of local images:

PS C:\Users\Administrator> docker images
REPOSITORY                             TAG    IMAGE ID       CREATED       SIZE
mcr.microsoft.com/windows/servercore   2004   fa320eb93178   4 weeks ago   5.01GB

When the Windows node was added as an OpenShift node, a taint was placed on the node. This prevents Linux workloads from being scheduled on the Windows node, only to fail. Take a look at the taint on the Windows Node:

$ oc describe nodes -l kubernetes.io/os=windows | grep -i taint

Taints: os=Windows:NoSchedule

Take a note of this taint. We will be deploying this sample web application. Let's take a look at the Deployment as I would like you to take note of two things.

First, you will notice in this section that there is a toleration in place for the taint of the Windows node:

tolerations:
- key: "os"
  value: "Windows"
  effect: "NoSchedule"

Second, in this section you will see that there is a node selector in place that will target nodes that have the label “windows” as the OS:

nodeSelector:
  kubernetes.io/os: windows
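To show where these two fragments sit in a pod spec, here is a minimal sketch that prints a standalone Pod manifest. It is not the sample application's Deployment. The function name render_windows_pod, the pod and container names, and the sleep command are all hypothetical; only the toleration, the node selector, and the image come from this post.

```shell
#!/usr/bin/env bash
# Print a minimal Pod manifest that targets Windows nodes and tolerates
# the os=Windows:NoSchedule taint. $1 is the (hypothetical) pod name.
render_windows_pod() {
  local name="$1"
  cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: ${name}
spec:
  nodeSelector:
    kubernetes.io/os: windows
  tolerations:
  - key: "os"
    value: "Windows"
    effect: "NoSchedule"
  containers:
  - name: example
    image: mcr.microsoft.com/windows/servercore:2004
    # Placeholder command that just keeps the container alive for an hour.
    command: ["powershell.exe", "-Command", "Start-Sleep -Seconds 3600"]
EOF
}
```

Both fields live directly under spec, as siblings of containers.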

This effectively says that this workload will deploy to nodes labeled as Windows nodes and will tolerate the Windows node taint. Go ahead and deploy this application.

$ oc apply -f https://red.ht/3t2QfEh

This will create the win-test-workload namespace along with the win-webserver service with a deployment named win-webserver. You can check the status of the pod to see where it is running:

$ oc get pods -n win-test-workload -o wide
NAME                            READY   STATUS    RESTARTS   AGE    IP           NODE           NOMINATED NODE   READINESS GATES
win-webserver-c56644cc4-vcjn4   1/1     Running   0          3m2s   10.132.0.2   dhcp-host-85   <none>           <none>

As you can see, it is running on the Windows node. You can verify this by logging into the Windows Node and listing the running containers with the docker ps command:

PS C:\Users\Administrator> docker ps
CONTAINER ID   IMAGE                                          COMMAND                  CREATED         STATUS         PORTS   NAMES
1ba736def9db   fa320eb93178                                   "powershell.exe -com…"   9 minutes ago   Up 9 minutes           k8s_windowswebserver_win-webserver-c56644cc4-vcjn4_win-test-workload_ce0037ab-2109-4e21-b2b9-e0e3a90e99c8_0
214da55e2422   mcr.microsoft.com/oss/kubernetes/pause:3.4.1   "/pause.exe"             9 minutes ago   Up 9 minutes           k8s_POD_win-webserver-c56644cc4-vcjn4_win-test-workload_ce0037ab-2109-4e21-b2b9-e0e3a90e99c8_0

You can expose the route of this application just like any other application running on OpenShift:

$ oc expose service/win-webserver -n win-test-workload

Curling the URL (or visiting it in a browser) should return the following:

$ curl http://$(oc get route/win-webserver -n win-test-workload -o jsonpath='{.spec.host}')

<html><body><H1>Windows Container Web Server</H1></body></html>

Conclusion

Red Hat OpenShift for Windows Containers brought the ability to run containerized Windows-based applications in your Azure, AWS, and vSphere IPI installs. In this blog, we introduced a new feature that allows you to “bring your own host” to Windows Containers. This adds the support for Non-Integrated Platform Agnostic Bare Metal UPI installs, and for vSphere UPI Installs as well. This provides OpenShift Administrators with even more flexibility when running in their heterogeneous environments.

We invite you to try out the Windows Machine Config Operator v3.0, and do not forget to catch “Ask an OpenShift Admin” on Red Hat Live Streaming, weekly at 11 a.m. Eastern Time. This show is dedicated to OpenShift administrators, providing an open forum to ask questions and discover new tips and tricks. Please watch episode 43, where we go over Windows Containers BYOH, and stay tuned for future streams involving Windows Containers.

About the author

Christian is a well-rounded technologist with experience in infrastructure engineering, system administration, enterprise architecture, tech support, advocacy, and product management. He is passionate about open source and containerizing the world one application at a time. He is currently a maintainer of the OpenGitOps project, a maintainer of the Argo project, and works as a Technical Marketing Engineer and Tech Lead at Cisco. He focuses on GitOps practices, DevOps, Kubernetes, network security, and containers.
