SAP customers have historically developed large quantities of custom code written in ABAP (Advanced Business Application Programming) inside their SAP systems in order to create applications and extend standard data structures. This custom code is necessary to meet business requirements, but it presents a problem when upgrading SAP versions. Developers need to review all of it to check whether the standard functionality in the new version covers the use case each customization was created for. Does the custom code need to be kept, or can it be discarded? This long review adds massive overhead to the upgrade process, and typically takes from six months to over a year.

A solution to this is to stop developing inside of the SAP core and deploy all the applications and extensions somewhere else. That way the upgrade process can be straightforward, as only standard code is involved and it will not need to be reviewed when upgrading SAP.

This is the approach that SAP endorses for customers migrating to its latest ERP suite, SAP S/4HANA. SAP initially set the deadline for migrating to 2025, but later postponed it to 2027. With the premise “keep the core clean,” SAP is encouraging customers to carve their custom code out of their SAP systems. Of course, this requires a thorough code analysis process to find the code that is being used and needs to be moved out, but there are tools to help with this.

Once this discovery process is complete, customers can move their custom code somewhere else and do all further development there. There are no restrictions on where “somewhere else” can be, which gives customers great freedom of choice and lets developers use the language of their choice, since they no longer strictly have to code in ABAP.
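The outcome of the discovery process described above can be pictured as a simple triage: custom objects that are still in use are candidates to move out of the core, and the rest can be retired. The following is a minimal sketch of that idea only, not the output of any SAP tool. The `CustomObject` type, the `calls_last_year` field and the sample inventory are hypothetical and stand in for whatever a usage analysis actually exports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomObject:
    name: str             # ABAP object name (customer objects conventionally start with Z or Y)
    calls_last_year: int  # hypothetical usage count taken from a usage-analysis export


def triage(objects):
    """Split custom objects into those still in use (move out) and unused ones (retire)."""
    move_out, retire = [], []
    for obj in objects:
        if obj.calls_last_year > 0:
            move_out.append(obj.name)
        else:
            retire.append(obj.name)
    return move_out, retire


# Hypothetical inventory, for illustration only.
inventory = [
    CustomObject("ZSD_PRICING_EXT", 1240),
    CustomObject("ZFI_OLD_REPORT", 0),
    CustomObject("YMM_STOCK_CHECK", 17),
]

move_out, retire = triage(inventory)
print("move out of the core:", move_out)
print("retire:", retire)
```

In practice the decision would also weigh whether standard functionality in the new version already covers the use case, which a usage count alone cannot tell.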

In most companies, different programming languages will be used in different areas. That means having to maintain numerous development platforms, which is not optimal as it makes the IT landscape architecture and its management more complex.

Red Hat OpenShift is one solution to this problem, as it provides a single platform that can be used to deploy and run applications written in many different languages. It also provides many runtimes, so developers do not need to worry about assembling the libraries and other components needed to run their code. As a result, they can be productive much faster and with much greater flexibility.

What is ROSA?

Red Hat OpenShift Service on AWS (ROSA) is a version of Red Hat OpenShift on the Amazon Web Services (AWS) cloud that is offered as a managed service. A Red Hat team of site reliability engineers (SREs) manages the OpenShift platform, including taking care of the upgrade process and making sure the platform performs well. Only the applications that run on it are the customer’s responsibility. This support is delivered jointly by Red Hat and AWS.

As a managed service, ROSA is an efficient option for customers who want to adopt DevOps methodologies by embracing Red Hat OpenShift, but who do not have the in-house expertise to manage the platform, or who do not want to invest in its infrastructure. ROSA’s features and functionalities are exactly the same as those of the on-premises version of Red Hat OpenShift.

How do ROSA and SAP talk to each other?

The main components of this solution are the SAP systems and the ROSA platform. SAP can also be on the AWS cloud or anywhere else (on-premise, on any cloud, or a hybrid cloud mix of both).

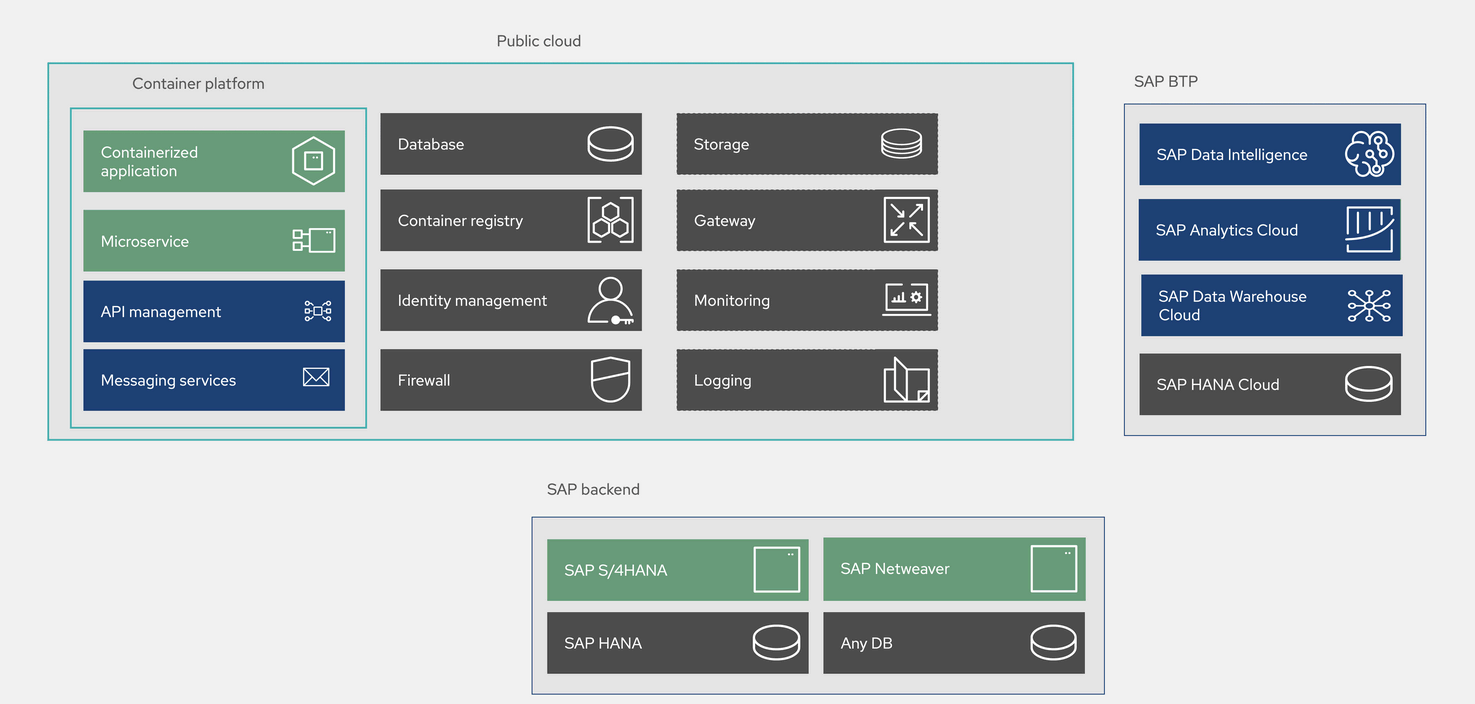

Figure 1. Solution components

In the picture above, we can also see SAP BTP (Business Technology Platform), a platform as a service (PaaS) offered by SAP through which customers can use products like SAP HANA Cloud, SAP Analytics Cloud and SAP Data Warehouse Cloud, among others.

The central piece of this solution allows all these components to connect in order to share data. This is where API integration comes into play. Red Hat Integration (a part of Red Hat Application Foundations) provides an API-first approach with which customers can create all the integrations they need by exposing SAP data structures and business application programming interfaces (BAPIs) and creating APIs to call them.

Red Hat Fuse can be used to create these APIs, and Red Hat 3scale API Management can manage them, using role-based access control (RBAC) to help secure them and allowing usage to be tracked. This helps keep spending under control for those APIs that are offered as a service on public clouds like AWS.
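From the point of view of an application running on ROSA, consuming such an API is an ordinary HTTPS call to the managed endpoint. The sketch below shows what a client might look like, assuming an API that wraps an SAP sales-order lookup. The base URL, the `/sales-orders` path, the `customer` parameter and the bearer-token credential are all hypothetical placeholders, not part of any Red Hat or SAP product interface.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Hypothetical values: replace with the endpoint and credential of the managed API.
API_BASE = "https://api.example.com/sap/v1"
API_TOKEN = "replace-me"


def build_sales_order_request(customer_id, base=API_BASE, token=API_TOKEN):
    """Build (but do not send) a GET request for a customer's sales orders."""
    query = urlencode({"customer": customer_id})
    return urllib.request.Request(
        url=f"{base}/sales-orders?{query}",
        headers={
            "Accept": "application/json",
            "Authorization": f"Bearer {token}",  # assumed credential scheme
        },
        method="GET",
    )


def fetch_sales_orders(customer_id):
    """Send the request and decode the JSON body (needs a real endpoint to work)."""
    request = build_sales_order_request(customer_id)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


# Building the request has no side effects, so it is safe to inspect locally.
req = build_sales_order_request("0000100042")
print(req.get_method(), req.full_url)
```

Because the client only sees the API, the SAP data structure or BAPI behind it can change without affecting the application, as long as the API contract stays the same.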

Red Hat Integration also provides messaging services (Red Hat AMQ) that are very useful when building event-driven architectures. Red Hat Integration can be deployed on both OpenShift and ROSA.
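To illustrate the event-driven pattern mentioned above, the sketch below decouples a producer from a consumer through a queue. It deliberately uses Python's in-memory `queue.Queue` as a stand-in for a broker such as Red Hat AMQ, so it shows only the shape of the interaction and not how to connect to a real broker. The `OrderCreated` event and its fields are hypothetical.

```python
import json
import queue

# In-memory stand-in for a queue hosted on a message broker.
broker = queue.Queue()


def publish_order_created(order_id, amount):
    """Producer side: serialize an event and hand it to the broker."""
    event = {"type": "OrderCreated", "order_id": order_id, "amount": amount}
    broker.put(json.dumps(event))


def consume_all(handler):
    """Consumer side: drain the queue, passing each decoded event to the handler."""
    handled = 0
    while True:
        try:
            message = broker.get_nowait()
        except queue.Empty:
            return handled
        handler(json.loads(message))
        handled += 1


received = []
publish_order_created("4500001234", 99.5)
publish_order_created("4500001235", 12.0)
count = consume_all(received.append)
print(count, [event["order_id"] for event in received])
```

The producer never calls the consumer directly, which is what allows an extension on ROSA to react to business events without being tightly coupled to the system that emits them.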

Figure 2. Integration of ROSA, SAP and SAP BTP

In the figure above, we can see the example of SAP systems deployed on AWS. In this case, they will reside in their own AWS virtual private cloud (VPC) in a private subnet so that they are not accessible from the Internet. Similarly, the ROSA platform will be in its own dedicated AWS VPC.

As we have seen, Red Hat Integration provides the tools to connect the applications and extensions running on ROSA with the SAP systems. At the network level, the two separate VPCs connect using AWS VPC endpoints, which the SAP systems can also use to access other AWS services such as Amazon S3, Amazon Textract or Amazon Translate, all of which can be very useful for SAP. In order to use these AWS services, the AWS SDK for SAP ABAP must be installed in the SAP application layer.

Finally, if customers wish to use the services available on SAP BTP, SAP Cloud Connector needs to be installed in the SAP backend so that the SAP application tier can communicate with SAP BTP.

Conclusion

Developing and keeping custom code inside of SAP is no longer an acceptable practice. By discontinuing it, customers become much more flexible and can save huge amounts of time and money when upgrading their SAP systems.

Customizations and extensions are still often needed, but they should live outside of the SAP core. OpenShift in any of its forms, such as ROSA, is an ideal platform for all applications, including those that interact with SAP. Having only one development platform in the IT ecosystem greatly simplifies it and makes its administration much easier. It also attracts new developers to the companies using OpenShift because they can use modern DevOps methodologies and follow a cloud native approach.

Red Hat Integration is at the center of the solution as it makes all the interactions of the applications with SAP possible with an API-first approach, providing flexibility and reusability. Together with OpenShift, this can be a big boost to developer productivity.

About the author

Ricardo Garcia Cavero joined Red Hat in October 2019 as a Senior Architect focused on SAP. In this role, he developed solutions with Red Hat's portfolio to help customers in their SAP journey. Cavero now works as a Principal Portfolio Architect for the Portfolio Architecture team.
