Introduction

MicroShift is a minimal Kubernetes (k8s) distribution that runs best on ostree-based deployments. Ostree is well suited to edge deployments because it enables immutable (read-only) operating systems, transactional updates, over-the-air delta updates and more. Ostree tooling can ingest artifacts like rpm packages, container images, and OS customizations such as users and services. Adding MicroShift to this is easy and well documented, as MicroShift itself is shipped as rpm packages and container images.

But how do I add my k8s workload to it? Let's assume you have a microservices-based application that consists of a couple of k8s objects like Deployments, Services, Ingresses etc. You have the container images and the k8s manifests (.yaml files) to deploy. How could you add these to your OS image so that even in a completely air-gapped, disconnected, offline edge environment, your workload would be able to start?

This is a tutorial on how to achieve this!

Overview

There are many tutorials and blog posts available that describe how to build an ostree based image, including MicroShift. The MicroShift documentation explains how to add it to an ostree image.

But how can you add your workload, k8s manifests and images?

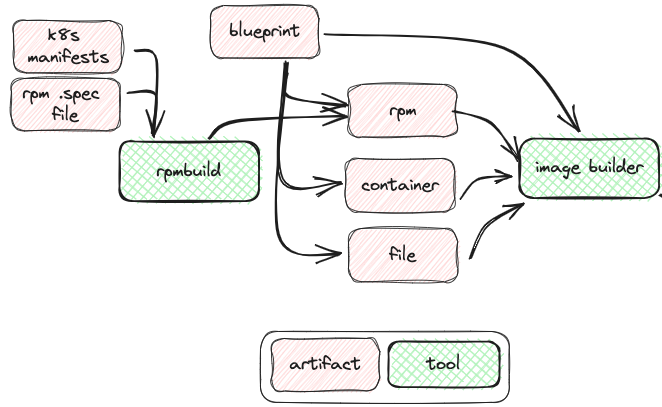

Let’s first try to understand the involved tooling and flow of artifacts:

You create a blueprint toml file that declares all the rpms, container images, files and customizations you need. You hand this over to image builder to build ostree commits. If you need an ISO image to boot from, you do another osbuild run to utilize the simplified installer. For subsequent delta updates, or initial network-based installations, you integrate the new commit into your repo (hosted on an HTTP server) using ostree.

Your k8s workload comes as a manifest: a couple of yaml files describing your deployment, services, ingress, service account etc., possibly using kustomize. These can be used for application deployment with MicroShift by placing the manifest files into the right directory, such as /etc/microshift/manifests. MicroShift will ‘kubectl apply -k’ them on the initial/next startup.
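To make this concrete, here is a minimal sketch of what such a manifest directory could look like. Everything in it is an assumption for illustration: the ~/manifests location (overridable via MANIFEST_DIR), the sample-app namespace, the file names and the quay.io/example image do not come from a real workload.

```shell
#!/bin/sh
set -eu

# Assumed source directory for the manifests; override MANIFEST_DIR to change it.
MANIFEST_DIR="${MANIFEST_DIR:-$HOME/manifests}"
mkdir -p "$MANIFEST_DIR"

# kustomization.yaml lists the resources that get applied with `kubectl apply -k`.
cat > "$MANIFEST_DIR/kustomization.yaml" <<'EOF'
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
namespace: sample-app
resources:
  - sample-app.yaml
EOF

# Hypothetical workload: a namespace plus a single-replica Deployment.
cat > "$MANIFEST_DIR/sample-app.yaml" <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: sample-app
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sample
spec:
  replicas: 1
  selector:
    matchLabels:
      app: sample
  template:
    metadata:
      labels:
        app: sample
    spec:
      containers:
        - name: sample
          image: quay.io/example/sample-app:1.0
EOF

# Show what was written.
ls -1 "$MANIFEST_DIR"
```

If you have the oc client at hand, rendering the directory with `oc kustomize` before packaging is a cheap way to catch YAML mistakes early.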

The big question now is: how can you add your k8s deployment manifests and kustomize files to your blueprint toml file?

At first glance, the “file customization” in the image builder blueprint seems to be the tool of choice. It turns out that it works best for individual files, and is quite cumbersome for whole directories (especially if you have lots of microservice apps to add).

The simple solution to this challenge is to create your own rpm package!

RPM packages can be easily added to your blueprint. If you are like me, with an app dev/cloud background, creating your own rpm package sounds scary and complicated. Actually, it is not; it just adds one more tool to our picture, the rpmbuild tool. The trick is to create a single rpm .spec file that takes the manifests and moves them to the right location inside the rpm package. Now we just have one more tool on the left-hand side of the flow chart.

Let me provide step by step instructions.

The starting point

Let's assume you have set up your image builder host, e.g. by following the official documentation or this nice blog post, and that basic image building works. Let's add your directory of manifests to it.

Setup to build your own RPM package

First, install the tooling. We need rpm development tools and repo tools to create our own rpm repository:

$ sudo dnf install rpmdevtools rpmlint yum-utils createrepo

Now initialize the rpm build tree:

$ rpmdev-setuptree

This creates a directory structure under ~/rpmbuild.

Build your own RPM package that includes the manifests

Now we need a .spec file that adds the application manifests to the rpm package.

In ~/rpmbuild/SPECS, create a file named ushift-workload-manifests.spec with the following content:

Name: ushift-workload-manifests

Version: 0.0.1

Release: 1%{?dist}

Summary: Adds workload manifests to microshift

BuildArch: noarch

License: GPL

Source0: %{name}-%{version}.tar.gz

#Requires: microshift

%description

Adds workload manifests to microshift

%prep

%autosetup

%install

rm -rf $RPM_BUILD_ROOT

mkdir -p $RPM_BUILD_ROOT/%{_prefix}/lib/microshift/manifests

cp -pr ~/manifests $RPM_BUILD_ROOT/%{_prefix}/lib/microshift/

%clean

rm -rf $RPM_BUILD_ROOT

%files

%{_prefix}/lib/microshift/manifests/**

%changelog

* Fri Jul 07 2023 dfroehli@redat.com - 0.0.1

- Initial version

The %install section is the important one. It creates the target directory /usr/lib/microshift/manifests/ and copies the manifests from ~/manifests over there.

So make sure all your YAML files are located in that directory. If you use kustomize, don't forget your kustomization.yaml!
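One detail to watch: the spec declares Source0 and calls %autosetup in %prep, so as far as I can tell rpmbuild will look for ushift-workload-manifests-0.0.1.tar.gz in ~/rpmbuild/SOURCES and stop if it is missing. The following is a minimal sketch of creating that tarball from the manifests directory. The MANIFEST_DIR and SOURCES_DIR variables and the staging layout are my assumptions, not part of the original setup.

```shell
#!/bin/sh
set -eu

# Must match Name: and Version: in the spec file.
NAME=ushift-workload-manifests
VERSION=0.0.1

# Assumed locations; override the variables if your layout differs.
MANIFEST_DIR="${MANIFEST_DIR:-$HOME/manifests}"
SOURCES_DIR="${SOURCES_DIR:-$HOME/rpmbuild/SOURCES}"

# Created empty if missing, only so that this sketch runs standalone.
mkdir -p "$MANIFEST_DIR" "$SOURCES_DIR"

# %autosetup expects the archive to unpack into <name>-<version>/,
# so stage the manifests below a directory with exactly that name.
STAGE=$(mktemp -d)
trap 'rm -rf "$STAGE"' EXIT
mkdir -p "$STAGE/$NAME-$VERSION"
cp -pr "$MANIFEST_DIR" "$STAGE/$NAME-$VERSION/manifests"

# Pack the staged tree and list the archive content as a quick check.
tar -czf "$SOURCES_DIR/$NAME-$VERSION.tar.gz" -C "$STAGE" "$NAME-$VERSION"
tar -tzf "$SOURCES_DIR/$NAME-$VERSION.tar.gz"
```

Since %install copies straight from ~/manifests, the tarball only serves to satisfy %prep. An alternative that should work just as well is to drop the Source0 line and the %autosetup call from the spec.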

When everything is in place, you can build your RPM package:

$ rpmbuild -bb ~/rpmbuild/SPECS/ushift-workload-manifests.spec

This creates the new package under ~/rpmbuild/RPMS (in the noarch subdirectory, since the package is architecture independent).

Add it to your blueprint by using a local repo

Now we need to add that RPM to image builder. For that, we need to create a local RPM repository we can point image builder to.

$ createrepo ~/rpmbuild/RPMS/

Image builder runs as a different user, so make sure it can access the repo:

$ chmod a+rx ~

Now tell image builder about our new local repository. Create repo-local-rpmbuild.toml with this content:

id = "local-rpm-build"

name = "RPMs build locally"

type = "yum-baseurl"

url = "file:///home/builder/rpmbuild/RPMS"

check_gpg = false

check_ssl = false

system = false

And tell image builder about it:

$ sudo composer-cli sources add repo-local-rpmbuild.toml
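The url in repo-local-rpmbuild.toml hard-codes /home/builder, which only fits if your build user is called builder. A small sketch that writes the same file using the current user's home directory instead; the RPMS_DIR and REPO_TOML variables are names I made up for this example.

```shell
#!/bin/sh
set -eu

# Assumed defaults; override them if your rpmbuild tree lives elsewhere.
RPMS_DIR="${RPMS_DIR:-$HOME/rpmbuild/RPMS}"
REPO_TOML="${REPO_TOML:-repo-local-rpmbuild.toml}"

# Same fields as shown above; only the url is derived from RPMS_DIR.
# RPMS_DIR is an absolute path, so the result has the form file:///...
cat > "$REPO_TOML" <<EOF
id = "local-rpm-build"
name = "RPMs build locally"
type = "yum-baseurl"
url = "file://$RPMS_DIR"
check_gpg = false
check_ssl = false
system = false
EOF

cat "$REPO_TOML"
```

After regenerating the file, add the source to image builder again with the composer-cli command shown above.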

Now you can add the rpm to your toml blueprint, by adding the following lines:

…

[[packages]]

name = "ushift-workload-manifests"

version = "*"

…

Don't forget to push the updated blueprint to image builder:

$ sudo composer-cli blueprints push my-blueprint.toml

That is already enough to get the manifests added and applied on startup. If you keep it like this, the required container images for your workload will be pulled over the network.

Add the workload container images to the blueprint

Maybe you want to also embed the container images so that you have a fully self-contained commit or ISO image that can start everything, even without any network connection.

For that, we need to render your manifests, grep all image references out of them and translate those into blueprint container sources. The following command does the trick:

$ oc kustomize ~/manifests | grep "image:" | grep -oE '[^ ]+$' | while read -r line; do echo -e "[[containers]]\nsource = \"${line}\"\n"; done >> my-blueprint.toml
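The one-liner emits one entry per occurrence of an image, so an image used by several containers ends up in the blueprint more than once, and image values that are quoted in the YAML keep their quotes. Here is a slightly more defensive variant, sketched as a shell function. The name images_to_blueprint and the sample input are made up for illustration; the input merely stands in for the output of `oc kustomize ~/manifests`.

```shell
#!/bin/sh
set -eu

# Read rendered manifests on stdin and print one [[containers]] entry
# per unique image, with the image written as a quoted TOML string.
images_to_blueprint() {
    grep -E '^[[:space:]-]*image:' \
        | sed -E 's/^[[:space:]-]*image:[[:space:]]*//; s/[[:space:]]+$//' \
        | tr -d "\"'" \
        | sort -u \
        | while read -r image; do
            printf '[[containers]]\nsource = "%s"\n\n' "$image"
        done
}

# Demo input: two distinct images, one of them referenced twice.
images_to_blueprint <<'EOF'
    spec:
      containers:
        - name: web
          image: quay.io/example/web:1.0
        - image: "quay.io/example/api:2.1"
          name: api
      initContainers:
        - name: init
          image: quay.io/example/web:1.0
EOF
```

In a real run you would feed it the rendered manifests, e.g. `oc kustomize ~/manifests | images_to_blueprint >> my-blueprint.toml`. Note that it does not strip trailing YAML comments after an image value.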

Again, don't forget to push the updated blueprint to image builder:

$ sudo composer-cli blueprints push my-blueprint.toml

If your workload containers are located in a private repository, don't forget to provide image builder with the necessary pull secrets. To do so, set auth_file_path in the [containers] section of the osbuild worker configuration in /etc/osbuild-worker/osbuild-worker.toml to point to the secret. You might need to create the directory and the file first. It should look like this:

[containers]

auth_file_path = "/home/builder/pull-secret.json"
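If you do not have a pull secret file yet, the following sketch writes one in the containers auth file format (an "auths" object keyed by registry, with a base64-encoded user:password value). The registry name, user, password and output path are placeholders I chose for illustration; replace them with your own values.

```shell
#!/bin/sh
set -eu

# Hypothetical registry and credentials; replace with your own.
REGISTRY="${REGISTRY:-registry.example.com}"
REG_USER="${REG_USER:-builder}"
REG_PASSWORD="${REG_PASSWORD:-changeme}"
AUTH_FILE="${AUTH_FILE:-./pull-secret.json}"

# The auth value is base64("user:password"); tr removes any line wrapping.
AUTH=$(printf '%s:%s' "$REG_USER" "$REG_PASSWORD" | base64 | tr -d '\n')

cat > "$AUTH_FILE" <<EOF
{
  "auths": {
    "$REGISTRY": {
      "auth": "$AUTH"
    }
  }
}
EOF

echo "wrote $AUTH_FILE"
```

Running `podman login --authfile <file> <registry>` should produce an equivalent file without hand-crafting the JSON. Either way the file contains credentials, so keep its permissions as tight as the image builder worker allows.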

Now everything for a fully self-contained image is prepared and we can start the build:

$ sudo composer-cli compose start-ostree my-blueprint edge-commit

From here, you can proceed with your regular ostree image flow: wait for the build to complete, export the image, and either integrate it into your ostree repository or create a bootable ISO.

Verifying that it worked

Let's assume you have integrated the new build into your ostree repository and have an edge device installed from the previous version. Let's check that our new rpm package is actually part of the update:

# rpm-ostree upgrade

Validating checksum 'a4984e5ad8418428a8fc97fbfca0c7a664f4f3278b18993ad0e9e5fce26a13e5'

1 metadata, 0 content objects fetched; 196 B transferred in 0 seconds; 0 bytes content written

2 delta parts, 2 loose fetched; 44 KiB transferred in 0 seconds; 0 bytes content written

Staging deployment... done

Added:

ushift-workload-manifests-0.0.1-1.el9.noarch

Run "systemctl reboot" to start a reboot

So the package is being added, great! Keep in mind that you have to reboot before the package is actually visible. After the reboot, you can verify with:

# rpm -qa | grep workload

ushift-workload-manifests-0.0.1-1.el9.noarch

You can also see the manifests in the file system:

# ll /usr/lib/microshift/manifests/

total 8

-rw-r--r--. 2 root root 135 Jan 1 1970 kustomization.yaml

-rw-r--r--. 2 root root 1650 Jan 1 1970 microshift-sample-app.yaml

And check that the app is running:

# oc get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

openshift-dns dns-default-w7glx 2/2 Running 0 42s

openshift-dns node-resolver-qz7wc 1/1 Running 0 43s

[...]

storage-example sample-6587459c47-hgrwl 1/1 Running 3 27h

In this example, it’s the last pod in the list.

Voila! This is how you can integrate your k8s application workload into an ostree-based OS image with MicroShift. Enjoy!

About the author

Daniel works as a Principal Product Manager at Red Hat. He is responsible for defining and managing the Red Hat OpenShift edge related projects including: MicroShift, Red Hat Device Edge and Single Node OpenShift products. Daniel is a catalyst that brings together the necessary resources (people, technology, methods) to make projects and products a success. Daniel has more than 25 years of experience in IT. In the past years, Daniel has focused on hybrid cloud and container technologies in the Industrial space.
