External Secrets Operator (ESO) is a Kubernetes operator that integrates with external secret management systems such as AWS Secrets Manager, HashiCorp Vault, Google Secret Manager, Azure Key Vault, and many more. The operator reads information from external APIs and automatically injects the values into a Kubernetes secret.

You can read more about External Secrets Operator here.

In this blog post, we will create an Argo CD application to showcase how External Secrets Operator fetches a GitLab CI variable and creates a Kubernetes secret object in the cluster.

Below is the workflow diagram showing how ROSA, ArgoCD, GitLab CI, and ESO work together:

Product versions used in this blog post:

| Name | Version |
| --- | --- |
| Red Hat OpenShift on AWS | 4.12.27 |
| External Secrets Operator | 0.9.3 |
| Red Hat OpenShift GitOps (Argo CD) Operator | 1.5.10 |
| GitLab Enterprise Edition | 16.3.0 |
| OC CLI | 4.12.x |

GitLab Configuration

Referring to the above diagram, we will set up GitLab CI as an external secret store.

To talk to the GitLab API, ESO needs a GitLab access token and a project or group ID. The ESO secret store uses the access token to authenticate against the GitLab APIs:

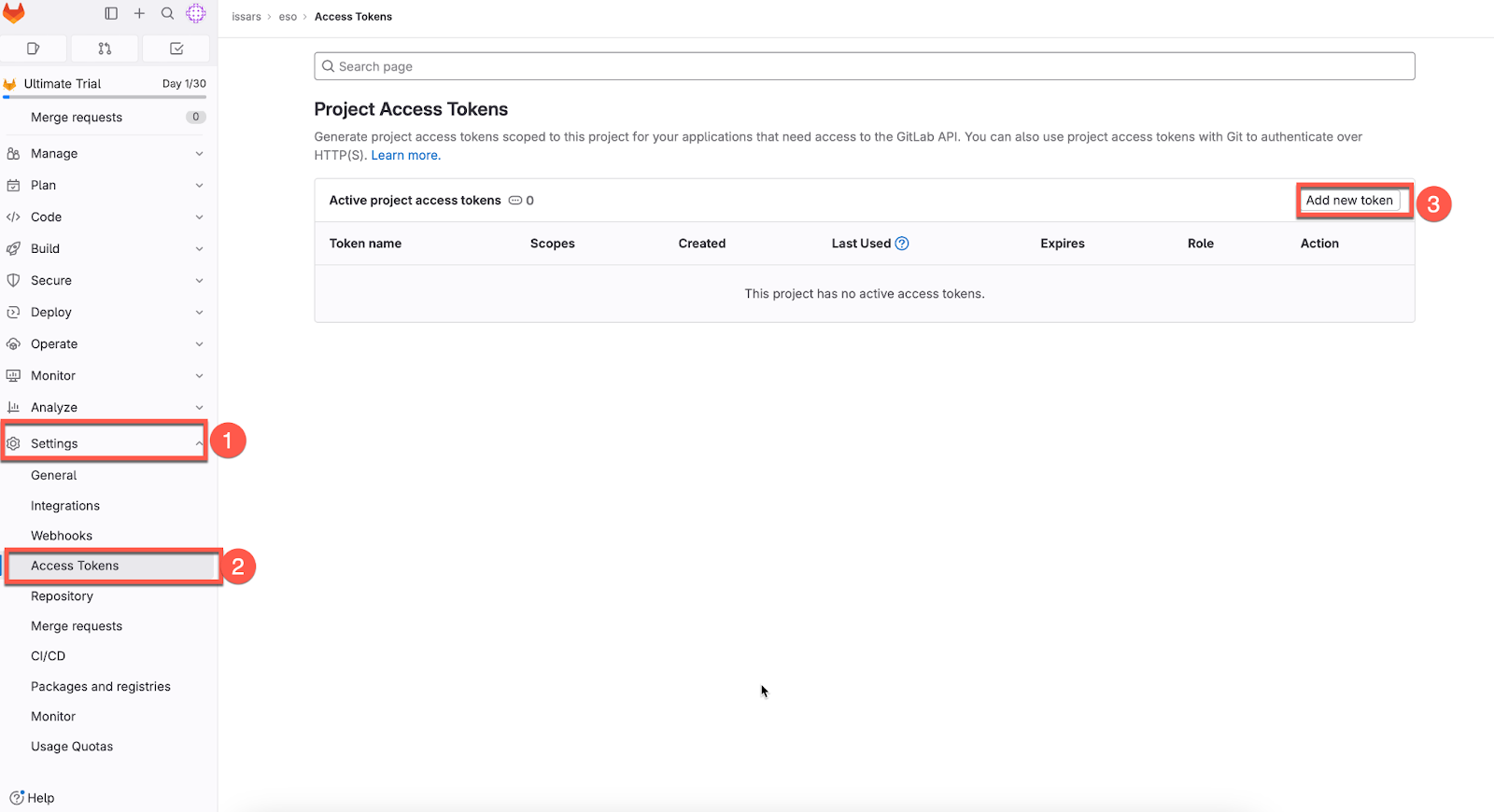

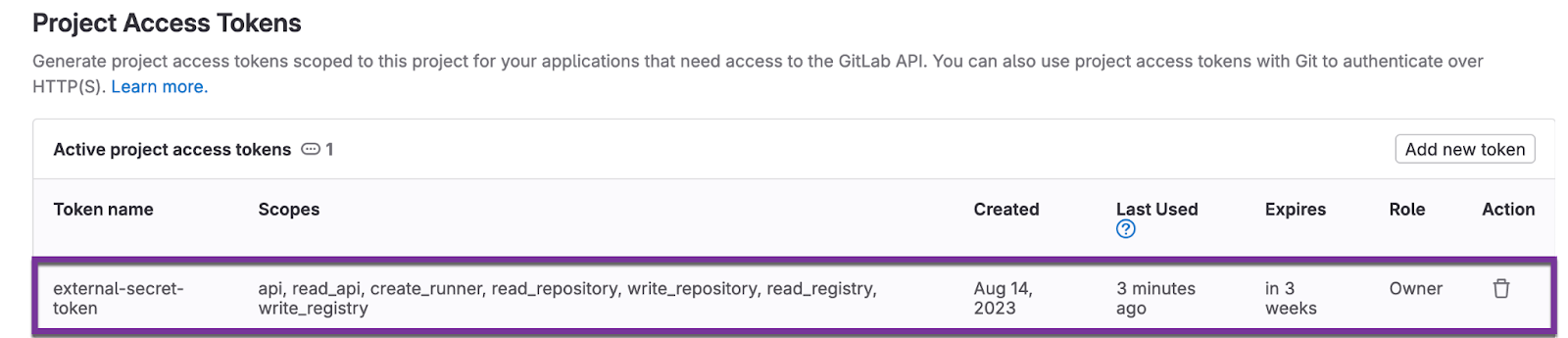

To create a token in GitLab, navigate to Settings -> Access Tokens in the left pane and add a new token.

Set the token name, expiration date, role, and scopes (I selected all scopes, but api and read_api should be enough for ESO to fetch the GitLab CI variables).

Be sure to save the token, as you won't be able to view it again.

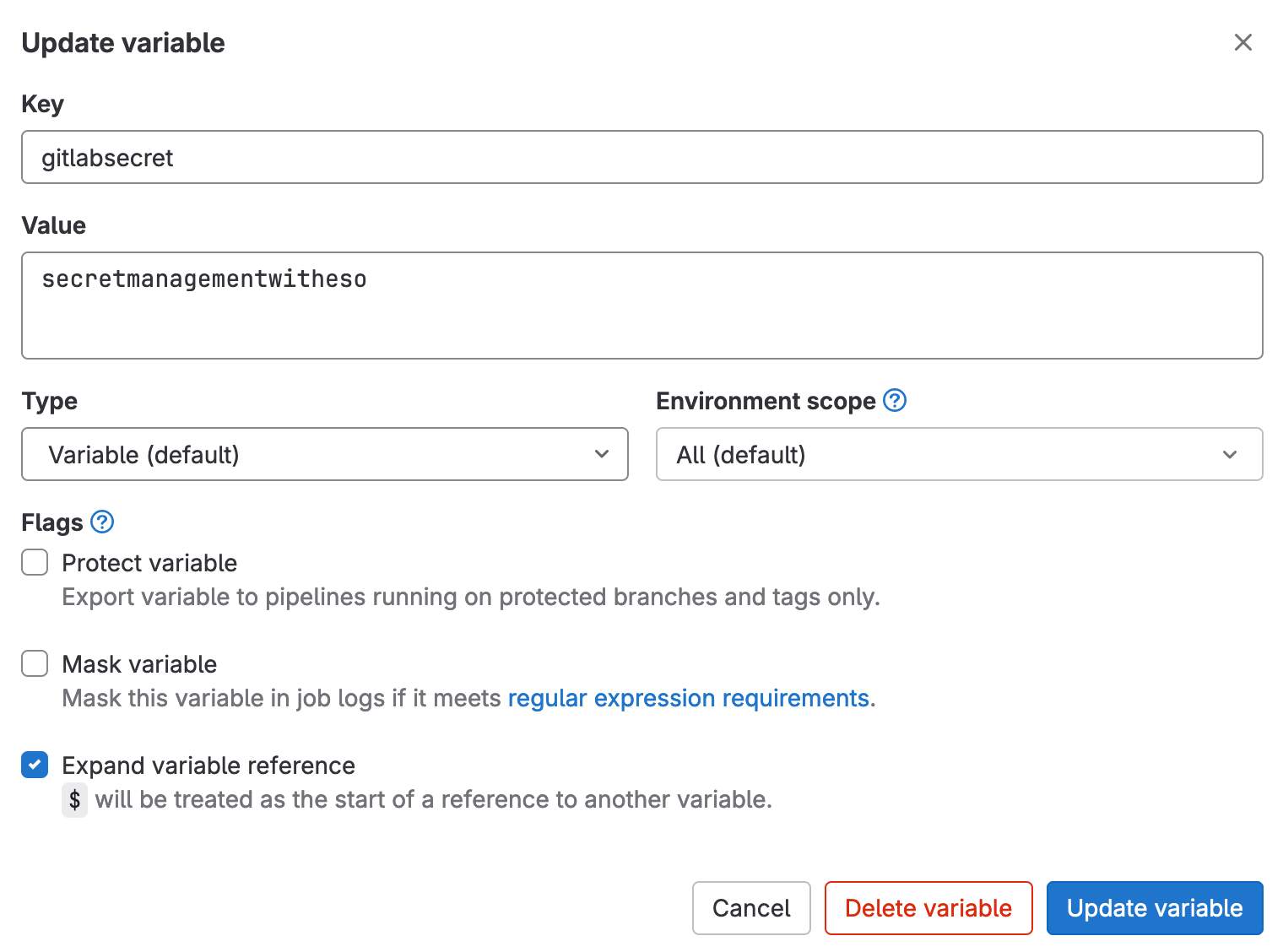

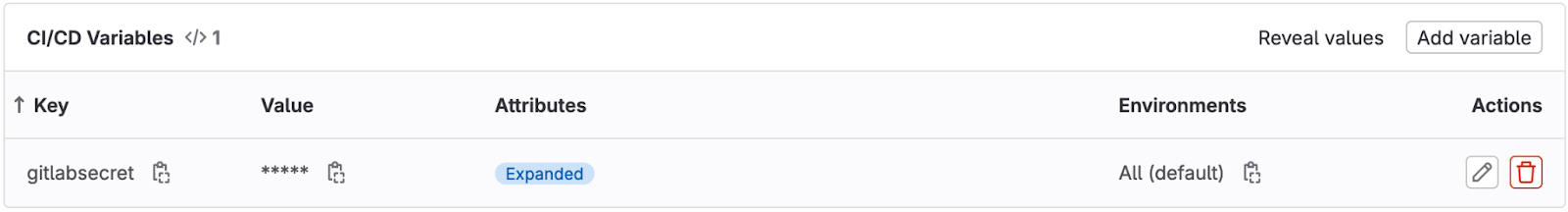

Now we will define the secret as a CI/CD variable in the GitLab UI. Sensitive values such as tokens or passwords should be stored under Settings in the GitLab UI, not in the .gitlab-ci.yml file.

In the GitLab UI, navigate to Settings -> CI/CD -> Variables and add the variable.
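To illustrate keeping secret values out of the pipeline file, here is a minimal sketch of a .gitlab-ci.yml that only references a variable by name. The job name `verify-variable` is hypothetical, and `gitlabsecret` is the variable key used later in this post. ESO reads the variable through the GitLab API, so a pipeline job like this is optional.

```yaml
# .gitlab-ci.yml (illustrative sketch; the job name is an assumption)
verify-variable:
  stage: test
  script:
    # Fails the job if the variable is not defined, without printing its value.
    - test -n "$gitlabsecret"
```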

ESO Configuration

Now we will configure External Secrets Operator and Red Hat OpenShift GitOps (Argo CD) on the Red Hat OpenShift on AWS cluster. I will not cover the installation of ESO and Red Hat OpenShift GitOps, as it is relatively straightforward. Both operators can also be installed declaratively following GitOps principles, but I will leave that for another time.

Note that if you install External Secrets Operator from the OpenShift OperatorHub, you then need to create a namespace and, in it, an OperatorConfig custom resource (operator.external-secrets.io/OperatorConfig) to deploy an instance of External Secrets. Let's create a namespace, "external-secrets", to host the OperatorConfig CR. Below are the manifest files for reference:

OperatorConfig Namespace:

    apiVersion: v1
    kind: Namespace
    metadata:
      name: external-secrets
      annotations:
        argocd.argoproj.io/sync-wave: "-10"

OperatorConfig Custom Resource:

    apiVersion: operator.external-secrets.io/v1alpha1
    kind: OperatorConfig
    metadata:
      name: cluster
      namespace: external-secrets
      annotations:
        argocd.argoproj.io/sync-wave: "2"
        argocd.argoproj.io/sync-options: SkipDryRunOnMissingResource=true
    spec:
      prometheus:
        enabled: true
        service:
          port: 8080
      resources:
        requests:
          cpu: 10m
          memory: 96Mi
        limits:
          cpu: 100m
          memory: 256Mi

Next, we will create a Kubernetes secret object containing the GitLab access token. External Secrets Operator uses this token to call the GitLab APIs and fetch the secrets stored as GitLab CI variables.

Secret Containing GitLab Access Token:

    apiVersion: v1
    kind: Secret
    metadata:
      name: external-secret-token
      namespace: openshift-config
      labels:
        type: gitlab
    type: Opaque
    stringData:
      token: "XXXXXXXX" # GitLab project access token goes here

It's time to create the SecretStore, which specifies how to access GitLab to fetch the project variables as secrets. It uses the GitLab access token created earlier to authenticate against the GitLab API:

SecretStore Resource:

    apiVersion: external-secrets.io/v1beta1
    kind: SecretStore
    metadata:
      name: gitlab-demo-secret-store
      namespace: openshift-config
    spec:
      provider:
        gitlab:
          url: https://gitlab.com/ # replace with your GitLab URL
          auth:
            SecretRef:
              accessToken:
                name: external-secret-token # refers to the GitLab token secret
                key: token
          projectID: "48411817" # replace with your GitLab project ID

Note: If you need a global, cluster-wide SecretStore that can be referenced from all namespaces, a ClusterSecretStore would be more appropriate.
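As a rough sketch of that alternative, the SecretStore above could be expressed as a ClusterSecretStore along these lines. The resource name `gitlab-demo-cluster-secret-store` is made up for illustration. Because a ClusterSecretStore is not namespaced, the token reference carries an explicit `namespace`.

```yaml
apiVersion: external-secrets.io/v1beta1
kind: ClusterSecretStore
metadata:
  name: gitlab-demo-cluster-secret-store # hypothetical name
spec:
  provider:
    gitlab:
      url: https://gitlab.com/ # replace with your GitLab URL
      auth:
        SecretRef:
          accessToken:
            name: external-secret-token
            namespace: openshift-config # namespace holding the token secret
            key: token
      projectID: "48411817" # replace with your GitLab project ID
```

An ExternalSecret in any namespace would then set `secretStoreRef.kind` to `ClusterSecretStore` and reference this name.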

The last resource to create is an ExternalSecret resource that declares what data to fetch (a GitLab CI variable in this case). It references a SecretStore, which knows how to access that data.

ExternalSecret Resource:

    apiVersion: external-secrets.io/v1beta1
    kind: ExternalSecret
    metadata:
      name: gitlab-demo-external-secret
      namespace: openshift-config
    spec:
      refreshInterval: '15s'
      secretStoreRef:
        kind: SecretStore
        name: gitlab-demo-secret-store # must match the secret store created earlier
      target:
        name: eso-secret # name of the secret to be created on the target cluster
        creationPolicy: Owner
        template:
          data:
            secretKey: "{{ .secretKey }}"
      data:
        - secretKey: secretKey # key of the secret to be created on the cluster
          remoteRef:
            key: gitlabsecret # key of the CI variable in GitLab

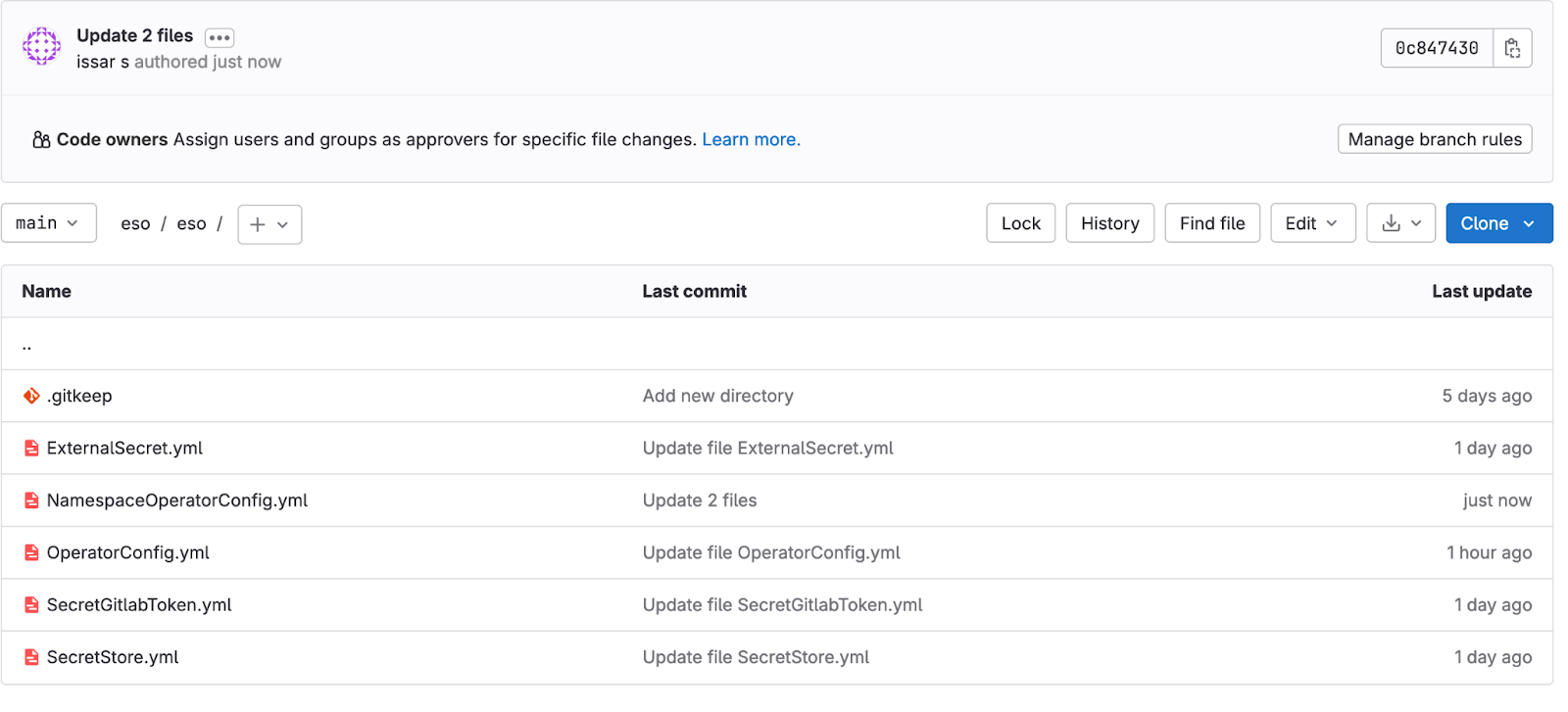

Below is a snapshot of my Git repository with the above files:

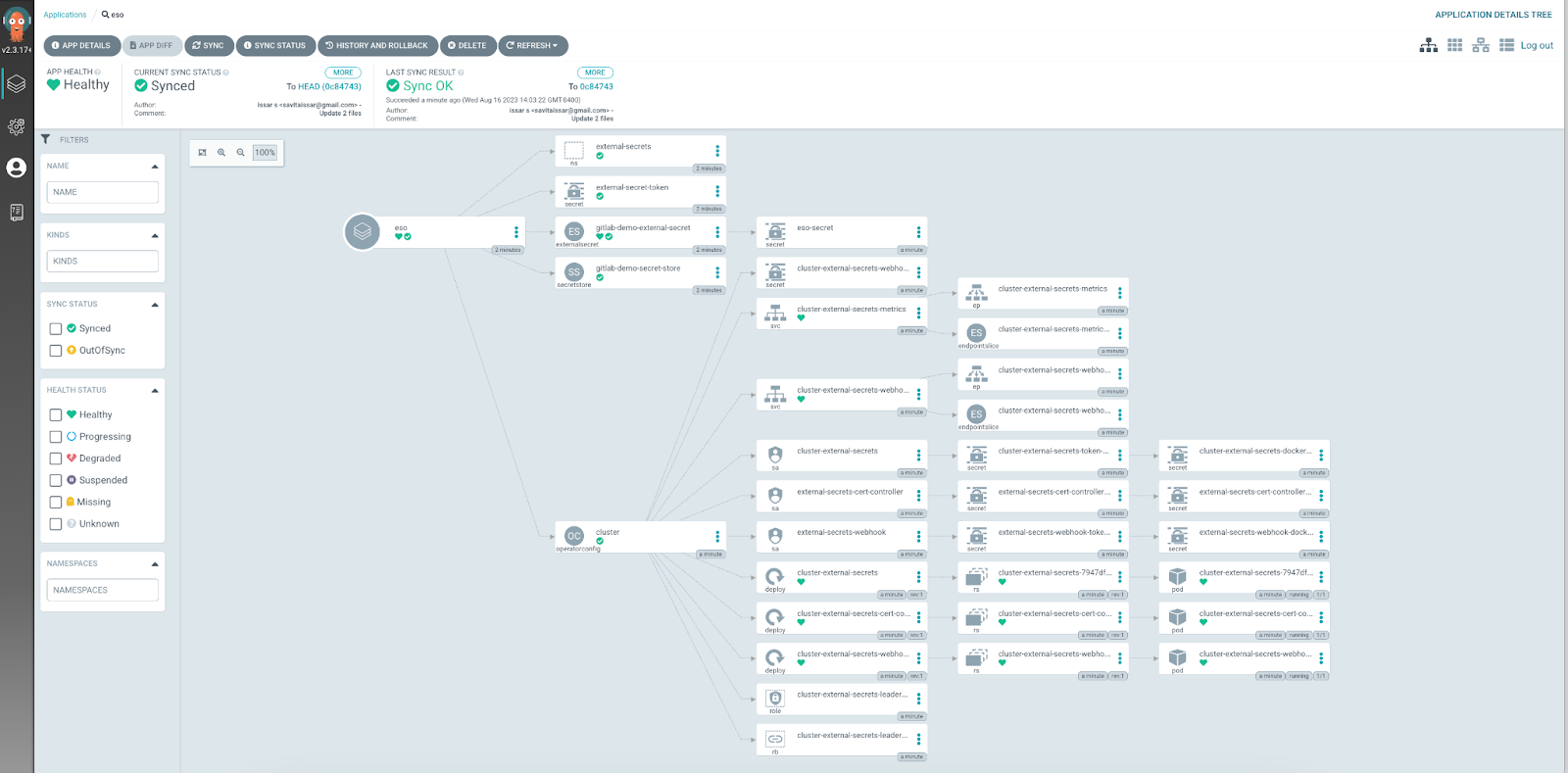

Let's add my GitLab repository to Argo CD and deploy the External Secrets manifests to our Red Hat OpenShift on AWS cluster:

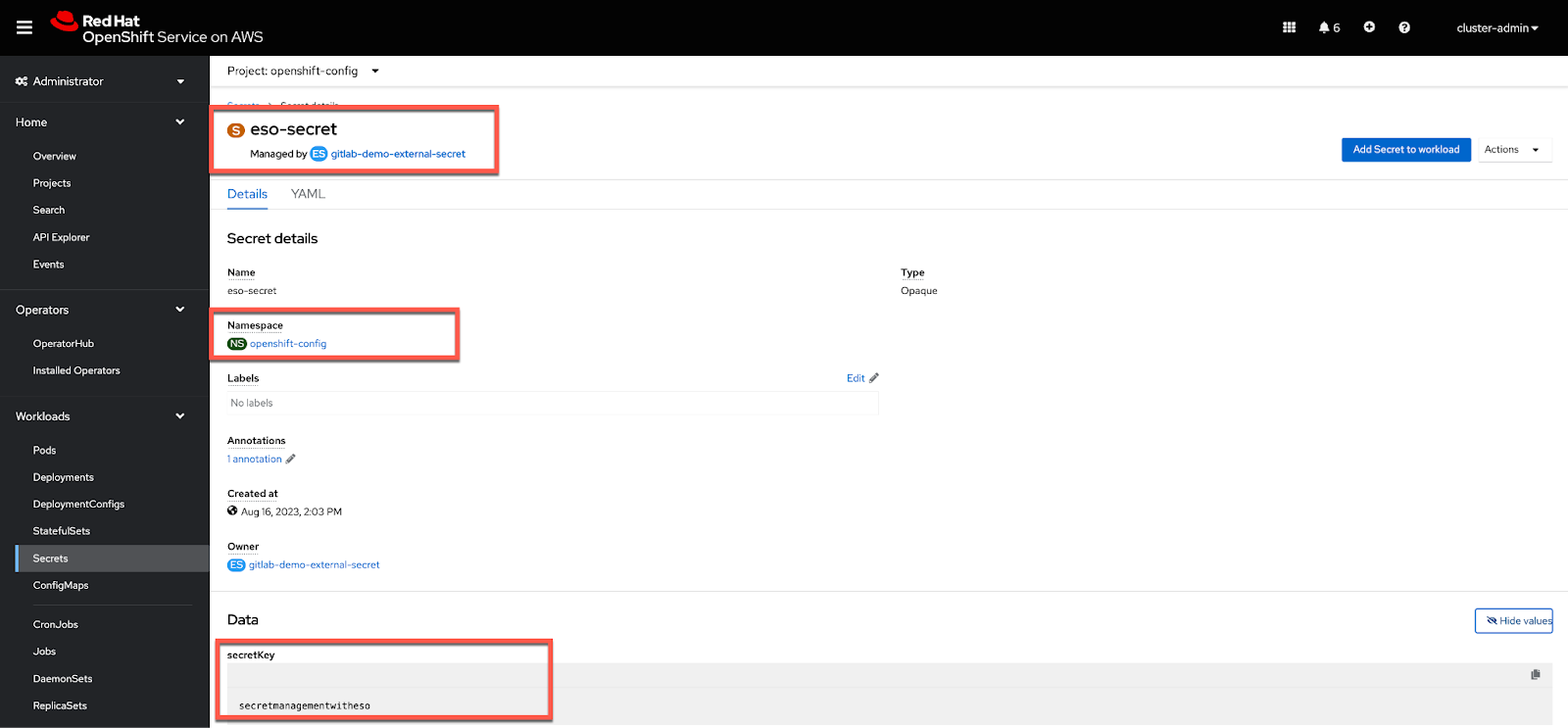

We will now create an Argo CD application to showcase the demo. In our test case, External Secrets Operator should fetch the GitLab CI variable with key "gitlabsecret" and value "secretmanagementwitheso", and create a secret object named "eso-secret" with the value "secretmanagementwitheso" in the "openshift-config" namespace.

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: eso
    spec:
      destination:
        name: ''
        namespace: ''
        server: 'https://kubernetes.default.svc'
      source:
        path: eso
        repoURL: 'https://gitlab.com/issars2/eso.git'
        targetRevision: HEAD
      project: default
      syncPolicy:
        automated:
          prune: false
          selfHeal: false

As shown below, the ArgoCD application synced successfully:

Navigate back to the OpenShift console under the "openshift-config" namespace to ensure the secret "eso-secret" is created:

As you can see, ESO fetched the GitLab CI/CD variable and created the secret in the desired namespace.
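For reference, the resulting object should look roughly like the sketch below. This is an abridged approximation, not captured cluster output: the metadata that ESO and Kubernetes add (owner references, annotations, timestamps) is omitted, and the value is simply the base64 encoding of "secretmanagementwitheso".

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: eso-secret
  namespace: openshift-config
type: Opaque
data:
  # base64 of "secretmanagementwitheso"
  secretKey: c2VjcmV0bWFuYWdlbWVudHdpdGhlc28=
```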

Wrap-up

I find ESO with GitLab CI a very neat solution for organizations that don't have an enterprise-grade secret management solution.

Additionally, ESO not only creates the secrets but also keeps them in sync with the external secret store.
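The sync cadence is controlled by the `refreshInterval` field of the ExternalSecret. The demo uses 15s so that changes show up quickly. A fragment like the following would poll the GitLab API less often; the 1h value is only an example, not a recommendation.

```yaml
# Fragment of an ExternalSecret spec (the 1h value is an illustrative assumption)
spec:
  refreshInterval: "1h" # how often ESO re-reads the value from GitLab
```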

ESO supports a wide variety of secret types, including Docker config, TLS certificates, SSH keys, and more. Refer to the ESO Secret Types for more information.
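As a hedged sketch of one such type, an ExternalSecret can set `target.template.type` to produce a Docker config secret. The sketch assumes a GitLab CI variable named `registry_dockerconfig` (hypothetical) that holds a complete Docker config JSON document. The resource and secret names are also made up for illustration.

```yaml
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: gitlab-demo-dockerconfig # hypothetical name
  namespace: openshift-config
spec:
  refreshInterval: "1h"
  secretStoreRef:
    kind: SecretStore
    name: gitlab-demo-secret-store
  target:
    name: eso-pull-secret # hypothetical name of the secret to create
    template:
      type: kubernetes.io/dockerconfigjson
      data:
        .dockerconfigjson: "{{ .dockerconfig | toString }}"
  data:
    - secretKey: dockerconfig
      remoteRef:
        key: registry_dockerconfig # hypothetical GitLab CI variable
```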

I have used Red Hat OpenShift on AWS as a reference in this blog post; however, the External Secrets Operator configuration will work for all managed and on-premises OpenShift offerings.
