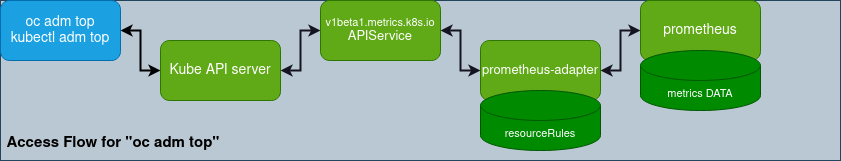

To provide reliable services, you need to monitor their performance by examining the containers and pods in your OpenShift (Kubernetes) clusters. OpenShift provides the oc adm top command for that purpose. It is built on kubectl top internally and performs the same function.

This post focuses on using the command to monitor memory resources. The memory metrics in OpenShift differ somewhat from the traditional Linux figures reported by top and free, and this post aims to help you better understand them.

Using oc adm top pods to monitor the memory usage of pods

oc adm top pods shows the memory usage of pods based on the container_memory_working_set_bytes metric. It obtains the values through the prometheus-adapter service in the openshift-monitoring namespace, which serves them according to the metrics rules in the Prometheus adapter configuration.

    // The memory metrics depend on the "v1beta1.metrics.k8s.io" API service,
    // which "prometheus-adapter" serves according to its metrics rules (container_memory_working_set_bytes).
    $ oc adm top pod -n test
    NAME                     CPU(cores)   MEMORY(bytes)
    httpd-675fd5bfdd-96br8   1m           53Mi

    $ oc get pods.metrics.k8s.io httpd-675fd5bfdd-96br8 -n test -o yaml
    apiVersion: metrics.k8s.io/v1beta1
    containers:
    - name: httpd
      usage:
        cpu: 1m
        memory: 55048Ki
    kind: PodMetrics
    :

    $ oc get apiservice v1beta1.metrics.k8s.io
    NAME                     SERVICE                                   AVAILABLE   AGE
    v1beta1.metrics.k8s.io   openshift-monitoring/prometheus-adapter   True        21d

    $ oc get cm/adapter-config -o yaml -n openshift-monitoring
    apiVersion: v1
    data:
      config.yaml: |-
        "resourceRules":
          :
          "memory":
            "containerLabel": "container"
            "containerQuery": |
              sum by (<<.GroupBy>>) (
                container_memory_working_set_bytes{<<.LabelMatchers>>,container!="",pod!=""}
              )
    :
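
The transcript above shows 53Mi from oc adm top pod but 55048Ki in the PodMetrics object. As a rough check, the two values line up if both commands saw the same sample and the CLI drops the fractional part when printing Mi. Both points are assumptions about display behavior, not something this post states, and the helper name is hypothetical.

```python
def ki_to_whole_mi(kibibytes: int) -> int:
    """Convert KiB to MiB, discarding the fractional part (assumed display rule)."""
    return kibibytes // 1024


# PodMetrics memory value taken from the transcript above.
pod_metrics_ki = 55048

print(f"{pod_metrics_ki / 1024:.2f}Mi exact")        # 53.76Mi exact
print(f"{ki_to_whole_mi(pod_metrics_ki)}Mi shown")   # 53Mi shown
```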

The container_memory_working_set_bytes value is derived from the cgroup memory control files as follows:

container_memory_working_set_bytes = memory.usage_in_bytes - total_inactive_file

Refer to the cAdvisor source code that computes the working set for more details.
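
The formula above can be sketched as a small function. This is a minimal illustration rather than the collector's actual code: the function name and the sample values are hypothetical, and the clamp to zero is a defensive guard for the case where the inactive file cache is reported as larger than the usage.

```python
MIB = 1024 * 1024


def working_set_bytes(usage_in_bytes: int, total_inactive_file: int) -> int:
    """Working set = memory.usage_in_bytes - total_inactive_file, never below zero."""
    if usage_in_bytes < total_inactive_file:
        return 0
    return usage_in_bytes - total_inactive_file


# Hypothetical sample: 80 MiB charged to the cgroup, 25 MiB of it inactive file cache.
ws = working_set_bytes(80 * MIB, 25 * MIB)
print(ws // MIB)  # 55
```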

The top command in Linux shows process memory usage in three columns: VIRT (virtual memory), RES (resident memory), and SHR (shared memory). These differ from the pod memory usage values that OpenShift reports, so it is worth understanding why OpenShift (Kubernetes) adopted the working set.

The Kubernetes documentation, under Measuring resource usage - Memory, describes the working set as the amount of memory in use that cannot be freed under memory pressure:

In an ideal world, the "working set" is the amount of memory in-use that cannot be freed under memory pressure. However, calculation of the working set varies by host OS, and generally makes heavy use of heuristics to produce an estimate.

The Kubernetes model for a container's working set expects that the container runtime counts anonymous memory associated with the container in question. The working set metric typically also includes some cached (file-backed) memory, because the host OS cannot always reclaim pages.

In other words, the working set is the appropriate metric for anticipating OOM kills when you set resources.limits.memory on a pod's containers. When usage reaches the memory limit, the kernel first tries to reclaim memory within the cgroup, for example by dropping reclaimable caches, and an OOM event kills processes in the container only if that reclaim fails.

However, the working set still includes part of the page cache, which exists to speed up disk I/O. For example, when a process loads large files, the page cache can grow unexpectedly and make the working set larger than the memory the pod actually holds and cannot give back.

As a result, the working set is intended to show how much memory the pods need in order to run. Even when the working set reaches the limit, an OOM event does not always follow, because part of it is cache that can still be reclaimed. So when monitoring pod memory, treat the working set as an estimate of memory usage and its trend.
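
To make the effect of the page cache concrete, here is a simplified sketch with hypothetical numbers. It assumes usage consists only of anonymous memory plus active and inactive file cache, which ignores kernel memory and other charges, so read it as an illustration of the reasoning rather than an exact accounting. The class and field names are made up for this example.

```python
from dataclasses import dataclass

MIB = 1024 * 1024


@dataclass
class MemorySample:
    anon: int           # anonymous memory (heap, stack), in bytes
    active_file: int    # page cache on the active list, in bytes
    inactive_file: int  # page cache on the inactive list, in bytes

    @property
    def usage(self) -> int:
        # Simplified: real cgroup usage also includes other charges.
        return self.anon + self.active_file + self.inactive_file

    @property
    def working_set(self) -> int:
        # Only the inactive file cache is subtracted, so active cache remains.
        return self.usage - self.inactive_file


# Hypothetical pod that has just read some large files.
sample = MemorySample(anon=200 * MIB, active_file=250 * MIB, inactive_file=100 * MIB)
limit = 512 * MIB

print(sample.working_set // MIB)                     # 450
print(f"{sample.working_set / limit * 100:.1f}%")    # 87.9%
print(sample.anon // MIB)                            # 200
```

In this sample the working set sits at about 88% of the limit, although only 200 MiB is anonymous memory; the rest is file cache that the kernel may still be able to reclaim.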

Using oc adm top nodes to monitor memory usage of nodes

oc adm top nodes reports memory usage differently from oc adm top pods. Its figures are meant for evaluating whether a node has enough memory left to schedule more pods.

By default, oc adm top nodes calculates the percentage against Allocatable, a logical total memory size, rather than the actual total size. To see usage relative to the actual memory capacity of your nodes, run oc adm top nodes --show-capacity instead.

Remember that OpenShift (Kubernetes) is a container platform whose most important workload unit is the pod, so its memory usage metrics differ from those of traditional Linux hosts. Applying a Linux host resource mindset to OpenShift (Kubernetes) nodes leads to misunderstanding. Allocatable is the total amount of resources available to pods; the Kubernetes scheduler considers only Node Allocatable when deciding whether a pod's memory fits on a node.

The total memory size of Allocatable is calculated as follows:

[Allocatable] = [Node Capacity] - [system-reserved] - [Hard-Eviction-Thresholds] - [Pre-allocated memory like huge pages]
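
As a worked example of the formula above, the following sketch plugs in hypothetical values in MiB. The function name and every number are assumptions chosen for illustration; real values come from the node's kubelet configuration.

```python
def allocatable_mib(capacity: int, system_reserved: int,
                    hard_eviction: int, preallocated: int = 0) -> int:
    """Allocatable = capacity - system-reserved - hard eviction threshold - pre-allocated memory."""
    return capacity - system_reserved - hard_eviction - preallocated


# Hypothetical 16 GiB node with 1 GiB system-reserved,
# a 100 MiB hard eviction threshold, and no huge pages.
print(allocatable_mib(capacity=16384, system_reserved=1024, hard_eviction=100))  # 15260
```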

The node memory usage is collected as node_memory_MemTotal_bytes - node_memory_MemAvailable_bytes. As a result, oc adm top nodes reports MEMORY% as (node memory usage / Allocatable) x 100.

    // "--show-capacity" calculates the node memory usage percentage
    // against "MemTotal" in "/proc/meminfo" instead of "Allocatable".
    $ oc adm top nodes test-ocp-2jpnc-worker-0-v5mpd
    NAME                            CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    test-ocp-2jpnc-worker-0-v5mpd   952m         12%    7986Mi          53%

    $ oc adm top nodes test-ocp-2jpnc-worker-0-v5mpd --show-capacity
    NAME                            CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    test-ocp-2jpnc-worker-0-v5mpd   638m         7%     8041Mi          50%

    // As shown below, the node memory usage is
    // "node_memory_MemTotal_bytes" minus "node_memory_MemAvailable_bytes".
    $ oc get cm/adapter-config -o yaml -n openshift-monitoring
    apiVersion: v1
    data:
      config.yaml: |-
        "resourceRules":
          :
          "memory":
            :
            "nodeQuery": |
              sum by (<<.GroupBy>>) (
                node_memory_MemTotal_bytes{job="node-exporter",<<.LabelMatchers>>}
                -
                node_memory_MemAvailable_bytes{job="node-exporter",<<.LabelMatchers>>}
              )
    :
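
The percentage calculation can be sketched as follows. The function name and the values (in MiB) are hypothetical; they only show how the same usage yields a higher MEMORY% against Allocatable than against the full capacity.

```python
def node_memory_percent(mem_total: int, mem_available: int, denominator: int) -> float:
    """(MemTotal - MemAvailable) / denominator x 100."""
    usage = mem_total - mem_available
    return usage / denominator * 100


# Hypothetical node: 16384 MiB MemTotal, 8192 MiB MemAvailable, 15260 MiB Allocatable.
mem_total, mem_available, allocatable = 16384, 8192, 15260

# Default view: percentage relative to Allocatable.
print(f"{node_memory_percent(mem_total, mem_available, allocatable):.1f}%")  # 53.7%

# --show-capacity view: percentage relative to the full capacity.
print(f"{node_memory_percent(mem_total, mem_available, mem_total):.1f}%")    # 50.0%
```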

Allocatable is not the actual total size; it is calculated with the formula above. Consequently, the MEMORY% column can exceed 100%, because the usage is divided by Allocatable, which is smaller than the actual capacity. Suppose you configure many huge pages on your nodes. The huge pages are deducted from Allocatable in advance, yet the node memory usage also counts all of them as pre-allocated memory. The huge pages are therefore effectively counted twice in the calculation, and the discrepancy is bigger than you would expect. Currently, oc adm top nodes does not adjust the metrics for this, by design, to keep the implementation simple.
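
The huge pages case can be illustrated with hypothetical numbers in MiB. The sketch assumes, as described above, that pre-allocated huge pages reduce MemAvailable and therefore appear in the node memory usage, while also being subtracted from Allocatable. All names and values are made up for illustration.

```python
capacity = 16384         # MemTotal of a hypothetical node
system_reserved = 1024
hard_eviction = 100
huge_pages = 8192        # pre-allocated huge pages
other_usage = 2048       # memory used by everything else

# Huge pages are subtracted from Allocatable ...
allocatable = capacity - system_reserved - hard_eviction - huge_pages

# ... and also show up in the usage, because they are no longer available.
usage = other_usage + huge_pages

percent_of_allocatable = usage / allocatable * 100
percent_of_capacity = usage / capacity * 100

print(allocatable)                         # 7068
print(f"{percent_of_allocatable:.1f}%")    # 144.9%
print(f"{percent_of_capacity:.1f}%")       # 62.5%
```

With these numbers the default view reports roughly 145%, while the same usage is 62.5% of the actual capacity.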

Apart from the huge pages case, the discrepancy is generally small. For better capacity planning of pods, consider the output of both oc adm top nodes and oc adm top nodes --show-capacity.

While this post focuses on monitoring the memory usage of pods and nodes, oc adm top is useful for a variety of other use cases. I hope this has helped deepen your knowledge of monitoring in OpenShift (Kubernetes).

About the author

Daein Park is an OpenShift Software Maintenance Engineer in Japan. He started his career as a Java Software Engineer and has experience with various e-commerce projects as an Infrastructure Engineer. He is also an active open source contributor.
