What is a microservice?

Microservices refer to a style of application architecture where a collection of independent services communicate through lightweight APIs.

Think of your last visit to an online retailer. You might have used the site’s search bar to browse products. That search represents a service. Maybe you also saw recommendations for related products, or added an item to your online shopping cart. Those are both services, too. Add all those microservices together and you have a fully functioning application.

A more efficient approach to application development

A microservices architecture is a cloud-native approach to building software in a way that allows for each core function within an application to exist independently.

When elements of an application are separated in this way, development and operations teams can work in tandem without getting in each other’s way. This means more developers working on the same app, at the same time, which results in less time spent in development.

Monolithic architecture vs microservices architecture

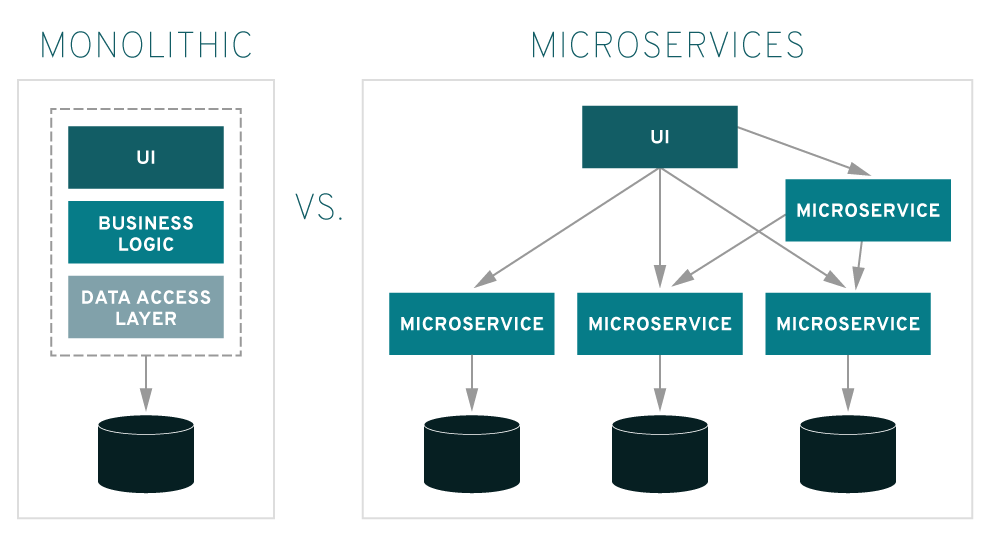

The traditional approach to building applications has focused on the monolith. In a monolithic architecture, all the functions and services within an application are locked together, operating as a single unit. When the application is added to or improved upon in any way, the architecture grows more complex. This makes it more difficult to optimize any single function within the application without taking the entire application apart. It also means that if one process within the application needs to be scaled, the entire application must be scaled as well.

In microservices architectures, applications are built so that each core function within the app runs independently. This allows development teams to build and update new components to meet changing business needs without disrupting the application as a whole.

Service-oriented architecture vs microservices architecture

Microservices architecture is an evolution of service-oriented architecture (SOA). The two approaches are similar in that they break large, complex applications into smaller components that are easier to work with. Because of their similarities, SOA and microservices architecture are often confused. The main characteristic that can help differentiate between them is their scope: SOA is an enterprise-wide approach to architecture, while microservices is an implementation strategy within application development teams.

Benefits of a microservices architecture

Microservices give your teams and routines a boost through distributed development. Because multiple microservices can be developed concurrently, more developers can work on the same app at the same time, which shortens development time.

Ready for market faster

Since development cycles are shortened, a microservices architecture supports more agile deployment and updates.

Highly scalable

As demand for certain services grows, you can deploy those services across multiple servers and infrastructures to meet your needs.
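As a rough illustration of scaling one service independently, the sketch below rotates requests across the replicas of a single service. The `RoundRobinBalancer` class and the replica addresses are hypothetical names invented for this example; in practice a load balancer or an orchestration platform usually handles this.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Spreads calls for one service across its replicas in turn."""

    def __init__(self, replicas):
        if not replicas:
            raise ValueError("at least one replica is required")
        self._replicas = list(replicas)
        self._next = cycle(self._replicas)

    def pick(self):
        # Return the next replica address in rotation.
        return next(self._next)


# Hypothetical addresses: search is under load, so it runs three replicas,
# while the cart service keeps a single instance.
search = RoundRobinBalancer(["search-1:8080", "search-2:8080", "search-3:8080"])
cart = RoundRobinBalancer(["cart-1:8080"])

search_targets = [search.pick() for _ in range(4)]
cart_targets = [cart.pick() for _ in range(2)]
```

Only the search service gains replicas here; the cart service is left as it was, which is the difference from scaling a monolith as a whole.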

Resilient

These independent services, when constructed properly, do not impact one another. This means that if one piece fails, the whole app doesn’t go down, unlike the monolithic app model.
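One way to picture this isolation is a caller that treats each service as optional and falls back to a default when a call fails. The sketch below is a minimal illustration under that assumption; the service functions, the catalog data, and `call_with_fallback` are all hypothetical, and real systems often add timeouts, retries, or circuit breakers on top.

```python
def recommendations_service(product_id):
    # Hypothetical dependency that is currently down.
    raise ConnectionError("recommendations service unavailable")


def search_service(query):
    # Hypothetical dependency that is healthy.
    catalog = ["red hat", "blue scarf", "red scarf"]
    return [item for item in catalog if query in item]


def call_with_fallback(service, argument, fallback):
    """Call a service; on a connection failure, return the fallback value."""
    try:
        return service(argument)
    except ConnectionError:
        return fallback


def render_product_page(query):
    # Each section is fetched independently, so one failure stays contained.
    return {
        "results": call_with_fallback(search_service, query, fallback=[]),
        "recommended": call_with_fallback(recommendations_service, query, fallback=[]),
    }


page = render_product_page("red")
```

The page still returns search results even though the recommendations call fails; the recommendations section is simply empty.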

Easy to deploy

Because microservice-based apps are smaller and more modular than traditional monolithic apps, many of the worries that came with monolithic deployments go away. Deploying many small services does require more coordination, which a service mesh layer can help with, but the payoffs can be huge.

Accessible

Because the larger app is broken down into smaller pieces, developers can more easily understand, update, and enhance those pieces, resulting in faster development cycles, especially when combined with agile development methodologies such as DevOps.

More open

Because services communicate through language-agnostic APIs, developers have the freedom to choose the best language and technology for each function.

Potential challenges for microservices

The flexibility that comes with microservices can create a rush to deploy new changes, which means creating new patterns. In software engineering, a “pattern” refers to a solution that is known to work. An “anti-pattern” is a common mistake made with the intention of solving a problem that can instead create more issues in the long run.

Beyond culture and process, complexity and efficiency are two major challenges of a microservice-based architecture. When working with a microservices architecture, it’s important to look out for these common anti-patterns.

- Scaling: Scaling any function within the software development lifecycle can pose challenges, especially in the beginning. During initial setup, it’s important to spend time identifying dependencies between services and to be aware of potential triggers that could break backward compatibility. When it comes time to deploy, investing in automation is critical, because the complexity of microservices becomes overwhelming for manual deployment.

- Logging: With distributed systems, you need centralized logs to bring everything together. Otherwise, the scale is impossible to manage.

- Monitoring: It’s critical to have a centralized view of the system to pinpoint sources of problems.

- Debugging: Remote debugging through your local integrated development environment (IDE) isn’t a practical option, because it won’t work across dozens or hundreds of services. Unfortunately, there’s no single answer to how to debug at this time.

- Connectivity: Consider service discovery, whether centralized or integrated.
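The logging and monitoring points in the list above can be sketched with structured logs that share a request identifier. This is a minimal illustration, not a recommended stack: the in-memory `central_sink` stands in for a real log collector, and the service names, the `request_id` field, and the helper functions are assumptions made for the example.

```python
import io
import json
import logging
import uuid


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object so a collector can parse it."""

    def format(self, record):
        return json.dumps({
            "service": record.name,
            "level": record.levelname,
            "message": record.getMessage(),
            "request_id": getattr(record, "request_id", None),
        })


# Stand-in for a central log collector; a real system would ship lines to a log store.
central_sink = io.StringIO()
handler = logging.StreamHandler(central_sink)
handler.setFormatter(JsonFormatter())


def service_logger(name):
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    if handler not in logger.handlers:
        logger.addHandler(handler)
    return logger


def handle_checkout(request_id):
    # The same request_id is passed along to every service touched by the request.
    service_logger("cart").info("cart loaded", extra={"request_id": request_id})
    service_logger("payment").info("payment authorized", extra={"request_id": request_id})


def trace(request_id):
    """Return the log entries from all services that belong to one request."""
    entries = [json.loads(line) for line in central_sink.getvalue().splitlines()]
    return [entry for entry in entries if entry["request_id"] == request_id]


request_id = str(uuid.uuid4())
handle_checkout(request_id)
handle_checkout(str(uuid.uuid4()))
```

Calling `trace(request_id)` returns only the entries for that one request, across both services, which is the kind of centralized view the list describes.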

Tools and technologies that enable microservices

Containers and Kubernetes

Kubernetes is a container orchestration platform that allows single components within an application to be updated without affecting the rest of the technology stack, which makes it well suited to automating the management, scaling, and deployment of microservices applications.

APIs

An application programming interface, or API, is the part of an application that is responsible for communicating with other applications. Within a microservices architecture, APIs play the critical role of allowing the different services to share information and function as one application.
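To make the role of an API concrete, here is a small sketch of one service exposing a JSON endpoint over HTTP and another piece of code calling it. It uses only the Python standard library; the inventory service, the `/stock/<sku>` route, and the stock data are hypothetical and chosen just for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical in-memory stock data owned by the inventory service.
STOCK = {"sku-1": 3, "sku-2": 0}


class InventoryHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Route: GET /stock/<sku> returns the stock level as JSON.
        sku = self.path.rsplit("/", 1)[-1]
        if self.path.startswith("/stock/") and sku in STOCK:
            self._send(200, {"sku": sku, "available": STOCK[sku]})
        else:
            self._send(404, {"error": "not found"})

    def _send(self, status, payload):
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # Keep the example quiet.


def start_inventory_service():
    # Port 0 asks the operating system for any free port.
    server = ThreadingHTTPServer(("127.0.0.1", 0), InventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def is_in_stock(base_url, sku):
    """Client side, as another service (for example, the cart) might call it."""
    # Bypass any proxy configured in the environment for this local call.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(f"{base_url}/stock/{sku}", timeout=5) as response:
        return json.load(response)["available"] > 0


server = start_inventory_service()
base_url = f"http://127.0.0.1:{server.server_address[1]}"
in_stock = is_in_stock(base_url, "sku-1")
out_of_stock = is_in_stock(base_url, "sku-2")
server.shutdown()
server.server_close()
```

The caller knows only the URL and the JSON shape, not how the inventory service stores its data, so either side can change its internals without affecting the other.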

Event streaming

An event can be defined as anything that happens within a microservice, for example, when someone adds an item to their online shopping cart or removes it.

Events form event streams, which reflect the changing behavior of a system. Monitoring events allows organizations to arrive at useful conclusions about data and user behavior. Because event stream processing allows immediate action to be taken, it can be used directly with operational workloads in real time. Companies are applying event streaming to everything from fraud analysis to machine maintenance.
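The shopping cart example can be sketched as a small in-memory event stream. The `CartEvent` type, the user names, and both processing functions are assumptions for illustration; a production system would typically read events from a streaming platform rather than a Python list.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CartEvent:
    user: str
    action: str  # "added" or "removed"
    item: str


def cart_contents(events, user):
    """Replay a stream of events, in order, to derive one user's current cart."""
    cart = Counter()
    for event in events:
        if event.user != user:
            continue
        if event.action == "added":
            cart[event.item] += 1
        elif event.action == "removed" and cart[event.item] > 0:
            cart[event.item] -= 1
    return {item: count for item, count in cart.items() if count > 0}


def removal_alerts(events, threshold):
    """Process events one at a time and yield users as they reach the removal threshold."""
    removals = Counter()
    for event in events:
        if event.action == "removed":
            removals[event.user] += 1
            if removals[event.user] == threshold:
                yield event.user


stream = [
    CartEvent("ana", "added", "hat"),
    CartEvent("ben", "added", "scarf"),
    CartEvent("ana", "added", "scarf"),
    CartEvent("ana", "removed", "hat"),
    CartEvent("ben", "removed", "scarf"),
    CartEvent("ana", "added", "gloves"),
    CartEvent("ana", "removed", "gloves"),
]

ana_cart = cart_contents(stream, "ana")
alerts = list(removal_alerts(stream, threshold=2))
```

The first function shows how a stream reflects changing state (the cart is derived by replaying events), and the second shows acting on events as they arrive rather than after the fact.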

Serverless computing

Serverless computing is a cloud-native development model that allows developers to build and run applications while a cloud provider is responsible for provisioning, maintaining, and scaling the server infrastructure. Developers can simply package their code in containers for deployment. Serverless helps organizations innovate faster because the application is abstracted from the underlying infrastructure.

How Red Hat can help

Red Hat’s open source solutions help you break down your monolithic applications into microservices, manage them, orchestrate them, and handle the data they create.

Red Hat OpenShift

Red Hat® OpenShift® is a Kubernetes-based platform that enables microservices by providing a uniform way to connect, manage, and observe microservices-based applications. It supports containerized, legacy, and cloud-native applications, as well as those being refactored into microservices. OpenShift integrates with Red Hat Application Services and can be used with existing automation tools like Git and Jenkins. It also incorporates an enterprise-grade Linux operating system, for greater security across your entire cluster.

Whether you’re optimizing legacy applications, migrating to the cloud, or building totally new, microservices-based solutions, Red Hat OpenShift provides those applications with a more secure and stable platform across your infrastructure.

Red Hat Runtimes

Red Hat Runtimes is a set of prebuilt, containerized runtime foundations for microservices. It supports a wide range of languages and frameworks to use when designing microservice architectures, such as Quarkus, Spring Boot, MicroProfile, and Node.js. Additionally, Red Hat Runtimes includes supporting services for fast data access with Red Hat Data Grid, and services to secure microservice APIs with Red Hat Single Sign-On.

Red Hat Integration

Red Hat Integration is a comprehensive set of integration and messaging technologies to connect applications and data across hybrid infrastructures. It is an agile, distributed, containerized, and API-centric solution. It provides service composition and orchestration, application connectivity and data transformation, real-time message streaming, and API management—all combined with a cloud-native platform and toolchain to support the full spectrum of modern application development.

Developers can use tooling like drag-and-drop services and built-in integration patterns to build microservices, while business users can use web-based tooling to develop APIs that can integrate different microservices.

Red Hat named a Leader in 2025 Gartner® Magic Quadrant™ for Container Management

Read the 2025 Gartner® Magic Quadrant™ for Container Management to learn why Red Hat OpenShift has been named a “Leader” for the 3rd year in a row.