Operators and Red Hat OpenShift Container Platform

Red Hat® OpenShift® Operators automate the creation, configuration, and management of instances of Kubernetes-native applications. Operators provide automation at every level of the stack—from managing the parts that make up the platform all the way to applications that are provided as a managed service.

Red Hat OpenShift uses the power of Operators to run the entire platform in an autonomous fashion while exposing configuration natively through Kubernetes objects, allowing for quick installation and frequent, robust updates. In addition to the automation advantages of Operators for managing the platform, Red Hat OpenShift makes it easier to find, install, and manage Operators running on your clusters.

Included in Red Hat OpenShift is the Embedded OperatorHub, a registry of certified Operators from software vendors and open source projects. Within the Embedded OperatorHub you can browse and install a library of Operators that have been verified to work with Red Hat OpenShift and that have been packaged for easy lifecycle management.

What is a Kubernetes Operator?

A Kubernetes Operator is a method of packaging, deploying and managing a Kubernetes-native application. A Kubernetes-native application is an application that is both deployed on Kubernetes and managed using the Kubernetes APIs and kubectl tooling.

An Operator is essentially a custom controller.

A controller is a core concept in Kubernetes and is implemented as a software loop that runs continuously on the Kubernetes master nodes comparing, and if necessary, reconciling the expressed desired state and the current state of an object. Objects are well known resources like Pods, Services, ConfigMaps, or PersistentVolumes. Operators apply this model at the level of entire applications and are, in effect, application-specific controllers.

The Operator is a piece of software running in a Pod on the cluster, interacting with the Kubernetes API server. It introduces new object types through Custom Resource Definitions, an extension mechanism in Kubernetes. These custom objects are the primary interface for a user; consistent with the resource-based interaction model on the Kubernetes cluster.

An Operator watches for these custom resource types and is notified about their presence or modification. When the Operator receives this notification it will start running a loop to ensure that all the required connections for the application service represented by these objects are actually available and configured in the way the user expressed in the object’s specification.

What's new, what's next in Red Hat OpenShift

The Operator Framework

The Operator Framework is an open source project that provides developers and cluster administrators tooling to accelerate development and deployment of an Operator.

The project includes the Operator software development kit (SDK) for building Kubernetes applications, a management framework for extending Kubernetes with Operators, and a catalog of existing Operators from the Kubernetes community.

Built Operators

Community Operators

With access to community Operators, developers and cluster admins can try out Operators at various maturity levels that work with any Kubernetes. Check out the community Operators on OperatorHub.io.

Certified Operators

With Red Hat OpenShift Certified Operators found in the Embedded OperatorHub, developers and cluster admins have access to a library of workloads "as-a-service," verified on Red Hat OpenShift and backed by Red Hat and its partners.

Build with the Operator SDK

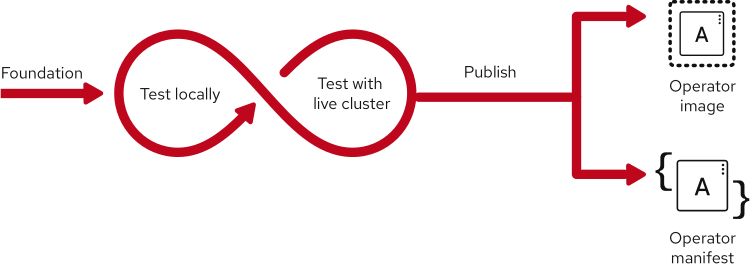

The Operator software development kit (SDK) provides the tools to build, test and package Operators. The SDK strips away a lot of the boilerplate code that is normally required to integrate with the Kubernetes API. It also provides a useable scaffolding so developers can focus on adding business logic (for example, how to scale, upgrade, or backup the application it manages). Leading practices and code patterns shared across Operators are included in the SDK to help prevent duplicating efforts. The SDK also encourages short, iterative development and test cycles with tooling that allow for basic validation of the Operator, and automated packaging for deployment using the Operator Lifecycle Manager.

Package with the Operator Lifecycle Manager

The Operator Lifecycle Manager (OLM) is the backplane that facilitates management of operators on a Kubernetes cluster. Operators that provide popular applications as a service are going to be long-lived workloads with, potentially, lots of permissions on the cluster.

With OLM, administrators can control which Operators are available in what namespaces and who can interact with running Operators. The permissions of an Operator are accurately configured automatically to follow a least-privilege approach. OLM manages the overall lifecycle of Operators and their resources, by doing things like resolving dependencies on other Operators, triggering updates to both an Operator and the application it manages, or granting a team access to an Operator for their slice of the cluster.

Simple, stateless applications can use the Lifecycle Management features of the Operator Framework—without writing any code—by using a generic Operator (for example, the Helm Operator). However, complex and stateful applications are where an Operator can be especially useful. The managed-service capabilities that are encoded into the Operator code can provide an advanced user experience, automating such features as updates, backups and scaling.

Watch a video overview of how Operators and Helm work

Measure with Operator Metering

With the metering extensions, IT teams can have greater control of their budgets and software vendors can more easily track the usage of their commercial software. Operator Metering is designed to tie into the cluster’s CPU and memory reporting, as well as calculate IaaS cost and customized metrics, like licensing.

Accelerate app development with Red Hat OpenShift

Learn how Red Hat OpenShift can help your organization build, accelerate, and scale traditional and AI-powered workloads with security and compliance.