As a cluster administrator, you can back up and restore applications running on the OpenShift Container Platform by using the OpenShift API for Data Protection (OADP).

OADP backs up and restores Kubernetes resources and internal images at the granularity of a namespace by using the version of Velero that is appropriate for the version of OADP you install, according to the table in Downloading the Velero CLI tool.

OADP backs up and restores persistent volumes (PVs) using snapshots or Restic. For details, see OADP features. This guide uses Restic for volume backup/restore.

Leverage OADP to recover from any of the following situations:

- Cross Cluster Backup/Restore

  - You have a cluster that is in an irreparable state.

  - You have lost the majority of your control plane hosts, leading to etcd quorum loss.

  - Your cloud/data center region is down.

  - You simply want or need to move to another OCP cluster.

- In-Place Cluster Backup/Restore

  - You have deleted something critical in the cluster by mistake.

  - You need to recover from workload data corruption within the same cluster.

The guide discusses disaster recovery in the context of implementing application backup and restore. Read the Additional Learning Resources section at the end of the article to get a broader understanding of DR in general.

Objective

The goal is to demonstrate a cross-cluster Backup and Restore by backing up applications in a cluster running in the AWS us-east-2 region and restoring them on a cluster deployed in the AWS eu-west-1 region. You could also use this guide to set up an in-place backup and restore. I’ll point out the setup differences below.

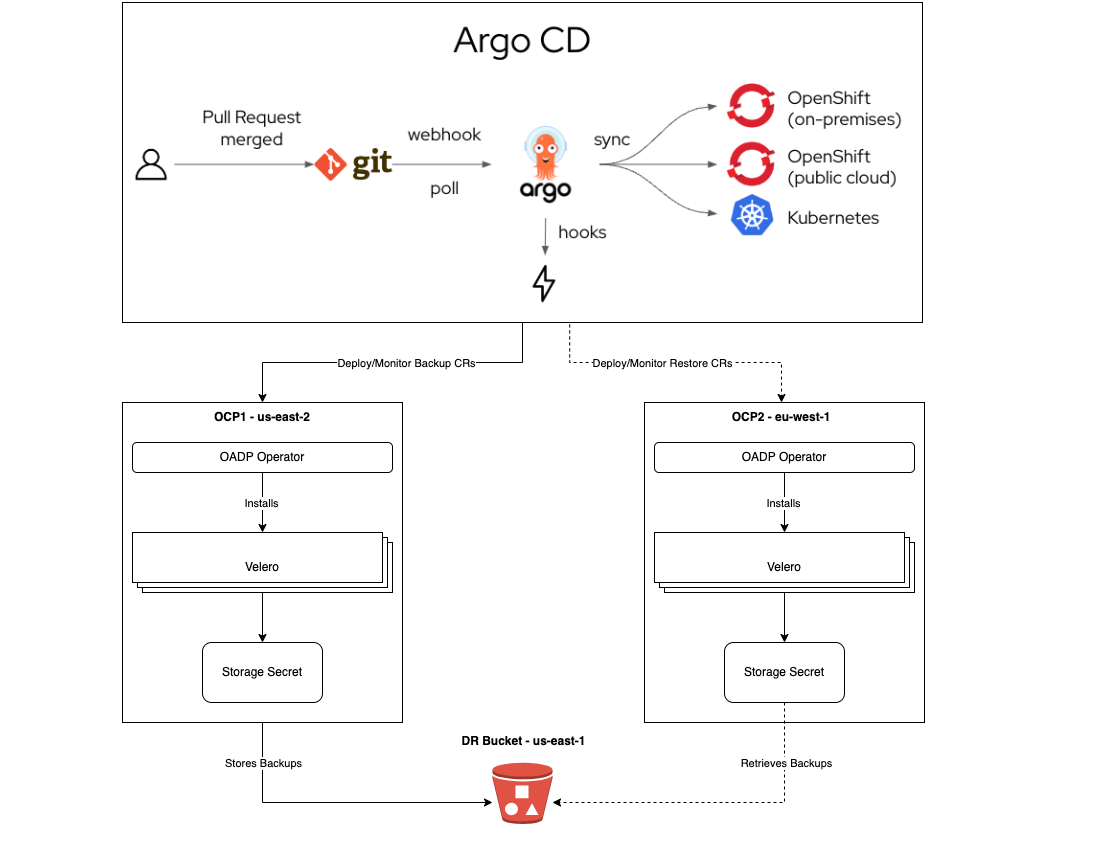

Here's the architecture for the demonstration:

This guide assumes an up-and-running OpenShift cluster whose default storage solution implements the Container Storage Interface (CSI). It supplements, rather than replaces, application CI/CD best practices: you should still rely on a fast, consistent, and repeatable Continuous Deployment/Delivery process to restore lost or deleted Kubernetes API objects.

Prerequisites

Cluster Storage Solution

- Confirm the storage solution implements the Container Storage Interface (CSI).

  - This must be true for source (backup from) and target (restore to) clusters.

- Storage solution supports StorageClass and VolumeSnapshotClass API objects.

- StorageClass is configured as Default, and it is the only one with this status.

  - Annotate it with: storageclass.kubernetes.io/is-default-class: 'true'

- VolumeSnapshotClass is configured as Default, and it is the only one with this status.

  - Annotate: snapshot.storage.kubernetes.io/is-default-class: 'true'

  - Label: velero.io/csi-volumesnapshot-class: 'true'
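The annotations and label above can be applied with `oc annotate` and `oc label`. The following is a minimal sketch, not part of the original setup: the function name and the example class names are hypothetical, and it assumes you are already logged in to the cluster with sufficient privileges.

```shell
# Sketch: mark one StorageClass and one VolumeSnapshotClass as the defaults.
# The function name and the class names in the example are illustrative.

mark_default_classes() {
  local storage_class="$1"
  local snapshot_class="$2"

  # Default StorageClass annotation
  oc annotate storageclass "$storage_class" \
    storageclass.kubernetes.io/is-default-class=true --overwrite

  # Default VolumeSnapshotClass annotation
  oc annotate volumesnapshotclass "$snapshot_class" \
    snapshot.storage.kubernetes.io/is-default-class=true --overwrite

  # Label listed in the prerequisites for Velero CSI snapshots
  oc label volumesnapshotclass "$snapshot_class" \
    velero.io/csi-volumesnapshot-class=true --overwrite
}

# Example (placeholder class names):
# mark_default_classes gp3-csi csi-aws-vsc
```

The sketch does not remove the default flag from other classes, so review the output of `oc get storageclass` and `oc get volumesnapshotclass` afterwards to confirm only one of each is marked as default.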

Bastion (CI/CD) Host

- Linux packages: yq, jq, python3, python3-pip, nfs-utils, openshift-cli, aws-cli

- Add the following alias to your shell profile (for example, ~/.bashrc):

alias velero='oc -n openshift-adp exec deployment/velero -c velero -it -- ./velero'

- Permission to run commands with sudo

- Argo CD CLI installed

OpenShift

- You must have an S3-compatible object storage.

- You must have the cluster-admin role.

- An up-and-running OpenShift GitOps instance. To learn more, follow the steps described in Installing Red Hat OpenShift GitOps.

  - The OpenShift GitOps instance should be installed in an Ops (Tooling) cluster.

  - To protect against regional failure, the Operations cluster should run in a region different from the BACKUP cluster region.

    - For example, if the BACKUP cluster runs in us-east-1, the Operations cluster should be deployed in us-east-2 or us-west-1.

- Applications being backed up should be healthy.

Procedure

Setup: OpenShift GitOps

1. Log in (CLI) to the OpenShift cluster where GitOps is or will be running:

oc login --token=SA_TOKEN --server=API_SERVER

2. Log in to the ArgoCD CLI:

ARGO_PASS=$(oc get secret/openshift-gitops-cluster -n openshift-gitops -o jsonpath='{.data.admin\.password}' | base64 -d)

ARGO_URL=$(oc get route openshift-gitops-server -n openshift-gitops -o jsonpath='{.spec.host}{"\n"}')

argocd login --insecure --grpc-web $ARGO_URL --username admin --password $ARGO_PASS

3. Add the OADP CRs repository.

- Generate a read-only Personal Access Token (PAT) from your GitHub account or any other Git solution provider.

- Using the username (for example, git) and the PAT as the password, register the repository to ArgoCD:

argocd repo add https://github.com/luqmanbarry/k8s-apps-backup-restore.git \
  --name CHANGE_ME \
  --username git \
  --password PAT_TOKEN \
  --upsert \
  --insecure-skip-server-verification

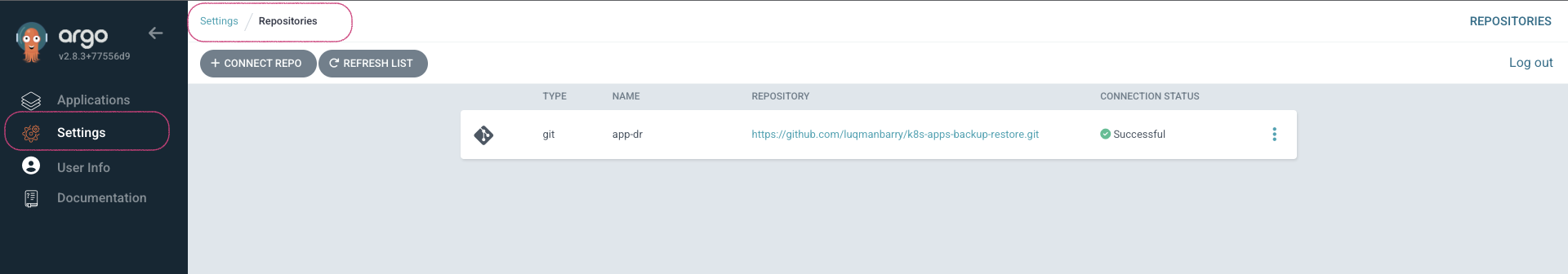

If there are no ArgoCD RBAC issues, the repository should show up in the ArgoCD web console, and the outcome should look like this:

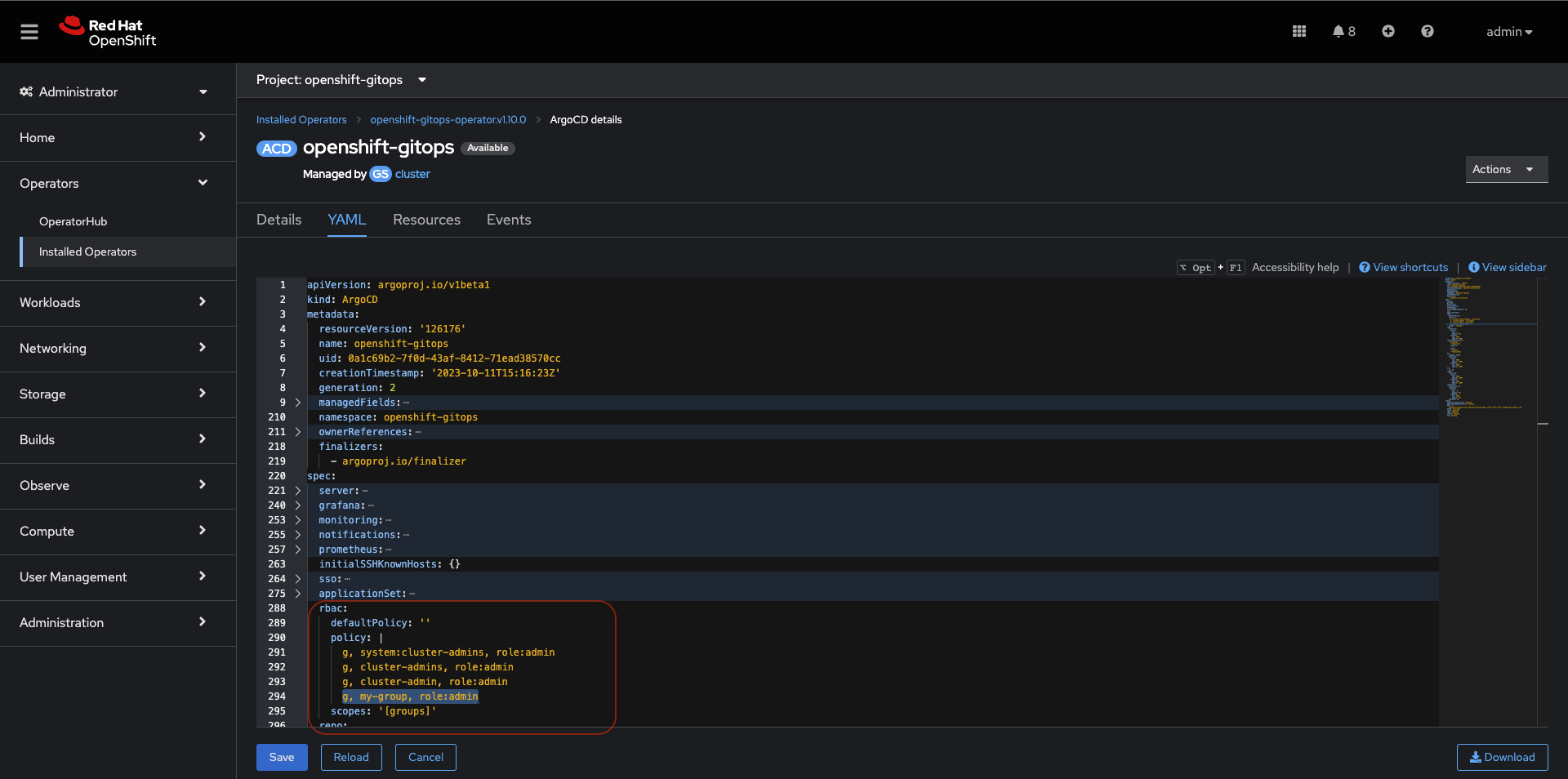

If the repository does not appear in the ArgoCD UI, look at the openshift-gitops-server-xxxx-xxxx pod logs. If you find error messages such as repository: permission denied: repositories, create, they are most likely related to RBAC issues; edit the ArgoCD custom resource to add the group/user with required resources and actions.

For example:
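As a minimal sketch of such a policy: ArgoCD RBAC rules are CSV lines, where `p` lines grant a role an action on a resource and `g` lines bind a group to a role. The group name `dr-admins` and role name `repo-admin` below are hypothetical, and the helper only prints the policy text; it assumes you then paste the output under `spec.rbac.policy` of your ArgoCD custom resource.

```shell
# Sketch: print an ArgoCD RBAC policy that lets a group manage repositories.
# The default role name and the example group name are placeholders.

build_repo_policy() {
  local group="$1"
  local role="role:${2:-repo-admin}"

  # p = permission line: role, resource, action, object, effect
  printf 'p, %s, repositories, get, *, allow\n' "$role"
  printf 'p, %s, repositories, create, *, allow\n' "$role"
  printf 'p, %s, repositories, update, *, allow\n' "$role"

  # g = binding line: group, role
  printf 'g, %s, %s\n' "$group" "$role"
}

# Example (placeholder group name):
# build_repo_policy dr-admins
```

Printing the policy instead of patching the resource keeps the sketch side-effect free; replacing `spec.rbac.policy` overwrites any existing rules, so merge the output with what is already there.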

Click on ArgoCD RBAC Configuration to learn more.

4. Add the Source (BACKUP) OpenShift Cluster

Use an account (a ServiceAccount is recommended) with permission to create Projects, OperatorGroups, and Subscriptions resources.

Log in to the BACKUP cluster:

BACKUP_CLUSTER_SA_TOKEN=CHANGE_ME

BACKUP_CLUSTER_API_SERVER=CHANGE_ME

oc login --token=$BACKUP_CLUSTER_SA_TOKEN --server=$BACKUP_CLUSTER_API_SERVER

Add the BACKUP cluster to ArgoCD:

BACKUP_CLUSTER_KUBE_CONTEXT=$(oc config current-context)

BACKUP_ARGO_CLUSTER_NAME="apps-dr-backup"

argocd cluster add $BACKUP_CLUSTER_KUBE_CONTEXT \
  --kubeconfig $HOME/.kube/config \
  --name $BACKUP_ARGO_CLUSTER_NAME \
  --yes

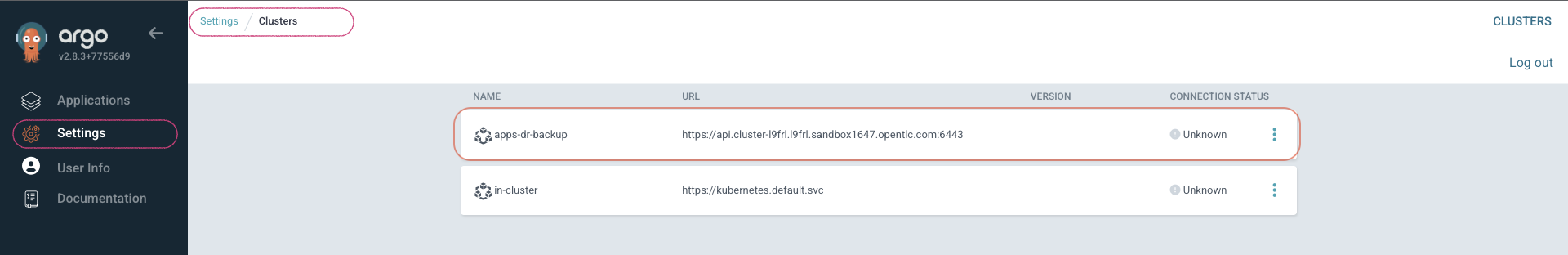

If things go as they should, the outcome should look like this:

Setup: S3-compatible Object Storage

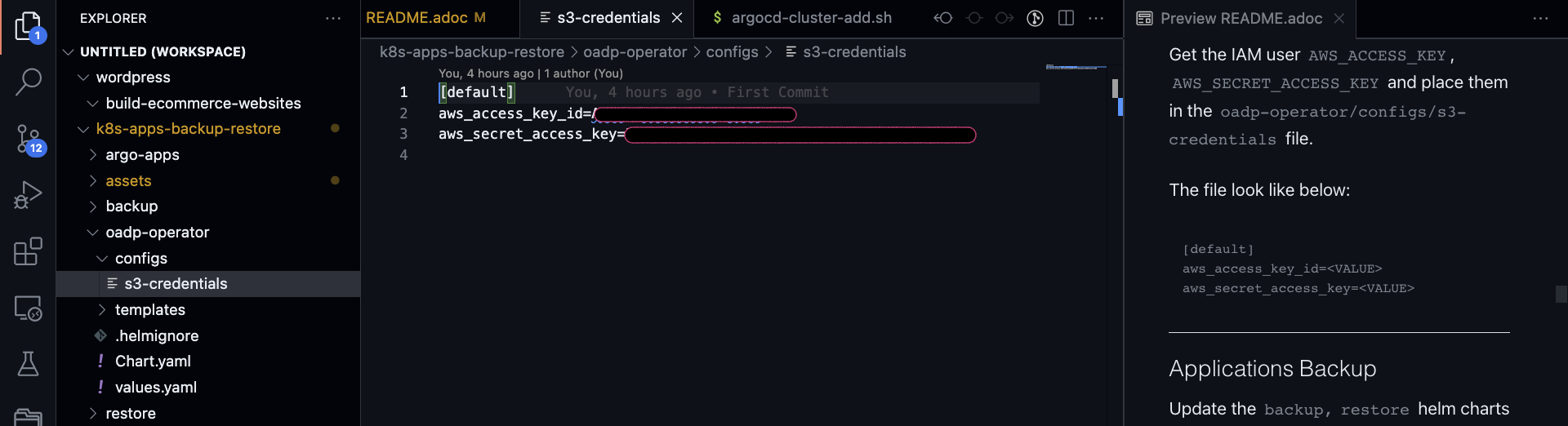

You should use an IAM account with read/write permissions for just this specific S3 bucket. For simplicity, I placed the S3 credentials in the oadp-operator Helm chart; however, AWS credentials should be injected at deploy time rather than stored in Git.

Get the IAM user's AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY, and place them in the oadp-operator/configs/s3-credentials file.

The file looks like the following:
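A minimal sketch, assuming the file follows the AWS shared-credentials (INI) layout with a `default` profile, which is the layout Velero's AWS plugin commonly reads. Whether the oadp-operator chart expects exactly this profile name is an assumption to verify against the chart; the helper name is hypothetical.

```shell
# Sketch: write S3 credentials in the AWS shared-credentials (INI) layout.
# Assumption: the chart reads a "default" profile from this file.

write_s3_credentials() {
  local target_file="$1"
  local access_key_id="$2"
  local secret_access_key="$3"

  # Subshell keeps the restrictive umask from leaking into the caller;
  # it only affects the permissions of a newly created file.
  (
    umask 077
    printf '[default]\naws_access_key_id=%s\naws_secret_access_key=%s\n' \
      "$access_key_id" "$secret_access_key" > "$target_file"
  )
}

# Example (values come from your environment, not from Git):
# write_s3_credentials oadp-operator/configs/s3-credentials \
#   "$AWS_ACCESS_KEY_ID" "$AWS_SECRET_ACCESS_KEY"
```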

Both the backup Helm chart and restore Helm chart deploy the OADP operator defined in the oadp-operator Helm chart as a dependency.

Once the S3 credentials are set, update the backup Helm chart and restore Helm chart dependencies. You only need to do it once per S3 bucket.

cd backup

helm dependency update

helm lint

cd ../restore

helm dependency update

helm lint

You are ready to proceed to the next steps if no errors occur.

Deploy the sample apps used for testing the backup

I have prepared two OpenShift templates that will deploy two stateful apps (Deployment and DeploymentConfig) in the web-app1 and web-app2 namespaces.

Log in to the BACKUP cluster:

BACKUP_CLUSTER_SA_TOKEN=CHANGE_ME

BACKUP_CLUSTER_API_SERVER=CHANGE_ME

oc login --token=$BACKUP_CLUSTER_SA_TOKEN --server=$BACKUP_CLUSTER_API_SERVER

# Web App1

oc process -f sample-apps/web-app1.yaml -o yaml | oc apply -f -

sleep 10

oc get deployment,pod,svc,route,pvc -n web-app1

# Web App2

oc process -f sample-apps/web-app2.yaml -o yaml | oc apply -f -

sleep 10

oc get deploymentconfig,pod,svc,route,pvc -n web-app2
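The fixed `sleep 10` above is only a guess at how long the pods take to start. As an alternative, the following sketch waits until the workloads report ready; the function names and the 180-second default timeout are assumptions chosen for illustration.

```shell
# Sketch: wait for workloads to become ready instead of sleeping.
# Function names and the default timeout are illustrative.

wait_for_deployments() {
  local namespace="$1"
  local timeout="${2:-180s}"
  # Blocks until every Deployment in the namespace is Available
  oc wait deployment --all -n "$namespace" \
    --for=condition=Available --timeout="$timeout"
}

wait_for_deploymentconfigs() {
  local namespace="$1"
  local timeout="${2:-180s}"
  local dc
  # "oc get -o name" prints one resource reference per DeploymentConfig
  for dc in $(oc get deploymentconfig -n "$namespace" -o name); do
    oc rollout status "$dc" -n "$namespace" --timeout="$timeout" || return 1
  done
}

# Example:
# wait_for_deployments web-app1
# wait_for_deploymentconfigs web-app2
```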

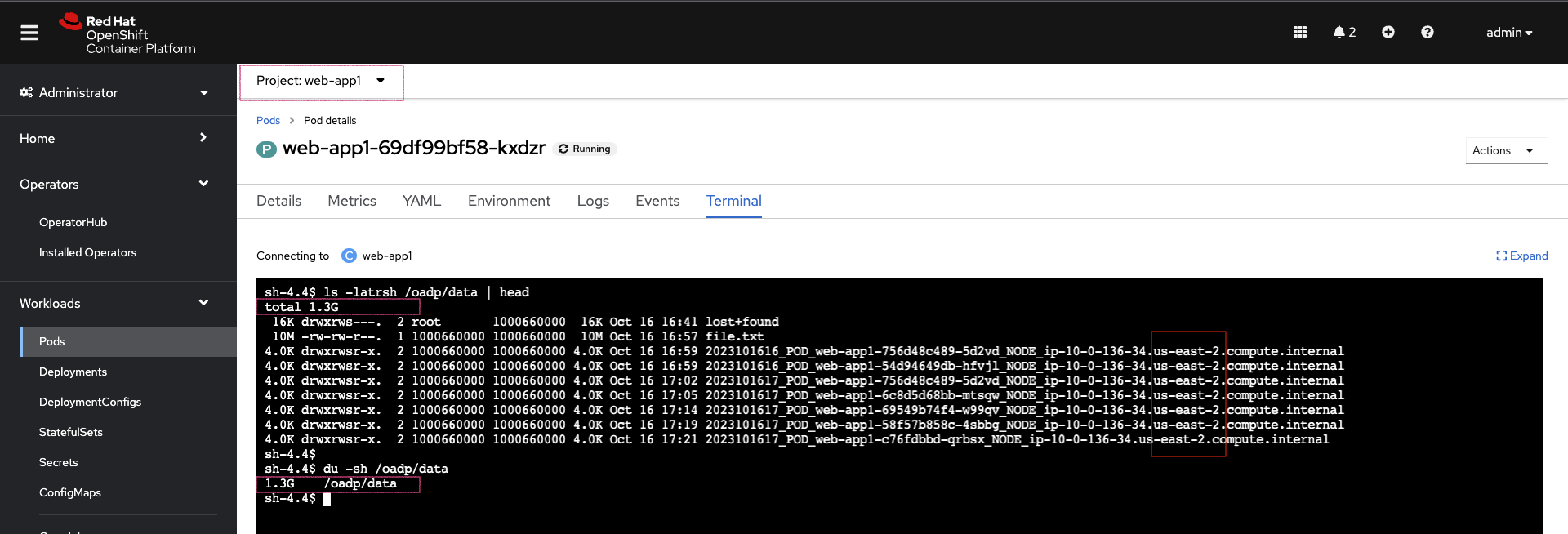

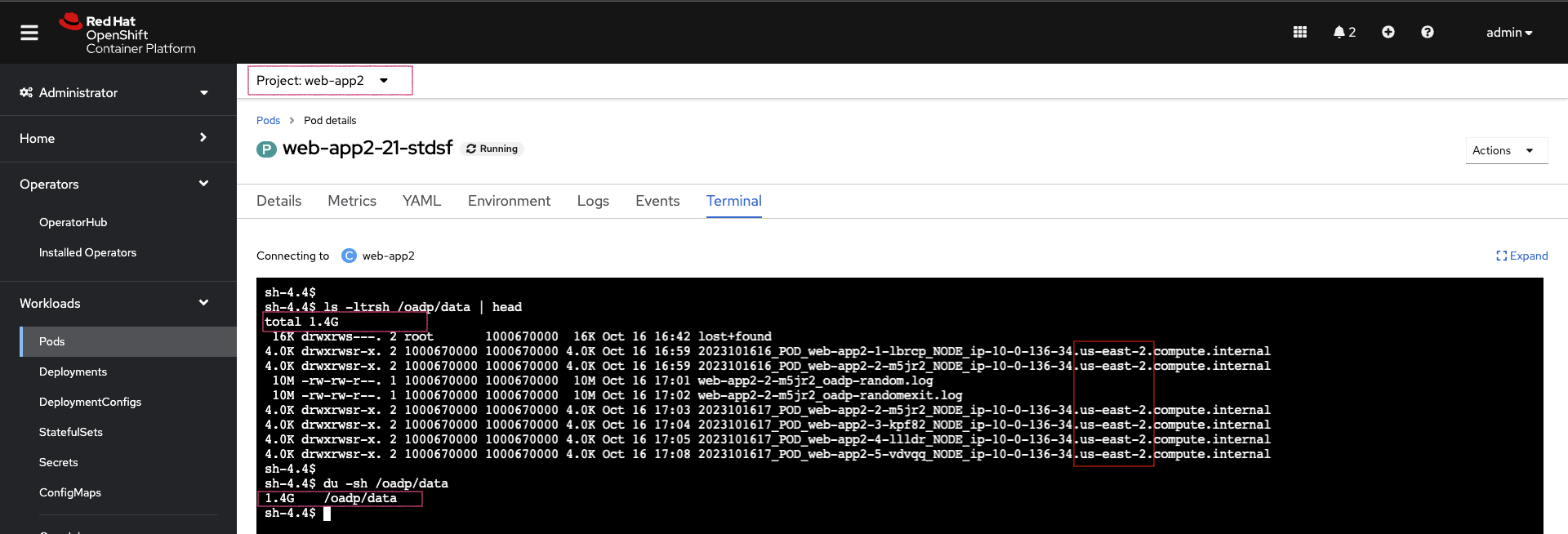

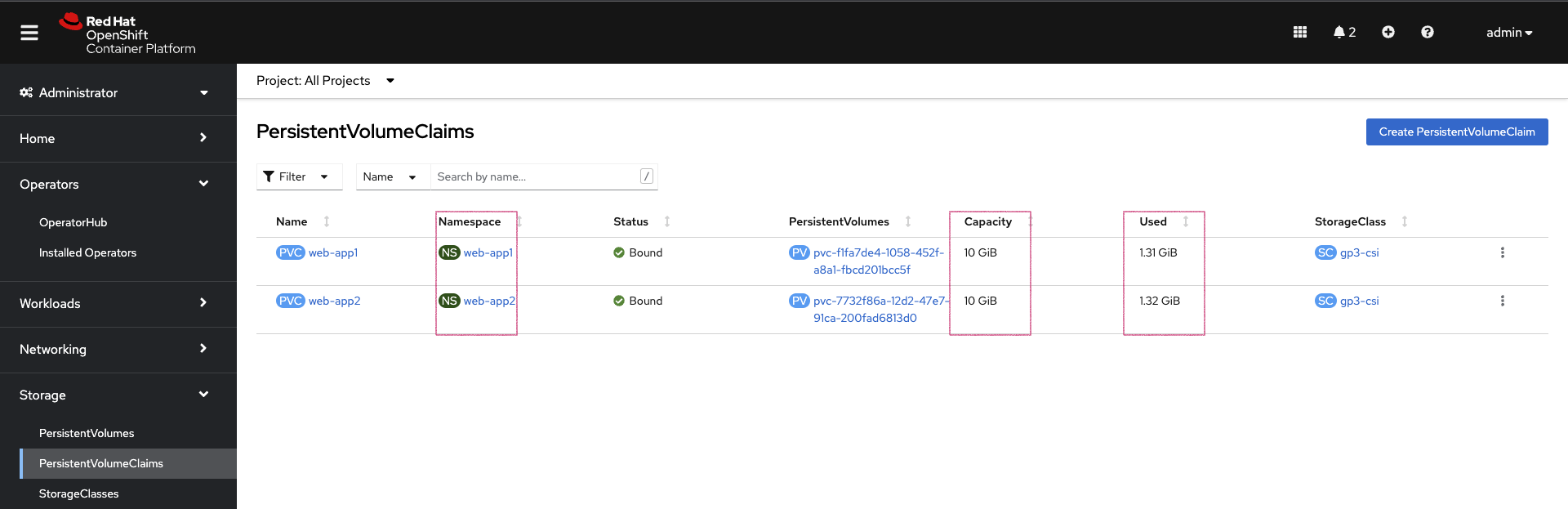

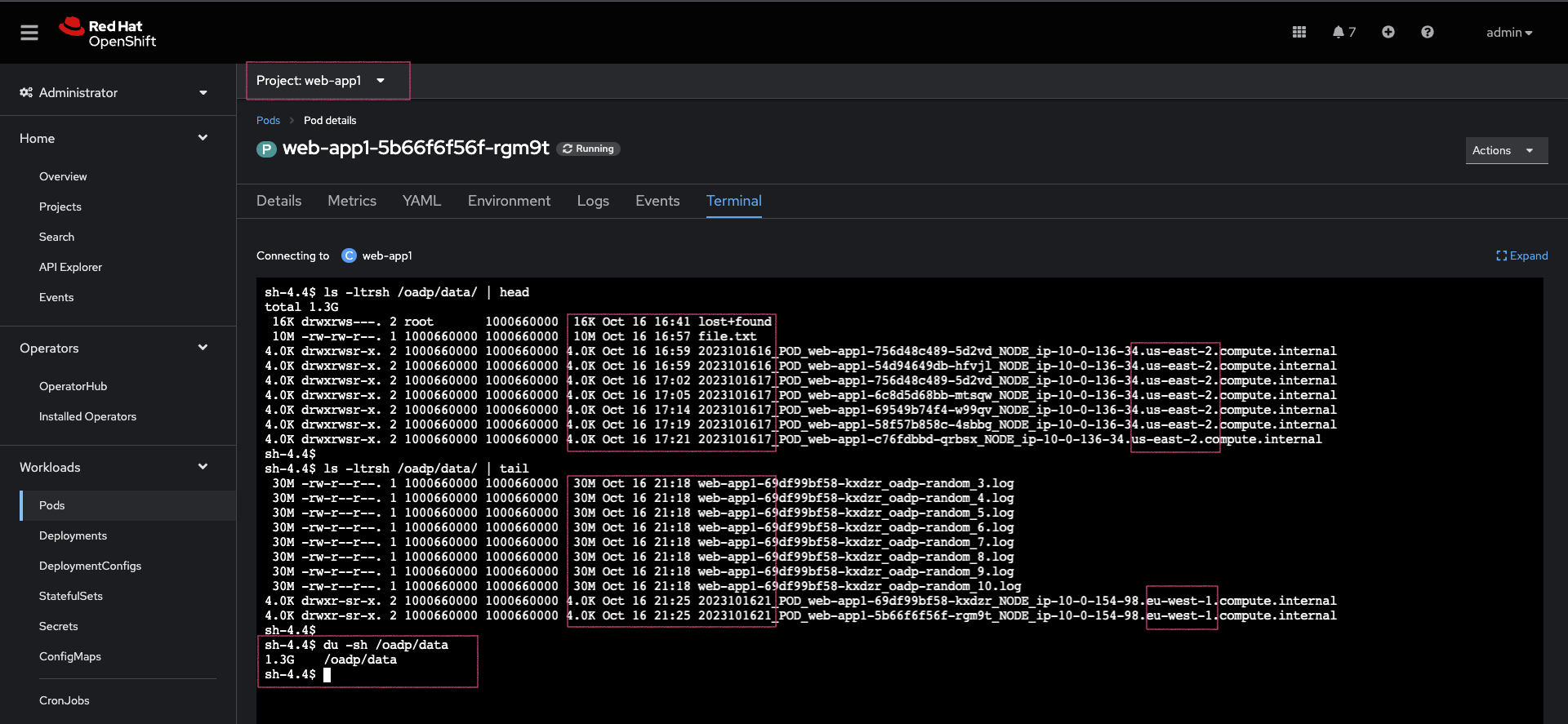

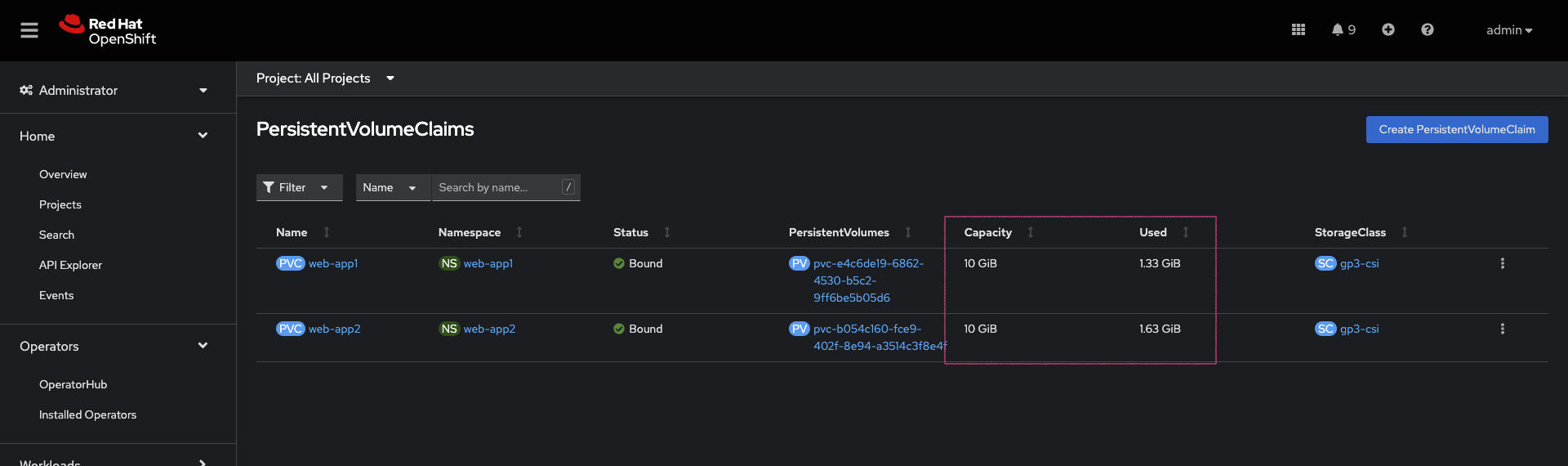

The screenshots above show sample web-app1 and web-app2 volume data before the backup starts. Every time the pods spin up, the entry command generates about 30 MB of data. To generate more data, delete the pods a few times.

After the restore, along with application resources, we expect this same data to be present on the restore cluster volumes.

Applications Backup

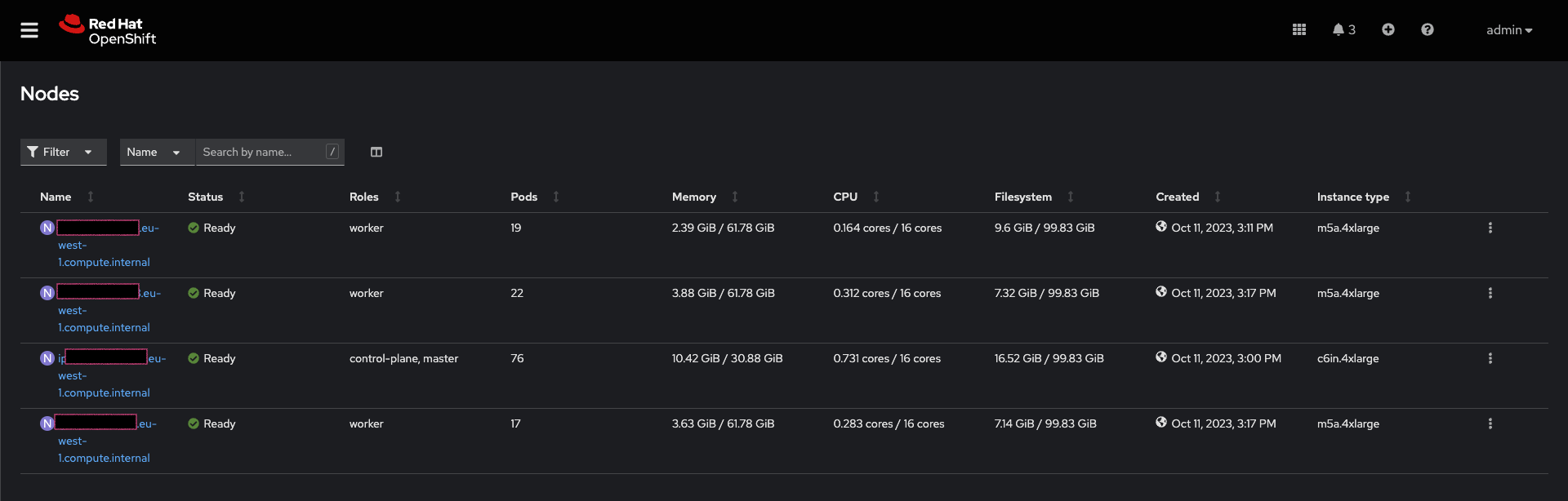

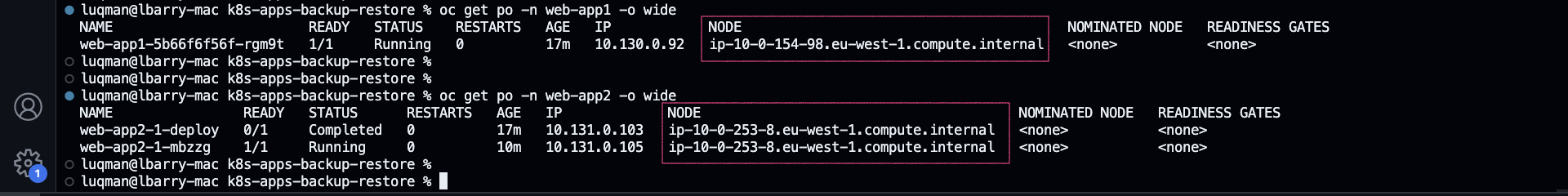

As you can see in the node name column, the BACKUP cluster is running in the us-east-2 region.

1. Prepare the ArgoCD Application used for backup

The ArgoCD Application is defined in argocd-applications/apps-dr-backup.yaml.

Make the necessary changes, and pay special attention to spec.source: and spec.destination:

spec:
  project: default
  source:
    repoURL: https://github.com/luqmanbarry/k8s-apps-backup-restore.git
    targetRevision: main
    path: backup
  # Destination cluster and namespace to deploy the application
  destination:
    # cluster API URL
    name: apps-dr-backup
    # name: in-cluster
    namespace: openshift-adp

2. Prepare the backup Helm chart

Update the backup/values.yaml file to provide the following:

- S3 bucket name

- Namespace list

- Backup schedule

For example:

global:
  operatorUpdateChannel: stable-1.2 # OADP Operator Subscription Channel
  inRestoreMode: false
  resourceNamePrefix: apps-dr-guide # OADP CR instances name prefix
  storage:
    provider: aws
    s3:
      bucket: apps-dr-guide # S3 BUCKET NAME
      dirPrefix: oadp
      region: us-east-1

backup:
  cronSchedule: "*/30 * * * *" # Cron Schedule - For assistance, use https://crontab.guru
  excludedNamespaces: []
  includedNamespaces:
    - web-app1
    - web-app2

Commit and push your changes to the git branch specified in the ArgoCD Application.spec.source.targetRevision. You could use a Pull Request (recommended) to update the branch being monitored by ArgoCD.

3. Create the apps-dr-backup ArgoCD Application

Log on to the OCP cluster where the GitOps instance is running:

OCP_CLUSTER_SA_TOKEN=CHANGE_ME

OCP_CLUSTER_API_SERVER=CHANGE_ME

oc login --token=$OCP_CLUSTER_SA_TOKEN --server=$OCP_CLUSTER_API_SERVER

Apply the ArgoCD argocd-applications/apps-dr-backup.yaml manifest.

oc apply -f argocd-applications/apps-dr-backup.yaml

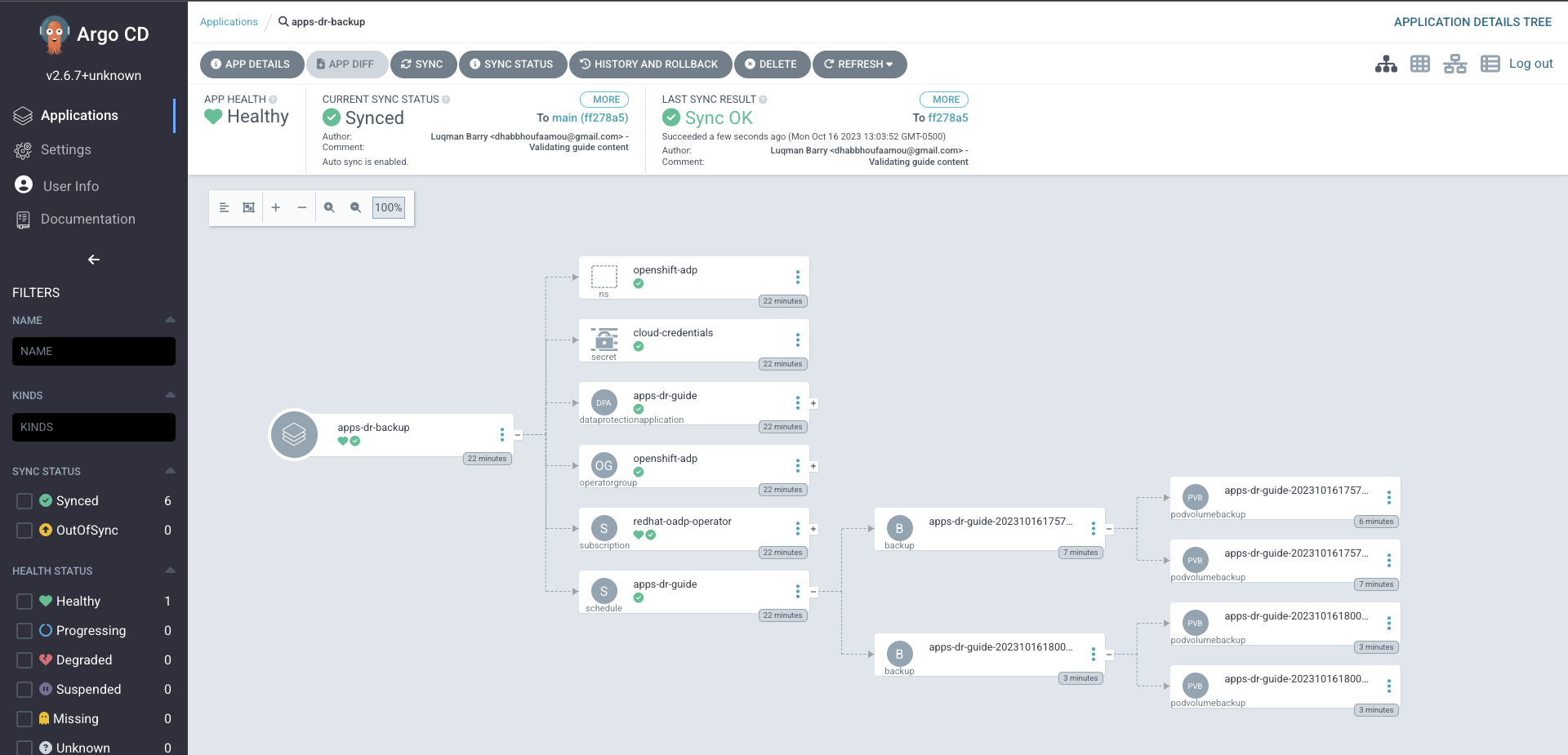

4. Inspect the Argo CD UI to verify resources are syncing

If things go as they should, the outcome should look like the image below:

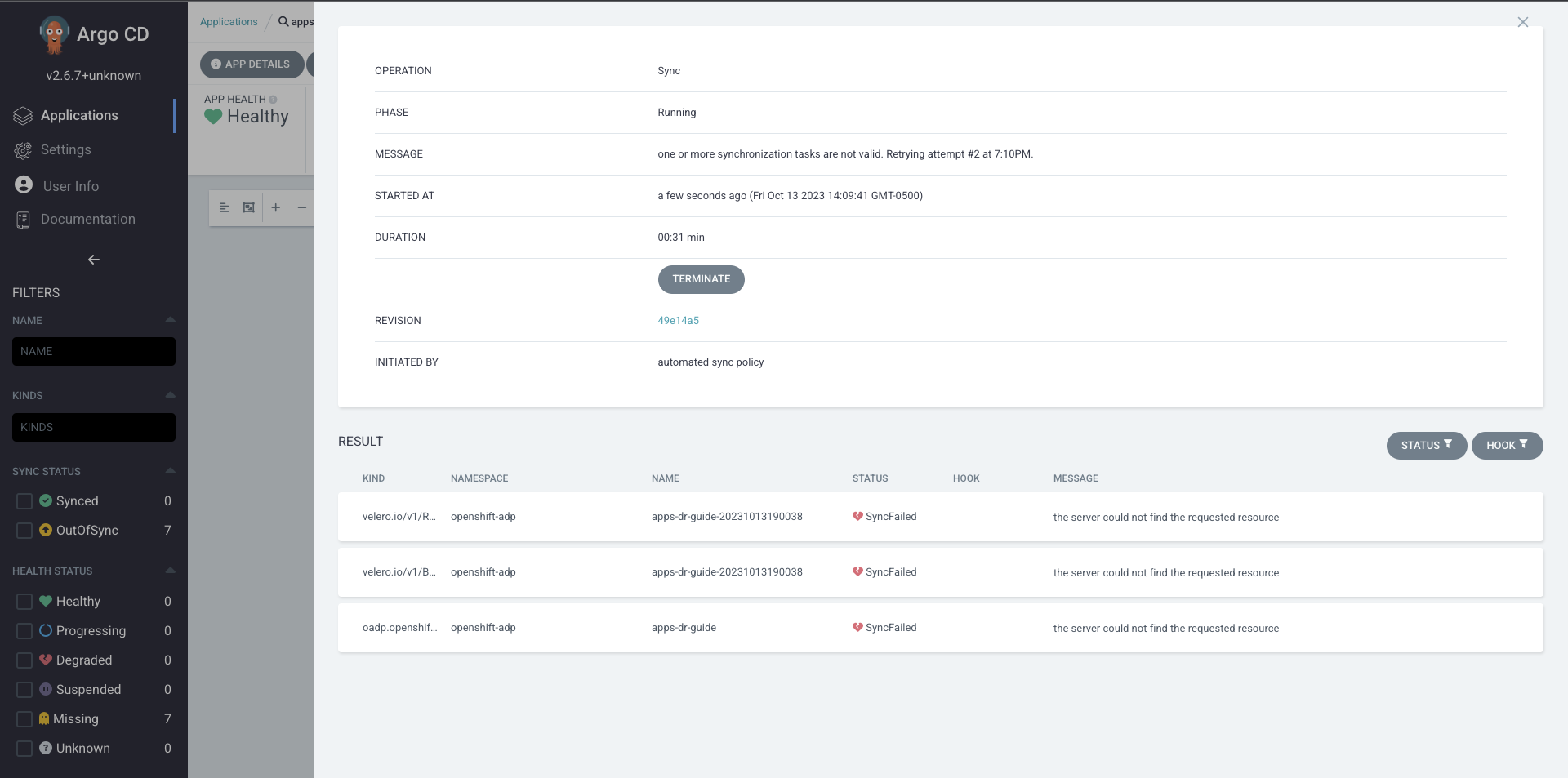
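The same check can be scripted with the Argo CD CLI. A minimal sketch, assuming you are still logged in to the ArgoCD CLI and that the Application is named apps-dr-backup; the function name and the 300-second default timeout are chosen for illustration.

```shell
# Sketch: block until an ArgoCD Application is synced and healthy.
# The function name and default timeout are illustrative.

wait_for_argocd_app() {
  local app_name="$1"
  local timeout_seconds="${2:-300}"
  # Returns non-zero if the app is not synced and healthy within the timeout
  argocd app wait "$app_name" --sync --health --timeout "$timeout_seconds"
}

# Example:
# wait_for_argocd_app apps-dr-backup
```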

If the sync fails with errors saying the DataProtectionApplication or Schedule CRDs were not found, as shown below, install the OADP Operator manually, then uninstall it and delete the openshift-adp namespace.

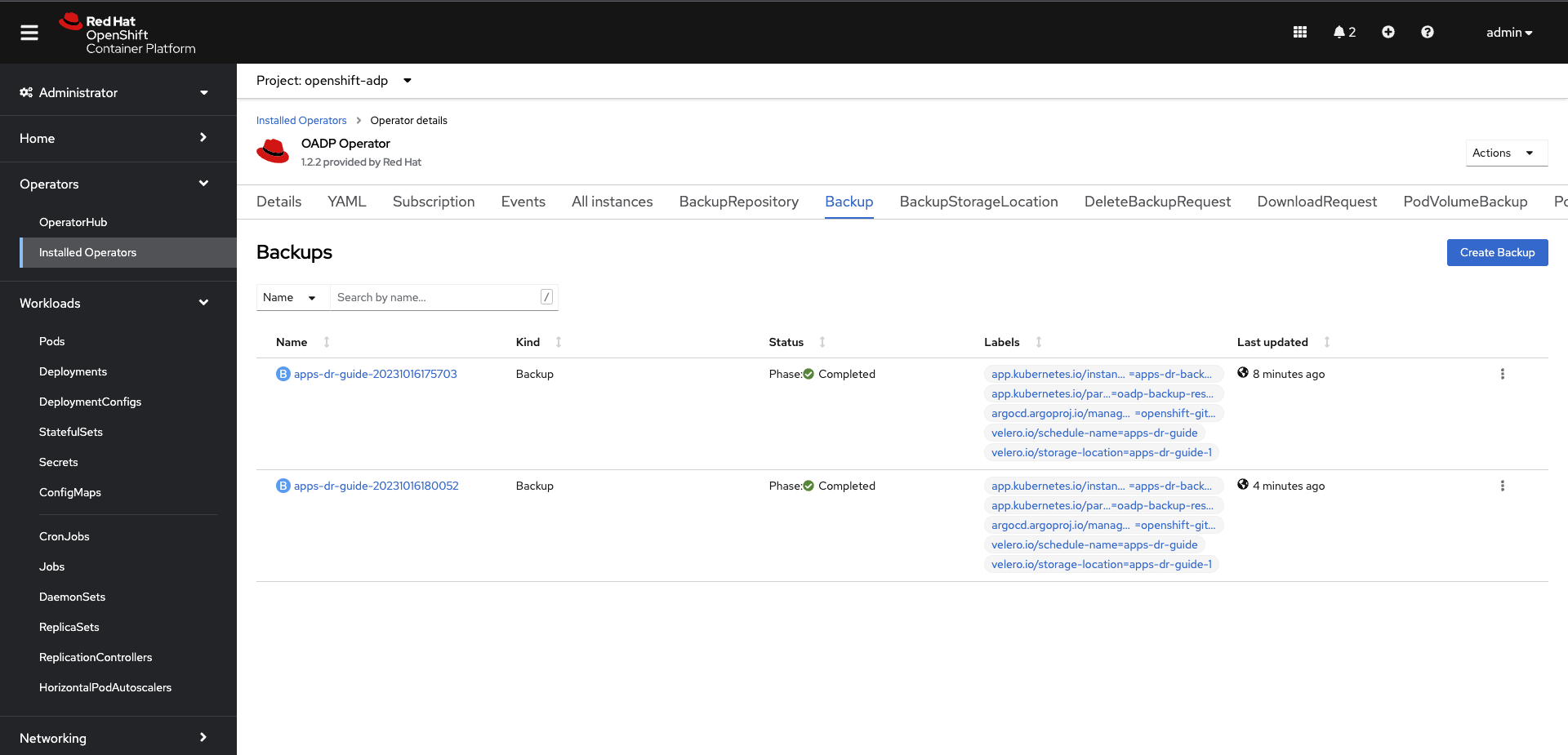

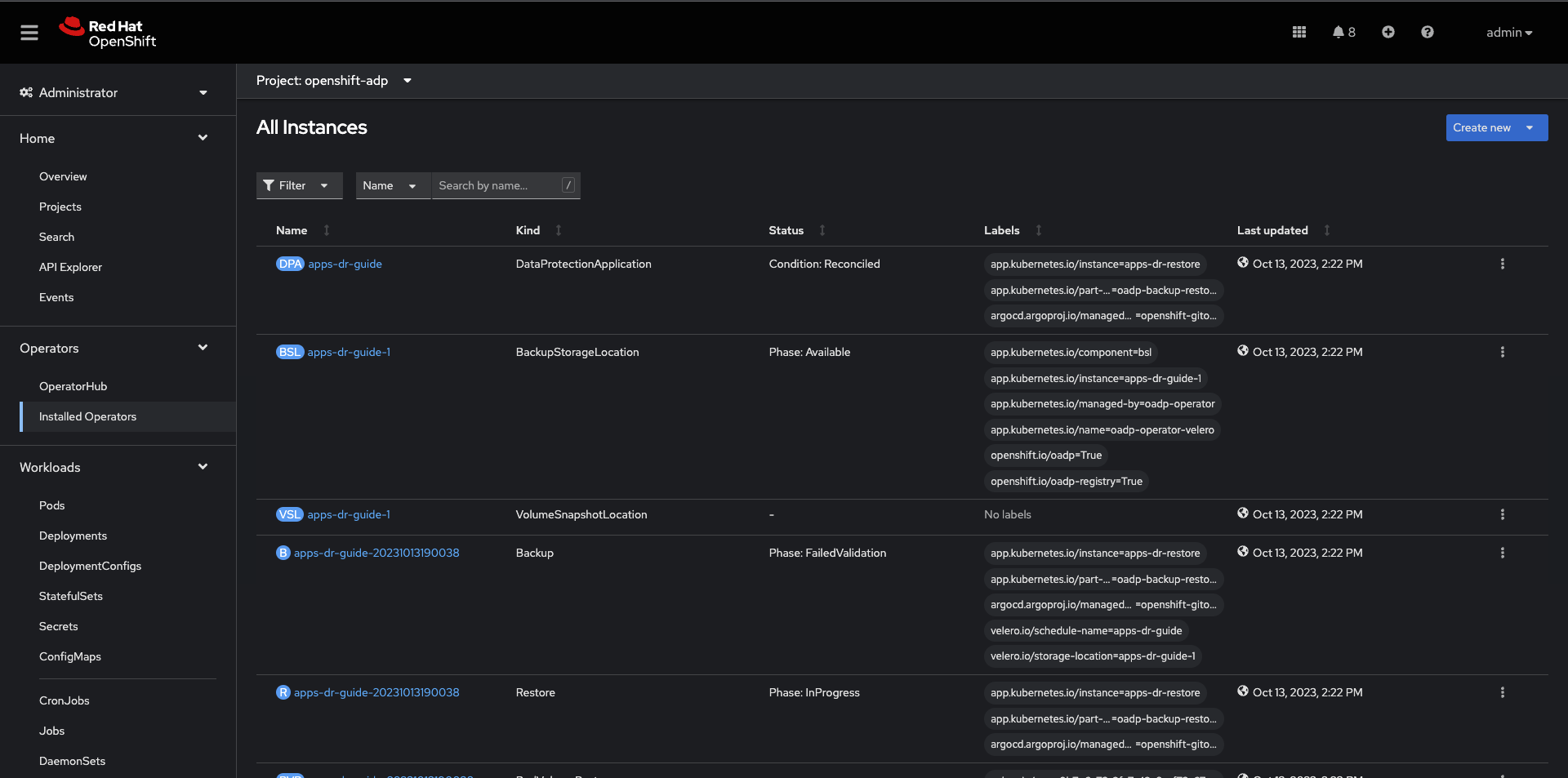

4. Verify Apps Backed Up - OpenShift Backup Cluster

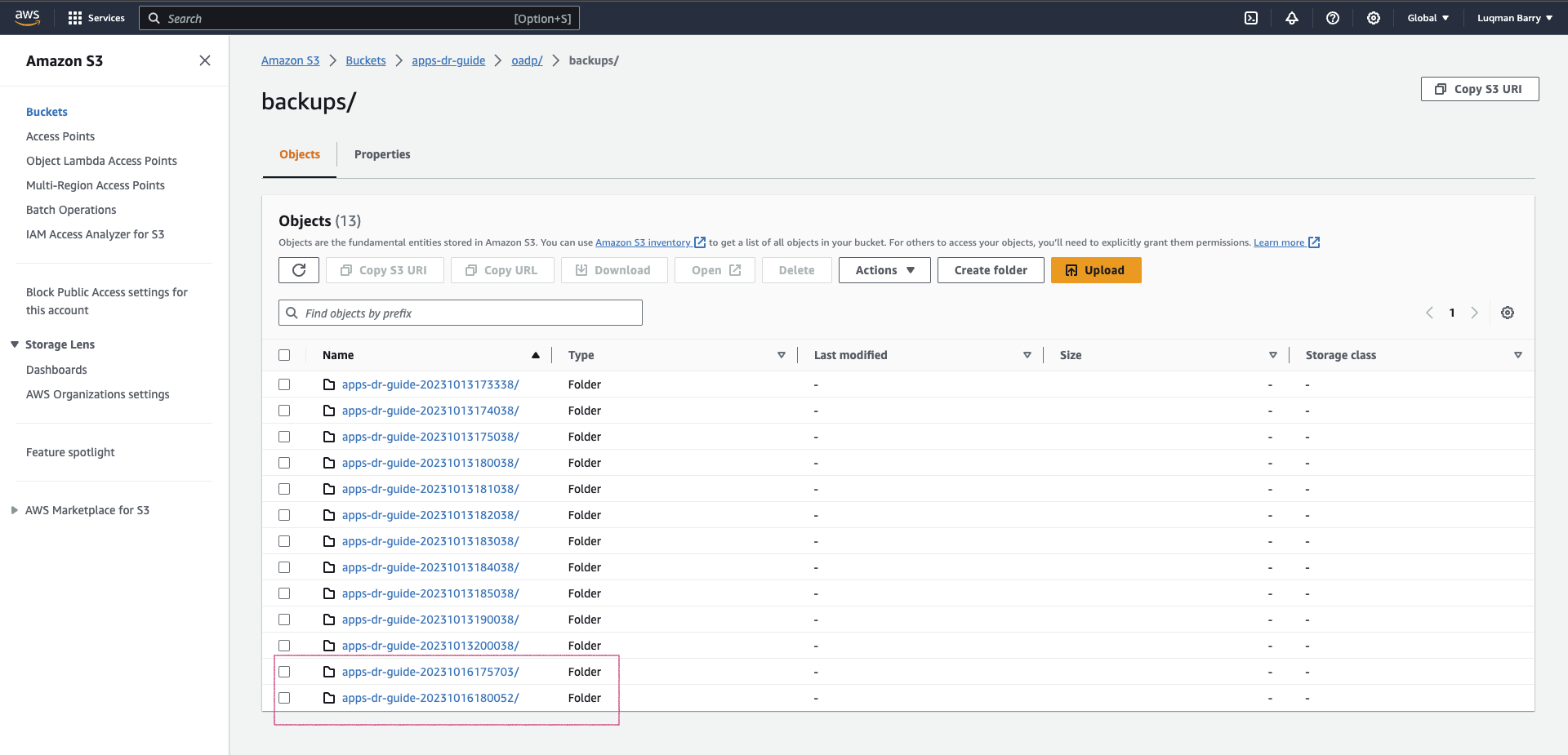

5. Verify Apps Backed Up - S3

After a successful backup, resources will be saved in S3. For example, the backup directory content will look like the following:

Follow the Troubleshooting guide at the bottom of the page if the OADP Operator Backup status changes to Failed or PartiallyFailed.
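The backup status can also be read from the command line. The sketch below queries the phase of a Velero Backup resource in the openshift-adp namespace and treats only Completed as usable for a restore; the function names are hypothetical.

```shell
# Sketch: check whether a Velero Backup finished cleanly.
# Function names are illustrative.

backup_phase() {
  # Prints the phase, e.g. InProgress, Completed, PartiallyFailed, Failed
  oc get backups.velero.io "$1" -n openshift-adp \
    -o jsonpath='{.status.phase}'
}

backup_is_restorable() {
  local phase
  phase="$(backup_phase "$1")"
  case "$phase" in
    Completed) return 0 ;;
    *)
      echo "Backup $1 is in phase '${phase:-unknown}'" >&2
      return 1
      ;;
  esac
}

# Example:
# backup_is_restorable apps-dr-guide-20231016200052 && echo "ready to restore"
```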

Applications Restore

As you can see in the node name column, the RESTORE cluster is running in the eu-west-1 region.

If the RESTORE cluster is the same as the BACKUP cluster, skip steps 1, 2, 3, and 4, and start at step 5.

1. Setup: Install the "OpenShift GitOps" Operator.

2. Setup: Add the OADP CRs repository.

Log in to the RESTORE cluster:

RESTORE_CLUSTER_SA_TOKEN=CHANGE_ME

RESTORE_CLUSTER_API_SERVER=CHANGE_ME

oc login --token=$RESTORE_CLUSTER_SA_TOKEN --server=$RESTORE_CLUSTER_API_SERVER

Log in to the ArgoCD CLI:

ARGO_PASS=$(oc get secret/openshift-gitops-cluster -n openshift-gitops -o jsonpath='{.data.admin\.password}' | base64 -d)

ARGO_URL=$(oc get route openshift-gitops-server -n openshift-gitops -o jsonpath='{.spec.host}{"\n"}')

argocd login --insecure --grpc-web $ARGO_URL --username admin --password $ARGO_PASS

Add the OADP configs repository:

argocd repo add https://github.com/luqmanbarry/k8s-apps-backup-restore.git \
  --name CHANGE_ME \
  --username git \
  --password PAT_TOKEN \
  --upsert \
  --insecure-skip-server-verification

If there are no ArgoCD RBAC issues, the repository should show up in the ArgoCD web console, and the outcome should look like this:

3. Setup: Add the Recovery OpenShift Cluster

RESTORE_CLUSTER_KUBE_CONTEXT=$(oc config current-context)

RESTORE_ARGO_CLUSTER_NAME="apps-dr-restore"

argocd cluster add $RESTORE_CLUSTER_KUBE_CONTEXT \
  --kubeconfig $HOME/.kube/config \
  --name $RESTORE_ARGO_CLUSTER_NAME \
  --yes

If things go as they should, the outcome should look like this:

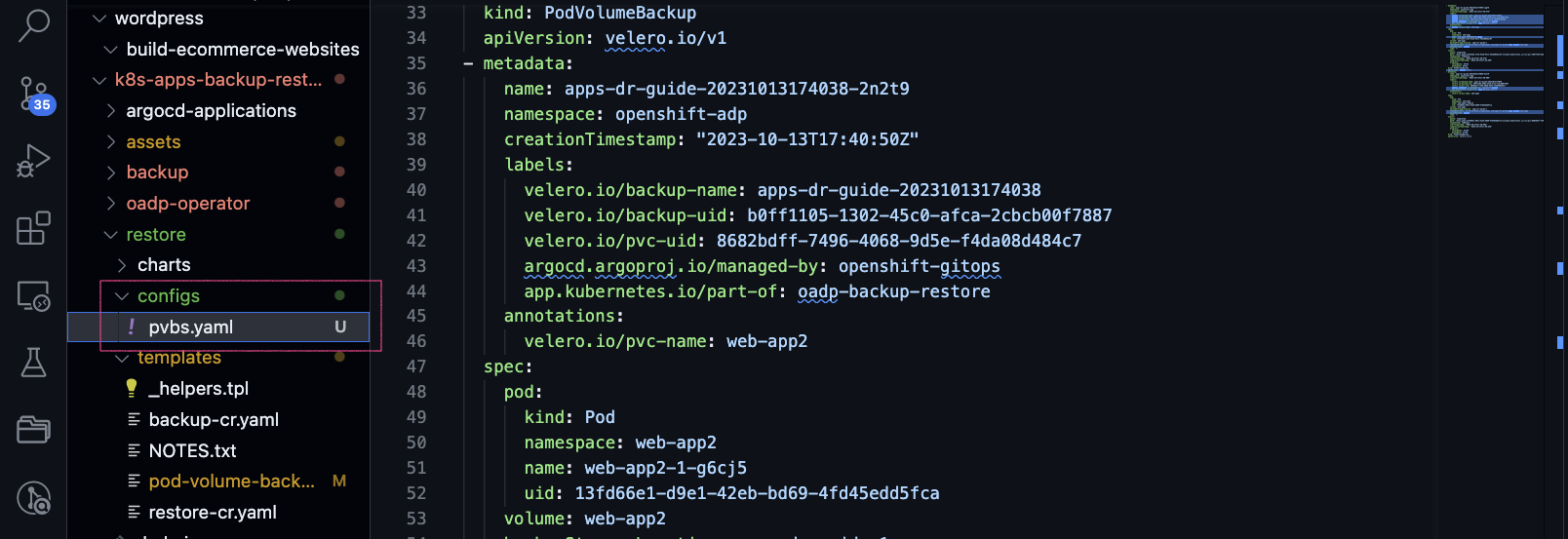

4. Prepare the PodVolumeBackup resources

Log in to the AWS CLI:

export AWS_ACCESS_KEY_ID=CHANGE_ME

export AWS_SECRET_ACCESS_KEY=CHANGE_ME

export AWS_SESSION_TOKEN=CHANGE_ME # Optional in some cases

Verify you have successfully logged in by listing objects in the S3 bucket:

# aws s3 ls s3://BUCKET_NAME

# For example

aws s3 ls s3://apps-dr-guide

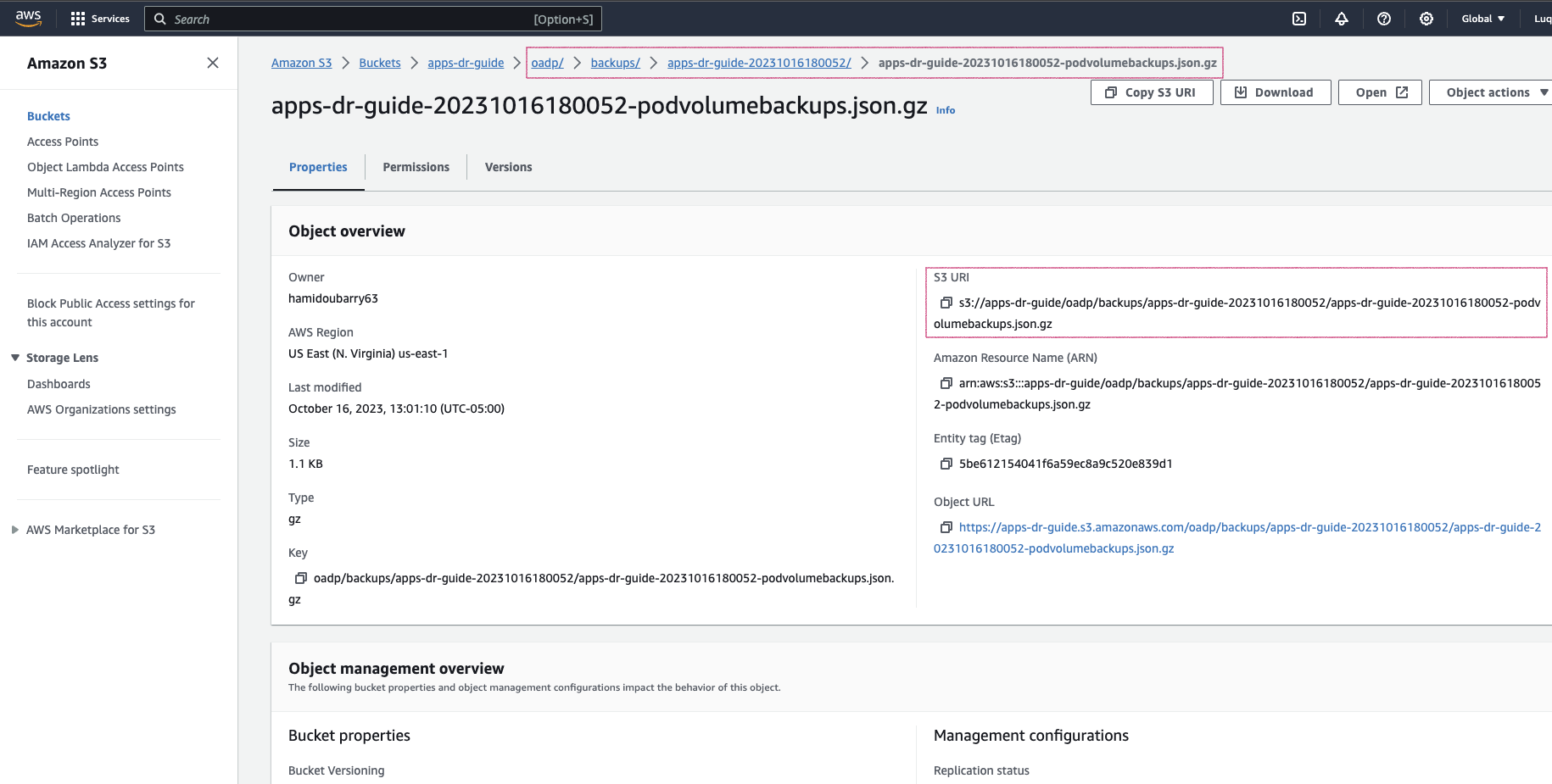

In S3, select the backup (usually the latest) you want to restore from, find the BACKUP_NAME-podvolumebackups.json.gz file, and copy its S3 URI.

In the example below, the backup name is apps-dr-guide-20231016200052.

Run the scripts/prepare-pvb.sh script; when prompted, provide the S3 URI you copied and then press Enter.

cd scripts

./prepare-pvb.sh

Once the script completes, a new directory will be added to the restore Helm chart.
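The S3 URI the script asks for can also be assembled from the command line. This is a sketch under two assumptions: that backups live under `<dirPrefix>/backups/<backup-name>/` in the bucket (Velero's usual layout), and that backup names share a prefix and end in a fixed-width timestamp, so the last name in sort order is the latest. Both function names are hypothetical.

```shell
# Sketch: locate the podvolumebackups file for a backup.
# Assumes the layout <bucket>/<prefix>/backups/<name>/<name>-podvolumebackups.json.gz

podvolumebackups_uri() {
  local bucket="$1"
  local prefix="$2"
  local backup_name="$3"
  printf 's3://%s/%s/backups/%s/%s-podvolumebackups.json.gz\n' \
    "$bucket" "$prefix" "$backup_name" "$backup_name"
}

latest_backup_from_listing() {
  # Reads "aws s3 ls" output on stdin, keeps the "PRE <name>/" rows,
  # strips the trailing slash, and prints the last name in sort order.
  awk '$1 == "PRE" { sub(/\/$/, "", $2); print $2 }' | sort | tail -n 1
}

# Example:
# name="$(aws s3 ls s3://apps-dr-guide/oadp/backups/ | latest_backup_from_listing)"
# podvolumebackups_uri apps-dr-guide oadp "$name"
```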

5. Prepare the restore Helm chart

Update the restore/values.yaml file with the backupName selected in S3.

Set isSameCluster: true if you are doing an in-place backup and restore.

isSameCluster: false # Set this flag to true if the RESTORE cluster is the same as the BACKUP cluster

global:
  operatorUpdateChannel: stable-1.2 # OADP Operator Sub Channel
  inRestoreMode: true
  resourceNamePrefix: apps-dr-guide # OADP CRs name prefix

backup:
  name: "apps-dr-guide-20231016200052" # Value comes from S3 bucket
  excludedNamespaces: [] # Leave empty unless you want to exclude certain namespaces from being restored.
  includedNamespaces: [] # Leave empty if you want all namespaces. You may provide namespaces if you want a subset of projects.

Once satisfied, commit your changes and push.

git add .

git commit -am "Initiating restore from apps-dr-guide-20231016200052"

git push

# Check that the repo is pristine

git status

6. Create the apps-dr-restore ArgoCD Application

Inspect the argocd-applications/apps-dr-restore.yaml manifest and confirm it is polling from the correct Git branch and that the destination cluster is correct.

Log on to the OCP cluster where the GitOps instance is running. In this case, it is on the recovery cluster.

OCP_CLUSTER_SA_TOKEN=CHANGE_ME

OCP_CLUSTER_API_SERVER=CHANGE_ME

oc login --token=$OCP_CLUSTER_SA_TOKEN --server=$OCP_CLUSTER_API_SERVER

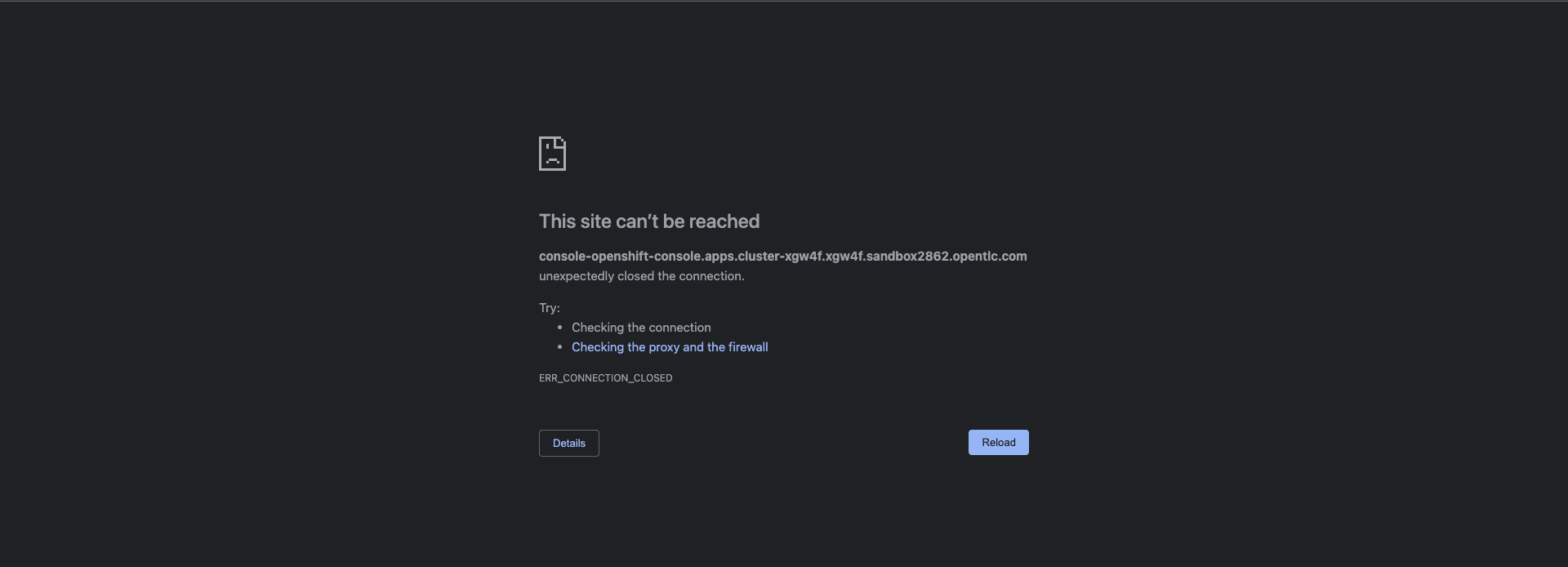

Before applying the ArgoCD Application to trigger the recovery, I will simulate a DR by shutting down the BACKUP cluster.

After reloading the page.

Apply the ArgoCD argocd-applications/apps-dr-restore.yaml manifest.

oc apply -f argocd-applications/apps-dr-restore.yaml

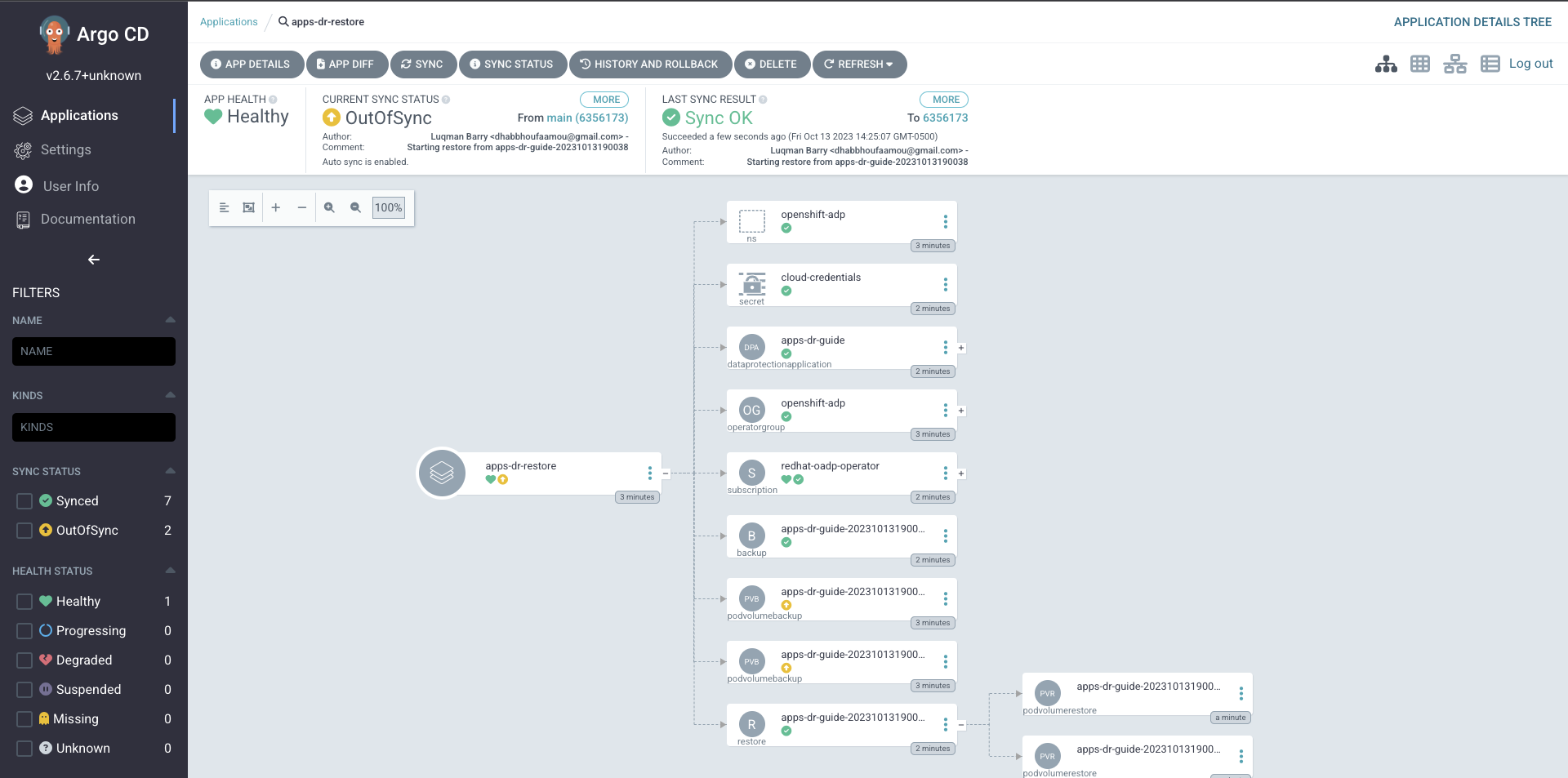

7. Verify Applications Restore - ArgoCD UI

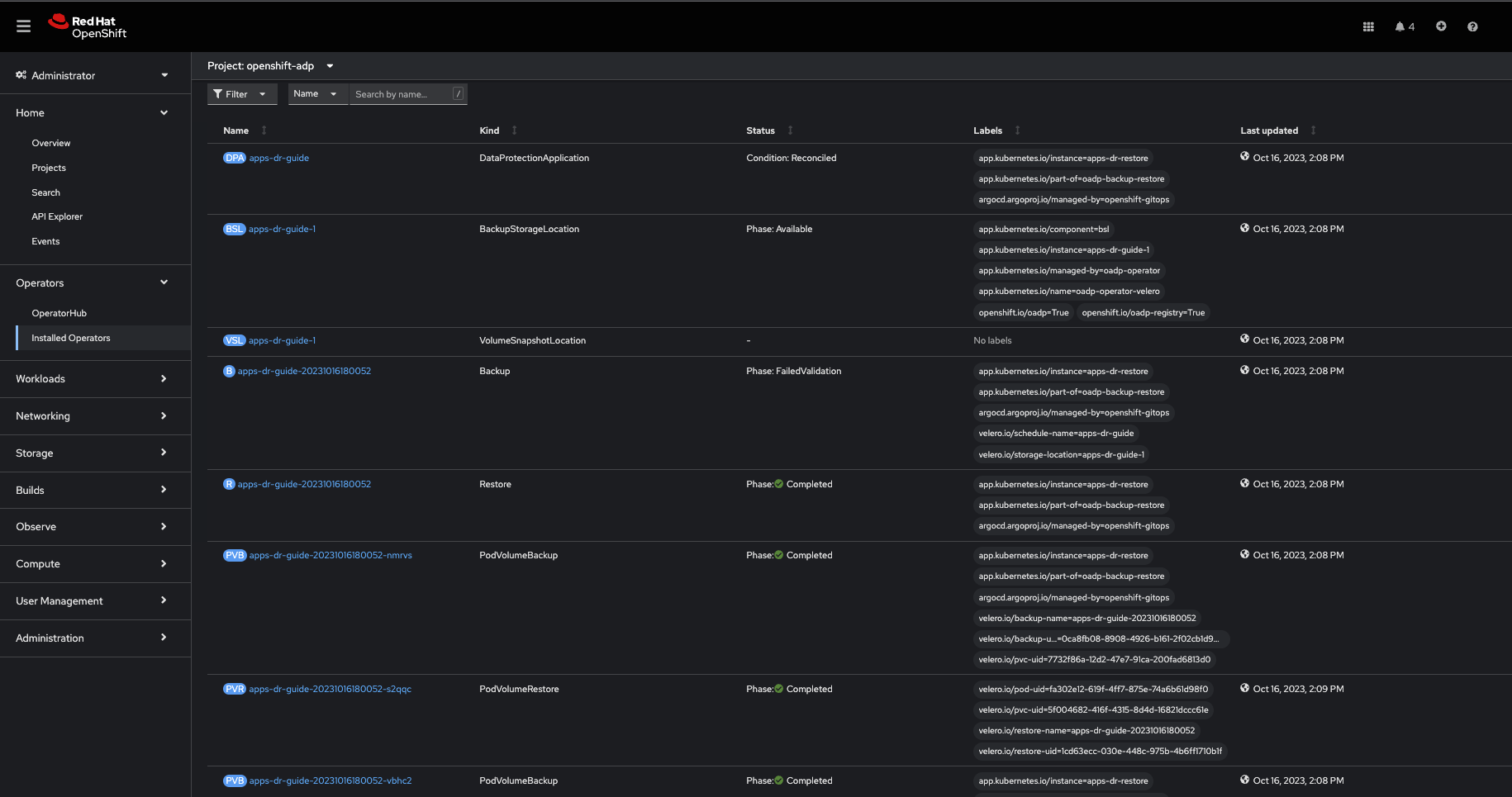

8. Verify Applications Restore - OCP Web Console

After a successful restore, resources will be saved to S3. For example, the restores directory content for backup apps-dr-guide-20231016200052 will look like the following:

Follow the Troubleshooting guide below if the OADP Operator Restore status changes to Failed or PartiallyFailed.

9. Post Restore: Cleanup orphaned resources

By default, OADP scales down DeploymentConfigs and does not clean up orphaned pods. Run the dc-restic-post-restore.sh script to do the cleanup.

Log in to the RESTORE OpenShift Cluster:

oc login --token=SA_TOKEN_VALUE --server=CONTROL_PLANE_API_SERVER

Run the cleanup script:

# REPLACE THIS VALUE

BACKUP_NAME="apps-dr-guide-20231016200052"

./scripts/dc-restic-post-restore.sh $BACKUP_NAME

10. Post Restore: Applications Validation

Test your applications to check they are working as before.

OADP restored web-app1, web-app2, and all their resources, including volume data.

As shown, the pods are running in the eu-west-1 region.

Troubleshooting Guide

Set up the Velero CLI program before starting:

alias velero='oc -n openshift-adp exec deployment/velero -c velero -it -- ./velero'

Backup in PartiallyFailed status

Log in to the BACKUP OCP cluster:

oc login --token=SA_TOKEN_VALUE --server=BACKUP_CLUSTER_CONTROL_PLANE_API_SERVER

Describe the backup:

velero backup describe BACKUP_NAME

View Backup Logs (all):

velero backup logs BACKUP_NAME

View Backup Logs (warnings):

velero backup logs BACKUP_NAME | grep 'level=warn'

View Backup Logs (errors):

velero backup logs BACKUP_NAME | grep 'level=error'

Restore in PartiallyFailed status

Log in to the RESTORE OCP cluster:

oc login --token=SA_TOKEN_VALUE --server=RESTORE_CLUSTER_CONTROL_PLANE_API_SERVER

Describe the Restore CR:

velero restore describe RESTORE_NAME

View Restore Logs (all):

velero restore logs RESTORE_NAME

View Restore Logs (warnings):

velero restore logs RESTORE_NAME | grep 'level=warn'

View Restore Logs (errors):

velero restore logs RESTORE_NAME | grep 'level=error'
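Before reading individual entries, it can help to see how many lines each level produced. The following sketch counts the `level=` values in a log stream read from stdin; the function name is hypothetical, and it assumes each log line carries a single `level=<name>` field, as in the grep filters above.

```shell
# Sketch: count log lines per level from a Velero log stream on stdin.
# The function name is illustrative.

summarize_log_levels() {
  awk '
    match($0, /level=[a-z]+/) {
      # Skip the 6 characters of "level=" to keep only the level name
      level = substr($0, RSTART + 6, RLENGTH - 6)
      count[level]++
    }
    END {
      for (level in count) printf "%s=%d\n", level, count[level]
    }
  ' | sort
}

# Example:
# velero restore logs RESTORE_NAME | summarize_log_levels
```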

Read these guides to learn more about debugging OADP/Velero:

Wrap up

This guide has demonstrated how to perform cross-cluster application backup and restore. It used OADP to back up applications running in the OpenShift v4.10 cluster deployed in us-east-2, simulated a disaster by shutting down the cluster, and restored the same apps and their state to the OpenShift v4.12 cluster running in eu-west-1.

Additional Learning Resources
